StarlingX/Docs and Infra/InstallationGuides/baremetal-Controllers-and-Computes-with-Storage-Cluster

DEPRECATED - Please do not edit.

Contents

- 1 Deployment Diagram

- 2 Hardware Requirements for Bare Metal Servers

- 3 Prepare Servers

- 4 StarlingX Kubernetes

- 4.1 Install the StarlingX Kubernetes Platform

- 4.1.1 Create a bootable USB with the StarlingX ISO

- 4.1.2 Install Software on Controller-0

- 4.1.3 Bootstrap System on Controller-0

- 4.1.4 Configure Controller-0

- 4.1.5 Unlock Controller-0

- 4.1.6 Install Software on Controller-1, Storage Nodes and Compute Nodes

- 4.1.7 Configure Controller-1

- 4.1.8 Unlock Controller-1

- 4.1.9 Configure Storage Nodes

- 4.1.10 Unlock Storage Nodes

- 4.1.11 Configure Compute Nodes

- 4.1.12 Unlock Compute Nodes

- 4.2 Access StarlingX Kubernetes

- 5 StarlingX OpenStack

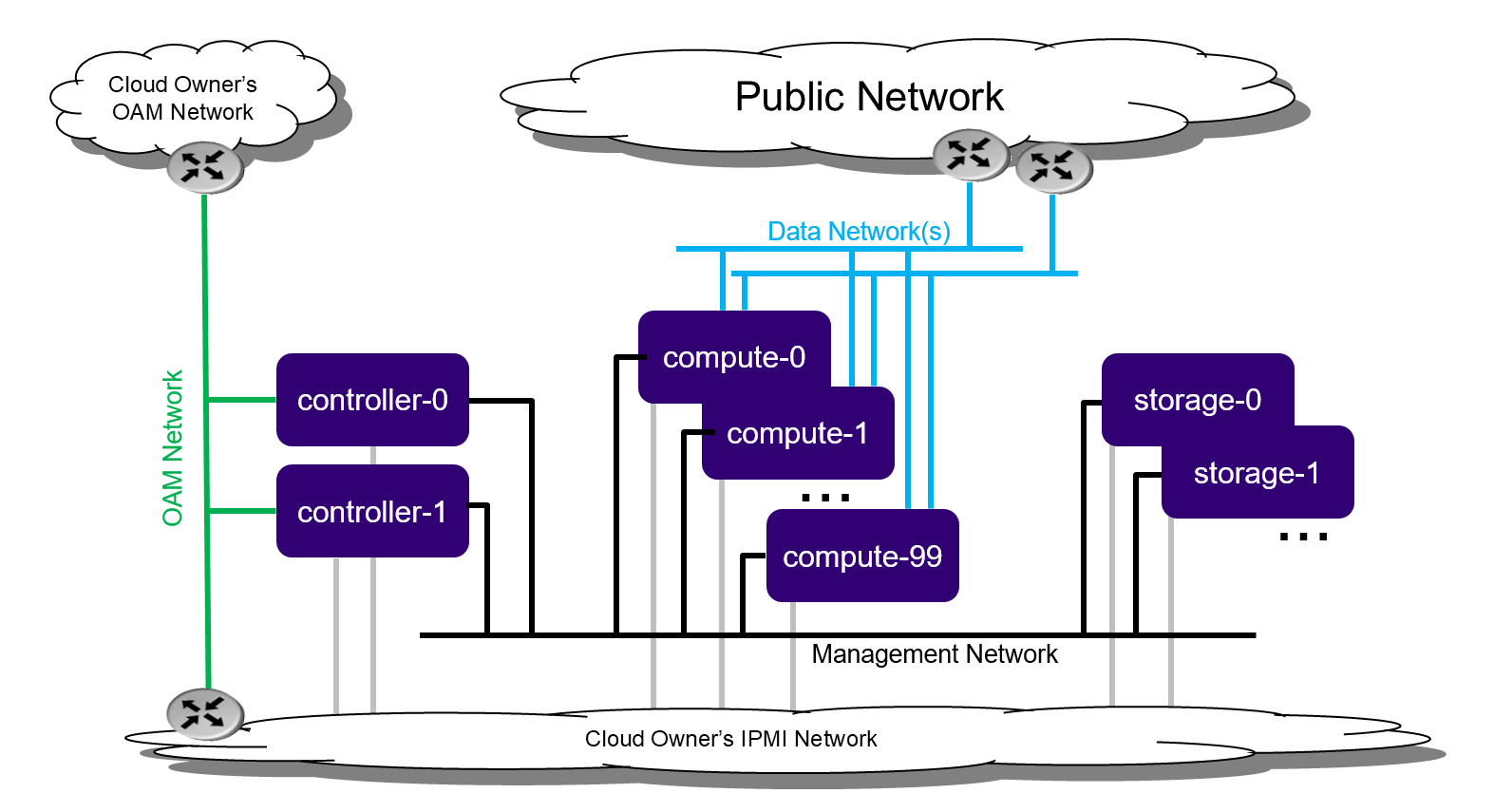

Deployment Diagram

Hardware Requirements for Bare Metal Servers

The recommended minimum requirements for the Bare Metal Servers for the various host types are:

| Minimum Requirement | Controller Node | Storage Node | Compute Node |
|---|---|---|---|
| Number of Servers | 2 | 2 - 9 | 2 - 100 |
| Minimum Processor Class | Dual-CPU Intel® Xeon® E5 26xx Family (SandyBridge), 8 cores/socket | Same as Controller Node | Same as Controller Node |
| Minimum Memory | 64 GB | 64 GB | 32 GB |
| Primary Disk | 500 GB SSD or NVMe | 120 GB (min. 10K RPM) | 120 GB (min. 10K RPM) |
| Additional Disks | None | 1 or more 500 GB (min. 10K RPM) for Ceph OSD. Recommended, but not required: 1 or more SSDs or NVMe drives for Ceph journals (min. 1024 MiB per OSD journal) | For OpenStack, recommend 1 or more 500 GB (min. 10K RPM) for VM local ephemeral storage |
| Minimum Network Ports | Mgmt/Cluster: 1x10GE; OAM: 1x1GE | Mgmt/Cluster: 1x10GE | Mgmt/Cluster: 1x10GE; Data: 1 or more x 10GE |
| BIOS Settings | Hyper-Threading technology enabled; Virtualization technology enabled; VT for directed I/O enabled; CPU power and performance policy set to performance; CPU C state control disabled; Plug & play BMC detection disabled | Same as Controller Node | Same as Controller Node |

Prepare Servers

Prior to starting the installation, the Bare Metal Servers should be:

- physically installed,

- cabled for power,

- cabled for networking,

  - with the far-end switch ports properly configured to realize the networking of the above deployment diagram,

- with all disks wiped,

  - such that servers will boot from either the network or USB storage (if present),

- and powered off.

StarlingX Kubernetes

Install the StarlingX Kubernetes Platform

Create a bootable USB with the StarlingX ISO

Get the StarlingX ISO. This can be from a private StarlingX build or, as shown below, from the public CENGN StarlingX build of the 'master' branch:

wget http://mirror.starlingx.cengn.ca/mirror/starlingx/master/centos/latest_build/outputs/iso/bootimage.iso

Create a bootable USB with the StarlingX ISO:

# Insert the USB stick

# Identify the USB device in the mounted filesystems

$ df
Filesystem             1K-blocks    Used Available Use% Mounted on
udev                    16432268       0  16432268   0% /dev
tmpfs                    3288884   26244   3262640   1% /run
/dev/mapper/md0_crypt  491076512 9641092 456420380   3% /
tmpfs                   16444408  105472  16338936   1% /dev/shm
tmpfs                       5120       4      5116   1% /run/lock
tmpfs                   16444408       0  16444408   0% /sys/fs/cgroup
/dev/sdc1              122546800  124876 116153868   1% /boot
tmpfs                    3288880      24   3288856   1% /run/user/119
tmpfs                    3288880      72   3288808   1% /run/user/1000
/dev/sdd1                1467360 1467360         0 100% /media/vivek/data

# Unmount the USB partition

$ sudo umount /media/vivek/data

# Use 'dd' to copy the StarlingX bootimage.iso to the USB device

$ sudo dd if=bootimage.iso of=/dev/sdd bs=1M status=progress
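The df output above lists the USB stick by its partition (/dev/sdd1), while dd must write to the whole disk (/dev/sdd). As a minimal sketch, assuming the common Linux device naming schemes noted in the comments, a small helper can derive the disk from the partition. The name parent_disk is hypothetical and not part of StarlingX.

```shell
# Map a partition device node to its parent disk.
# Assumed naming schemes: /dev/sdX<N>, /dev/nvme<C>n<N>p<P>, /dev/mmcblk<N>p<P>.
parent_disk() {
    case "$1" in
        /dev/nvme*p[0-9]*|/dev/mmcblk*p[0-9]*)
            # Strip the trailing "p<digits>" partition suffix.
            printf '%s\n' "$1" | sed 's/p[0-9][0-9]*$//'
            ;;
        /dev/nvme*|/dev/mmcblk*)
            # Already a whole disk; the trailing digits belong to the disk name.
            printf '%s\n' "$1"
            ;;
        *)
            # Strip trailing partition digits, e.g. /dev/sdd1 -> /dev/sdd.
            printf '%s\n' "$1" | sed 's/[0-9][0-9]*$//'
            ;;
    esac
}
```

For the example above, parent_disk /dev/sdd1 prints /dev/sdd, which is the value to pass to dd as of=. Double-check the result against df before writing, since dd overwrites the target without confirmation.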

Install Software on Controller-0

Insert the bootable USB into a bootable USB port on the host you are configuring as controller-0.

Power on the host.

Attach to a console, ensure the host boots from the USB and wait for the StarlingX Installer Menus.

Installer Menu Selections:

- First Menu

- Select 'Standard Controller Configuration'

- Second Menu

- Select 'Graphical Console' or 'Textual Console' depending on your terminal access to the console port

- Third Menu

- Select 'Standard Security Profile'

Wait for non-interactive install of software to complete and server to reboot.

This can take 5-10 mins depending on performance of server.

Bootstrap System on Controller-0

Login with username / password of sysadmin / sysadmin.

When logging in for the first time, you will be forced to change the password.

Login: sysadmin

Password:

Changing password for sysadmin.

(current) UNIX Password: sysadmin

New Password:

(repeat) New Password:

External connectivity is required to run the Ansible bootstrap playbook. The StarlingX boot image requests an address via DHCP on all interfaces, so if a DHCP server is present in your environment, the server may already have obtained an IP address and have external IP connectivity; check with 'ip addr' and 'ping 8.8.8.8'.

Otherwise, manually configure an IP Address and Default IP Route.

Use the PORT, IP-ADDRESS/SUBNET-LENGTH and GATEWAY-IP-ADDRESS applicable to your deployment environment.

sudo ip address add <IP-ADDRESS>/<SUBNET-LENGTH> dev <PORT>

sudo ip link set up dev <PORT>

sudo ip route add default via <GATEWAY-IP-ADDRESS> dev <PORT>

ping 8.8.8.8
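A mistyped IP-ADDRESS/SUBNET-LENGTH value is easy to miss before it is passed to 'ip address add'. The following is a rough sketch of a format check for IPv4 values only; valid_ipv4_cidr is a hypothetical helper name, and it checks syntax and ranges, not whether the address is right for your network.

```shell
# Return 0 if $1 looks like a valid IPv4 address with prefix length, e.g. 10.10.10.2/24.
valid_ipv4_cidr() {
    addr=${1%/*}
    len=${1#*/}
    # A missing "/" leaves addr identical to the input.
    [ "$addr" != "$1" ] || return 1
    # Prefix length must be a number between 0 and 32.
    case "$len" in ''|*[!0-9]*) return 1 ;; esac
    [ "$len" -le 32 ] || return 1
    # Address may only contain digits and single, inner dots.
    case "$addr" in ''|*[!0-9.]*|.*|*.|*..*) return 1 ;; esac
    old_ifs=$IFS
    IFS=.
    set -- $addr
    IFS=$old_ifs
    [ "$#" -eq 4 ] || return 1
    for octet in "$@"; do
        [ "$octet" -le 255 ] || return 1
    done
    return 0
}
```

A possible use is to guard the commands above, for example: valid_ipv4_cidr "10.10.10.2/24" && echo "format ok".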

Ansible is used to bootstrap StarlingX on Controller-0:

- The default Ansible inventory file, /etc/ansible/hosts, contains a single host, localhost.

- The Ansible bootstrap playbook is at /usr/share/ansible/stx-ansible/playbooks/bootstrap/bootstrap.yml .

- The default configuration values for the bootstrap playbook are in /usr/share/ansible/stx-ansible/playbooks/bootstrap/host_vars/default.yml .

- By default Ansible looks for and imports user configuration override files for hosts in the sysadmin home directory ($HOME), e.g. $HOME/<hostname>.yml .

Specify the user configuration override file for the Ansible bootstrap playbook by copying the above default.yml file to $HOME/localhost.yml and editing the configurable values as desired, based on the commented instructions in the file.

or

Simply create the minimal user configuration override file as shown below, using the OAM IP subnet and IP addresses applicable to your deployment environment.

cd ~

cat <<EOF > localhost.yml
system_mode: standard
dns_servers:
  - 8.8.8.8
  - 8.8.4.4
external_oam_subnet: <OAM-IP-SUBNET>/<OAM-IP-SUBNET-LENGTH>
external_oam_gateway_address: <OAM-GATEWAY-IP-ADDRESS>
external_oam_floating_address: <OAM-FLOATING-IP-ADDRESS>
external_oam_node_0_address: <OAM-CONTROLLER-0-IP-ADDRESS>
external_oam_node_1_address: <OAM-CONTROLLER-1-IP-ADDRESS>
admin_username: admin
admin_password: <sysadmin-password>
ansible_become_pass: <sysadmin-password>
EOF
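Because the override file is written with placeholder values such as <OAM-GATEWAY-IP-ADDRESS>, it can help to confirm that none were left unedited before running the playbook. A minimal sketch, assuming placeholders always take the form of a name in angle brackets; has_placeholders is a hypothetical helper, not part of the StarlingX tooling.

```shell
# Return 0 (true) if the given file still contains <PLACEHOLDER>-style tokens.
has_placeholders() {
    grep -q '<[A-Za-z0-9_-][A-Za-z0-9_-]*>' "$1"
}
```

For example: has_placeholders ~/localhost.yml && echo "localhost.yml still contains placeholder values".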

Run the Ansible bootstrap playbook:

ansible-playbook /usr/share/ansible/stx-ansible/playbooks/bootstrap/bootstrap.yml

Wait for Ansible bootstrap playbook to complete.

This can take 5-10 mins depending on performance of HOST machine.

Configure Controller-0

Acquire admin credentials:

source /etc/platform/openrc

Configure the OAM and MGMT interfaces of controller-0 and specify the attached networks:

(Use the OAM and MGMT port names, e.g. eth0, applicable to your deployment environment.)

OAM_IF=<OAM-PORT>

MGMT_IF=<MGMT-PORT>

system host-if-modify controller-0 lo -c none

IFNET_UUIDS=$(system interface-network-list controller-0 | awk '{if ($6=="lo") print $4;}')

for UUID in $IFNET_UUIDS; do

system interface-network-remove ${UUID}

done

system host-if-modify controller-0 $OAM_IF -c platform

system interface-network-assign controller-0 $OAM_IF oam

system host-if-modify controller-0 $MGMT_IF -c platform

system interface-network-assign controller-0 $MGMT_IF mgmt

system interface-network-assign controller-0 $MGMT_IF cluster-host
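The awk filter in the commands above relies on whitespace splitting: each "|" table border counts as a field, so in a data row $4 is the second column and $6 is the third. The sketch below isolates that filter so it can be tried on saved output. The function name ifnet_uuids_for is hypothetical, and the column layout (hostname, uuid, ifname, network_name) is an assumption inferred from the field positions used above.

```shell
# Print the UUID column ($4) of every row whose interface-name column ($6)
# equals the name given as $1. The table is read from standard input.
ifnet_uuids_for() {
    awk -v ifname="$1" '$6 == ifname { print $4 }'
}
```

With this helper, the IFNET_UUIDS assignment could be written as: system interface-network-list controller-0 | ifnet_uuids_for lo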

Configure NTP Servers for network time synchronization:

system ntp-modify ntpservers=0.pool.ntp.org,1.pool.ntp.org

OpenStack-specific Host Configuration

The following configuration is only required if the OpenStack application (stx-openstack) will be installed.

For OpenStack ONLY, assign OpenStack host labels to controller-0 in support of installing the stx-openstack manifest/helm-charts later.

system host-label-assign controller-0 openstack-control-plane=enabled

For OpenStack ONLY, configure the system setting for the vSwitch.

StarlingX has OVS (kernel-based) vSwitch configured as default:

- Running in a container; defined within the helm charts of stx-openstack manifest.

- Shares the core(s) assigned to the Platform.

If you require better performance, OVS-DPDK should be used:

- Running directly on the host (i.e. NOT containerized).

- Requires that at least 1 core be assigned/dedicated to the vSwitch function.

To deploy the default containerized OVS:

system modify --vswitch_type none

I.e. do not run any vSwitch directly on the host, and use the containerized OVS defined in the helm charts of stx-openstack manifest.

To deploy OVS-DPDK (OVS with the Data Plane Development Kit, which is supported only on bare metal hardware), run the following commands:

system modify --vswitch_type ovs-dpdk

system host-cpu-modify -f vswitch -p0 1 controller-0

Once vswitch_type is set to OVS-DPDK, any subsequent nodes created will default to automatically assigning 1 vSwitch core for AIO Controllers and 2 vSwitch cores for Computes.

When using OVS-DPDK, Virtual Machines must be configured to use a flavor with property: hw:mem_page_size=large.

After controller-0 is unlocked, changing vswitch_type would require locking and unlocking all computes (and/or AIO Controllers) in order to apply the change.

Unlock Controller-0

Unlock controller-0 in order to bring it into service:

system host-unlock controller-0

Controller-0 will reboot in order to apply configuration change and come into service.

This can take 5-10 mins depending on performance of HOST machine.

Install Software on Controller-1, Storage Nodes and Compute Nodes

Power on the controller-1 server and force it to network boot with the appropriate BIOS Boot Options for your particular server.

As controller-1 boots, a message appears on its console instructing you to configure the personality of the node.

On the console of controller-0, list hosts to see the newly discovered controller-1 host, i.e. the host with a hostname of None:

system host-list
+----+--------------+-------------+----------------+-------------+--------------+
| id | hostname     | personality | administrative | operational | availability |
+----+--------------+-------------+----------------+-------------+--------------+
| 1  | controller-0 | controller  | unlocked       | enabled     | available    |
| 2  | None         | None        | locked         | disabled    | offline      |
+----+--------------+-------------+----------------+-------------+--------------+

Using the host id, set the personality of this host to 'controller':

system host-update 2 personality=controller
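The host id used in 'system host-update' is read from the row whose hostname is None. As a small sketch, that lookup can be done with awk on the 'system host-list' output shown above; undiscovered_host_ids is a hypothetical helper name.

```shell
# Print the id ($2) of every host row whose hostname column ($4) is "None".
# Table border lines start with "+", so only rows beginning with "|" are considered.
undiscovered_host_ids() {
    awk '$1 == "|" && $4 == "None" { print $2 }'
}
```

For example: system host-list | undiscovered_host_ids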

This initiates the install of software on controller-1.

This can take 5-10 mins depending on performance of HOST machine.

While waiting on this, repeat the same procedure for the storage-0 and storage-1 servers, except for setting the personality to 'storage' and assigning a unique hostname, e.g.:

system host-update 3 personality=storage hostname=storage-0

system host-update 4 personality=storage hostname=storage-1

This initiates the install of software on storage-0 and storage-1.

This can take 5-10 mins depending on performance of HOST machine.

While waiting on this, repeat the same procedure for compute-0 server and compute-1 server, except for setting the personality to 'worker' and assigning a unique hostname, e.g.:

system host-update 5 personality=worker hostname=compute-0

system host-update 6 personality=worker hostname=compute-1

This initiates the install of software on compute-0 and compute-1.

Wait for the install of software on controller-1, storage-0, storage-1, compute-0 and compute-1 to all complete, all servers to reboot and to all show as locked/disabled/online in 'system host-list'.

system host-list
+----+--------------+-------------+----------------+-------------+--------------+
| id | hostname     | personality | administrative | operational | availability |
+----+--------------+-------------+----------------+-------------+--------------+
| 1  | controller-0 | controller  | unlocked       | enabled     | available    |
| 2  | controller-1 | controller  | locked         | disabled    | online       |
| 3  | storage-0    | storage     | locked         | disabled    | online       |
| 4  | storage-1    | storage     | locked         | disabled    | online       |
| 5  | compute-0    | worker      | locked         | disabled    | online       |
| 6  | compute-1    | worker      | locked         | disabled    | online       |
+----+--------------+-------------+----------------+-------------+--------------+
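Checking by eye that every newly installed host has reached locked/disabled/online becomes tedious as the number of nodes grows. A minimal sketch of the same check with awk, based on the 'system host-list' column layout shown above; hosts_not_ready is a hypothetical helper name, and controller-0 is skipped because it is already unlocked.

```shell
# Print the hostname of every host (other than controller-0) that is not yet
# in the locked/disabled/online state. Reads 'system host-list' output on stdin.
hosts_not_ready() {
    awk '$1 == "|" && $2 ~ /^[0-9]+$/ && $4 != "controller-0" &&
         !($8 == "locked" && $10 == "disabled" && $12 == "online") { print $4 }'
}
```

An empty result from: system host-list | hosts_not_ready indicates that all listed hosts are ready to be configured.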

Configure Controller-1

Configure the OAM and MGMT interfaces of controller-1 and specify the attached networks:

(Note that the MGMT interface is partially setup automatically by the network install procedure.)

(Use the OAM and MGMT port names, e.g. eth0, applicable to your deployment environment.)

OAM_IF=<OAM-PORT>

MGMT_IF=<MGMT-PORT>

system host-if-modify controller-1 $OAM_IF -c platform

system interface-network-assign controller-1 $OAM_IF oam

system interface-network-assign controller-1 $MGMT_IF cluster-host

OpenStack-specific Host Configuration

The following configuration is only required if the OpenStack application (stx-openstack) will be installed.

For OpenStack ONLY, assign OpenStack host labels to controller-1 in support of installing the stx-openstack manifest/helm-charts later.

system host-label-assign controller-1 openstack-control-plane=enabled

Unlock Controller-1

Unlock controller-1 in order to bring it into service:

system host-unlock controller-1

Controller-1 will reboot in order to apply configuration change and come into service.

This can take 5-10 mins depending on performance of HOST machine.

Configure Storage Nodes

Assign the cluster-host network to the MGMT interface for the storage nodes:

(Note that the MGMT interfaces are partially setup automatically by the network install procedure.)

for STORAGE in storage-0 storage-1; do

system interface-network-assign $STORAGE mgmt0 cluster-host

done

Add OSDs to storage-0:

HOST=storage-0

DISKS=$(system host-disk-list ${HOST})

TIERS=$(system storage-tier-list ceph_cluster)

OSDs="/dev/sdb"

for OSD in $OSDs; do

system host-stor-add ${HOST} $(echo "$DISKS" | grep "$OSD" | awk '{print $2}') --tier-uuid $(echo "$TIERS" | grep storage | awk '{print $2}')

while true; do system host-stor-list ${HOST} | grep ${OSD} | grep configuring; if [ $? -ne 0 ]; then break; fi; sleep 1; done

done

system host-stor-list $HOST

Add OSDs to storage-1:

HOST=storage-1

DISKS=$(system host-disk-list ${HOST})

TIERS=$(system storage-tier-list ceph_cluster)

OSDs="/dev/sdb"

for OSD in $OSDs; do

system host-stor-add ${HOST} $(echo "$DISKS" | grep "$OSD" | awk '{print $2}') --tier-uuid $(echo "$TIERS" | grep storage | awk '{print $2}')

while true; do system host-stor-list ${HOST} | grep ${OSD} | grep configuring; if [ $? -ne 0 ]; then break; fi; sleep 1; done

done

system host-stor-list $HOST
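The 'while true' loops above poll until the OSD leaves the configuring state and never give up if something goes wrong. As a hedged alternative, the sketch below adds a timeout to the same polling pattern; wait_for is a hypothetical helper name and the timeout value is for you to choose.

```shell
# Run a command once per second until it succeeds or the timeout expires.
# Usage: wait_for <timeout-seconds> <command> [args...]
wait_for() {
    timeout=$1
    shift
    elapsed=0
    until "$@"; do
        if [ "$elapsed" -ge "$timeout" ]; then
            echo "Timed out after ${timeout}s waiting for: $*" >&2
            return 1
        fi
        sleep 1
        elapsed=$((elapsed + 1))
    done
    return 0
}
```

For example, the wait for storage-0 could be written as: wait_for 300 sh -c '! system host-stor-list storage-0 | grep /dev/sdb | grep -q configuring'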

Unlock Storage Nodes

Unlock storage nodes in order to bring them into service:

for STORAGE in storage-0 storage-1; do

system host-unlock $STORAGE

done

The storage nodes will reboot in order to apply configuration change and come into service.

This can take 5-10 mins depending on performance of HOST machine.

Configure Compute Nodes

Assign the cluster-host network to the MGMT interface for the compute nodes:

(Note that the MGMT interfaces are partially setup automatically by the network install procedure.)

for COMPUTE in compute-0 compute-1; do

system interface-network-assign $COMPUTE mgmt0 cluster-host

done

OPTIONALLY for Kubernetes (i.e. if planning on using SR-IOV network attachments in application containers), or REQUIRED for OpenStack, configure data interfaces for the compute nodes:

(Use the DATA port names, e.g. eth0, applicable to your deployment environment.)

# For Kubernetes SRIOV network attachments

# configure SRIOV device plugin

for COMPUTE in compute-0 compute-1; do

system host-label-assign ${COMPUTE} sriovdp=enabled

done

# If planning on running DPDK in containers on these hosts, configure the number of 1G huge pages required on both NUMA nodes

for COMPUTE in compute-0 compute-1; do

system host-memory-modify ${COMPUTE} 0 -1G 100

system host-memory-modify ${COMPUTE} 1 -1G 100

done

# For both Kubernetes and OpenStack

DATA0IF=<DATA-0-PORT>

DATA1IF=<DATA-1-PORT>

PHYSNET0='physnet0'

PHYSNET1='physnet1'

SPL=/tmp/tmp-system-port-list

SPIL=/tmp/tmp-system-host-if-list

# configure the datanetworks in sysinv, prior to referencing them

# in the ``system host-if-modify`` command.

system datanetwork-add ${PHYSNET0} vlan

system datanetwork-add ${PHYSNET1} vlan

for COMPUTE in compute-0 compute-1; do

echo "Configuring interface for: $COMPUTE"

set -ex

system host-port-list ${COMPUTE} --nowrap > ${SPL}

system host-if-list -a ${COMPUTE} --nowrap > ${SPIL}

DATA0PCIADDR=$(cat $SPL | grep $DATA0IF |awk '{print $8}')

DATA1PCIADDR=$(cat $SPL | grep $DATA1IF |awk '{print $8}')

DATA0PORTUUID=$(cat $SPL | grep ${DATA0PCIADDR} | awk '{print $2}')

DATA1PORTUUID=$(cat $SPL | grep ${DATA1PCIADDR} | awk '{print $2}')

DATA0PORTNAME=$(cat $SPL | grep ${DATA0PCIADDR} | awk '{print $4}')

DATA1PORTNAME=$(cat $SPL | grep ${DATA1PCIADDR} | awk '{print $4}')

DATA0IFUUID=$(cat $SPIL | awk -v DATA0PORTNAME=$DATA0PORTNAME '($12 ~ DATA0PORTNAME) {print $2}')

DATA1IFUUID=$(cat $SPIL | awk -v DATA1PORTNAME=$DATA1PORTNAME '($12 ~ DATA1PORTNAME) {print $2}')

system host-if-modify -m 1500 -n data0 -c data ${COMPUTE} ${DATA0IFUUID}

system host-if-modify -m 1500 -n data1 -c data ${COMPUTE} ${DATA1IFUUID}

system interface-datanetwork-assign ${COMPUTE} ${DATA0IFUUID} ${PHYSNET0}

system interface-datanetwork-assign ${COMPUTE} ${DATA1IFUUID} ${PHYSNET1}

set +ex

done
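The loop above looks up each data port with grep, which matches substrings: a port named eth1 would also match a row for eth10. A rough sketch of an exact-match lookup with awk follows. The helper name port_field is hypothetical, and the column positions (uuid in $2, name in $4, PCI address in $8) are taken from the awk expressions used above rather than from a documented output format.

```shell
# Print one column of the 'system host-port-list --nowrap' row whose port name
# exactly matches $1. $2 selects the column: uuid, name or pci.
port_field() {
    awk -v port="$1" -v want="$2" '
        $1 == "|" && $4 == port {
            if (want == "uuid") print $2
            else if (want == "name") print $4
            else if (want == "pci") print $8
        }'
}
```

For example: DATA0PCIADDR=$(port_field "$DATA0IF" pci < "$SPL")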

OpenStack-specific Host Configuration

The following configuration is only required if the OpenStack application (stx-openstack) will be installed.

For OpenStack ONLY, assign OpenStack host labels to the compute nodes in support of installing the stx-openstack manifest/helm-charts later.

for NODE in compute-0 compute-1; do

system host-label-assign $NODE openstack-compute-node=enabled

system host-label-assign $NODE openvswitch=enabled

system host-label-assign $NODE sriov=enabled

done

For OpenStack ONLY, set up a disk partition for the nova-local volume group, which is needed for stx-openstack nova ephemeral disks.

for COMPUTE in compute-0 compute-1; do

echo "Configuring Nova local for: $COMPUTE"

ROOT_DISK=$(system host-show ${COMPUTE} | grep rootfs | awk '{print $4}')

ROOT_DISK_UUID=$(system host-disk-list ${COMPUTE} --nowrap | grep ${ROOT_DISK} | awk '{print $2}')

PARTITION_SIZE=10

NOVA_PARTITION=$(system host-disk-partition-add -t lvm_phys_vol ${COMPUTE} ${ROOT_DISK_UUID} ${PARTITION_SIZE})

NOVA_PARTITION_UUID=$(echo ${NOVA_PARTITION} | grep -ow "| uuid | [a-z0-9\-]* |" | awk '{print $4}')

system host-lvg-add ${COMPUTE} nova-local

system host-pv-add ${COMPUTE} nova-local ${NOVA_PARTITION_UUID}

# Wait inside the same loop, so that each host is checked against its own partition UUID

echo ">>> Wait for partition $NOVA_PARTITION_UUID to be ready."

while true; do system host-disk-partition-list $COMPUTE --nowrap | grep $NOVA_PARTITION_UUID | grep Ready; if [ $? -eq 0 ]; then break; fi; sleep 1; done

done

Unlock Compute Nodes

Unlock compute nodes in order to bring them into service:

for COMPUTE in compute-0 compute-1; do

system host-unlock $COMPUTE

done

The computes will reboot in order to apply configuration change and come into service.

This can take 5-10 mins depending on performance of HOST machine.

Your Kubernetes Cluster is up and running.

Access StarlingX Kubernetes

Use Local/Remote CLIs, GUIs and/or REST APIs to access and manage StarlingX Kubernetes and hosted containerized applications. See details here.

StarlingX OpenStack

Install StarlingX OpenStack

Other than the OpenStack-specific configurations required in the underlying StarlingX/Kubernetes infrastructure done in the above installation steps for the StarlingX Kubernetes Platform, the installation of containerized OpenStack is independent of deployment configuration and can be found here.

Access StarlingX OpenStack

Use Local/Remote CLIs, GUIs and/or REST APIs to access and manage StarlingX OpenStack and hosted virtualized applications. See details on accessing StarlingX OpenStack here.