Trove-Guest-Agent-Upgrades

Introduction

This article describes the design for Guest Agent upgrades in Trove. Currently, Guest Agent upgrades are implemented through external deployment tools that push new code to each guest instance. Usually the same deployment tools that upgrade the control plane also handle Guest Agent upgrades. This can create a bottleneck in the deployment infrastructure.

Blueprint

https://blueprints.launchpad.net/trove/+spec/upgrade-guestagent

Future Follow up Blueprints

- Version the RPC API and tie it to the API version (see nova for examples)

- This helps prevent backward-incompatible changes between the Trove API and the guest, but is not necessarily a dependency for upgrades

- Automatic guestagent upgrades

- This is a logical progression of the Trove Guest Agent Upgrade. Guests will be able to check for an update on their own (without an admin notification). See discussion below.

- Events Table

- Create an events history table that could potentially be used by multiple features in Trove for troubleshooting/auditing

Goals

- Implement a notification based upgrade path for guest agent

- Allow for different upgrade strategies (swift, jenkins, local disk, rsync, etc.)

- Avoid upgrading during times when guest agents are doing other work (e.g. backups, resize, restart)

- This doesn't seem to be a concern given that the agent can only handle a single message at a time

- Reduce overall downtime during upgrade cycles

Description

Configuration

New properties will be added to the trove configs to allow:

- Specifying an upgrade strategy

guest_upgrade_strategy: swift (trove.guestagent.strategies.upgrade.Swift)
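A minimal sketch of how a configured strategy value could be resolved to a class, assuming the option holds a dotted class path like the one shown above. `load_strategy` is a hypothetical helper name, not an existing Trove function, and the usage line loads a standard-library class only so the sketch runs without Trove installed.

```python
import importlib


def load_strategy(dotted_path):
    """Resolve a dotted 'package.module.ClassName' path to the class object.

    Hypothetical helper: Trove's actual loading mechanism may differ.
    """
    module_name, _, class_name = dotted_path.rpartition(".")
    if not module_name:
        raise ValueError("expected 'module.ClassName', got %r" % dotted_path)
    module = importlib.import_module(module_name)
    try:
        return getattr(module, class_name)
    except AttributeError:
        raise ImportError("%s has no attribute %s" % (module_name, class_name))


# In Trove the argument would be the configured value, for example
# "trove.guestagent.strategies.upgrade.Swift". A standard-library class is
# used here so the example runs anywhere.
strategy_cls = load_strategy("collections.OrderedDict")
```

Resolving the class by path is what would let providers plug in their own strategies without changes to the agent code.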

Affected Trove Components

- python-troveclient (optional)

- trove admin API

- guest agent

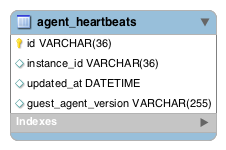

Schema Changes

Workflow

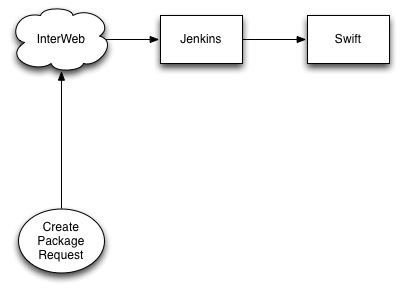

1. An external process (outside of Trove) will create an upgrade package or artifact

- This process will be mapped to a defined strategy in the Guest Agent.

- It's possible the admin may want to trigger an automatic backup at this point

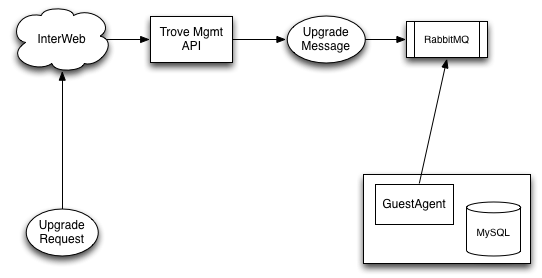

2. An Admin user will notify a Guest Agent that an upgrade is available through the Trove Management API

3. A new upgrade record will be created in the DB for the request.

4. The Guest Agent will process the RPC message created by the API call and handle the upgrade accordingly

5. The Guest Agent will download the package from the location specified in the RPC message

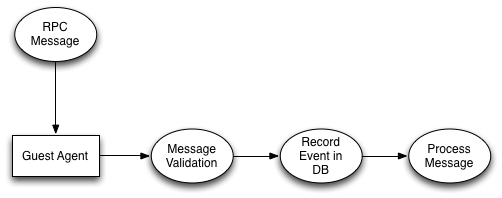

Guest Agent Message Handling

1. Guest agent will handle the message for upgrading to a particular version

2. Simple validation on the message will occur after the message is parsed

a. Check the strategy type, check to see if guest_automatic_updates is enabled

b. Check the location of the package in the message and whether it exists

3. Update the upgrade 'event' in the Trove upgrades database table

4. Execute or process the message
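The simple validation in step 2 could look roughly like the sketch below. The field names come from the example payloads on this page; the function name, the `known_strategies` parameter, and the URL-scheme check are assumptions made for illustration. The `guest_automatic_updates` check and the check that the package actually exists at the location are left out because they depend on configuration and network access.

```python
REQUIRED_KEYS = ("instance_id", "instance_version", "strategy", "location")


def validate_upgrade_message(message, known_strategies):
    """Return a list of problems found in an upgrade message (empty if valid)."""
    problems = []
    for key in REQUIRED_KEYS:
        if not message.get(key):
            problems.append("missing field: %s" % key)
    strategy = message.get("strategy")
    if strategy and strategy not in known_strategies:
        problems.append("unknown strategy: %s" % strategy)
    location = message.get("location", "")
    # Assumption: only HTTP(S) locations are accepted in this sketch.
    if location and not location.startswith(("http://", "https://")):
        problems.append("unsupported location: %s" % location)
    return problems


message = {
    "instance_id": "27e25b73-88a1-4526-b2b9-919a28b8b33f",
    "instance_version": "v1.0.1",
    "strategy": "pip",
    "location": "http://swift/tenant/container/trove-guestagent-v1.0.1.tar.gz",
}
problems = validate_upgrade_message(message, known_strategies={"pip", "swift"})
```

Returning a list of problems rather than raising on the first one would let the agent record every validation failure in the upgrade record at once.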

Guest Agent Process Upgrade Message

1. Choose the correct strategy to process the upgrade

2. Download the file from the given location (retry n-times before Failing)

3. Decrypt the package

4. Validate the package

a. Check size, version, checksum, format, etc.

5. Install the package (pip install)

6. Restart (retry n-times before Failing)

- On start up, update the status of the upgrade to SUCCESS

- If start up fails, try to install the last known working version and record the status as FAILED

The last known working version could be tracked as a config value that gets updated or as a file that is written to disk on the instance.
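The "retry n-times" and "checksum" steps above can be sketched as two small helpers. This is an illustration only: the helper names are hypothetical, SHA-256 is an assumed choice of checksum (the design does not name one), and the download is simulated by a function that fails twice before succeeding.

```python
import hashlib


def retry(operation, attempts):
    """Call operation() up to `attempts` times and re-raise the last error."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    last_error = None
    for _ in range(attempts):
        try:
            return operation()
        except Exception as exc:  # a real strategy would catch narrower errors
            last_error = exc
    raise last_error


def checksum_matches(payload, expected_sha256):
    """Compare the SHA-256 hex digest of the package bytes with the expected one."""
    return hashlib.sha256(payload).hexdigest() == expected_sha256


calls = {"count": 0}


def flaky_download():
    # Stand-in for a real download: fails twice, then returns package bytes.
    calls["count"] += 1
    if calls["count"] < 3:
        raise IOError("temporary failure")
    return b"package-bytes"


payload = retry(flaky_download, attempts=5)
valid = checksum_matches(payload, hashlib.sha256(b"package-bytes").hexdigest())
```

If every attempt fails, `retry` re-raises the last error, which is the point where the agent would record the upgrade as FAILED.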

Trove Management REST API

Create a notification request to upgrade a trove guest agent

Relative URL: /v1.0/{admin_tenant_id}/mgmt/upgrade/

HTTP Method: POST

HTTP Headers:

Accept: application/json

Content-Type: application/json

User-Agent: python-troveclient

X-Auth-Project-Id: tenant_name

X-Auth-Token: HPAuth10_xxxx

HTTP Post Body

{

"instance_id": "27e25b73-88a1-4526-b2b9-919a28b8b33f",

"instance_version": "v1.0.1",

"strategy": "pip",

"location": "http://swift/tenant/container/trove-guestagent-v1.0.1.tar.gz"

...

# optional

"metadata": {...}

...

}

HTTP Response

HTTP/1.1 202 Accepted

Content-Length: 0

Content-Type: application/json

Date: Tue, 18 Mar 2014 23:37:05 GMT

- Note: A call will be needed to return a list of upgrades filtered by state and/or instance_id
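As an illustration of the request described above, the sketch below builds (but does not send) the POST using only the Python standard library. The endpoint, tenant ID, and token values are placeholders, and `build_upgrade_request` is a hypothetical helper rather than part of python-troveclient.

```python
import json
import urllib.request


def build_upgrade_request(base_url, admin_tenant_id, token, body):
    """Build (but do not send) the POST request for an upgrade notification."""
    url = "%s/v1.0/%s/mgmt/upgrade/" % (base_url.rstrip("/"), admin_tenant_id)
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Accept": "application/json",
            "Content-Type": "application/json",
            "X-Auth-Token": token,
        },
        method="POST",
    )


request = build_upgrade_request(
    "http://trove.example.com:8779",  # hypothetical endpoint
    "admin-tenant",                   # hypothetical tenant ID
    "token-placeholder",              # hypothetical auth token
    {
        "instance_id": "27e25b73-88a1-4526-b2b9-919a28b8b33f",
        "instance_version": "v1.0.1",
        "strategy": "pip",
        "location": "http://swift/tenant/container/trove-guestagent-v1.0.1.tar.gz",
    },
)
```

Passing the result to `urllib.request.urlopen` would send it; per the response shown above, a 202 Accepted with an empty body would indicate the notification was queued.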

Trove RPC API

unpacked context

{

...

"is_admin": True,

"tenant": "<SANITIZED>",

"method": "upgrade",

"instance_id": "27e25b73-88a1-4526-b2b9-919a28b8b33f",

"instance_version": "v1.0.1",

"strategy": "pip",

"location": "http://swift/tenant/container/trove-guestagent-v1.0.1.tar.gz"

...

# optional

"metadata": {...}

...

}

Versioning and Package Validation

- The Guest Agent will be responsible for validating the package before upgrading

Scenarios

- What happens when the Guest Agent is in a non-upgradeable state? (backup/restore, resize, restart, error)

- The message should remain in the queue until the next time the Guest Agent checks and its state is 'Running'

- What happens when an upgrade fails, and how does that feed back to Trove?

- Record it as FAILED in the Trove database; the Admin will have to query for it.

- Can we roll back or install a previous version?
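The first scenario (leaving the message queued until the agent is 'Running') can be sketched with an in-memory queue. This is only an illustration of the deferral idea: a real agent would rely on the message broker rather than a `deque`, and the function name and the 'Backup' state label are assumptions.

```python
from collections import deque


def process_pending(pending, agent_state, handler):
    """Handle queued upgrade messages only while the agent is 'Running'.

    `agent_state` is a callable returning the current state. Returns the
    number of messages handled; anything not handled stays in the queue.
    """
    handled = 0
    while pending and agent_state() == "Running":
        handler(pending.popleft())
        handled += 1
    return handled


pending = deque([{"instance_version": "v1.0.1"}])
done = []
process_pending(pending, lambda: "Backup", done.append)   # deferred, still queued
handled_while_busy = len(done)
process_pending(pending, lambda: "Running", done.append)  # handled now
```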

Feedback/Discussion

Trove Meeting Discussion

trove.2014-03-12-17.59.log.html

Configs

- amcrn (talk) 22:56, 4 March 2014 (UTC): "New properties will be added to the trove configs to allow Enabling/Disabling Guest Agent Upgrades and Specifying an upgrade strategy". Is it necessary to add a CONF switch for either of these? If the cloud admin doesn't want to initiate a guestagent upgrade via API/RPC, then as long as they don't issue the request, all is well. Or are you suggesting that users can initiate their own upgrades (i.e. this operation isn't limited to the admin role)? As for the upgrade strategy (e.g. "swift"), this is already in the message payload in your examples, so why the need for the CONF? Is the idea to mimic the 'datastore_registry_ext' concept to allow providers to write and add their own strategies?

- esp (talk) 23:06, 4 March 2014 (UTC): We could leave out the CONF flag for disable/enable upgrades. I'm a little hesitant about removing the strategy key/value though. For instance if for some reason we have different strategies for upgrading guests in dev or staging one day. For now I think we are only considering Admin users for sending upgrade notifications. I think the workflow might resemble the restore from backup concept a little more but yes we want to allow folks to extend this to define their own strategies.

- esp: I thought of another use for the CONF switch to enable/disable. We might want to use it for disabling REST API calls that are specific to upgrades.

- grapex: Just to make sure, when we talk about a strategy for the guest upgrade this will be on the Trove side, yes?

- amcrn (talk) 17:58, 10 March 2014 (UTC): "We might want to use it for disabling REST API calls that are specific to upgrades.". I go back to the point of: if only an admin can use the upgrade-related api calls, then as long as your admins don't call it, there's no need to disable it. As for the defining their own strategies: I believe it should be extensible, but I'll make the point that the default strategy should be useable in production if someone so chooses. The default strategy then should be a reasonable default, that a majority of deployers would find acceptable.

- esp: grapex: yes the strategy for the guest upgrade will be in the Trove Guest code. The Trove API code only has to put a message in Rabbit that specifies the strategy.

- esp: amcrn: you are correct, if this is all in the admin API we may not care to have an extra config to disable upgrades. Strategy will resemble how we implemented backup/restore.

Supported Packaging Types

- amcrn (talk) 22:56, 4 March 2014 (UTC): Is there a short list of packaging schemes everyone believes we should support? In Austin it looked like some were ok with a simple "pip install", whereas others had strict requirements on package signing, crypto, etc.

- esp (talk) 23:06, 4 March 2014 (UTC): Initially we are looking at creating a tarball that would include all dependencies to avoid pulling down other packages. We could come up with a list though. (pypi, tar.gz, etc) The crypto piece should be optional and part of a strategy. The concern was having credentials unencrypted in swift.

- grapex: I think the rule should be that we keep things flexible enough that operators can use whatever upgrade strategies work best for them. Whatever goes into CI should be very simple IMO.

- amcrn (talk) 17:58, 10 March 2014 (UTC): As mentioned above, it should be flexible, but the default strategy should be a reasonable default that a majority of deployers would find acceptable.

- esp: grapex: amcrn: Agree that the upgrade feature should allow for different upgrade strategies. The RPC message seems general enough to support this and the strategy that consumes it will contain all the logic to handle the upgrade itself.

Upgrade Status

- amcrn (talk) 22:56, 4 March 2014 (UTC): It seems this is inferred, but since it's not explicitly mentioned I'll ask: There is no introduction of a new INSTANCE state (e.g. ACTIVE, BACKUP), correct? To take it a step further then, the idea would be that the user sees ACTIVE, despite a possible in-flight upgrade? In the case of an upgrade failure, would the user still see ACTIVE but we'd have FAILED recorded in the upgrade table?

- esp (talk) 23:06, 4 March 2014 (UTC): The thought was to 'hide' the upgrade status from the user although I'm not sure we decided that yet. That being said I think we need to create a set of upgrade states that are granular enough to handle various failures. For example if the instance can't talk to swift due to network problem we could just fail/abort the upgrade. An Admin will have to come back and retry again later. The user should not see any issues. But if we botch the install and the guest can't start at all then we got a big problem that the user will see.

- grapex: The table is called "upgrade" but it seems to me the more useful bit of information to store in the database is the current version of the guest agent as reported by the agent itself during its heartbeat / status update. We could store that along with a new status which would be, more generally, the status of the guest agent itself. This new status would be set to error states if the upgrade was botched, which the user wouldn't see but admins could peek at. Why not call the table "agent_status"?

- amcrn (talk) 17:58, 10 March 2014 (UTC): I believe that the heartbeat table could be modified to include the current guestagent version (and possibly new error states), but having a separate history table for upgrades is still valuable. The name could use some work though; guest_upgrade_history is probably more appropriate.

- esp: grapex: amcrn: It might make sense to move version and guest_status to a new home. I'm playing around with the idea in my head. The table could definitely be more descriptive and I really did want to name it something like guestagent_upgrade_history but I went with upgrades (with an 's') to stay consistent with what we currently have in the DB (i.e. instances, backups). Also, because it looks like we try to do things in a Rails-like way, naming models, controllers, and views closely to the table, I didn't want to wear out my keyboard.

Trove Database Schema

- amcrn (talk) 22:56, 4 March 2014 (UTC): Every upgrade attempt will be a new record in the upgrades table, or will each instance have a dedicated row? I would much prefer the former.

- esp (talk) 23:06, 4 March 2014 (UTC): I was thinking a new record for each upgrade event. Kind of like an audit trail or upgrade history table.

- amcrn (talk) 22:56, 4 March 2014 (UTC): What is the purpose of deleted/deleted_at for upgrades? In the scenario of a botched upgrade, a new upgrade request should be fired, incurring a new row to be inserted.

- esp (talk) 23:06, 4 March 2014 (UTC): The deleted flag was put there to stay consistent with some of the existing tables. In the event that we might want to purge the table that would support a logical delete for a batch delete job that would run once in a while. I'd be willing to drop both of these columns as I can't see a scenario for it at the moment.

- grapex: I'll be honest, I feel like there are dozens of features in Trove which could use something like this to complement the logs. I don't think building this audit trail as part of this feature makes sense; we should probably set our sights on making a real audit trail that would be more general. We talked a while back about having "events" in the database, and Basnight also worked on something similar a while back which was never completed.

- esp: I like the idea of an events table but I'm not sure we want to tackle it here. A guestagent upgrade could potentially fail in many areas, and being able to easily query its state will be necessary. We sort of have this idea with the backups table already for better or worse.

Rollback Support

- amcrn (talk) 22:56, 4 March 2014 (UTC): As for whether a rollback to a previous install should be supported: that's likely contingent upon the packaging schemes supported.

- esp (talk) 23:06, 4 March 2014 (UTC): Agreed. This one is a bit weird. It was my attempt to address the question of what happens when we upgrade and the guest can't start. My current approach was to write to a file (like the config file or a new one) when an upgrade is successful to keep track of that last known running version.

- agingh1pster: question 2 - what happens if an agent never upgrades? Is the instance considered dead?

- esp: I think that if we got into a situation where a Guest Agent failed to update (indefinitely) the cloud admin would have to figure out something else. i.e. contact the user, migrate the instance via backup/restore etc.

- grapex: I agree with Amcrn: rollbacks are very contingent on the packaging schemes. At Rackspace we're using Debian packages, which works well. I'm for supporting whatever people need to use but I think we should try to avoid inventing a new packaging system. At the very least I think the issue of rollbacks should be saved for a later iteration.

- amcrn (talk) 17:58, 10 March 2014 (UTC): The default strategy we use should have a rollback facility for free (much like how a failed pip install will not botch an existing install, etc.). In that scenario, if a guest agent never upgrades, the instance should still be considered ACTIVE.

- esp: I'm good with not doing anything special to address rollback. If the strategy supports it by default then we should allow it.
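The "write to a file when an upgrade is successful" idea mentioned in this discussion could be sketched as follows. The file name, JSON layout, and function names are assumptions made for illustration; the write goes through a temporary file and a rename so that a crash mid-write does not leave a half-written record.

```python
import json
import os
import tempfile


def record_last_known_good(path, version):
    """Atomically record the last version that started successfully."""
    directory = os.path.dirname(path) or "."
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as handle:
        json.dump({"version": version}, handle)
    os.replace(tmp_path, path)  # atomic rename on the same filesystem


def read_last_known_good(path):
    """Return the recorded version, or None if nothing has been recorded."""
    try:
        with open(path) as handle:
            return json.load(handle)["version"]
    except FileNotFoundError:
        return None


state_file = os.path.join(tempfile.mkdtemp(), "last_known_good.json")
before = read_last_known_good(state_file)
record_last_known_good(state_file, "v1.0.1")
after = read_last_known_good(state_file)
```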

Guest Agent Version

- amcrn (talk) 22:56, 4 March 2014 (UTC): Can you elaborate on the instance_version logic?

- esp (talk) 23:06, 4 March 2014 (UTC): This was kind of a convenience thing to be able to track the instance_version through an entire upgrade cycle. It saves us from parsing the name of the package/file. At first I was thinking, maybe you need to be at a particular version before upgrading. So if for some reason a guest agent is at version 1, version 2 failed but the next upgrade available is version 3. Not sure this is a valid case or not, especially if we just make sure that version 3 has everything it needs from version 1 and 2. Not to mention that if we discover that version 2 was crap and we want to install version 3 instead, that would definitely not be valid.

- esp: A question came up regarding where exactly the guest agent would get its version number to send to conductor in order to populate the Trove DB during start up. The idea was that whatever installer is used for a particular strategy, or the strategy itself, would provide that (e.g. pip freeze). It could conceivably be part of the PBR_VERSION that is written to setup.py.
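One way an agent installed with pip could look up its own version at start up is the standard library's `importlib.metadata` (Python 3.8+). This is a swapped-in alternative to the `pip freeze` and PBR_VERSION ideas mentioned above, not something the discussion settled on, and the distribution name "trove" is an assumption.

```python
from importlib import metadata


def installed_version(distribution, default="unknown"):
    """Return the installed version of a distribution, or `default` if absent."""
    try:
        return metadata.version(distribution)
    except metadata.PackageNotFoundError:
        return default


# The distribution name is an assumption; on a machine without it installed
# this simply yields "unknown".
agent_version = installed_version("trove")
```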

Trove Management API

- agingh1pster: I don't see api for getting the status of the agent upgrades

- esp: good point. I was gonna add that in but forgot. it would have to be a management API addition if we are really gonna hide the upgrade status from the user

- esp: We can update the existing /mgmt/instance route (the one that shows the details of the instance). In addition to this we can create a new route that will allow an admin to list all the failed upgrades. Need to document what this might look like.

- amcrn (talk) 17:58, 10 March 2014 (UTC): Good catch agingh1pster, the mgmt-show command should be amended to include some of this new information.

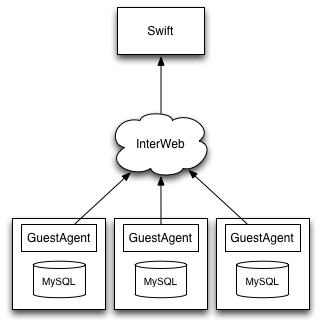

Non-Admin Call Initiated Upgrades

- grapex: I don't see any talk here about the idea of allowing Trove code to make the upgrade RPC call automatically if it detects an agent is out of date. If we stored the version of the guest in the database it would be very easy to detect this during any of the guest RPC calls. Trove could even wait for the upgrade to finish if it was in the middle of a task that was already long running (such as anything deferred to task manager). In my experience the upgrade time for a guest agent using debian packages is very small, and during an upgrade only a fraction of users are actually hitting the API, so it would be enormously helpful to be able to upgrade the users who need the newer version the most.

- amcrn (talk) 17:58, 10 March 2014 (UTC): As long as this feature can be turned off via a CONF, and as an alternative there's a mgmt api to initiate an upgrade for an instance-id.

- esp: I'd like to address the non-admin call initiated upgrade in a follow-up blueprint. It's probably the logical next step for upgrades.

Throttling Upgrade Notifications

- agingh1pster: What happens when there are lots of guest agents (hundreds of thousands)? This could flood the API with requests and clog up the whole system (including rabbitmq, swift, networking, etc.). This is the scenario where the Cloud Admin has a script that reads from a list of guest agent IDs and hammers the API.

- esp: We can handle this a number of ways but I think the best idea is to use the rate limiting feature we implemented a while back. We would modify it to rate limit the number of requests for the POST /{upgrade_url}. The client would receive an error response indicating that they have been rate limited. The admin would have to make a call to the Trove API to determine which guest agents got updated and which did not.

https://bugs.launchpad.net/trove/+bug/1294421
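To make the throttling idea concrete, here is a generic sliding-window limiter. It is not Trove's existing rate limiting code; the class name, limits, and injectable clock are assumptions made for illustration.

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Allow at most `max_requests` calls within any `window_seconds` span."""

    def __init__(self, max_requests, window_seconds, clock=time.monotonic):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.clock = clock
        self._timestamps = deque()

    def allow(self):
        now = self.clock()
        # Drop timestamps that have fallen out of the window.
        while self._timestamps and now - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()
        if len(self._timestamps) < self.max_requests:
            self._timestamps.append(now)
            return True
        return False


# A fake clock keeps the example deterministic.
fake_now = [0.0]
limiter = SlidingWindowLimiter(2, 60, clock=lambda: fake_now[0])
results = [limiter.allow(), limiter.allow(), limiter.allow()]
fake_now[0] = 61.0
results.append(limiter.allow())
```

A request for which `allow()` returns `False` would receive the rate-limited error response described above, and the admin would retry it later.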

Breaking REST API or RPC API

- esp: When the APIs are modified without considering backward compatibility, deployers are forced to upgrade components (control plane, guest agents) in a particular order. This could mean downtime or a rolling deployment is needed to avoid running mismatched versions of code. Implementing RPC versioning will help but the underlying problem is that we need to carefully consider accepting code that is not backwards compatible. In my opinion it should not matter if a deployer chooses to upgrade the control plane prior to the guest agents or vice versa.

- esp: I think we want to do our best to support a guest agent upgrade process that is eventually consistent (this already happens today). During any given deployment there will be a mix of guest agent versions until they are all upgraded to the latest. For cases where this isn't possible we would have to schedule downtime for the service.