TripleO/TuskarJunoPlanning

Overview

This planning document grew out of discussions at the TripleO mid-cycle Icehouse meetup in Sunnyvale (TripleO/TuskarJunoInitialDiscussion, OpenStack Management Features). The ideas raised there were then fleshed out individually, with much input from conversations with other OpenStack projects.

Our principal concerns during the Juno cycle are:

- integrating further with other OpenStack services, using their capabilities to enhance our TripleO management experience

- ensuring that Tuskar does not try to implement functionality that is better located in other projects

This document details our high-level goals for Juno. It does so at multiple levels; for each we provide:

- a description of the goal

- a list of project requirements and/or blueprints needed

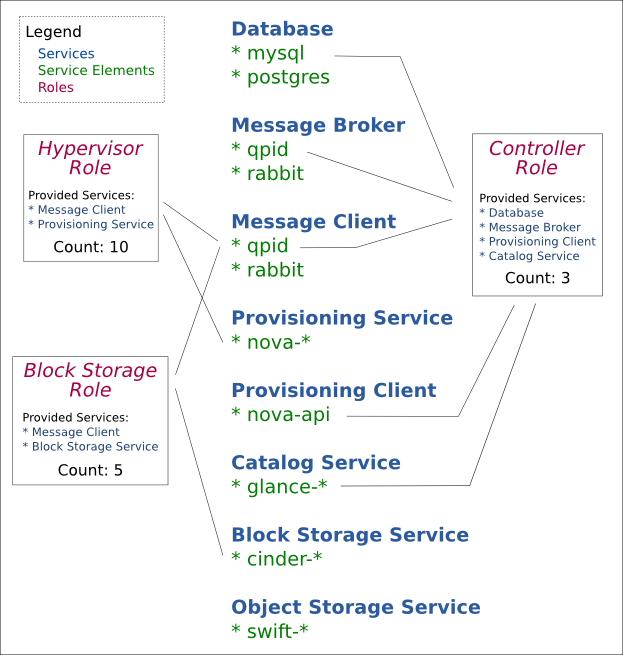

Cloud Service Representation

A cloud service represents a cloud function - provisioning service, storage service, message broker, database server, etc. A cloud service is fulfilled by a cloud service element; for example, a user can fulfill the message broker cloud service by either choosing the qpid-server element or the rabbitmq-server element.

With the cloud service concept in play, overcloud roles are updated as follows: instead of being associated with images, they are associated with a list of cloud service elements. This provides greater flexibility for the Tuskar user when designing the scalable components of a cloud. For example, instead of being limited to a Controller role, the user can separate out the network components of that role into a Network role and scale it individually.
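
The relationship between cloud services, elements, and roles can be sketched as a small data model. This is only an illustration, not Tuskar's actual schema: the `Role` class, the `SERVICE_ELEMENTS` catalogue, and the `validate` helper are hypothetical, and only the message broker entry (qpid-server, rabbitmq-server) comes from the text above. The other service and element names are placeholders.

```python
from dataclasses import dataclass, field

# Hypothetical catalogue: cloud service -> elements that can fulfill it.
# Only the message-broker entry comes from this document; the remaining
# service and element names are placeholders for illustration.
SERVICE_ELEMENTS = {
    "message-broker": {"qpid-server", "rabbitmq-server"},
    "database-server": {"example-db-element"},
    "network": {"example-network-element"},
}


@dataclass
class Role:
    """An overcloud role, defined by the element chosen for each service."""
    name: str
    choices: dict = field(default_factory=dict)  # service name -> element name

    def elements(self):
        """Return the sorted list of elements this role is built from."""
        return sorted(self.choices.values())


def validate(role):
    """Raise ValueError if a chosen element cannot fulfill its service."""
    for service, element in role.choices.items():
        if element not in SERVICE_ELEMENTS.get(service, set()):
            raise ValueError(f"{element!r} cannot fulfill {service!r}")


# The network components are split out of the Controller role so that
# they can be scaled on their own.
controller = Role("Controller", {
    "message-broker": "rabbitmq-server",
    "database-server": "example-db-element",
})
network = Role("Network", {"network": "example-network-element"})
for role in (controller, network):
    validate(role)
```

Because a role is just a mapping from services to elements, swapping rabbitmq-server for qpid-server changes one entry and leaves the rest of the role untouched.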

For Juno, Tuskar will provide role defaults or suggestions corresponding to current default roles in Icehouse, as well as an "All-in-One" role. Each default role will be automatically associated with a list of cloud service elements and a pre-created image. Later iterations of Tuskar will allow for more user customization in this area.

If possible, we would also like to support customized roles that specify their own elements. This would require the development of additional TripleO features as described in a later section (Custom Roles).

Tuskar

- ensure existence of default images

- create default roles, each associated with a list of elements, an image, and a Heat template

TripleO-UI

- update deployment workflow to accommodate cloud services

Overcloud Planning and Deployment

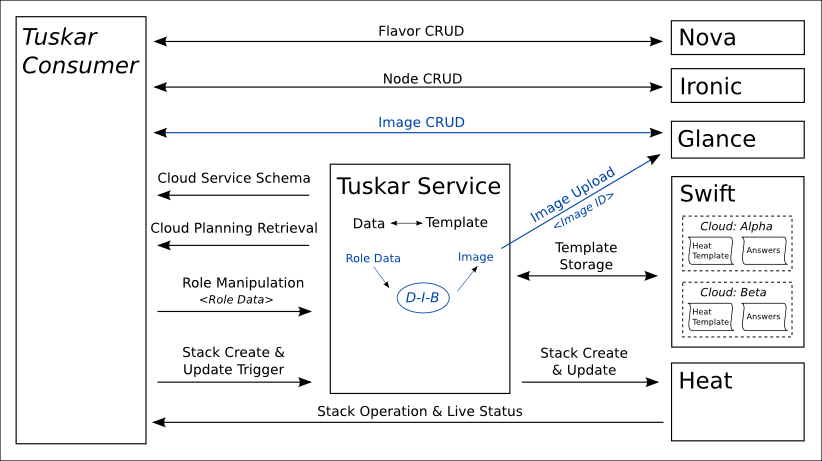

In Icehouse, the planning stage of overcloud deployment is represented by data stored in Tuskar database tables. For Juno, we would like to remove the database from Tuskar. Instead, the planning stage of a deployment will be represented by the full Heat template that would be used to deploy it. Since Heat does not intend to be a template store, this template will be stored in Swift instead. When the overcloud is ready to be deployed, the Tuskar service will pull the template out of Swift.

Once an overcloud is deployed, all Tuskar interactions with that overcloud should be done through Heat. This includes queries about running Nova instances and relevant Ironic nodes.
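
A minimal sketch of the planning flow described above, assuming the plan is nothing more than a serialized template kept in an object store. The `InMemoryObjectStore` class is a stand-in for Swift with a hypothetical interface (it is not the Swift client API), and the container name and helper functions are likewise invented for illustration. JSON is used only to keep the sketch within the standard library.

```python
import json


class InMemoryObjectStore:
    """Stand-in for an object store such as Swift (hypothetical interface)."""

    def __init__(self):
        self._objects = {}

    def put(self, container, name, body):
        self._objects[(container, name)] = body

    def get(self, container, name):
        return self._objects[(container, name)]


PLAN_CONTAINER = "tuskar-plans"  # hypothetical container name


def save_plan(store, plan_name, template):
    """Persist the full template that represents a deployment plan."""
    store.put(PLAN_CONTAINER, plan_name, json.dumps(template, sort_keys=True))


def load_plan(store, plan_name):
    """Fetch the template back when the overcloud is ready to deploy."""
    return json.loads(store.get(PLAN_CONTAINER, plan_name))


store = InMemoryObjectStore()
plan = {"heat_template_version": "2013-05-23", "resources": {}}
save_plan(store, "overcloud", plan)
restored = load_plan(store, "overcloud")
```

With this shape, Tuskar itself holds no planning state: everything needed to deploy is recoverable from the stored template.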

Heat

- Allow stacks to be updated without forcing the user to re-provide all parameters (https://bugs.launchpad.net/heat/+bug/1224828)

- Stack update, allow retry from failed states (https://blueprints.launchpad.net/heat/+spec/update-failure-recovery)

- Stack update, rolling updates (canary deployments etc) (https://blueprints.launchpad.net/heat/+spec/rolling-updates)

- Stack update, enable cancelling in-progress update (e.g., to pause or rollback) (https://blueprints.launchpad.net/heat/+spec/cancel-update-stack)

- Possibly allow inline specification of provider resources: for example, a resource that generates a Heat template, which is then referenced as a type, as in type: {get_attr: [SomeResource, template]} (may not be required by Tuskar)

- nested resource templates

- Stack preview (preview what would happen via stack-create) (https://blueprints.launchpad.net/heat/+spec/preview-stack) (may not be required for Juno)

- Stack check (sync state of stack with the real state of underlying resources, e.g., persist out-of-band failures in stack resource states) (https://blueprints.launchpad.net/heat/+spec/stack-check) (may not be required for Juno)

- Stack converge - "fixes" stack and returns to known state (https://blueprints.launchpad.net/heat/+spec/stack-convergence) (may not be required for Juno)

TripleO

- update Heat templates in TripleO for: HOT, provider resources, software config

Tuskar

- provide default templates for default roles (these will be wrapped in ProviderResources)

- construct an overcloud template that references the default roles through ProviderResources

- ensure constructed templates define the appropriate ResourceAttributes to be queried through the UI/CLI

- given a Heat template constructed in this way, parse it in such a way as to retrieve the role specification and Heat parameters

- rebuild Tuskar to save and retrieve Heat templates from Swift

- update CLI as necessary
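
The template construction and parsing items in the list above can be sketched as a round trip over a HOT-shaped dictionary. This is an assumption-laden illustration: the `Tuskar::` type prefix, the function names, and the property names are hypothetical, and a real implementation would also need an environment that maps each custom type to its role template.

```python
ROLE_TYPE_PREFIX = "Tuskar::"  # hypothetical provider-resource namespace


def build_overcloud_template(roles, parameters):
    """Build a HOT-shaped dict with one provider resource per role.

    roles:      role name -> properties passed to that role's template
    parameters: top-level Heat parameter definitions
    """
    resources = {
        name: {"type": ROLE_TYPE_PREFIX + name, "properties": dict(props)}
        for name, props in roles.items()
    }
    return {
        "heat_template_version": "2013-05-23",
        "parameters": dict(parameters),
        "resources": resources,
    }


def parse_overcloud_template(template):
    """Recover the role specification and parameters from a template."""
    roles = {}
    for name, resource in template.get("resources", {}).items():
        # Only resources in the role namespace are treated as roles.
        if resource.get("type", "").startswith(ROLE_TYPE_PREFIX):
            roles[name] = dict(resource.get("properties", {}))
    return roles, dict(template.get("parameters", {}))


roles = {"Controller": {"count": 1}, "Compute": {"count": 3}}
parameters = {"AdminPassword": {"type": "string", "hidden": True}}
template = build_overcloud_template(roles, parameters)
parsed_roles, parsed_parameters = parse_overcloud_template(template)
```

Keeping the role information recoverable from the template itself is what lets the template stand in for the database tables used in Icehouse.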

TripleO-UI

- update stack queries to take advantage of Heat ResourceAttributes, so that information such as Role is retrieved through Heat

- update deployment workflow

Custom Roles

Although default, predefined roles are the immediate goal, it would be nice to also allow users to define their own roles. To do so, they would specify their own list of elements. Tuskar would then use that information to call out to diskimage-builder to create a custom image matching the role; and it would create a custom Heat template for that role that the master overcloud template could then use.

If the above cannot be achieved within Juno, a simpler alternative would be to allow users to specify a custom role, and then associate it with manually created templates and images.

The remainder of the workflow is the same as with default roles.

Heat

- diskimage-builder resource (a question was raised about the ability to spawn a VM to host it in the seed/undercloud); also an OS::GlanceImageUploader resource

TripleO

- given an image element, return the list of Heat parameters that it needs

Tuskar

- given a role specification, use diskimage-builder to construct a matching image (derived from the role's image elements)

- given a role specification, return the list of Heat parameters that it needs (derived from the role's image elements)

- given a role specification and its Heat parameters, construct a Heat template for that role that meets those specifications

- allow user to specify a custom role and associate it with a manually created template and image

TripleO-UI

- create custom role workflow

High Availability

One of TripleO's top priorities for Juno is to allow the deployment of a High-Availability (HA) overcloud. We would like to extend Tuskar to ensure that it can be used to deploy an HA overcloud as well.

TripleO

- Deploy HA Overcloud

- glusterfs

- pacemaker, corosync

- neutron (?)

- heat-engine A/A

- qpid proton (assuming AMQP 1.0 support has merged into oslo.messaging and oslo.messaging has merged into each core project; if not, rabbitmq will be used)

- etc.

Tuskar

- allow the generation of Heat templates that support HA

TripleO-UI

- deployment workflow support for HA architecture

Auto-Scaling

Having the option for an auto-scaling cloud deployment would be greatly appealing to many users. Heat is actively working on auto-scaling support, as are other projects.

Ceilometer

- Inhibit autoscaling during stack abandon/adopt (quiesce and revitalize)

Heat

- hooks to do cleanup on scale-down (e.g., host evacuation) (https://blueprints.launchpad.net/heat/+spec/update-hooks)

- choose victim on scale-down, or specify strategy for choosing (e.g., oldest first or newest first) (https://blueprints.launchpad.net/heat/+spec/autoscaling-parameters)

- support complex conditionals when choosing victim on scale-down (e.g., get notification or poll metric via ceilometer related to occupancy or other application metrics); possibly handled by above, if we can get ceilometer to pass us appropriate data when signalling the scaling policy, TBC (may not be required for Juno)

- Method to inhibit autoscaling/alarms during abandon/adopt (and suspend/resume?)
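
The victim-selection strategies named in the list above (oldest first or newest first) amount to an ordering over instances by creation time. The sketch below is not Heat's implementation; the `choose_victims` function, the strategy names, and the instance dictionary layout are all assumptions made for illustration.

```python
from datetime import datetime

STRATEGIES = ("oldest_first", "newest_first")  # hypothetical strategy names


def choose_victims(instances, count, strategy="oldest_first"):
    """Return the ids of the instances to remove on scale-down.

    instances: list of dicts with "id" and "created_at" (datetime) keys
    count:     number of instances to remove
    """
    if strategy not in STRATEGIES:
        raise ValueError(f"unknown strategy: {strategy!r}")
    ordered = sorted(
        instances,
        key=lambda instance: instance["created_at"],
        reverse=(strategy == "newest_first"),
    )
    return [instance["id"] for instance in ordered[:count]]


instances = [
    {"id": "compute-1", "created_at": datetime(2014, 3, 1)},
    {"id": "compute-2", "created_at": datetime(2014, 4, 1)},
    {"id": "compute-0", "created_at": datetime(2014, 2, 1)},
]
oldest = choose_victims(instances, 2, "oldest_first")
newest = choose_victims(instances, 1, "newest_first")
```

A metric-driven strategy, such as the occupancy-based conditionals mentioned above, would replace the sort key with a value supplied by Ceilometer.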

Tuskar

- allow the generation of Heat templates that support auto-scaling

TripleO-UI

- update deployment workflow to include options that allow an auto-scaled overcloud deployment

Node Management (Ironic)

We would like to switch over to using Ironic in the near future, as there are a host of features that would depend upon it.

Ironic

- Ironic graduation

- CI jobs

- Nova driver

- Serial console

- Migration path

- User documentation

- Autodiscovery of nodes

- Ceilometer

- Tagging

- scalability

Nova

- create a nova filter for exact matches to Ironic nodes (including all node metadata) (https://review.openstack.org/#/c/83728/)
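
One possible reading of an exact-match filter is sketched below: a node passes only if its hardware properties equal the flavor's, and every extra spec on the flavor is matched by the node's metadata. This is an interpretation rather than the code under review at the link above; the function name, the dictionary keys, and the treatment of metadata are assumptions.

```python
HARDWARE_KEYS = ("cpus", "memory_mb", "local_gb")  # assumed property names


def exact_match(node, flavor):
    """Return True only if the node matches the flavor exactly.

    node:   dict with hardware keys and an optional "metadata" dict
    flavor: dict with hardware keys and an optional "extra_specs" dict
    """
    # Hardware must be equal, not merely sufficient.
    if any(node.get(key) != flavor.get(key) for key in HARDWARE_KEYS):
        return False
    metadata = node.get("metadata", {})
    extra_specs = flavor.get("extra_specs", {})
    return all(metadata.get(key) == value for key, value in extra_specs.items())


flavor = {"cpus": 8, "memory_mb": 16384, "local_gb": 500,
          "extra_specs": {"tag": "compute"}}
matching = {"cpus": 8, "memory_mb": 16384, "local_gb": 500,
            "metadata": {"tag": "compute"}}
bigger = dict(matching, memory_mb=32768)
untagged = dict(matching, metadata={})
```

Here `bigger` is rejected even though it has enough memory, which is the difference between an exact-match filter and the usual capacity-based filters.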

TripleO

- Allow settings for Nova Scheduler (https://review.openstack.org/#/c/84131/)

Tuskar

- use exact match filter when deploying overcloud

TripleO-UI

- add node tag values to flavor extra_specs

Metric Graphs

Data visualization is a key part of maintaining a cloud. We would like to start integrating Ceilometer usage into Tuskar.

Ceilometer

- Combine samples for different meters in a transformer to produce a single derived meter

- Rollup of coarse-grained statistics for UI queries

- Configurable data retention based on rollups

- Overarching "health" metric for nodes

- Ceilometer native auth for alarm notifications (and possibly metrics in future) (https://blueprints.launchpad.net/ceilometer/+spec/trust-alarm-notifier)

- Eliminate central agent SPoF

- SNMP batch mode, one bulk command per node per polling cycle
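
The first item in the list above, combining samples from different meters into one derived meter, can be illustrated with a memory utilization percentage computed from a "used" and a "total" meter. This sketch does not use Ceilometer's transformer interface; the function, the sample layout, and the meter names are hypothetical.

```python
def derive_memory_util(samples):
    """Combine memory.used and memory.total samples into memory.util.

    samples: list of dicts with "resource_id", "meter", and "volume" keys
    Returns one derived sample per resource that reported both meters.
    """
    by_resource = {}
    for sample in samples:
        meters = by_resource.setdefault(sample["resource_id"], {})
        meters[sample["meter"]] = sample["volume"]

    derived = []
    for resource_id, meters in sorted(by_resource.items()):
        used = meters.get("memory.used")
        total = meters.get("memory.total")
        if used is None or not total:
            continue  # skip incomplete data and avoid dividing by zero
        derived.append({
            "resource_id": resource_id,
            "meter": "memory.util",
            "volume": 100.0 * used / total,
        })
    return derived


samples = [
    {"resource_id": "node-1", "meter": "memory.used", "volume": 2048},
    {"resource_id": "node-1", "meter": "memory.total", "volume": 8192},
    {"resource_id": "node-2", "meter": "memory.used", "volume": 1024},
]
derived = derive_memory_util(samples)
```

Only node-1 yields a derived sample here, because node-2 never reported a total.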

For additional data:

- Acquire hardware-oriented metrics via IPMI (e.g., voltage, fan speeds, etc.)

- Keystone v3 usage would avoid the need for IPMI credentials, allowing pollster-style interaction

- Look into consistent hashing; see if it can be reused in Ceilometer, though it requires a stateful DB
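
For readers unfamiliar with consistent hashing, the sketch below shows the general technique applied to assigning polled resources to agents. It is a generic illustration, not code from Ceilometer or any other OpenStack project. The `HashRing` class and its parameters are hypothetical, and agent membership is passed in statically, which sidesteps the question of where that state would be stored.

```python
import bisect
import hashlib


class HashRing:
    """Minimal consistent-hash ring mapping resources to agents."""

    def __init__(self, agents, replicas=64):
        # Each agent is placed on the ring at several points so that
        # load spreads more evenly.
        self._ring = sorted(
            (self._hash(f"{agent}-{index}"), agent)
            for agent in agents
            for index in range(replicas)
        )
        self._points = [point for point, _ in self._ring]

    @staticmethod
    def _hash(value):
        return int(hashlib.md5(value.encode("utf-8")).hexdigest(), 16)

    def agent_for(self, resource):
        """Return the agent owning the first ring point after the resource."""
        if not self._ring:
            raise ValueError("ring has no agents")
        index = bisect.bisect(self._points, self._hash(resource))
        return self._ring[index % len(self._ring)][1]


resources = [f"node-{number}" for number in range(200)]
three_agents = HashRing(["agent-a", "agent-b", "agent-c"])
two_agents = HashRing(["agent-a", "agent-b"])
before = {name: three_agents.agent_for(name) for name in resources}
after = {name: two_agents.agent_for(name) for name in resources}
```

When agent-c is removed, only the resources it owned are reassigned; every resource that belonged to agent-a or agent-b stays where it was.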

Ironic

- Add a periodic task to send hardware sensor data to ceilometer (https://blueprints.launchpad.net/ironic/+spec/send-data-to-ceilometer)

TripleO

- update undercloud images to allow for the monitoring of hardware (set up Central Agent for polling all hardware)

Tuskar

- add SNMP agents to overcloud images

TripleO-UI

- Add Ceilometer-based graphs and metric data

User Interfaces

This is a general category intended to encapsulate work needed in the UI and CLI.

Horizon

- separate horizon from openstack-dashboard

- test the replacement of lesscpy with pyScss

- improve error handling so that messages are less generic

- additional work on the plugin architecture to support dynamic hardware-specific views

Tuskar-CLI

- create a tuskar-cli plugin for OpenStackClient

TripleO-UI

- increase the modularity of views

- investigate usage of Heat UI components in Horizon (for example, parameter form building)

- create a mechanism for asynchronous communication