Oslo/blueprints/message-proxy-server

Oslo.messaging: message proxy

NOTE: The consensus at the design summit in Atlanta was to go with "enhancing neutron metadata agent and use notification over HTTP" (Clint Byrum).

This blueprint needs to be updated to reflect that consensus, and a new Neutron blueprint for the HTTP proxy needs to be filed. The first implementation can then follow.

- blueprint https://blueprints.launchpad.net/oslo.messaging/+spec/message-proxy-server

- https://review.openstack.org/77862 _driver: implement unix domain support

- https://review.openstack.org/77863 proxy: implement proxy server

- https://etherpad.openstack.org/p/juno-summit-oslo-messaging-rpc-proxy

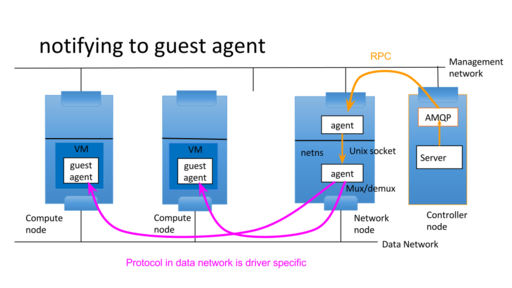

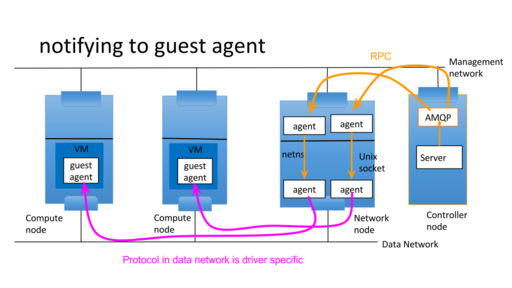

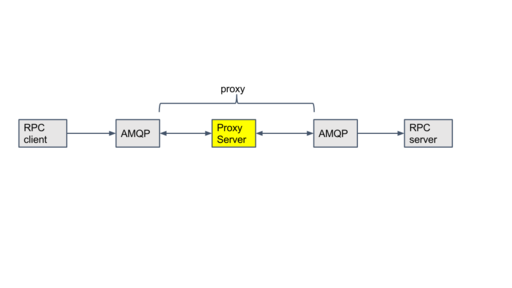

Proxy messages between two messaging servers or transports

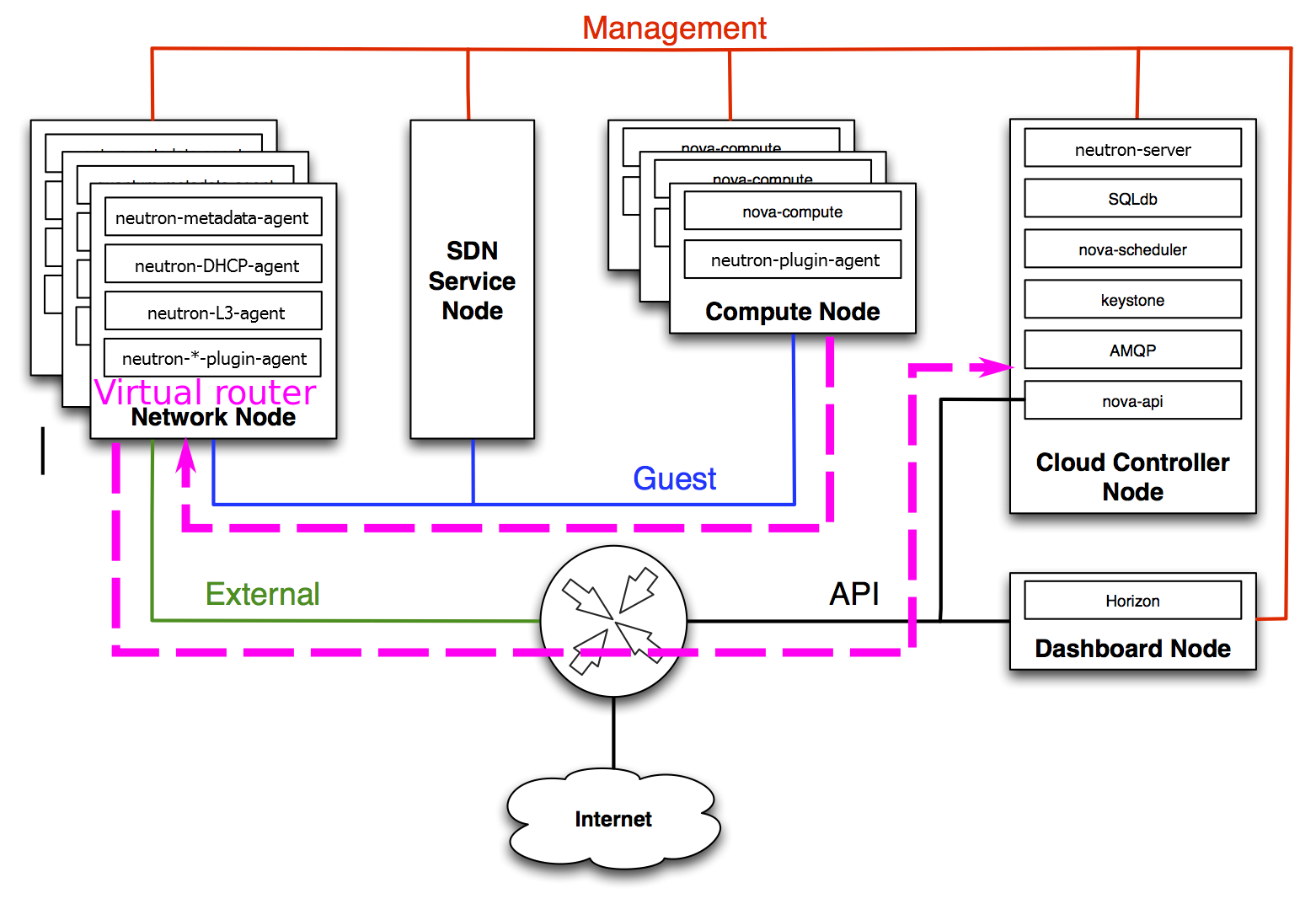

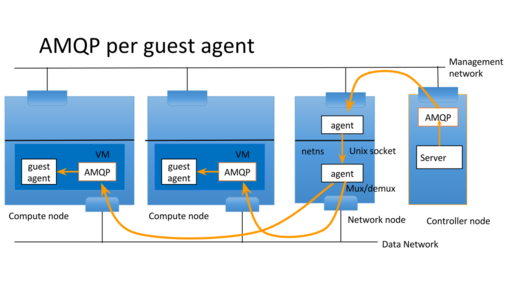

One message server sits in the OpenStack control network, which the OpenStack servers connect to; another sits in the OpenStack tenant network, which the agents connect to. An OpenStack server wants to send RPC messages to agents in tenant networks, but the control network isn't directly connected to the tenant network. The proxy server therefore relays RPC messages over a unix domain socket to cross the Linux netns boundary. The supported RPC types are cast, call and fanout. Notification isn't supported because it isn't needed at the moment, but it would be easy to add.

Use Cases

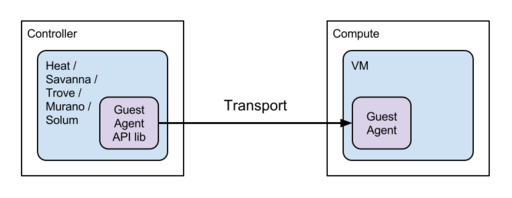

Many OpenStack projects use the server <-> guest agent pattern:

- Heat, Savanna, Trove, Murano, Solum

- Neutron for NFV(Network Function Virtualization)

Neutron also wants similar communication with guest agents for NFV support.

https://blueprints.launchpad.net/neutron/+spec/adv-services-in-vms

Each guest agent has its own UUID, which is used as the host part of the RPC topic. The server keeps track of those agent UUIDs and sends RPC requests with the UUID as the host.
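The bookkeeping described above could look roughly like the following. This is a minimal sketch, not the blueprint's implementation: `Target`, `AgentRegistry` and the topic name are hypothetical, and how an agent's UUID gets assigned is not specified here, so the caller simply supplies it.

```python
import uuid
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    """Hypothetical stand-in for an RPC target: a topic plus a host part."""
    topic: str
    host: str


class AgentRegistry:
    """Server-side record of known guest agents, keyed by agent UUID."""

    def __init__(self, topic):
        self._topic = topic
        self._agents = {}

    def register(self, agent_id, description):
        """Remember an agent; agent_id must be a valid UUID string."""
        agent_id = str(uuid.UUID(agent_id))  # normalises and validates
        self._agents[agent_id] = description
        return agent_id

    def target_for(self, agent_id):
        """Build the target for one agent, using its UUID as the host."""
        if agent_id not in self._agents:
            raise KeyError("unknown agent %s" % agent_id)
        return Target(topic=self._topic, host=agent_id)
```

A server would call `registry.target_for(agent_id)` before each request, so that only the agent whose UUID matches the host part receives it.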

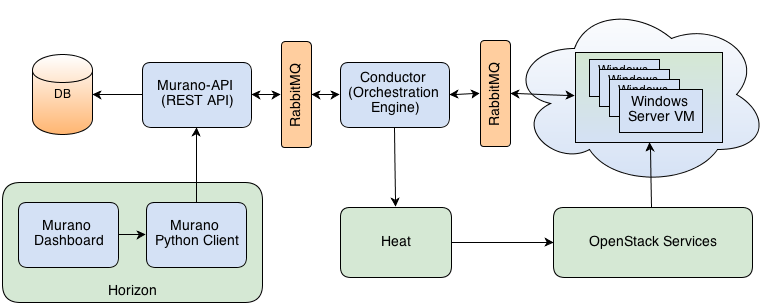

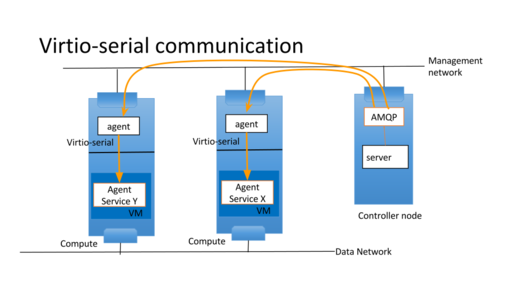

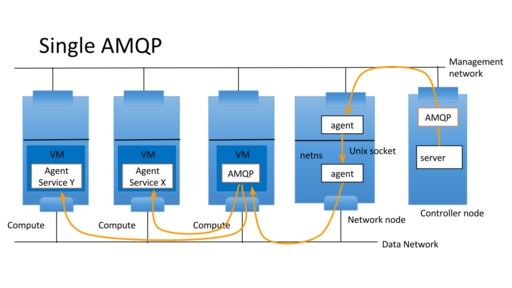

- example with amqp in guest

It is convenient for the guest agent to use RPC. In that case an AMQP service can be deployed in the tenant network.

- 0MQ (suggested by Dmitry Mescheryakov)

Instead of AMQP, 0MQ can also be used, since oslo.messaging supports it as well.

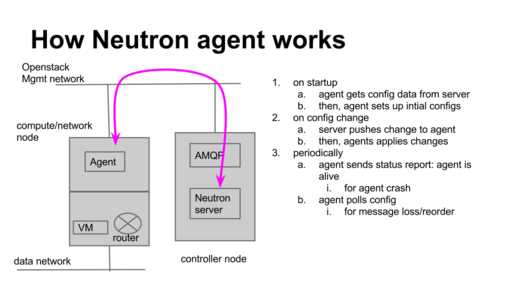

How neutron agents (not only guest agents, but also existing agents on the host) work

- communication from server to agents

- neutron server sends messages for configuration changes.

- guest agents update their settings/configuration on a new request. For example, when a user changes firewall rules, the requested change is pushed to the agent.

- communication from guest to server

- guest agent sends messages to the neutron server for maintenance.

- status report: update stats/agent liveness. In the loadbalancer example, the loadbalancer needs to report its status (active/slave) to the neutron server, which then reacts accordingly.

- periodically get the current configuration in case of message reorder/loss: agents need to poll and resync their configuration in case the agent's local configuration is out of sync with what the neutron server holds.
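The push-plus-periodic-resync behaviour in the list above can be illustrated with a small model. It is only a sketch under assumptions: the version counter, `ConfigServer` and `GuestAgent` are hypothetical names introduced here, and a real agent would go through RPC rather than call the server object directly.

```python
class ConfigServer:
    """Hypothetical stand-in for the neutron server side."""

    def __init__(self):
        self.version = 0
        self.config = {}

    def update(self, **changes):
        """Apply a change and return the (version, changes) push message."""
        self.config.update(changes)
        self.version += 1
        return self.version, dict(changes)

    def get_config(self):
        """Full snapshot that an agent fetches when it polls."""
        return self.version, dict(self.config)


class GuestAgent:
    """Hypothetical agent that applies pushes and periodically resyncs."""

    def __init__(self, server):
        self._server = server
        self.version = 0
        self.config = {}

    def on_push(self, version, changes):
        """Apply a pushed change only if it is the next one in sequence."""
        if version != self.version + 1:
            return False  # lost or reordered message; leave it to resync()
        self.config.update(changes)
        self.version = version
        return True

    def resync(self):
        """Periodic poll: replace local state if it differs from the server's."""
        version, config = self._server.get_config()
        if version == self.version:
            return False
        self.config, self.version = config, version
        return True
```

If a push is lost, the next `on_push` is rejected because of the version gap, and the following `resync()` brings the agent back in line with the server.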

design/implementation

what's added to oslo.messaging

- RPC proxy server which relays RPC from one message queue server to another message queue server

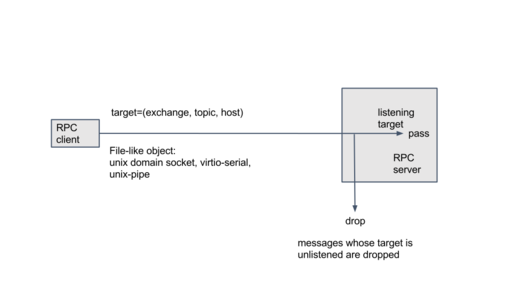

- new transport support over unix domain socket. Although only unix domain sockets are supported for now, it's easy to add support for other file-like objects, such as a unix pipe.
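To show why a file-like interface makes other channels easy to add, here is a minimal sketch of message framing over file-like objects. The wire format (a 4-byte length prefix followed by JSON) and the function names are assumptions made for illustration; they are not the format used by the patches under review.

```python
import json
import socket
import struct

_HEADER = struct.Struct("!I")  # assumed framing: 4-byte big-endian length


def send_message(stream, message):
    """Write one length-prefixed JSON message to a binary file-like object."""
    payload = json.dumps(message).encode("utf-8")
    stream.write(_HEADER.pack(len(payload)))
    stream.write(payload)
    stream.flush()


def _read_exact(stream, size):
    """Read exactly `size` bytes, or raise EOFError if the stream ends early."""
    chunks = []
    remaining = size
    while remaining:
        chunk = stream.read(remaining)
        if not chunk:
            raise EOFError("stream closed mid-message")
        chunks.append(chunk)
        remaining -= len(chunk)
    return b"".join(chunks)


def recv_message(stream):
    """Read one length-prefixed JSON message from a binary file-like object."""
    (length,) = _HEADER.unpack(_read_exact(stream, _HEADER.size))
    return json.loads(_read_exact(stream, length).decode("utf-8"))


def demo():
    """Send one message across a connected pair of stream sockets."""
    proxy_side, guest_side = socket.socketpair()
    with proxy_side, guest_side:
        with proxy_side.makefile("wb") as writer, \
                guest_side.makefile("rb") as reader:
            send_message(writer, {"method": "ping", "args": {"n": 1}})
            return recv_message(reader)
```

Because `send_message` and `recv_message` only need `write`, `flush` and `read`, the same functions would work over a pipe opened in binary mode.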

supported RPC semantics

RPC cast, call and fanout are supported. Notification isn't supported because I don't need it yet; however, it would be easy to add. A tricky part is the timeout for call semantics, because the message carries no timeout information.
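One possible way to cope with the missing timeout is for the proxy to apply its own deadline when waiting for a reply. The sketch below illustrates that idea only; `relay_call`, `CallTimeout` and the default value are hypothetical and do not describe what the proposed proxy does.

```python
import queue

DEFAULT_CALL_TIMEOUT = 60.0  # seconds; hypothetical proxy-side setting


class CallTimeout(Exception):
    """Raised when no reply arrives within the proxy's own deadline."""


def relay_call(request, forward, replies, timeout=DEFAULT_CALL_TIMEOUT):
    """Forward a call and wait for its reply on `replies` (a queue.Queue).

    The request itself carries no timeout, so the proxy falls back to a
    locally configured one.
    """
    forward(request)
    try:
        return replies.get(timeout=timeout)
    except queue.Empty:
        raise CallTimeout("no reply within %.1fs" % timeout) from None
```

The drawback of this approach is that the proxy's deadline may not match the caller's, so the caller can give up before or after the proxy does.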

Agent Management

It is assumed that the agent in the VM is started by a start-up script or something equivalent (cloud-init) and runs all the time. If some form of agent management is necessary, a super-agent that manages sub-agents can be introduced. The super-agent starts/kills sub-agents based on requests from the OpenStack server.

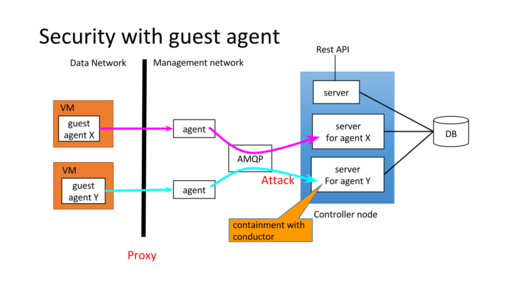

Security Considerations

There are security concerns with allowing guest agents to communicate with OpenStack servers. There are several ways to mitigate them.

- One-way cast: write-only with unix pipe

- guest agent can’t send anything to server

- One way call: needs bidirectional communication

- guest agent can send only RPC reply

- allow guest agent to cast

- allow guest agent to call

- limit the targets (exchange, topic, host) to listen on

- conductor service to audit requests/mitigate DoS
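The mitigations listed above amount to a policy check in the proxy before a guest message is relayed. The following is a minimal sketch of such a check; `Target`, `GuestPolicy` and the message kinds are hypothetical names, not part of oslo.messaging.

```python
from typing import NamedTuple, Optional


class Target(NamedTuple):
    """Hypothetical (exchange, topic, host) triple identifying a destination."""
    exchange: str
    topic: str
    host: Optional[str] = None


class GuestPolicy:
    """Decide which messages coming from a guest agent may be relayed."""

    def __init__(self, allowed_kinds, allowed_targets=()):
        self._kinds = frozenset(allowed_kinds)      # e.g. {"reply", "cast"}
        self._targets = frozenset(allowed_targets)  # explicit allowlist

    def permits(self, kind, target=None):
        """Return True if a message of `kind` to `target` may pass."""
        if kind not in self._kinds:
            return False
        if kind == "reply":
            return True  # replies go back to the caller, not to a target
        return target in self._targets


# "One-way call": the guest may only send RPC replies.
reply_only = GuestPolicy(allowed_kinds={"reply"})

# Guest may also cast, but only to one allowlisted target.
cast_limited = GuestPolicy(
    allowed_kinds={"reply", "cast"},
    allowed_targets={Target("neutron", "agent-report")},
)
```

A stricter deployment would start from `reply_only` and add kinds or targets only as a given service needs them.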

Other things to consider

- live migration(Daniel P. Berrange)

The virtio serial channel can get closed due to QEMU migration while the proxy is in the middle of sending data to the guest VM. This can cause a lost or mangled message in the guest, and the sender won't know about it if the channel is write-only, since there's no ACK.

Agent part

The agent part is up to the subproject that uses it, and is out of scope for oslo.messaging for now. It would be addressed by the unified agent effort: https://wiki.openstack.org/wiki/UnifiedGuestAgent

Other approaches/ideas

Here are ideas/approaches that came up during discussions, recorded for reference.

network based agent (Sahara/Marconi case)

Sahara communicates with the guest agent over the public network, so it doesn't need to cross the boundary.

server <-mgmt network-> MQ (Marconi) <-public network-> agent

Guest agents talk to Marconi via its public REST API and issue RPCs over it. The assumption is that the VM in which the guest agent runs is on a tenant network connected to the public network.

enhancing neutron metadata agent and use notification over HTTP(Clint Byrum)

What if we swapped your local socket out for a connection managed by something similar to the neutron metadata agent that forwards connections to the EC2 metadata service?

- guest boots, agent contacts link-local on port 80 with a REST request for a communication channel to service XYZ.

- metadata agent is allocated a port on the network of the agent and proxies that port to the intended endpoint.

- guest now communicates directly with that address, still nicely confined to the private network without any sort of gateway, but with an ability to talk to "under the cloud" services.

It will be generically usable no matter the transport desired (marconi, amqp, 0mq, whatever) and is directly modeled in Neutron's terms, rather than requiring tight coupling with Nova and the hypervisors.

using provider network

Create a provider network to which the ServiceVM is connected.