NetworkLoadBalancingIntegrationsWithQuantum

Contents

- 1 Network Load Balancing Integrations with Quantum Networks

- 2 Accepted Network Load Balancing Techniques

- 3 Stateful MAC Frame Forwarding – Transparent Bridging

- 4 Stateful Flow Initiation and Direct Server Return

- 5 Stateful Connection Managers

- 6 Stateful Proxies

- 7 Highly Available Load Balancing Clusters

- 8 Additional Network Interfaces

- 9 MAC Layer Redundancy and High Availability

- 10 Network Layer High Availability

- 11 Quantum Network Load Balancing Connectivity Requirements

- 12 Load Balancing Device Provisioning Requirements

- 13 Per Tenant Provisioning Requirements

- 14 Quantum Provider Network Load Balancer Connectivity Requirements

- 15 Quantum Controlled Network Load Balancer Connectivity Requirements

- 16 Deploying Multi-Tenant Devices with Routable Shared Networks

- 17 Deploying Multi-Tenant Devices with Shared and Quantum Tenant Networks

- 18 Deploying A Single-Tenant Service Load Balancer

- 19 Deploying Single-Tenant Devices as the Gateway for Quantum Tenant Networks

Network Load Balancing Integrations with Quantum Networks

In the contemporary data center, network based load balancing is a requirement for many application deployments. Network based load balancing services provide high availability, scaling, and operational convenience by virtualizing the network connectivity to varying degrees. The higher the degree of network virtualization, the more transparent and beneficial the service becomes to the application itself. The ability to offload key application deployment components, combined with ever increasing 'on the wire' application level functionality, has led application architects and developers to view network based load balancing devices as an assumed service. Because it aims to be transparent to the application, the load balancing service can be very complex in its use of the network. Providing a flexible network integration model for load balancing as a service (LBaaS) with Quantum networks is therefore an important task to understand properly.

Another key adoption factor for virtualized network deployments is their use of well established and available technologies. You see this concept throughout the core OpenStack projects, with their emphasis on commodity components versus alternative deployment approaches which are less traditional, though often more advanced. This factor should apply to the network integration of the load balancing service with Quantum as well. The fact that the load balancing service is deployed in, or integrated with, a Quantum managed network does not mean that a fundamental shift in the technology used for network based load balancing should occur. While virtualization technologies, like network load balancing, have progressed faster than traditional networking infrastructure, the Quantum network integration must assume some degree of best practice deployment utilizing well established technologies. Quantum should be as transparent to the network load balancing service as the network load balancing service aims to be to the application deployment.

While alternative approaches to distributing network traffic exist, the need to be as transparent to the application as possible led the industry to prefer stateful network designs. In contrast to the stateless concepts found in network transport and routing, stateful networking purposely inserts a data plane controller, or cluster of controllers, which dictates a particular flow path to a specific application instance. This control point is the means of enforcing high availability, scaling, and advanced session, presentation, and application level offloading. The dependency on a single network path for a flow means the technology providing this path must be highly available and often synchronized across cluster elements. This makes network load balancers themselves heavy consumers of the network. Accommodating the synchronization, mirroring, and network fail-over techniques in use by contemporary network load balancers is a requirement. The on demand, self service nature of a cloud service dictates the total automation of the deployment of load balancing devices. Understanding the network topology, adjacency, fail-over mechanisms, and network addressing requirements to deploy load balancing services becomes a key management plane concern. This includes both base system setup and per tenant provisioning of the load balancing service.

As of the Folsom release, Quantum supports two types of network integrations.

One is based on what are called provider networks. Provider networks create a mapping in Quantum's data model representing network elements which are not directly in Quantum's control. This allows traditional networking technologies, often hardware based, to be integrated with fully controlled Quantum network elements for provisioning. The example given in the Quantum Administrator's Guide is the notion of a provider router for Internet access, where multiple interfaces and VLANs on an external router would be provisioned and then mapped into Quantum's data model. The assumption made is that all the necessary provisioning of the networking elements, external VLANs, device interfaces, and policies will be done by the provider and referenced by Quantum. Certain deployments of network load balancers could be integrated in this model as well.

The second integration deployment is where the load balancer's networking is under the control of Quantum directly. The load balancer's network interfaces would be controlled just like any other Quantum controlled port. This would be the case for load balancing devices deployed as nova virtual machines.

As with all network engineering documents, this is an attempt to capture certain requirements for both topology design and provisioning information. In particular, this document attempts to capture what is needed to support a network based load balancing service integration. The community is diverse. Some of this information will be historical for some, but required for others. It is a work in progress and a starting point for conversation. As Quantum based technologies progress, and as new network based load balancing technologies are accepted into practice, this document will no doubt require updating.

Accepted Network Load Balancing Techniques

There are well established methods for providing network based load balancing. This section outlines the most commonly deployed methods and discusses relevant concerns for integration within a Quantum network.

For Quantum integration, the initial targets for consideration will be Stateful Connection Managers in both Routed Mode and One Arm Mode.

Stateful MAC Frame Forwarding – Transparent Bridging

MAC layer network addressing was designed to provide a logical means for a node to ingress and egress a particular network medium. Manipulation of MAC layer access is one means by which network load balancing can be accomplished.

To support network load balancing at the MAC layer, the network device becomes a bridge sitting between access devices with varying degrees of opacity. Often network discovery protocols must be filtered or proxied to provide an appropriate level of control without the device becoming detectable to the network itself. This means that the load balancing element must perform some services, like proxy ARP functionality, which would dictate that it be highly coupled with Quantum ports themselves.

MAC forwarding network load balancers typically rewrite the destination MAC address thus forcing the frames to be delivered to a particular adjacent node. At the networking layer, the load balancer can be transparent, which leads many to refer to MAC forwarding load balancing as transparent mode.

This technology was widely deployed before TCP/IP was ubiquitous at the networking layer. Since TCP/IP can be assumed in most Quantum networks, the discussion of stateful MAC forwarding can be diminished in the community.

It is often not desirable to enforce the network adjacency requirements of injecting a bridge adjacent to the network nodes themselves. For Quantum to support stateful MAC frame forwarding it would have to force MAC frames through a device. Likely this would be viewed as either a core service insertion technology that Quantum provides natively, or outside of normal Quantum device deployment.

At the MAC layer, some network load balancing technologies have taken advantage of multicast MAC forwarding in Ethernet as a way to inject traffic to multiple elements in a cluster. Each MAC frame is sent to every device in the cluster via MAC layer multicast flooding. This distribution of frames to each element allows each element, usually based on hashing, to determine which flows to process and which to discard. This was popular in software based deployments where the load balancing elements utilized standard operating system network stacks. Because multicast MAC forwarding typically reduced adjacent hardware Ethernet switching to control plane bound hub technologies, the leading proponents of multicast MAC forwarding for load distribution, Microsoft Corporation and others, have largely abandoned it. It does not work well in environments which expect hardware network elements to work at peak utilization. Multicast MAC flooding can be viewed as a difficult and complex integration for distributed network elements.
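The hash based ownership decision described above can be sketched in a few lines. This is only an illustration under stated assumptions: the function name `owns_flow`, the 5-tuple layout, and the choice of SHA-256 as the hash are all hypothetical and do not describe any particular vendor's implementation.

```python
import hashlib

def owns_flow(member_index: int, cluster_size: int, flow: tuple) -> bool:
    """Return True if this cluster member should process the flow.

    Every member receives every frame (multicast flooding), runs the same
    deterministic hash over the flow identifier, and keeps the frame only
    when the resulting bucket matches its own index.
    """
    key = "|".join(str(part) for part in flow).encode()
    digest = hashlib.sha256(key).digest()
    bucket = int.from_bytes(digest[:4], "big") % cluster_size
    return bucket == member_index

# Hypothetical flow: (client IP, client port, service IP, service port, protocol)
flow = ("203.0.113.7", 51512, "198.51.100.10", 80, "tcp")
owners = [i for i in range(3) if owns_flow(i, 3, flow)]
print(owners)  # exactly one member index is listed
```

Because every member computes the same hash, exactly one member accepts each flow and the others discard it, at the cost of every member receiving every frame.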

For stateful MAC frame forwarding of any form, a means to let Quantum provide the L2 adjacency and bridge insertion when a network node is added or removed from a service deployment would be required.

Stateful Flow Initiation and Direct Server Return

In light of the ubiquitous nature of IP layer networking, network load balancing took advantage of the routed network to provide a packet level load balancing technique where only the ingress traffic in a flow was controlled. This allowed for intelligent flow based load distribution without making the network load balancing element a bottleneck for the return traffic from the nodes providing the service. This method is often called direct server return (DSR) because the network node return traffic is routed directly to the client without being forced to traverse the load balancing element.

To make this work, the node hosting the service itself needs to function as a gateway for the flow traffic, either by having the MAC layer destination address rewritten, if it is network adjacent to the network load balancing element, or by accepting forwarded IP packets destined for a service specific IP address. In either case, a service specific IP address is often applied as an aliased address to the loopback adapter on each node hosting the service.
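A toy model may help show why the loopback alias matters in the MAC rewrite form of DSR. The `Frame` type and the function names below are hypothetical and exist only for illustration; the sketch assumes the load balancer changes nothing but the destination MAC address.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class Frame:
    dst_mac: str
    src_ip: str
    dst_ip: str

def dsr_forward(frame: Frame, node_mac: str) -> Frame:
    """Load balancer step: rewrite only the destination MAC.

    The destination IP remains the service specific address.
    """
    return replace(frame, dst_mac=node_mac)

def node_reply(frame: Frame, loopback_aliases: set, router_mac: str):
    """Node step: accept the frame only if the service address is aliased
    locally, then answer the client directly, sourced from that address."""
    if frame.dst_ip not in loopback_aliases:
        return None  # node does not own the service address; packet is dropped
    return Frame(dst_mac=router_mac, src_ip=frame.dst_ip, dst_ip=frame.src_ip)

inbound = Frame("02:00:00:00:00:01", "203.0.113.7", "198.51.100.10")
at_node = dsr_forward(inbound, "02:00:00:00:00:11")
reply = node_reply(at_node, {"198.51.100.10"}, "02:00:00:00:00:fe")
print(reply)
```

The reply leaves the node with the service address as its source and goes to the node's normal next hop, never passing back through the load balancing element.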

For DSR to function, either manual provisioning of the service specific address would need to be done on each node providing the service, or Quantum would need to be able to manipulate tenant node IP aliases whenever a service was provisioned. Likewise, deprovisioning of the service address is desirable when a service is disabled.

Route health injection can be used as a coarse grained, stateless form of DSR. Anycast network addressing, where many service endpoints assume the same IP address, has largely usurped the use of DSR network load balancers for stateless UDP services, like DNS. As routing elements become more flow aware at scale, DSR will become a function of policy routing. Currently, Quantum's involvement in policy routing is more security based, using security zones for filtering on source addresses, than a means of distributing traffic, but it could be expanded to include basic DSR services.

Because DSR is really a routing design, it is probably best left to a general discussion of dynamic routing and how guest node network stack updates will be done from Quantum itself.

Stateful Connection Managers

With the dominant use of UDP and TCP as the connection level protocols over IP, network load balancing started to take advantage of the layer 4 port information to distribute network load. The network load balancing element would assume the connection, at least from an IP network layer and UDP or TCP port layer addressing perspective, and then rewrite the destination IP address, and often the source and destination layer 4 port, before sending the traffic to a node providing a service.

A consequence of transport, or connection level, traffic management is the need to be completely stateful in IP packet routing. With the exception of algorithmic carrier grade NAT solutions, the same connection management device must receive both the inbound traffic to a virtual service endpoint and all the packets associated with the return traffic from the node providing the service. Asymmetric routing, where return traffic takes a path that bypasses the device, must not occur.
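The statefulness requirement can be illustrated with a minimal connection table. This is a sketch under stated assumptions, not a description of any real device: the `ConnectionManager` class, its round robin node selection, and the tuple based packet model are all hypothetical.

```python
from dataclasses import dataclass, field
from itertools import cycle

@dataclass
class ConnectionManager:
    vip: tuple    # (ip, port) of the virtual service endpoint
    nodes: list   # [(ip, port), ...] nodes providing the service
    table: dict = field(default_factory=dict)  # client -> chosen node

    def __post_init__(self):
        self._next_node = cycle(self.nodes)  # simple round robin selection

    def inbound(self, client: tuple) -> tuple:
        """Client to VIP packet: pick (or recall) a node, rewrite the destination."""
        if client not in self.table:
            self.table[client] = next(self._next_node)
        return (client, self.table[client])  # (source, rewritten destination)

    def outbound(self, node: tuple, client: tuple) -> tuple:
        """Node to client packet: restore the VIP as the source address."""
        if self.table.get(client) != node:
            raise LookupError("no state for this flow; was the inbound path asymmetric?")
        return (self.vip, client)

cm = ConnectionManager(("198.51.100.10", 80),
                       [("10.0.0.11", 8080), ("10.0.0.12", 8080)])
client = ("203.0.113.7", 51512)
print(cm.inbound(client))
print(cm.outbound(("10.0.0.11", 8080), client))
```

The `outbound` lookup only succeeds on the device that saw the inbound packet, which is why return traffic must be steered back through the same element.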

To guarantee that the network node return traffic is routed through the same network element which took the initial client packets, one of two solutions is utilized.

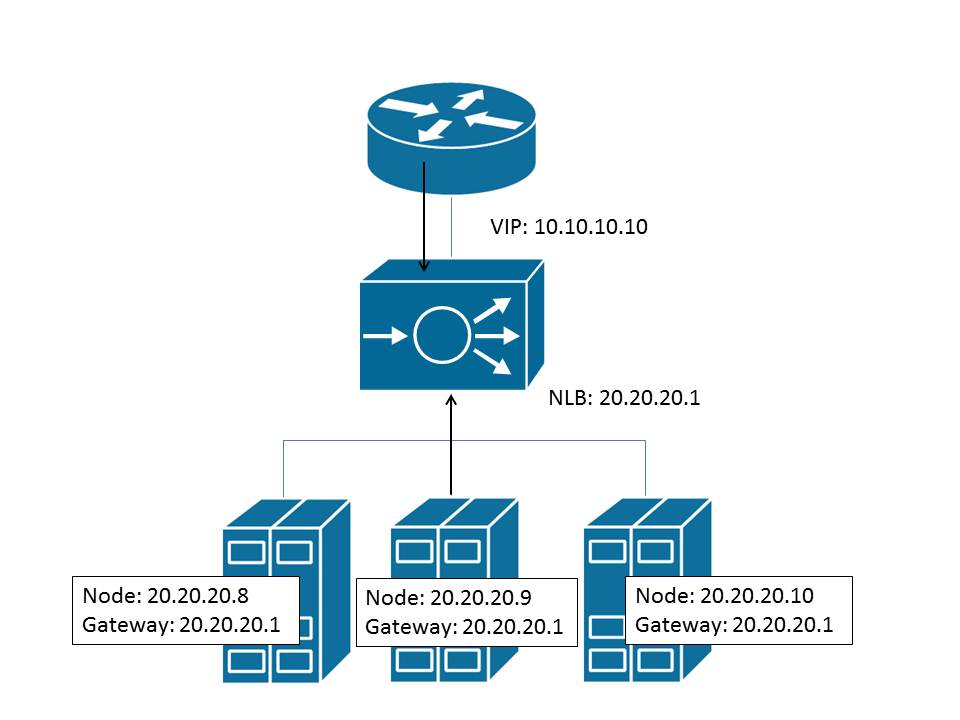

The first, often termed routed mode, requires that an IP address on the load balancing element act as the default gateway for the network nodes providing the service. In addition to the default gateway setting for the network node, no local subnet network service initiation can be performed which would bypass the network load balancing element. If this occurs, the tracking of load will be skewed, as this local access will not be accounted for. As the default gateway for all network traffic issuing from the network nodes, the load balancing element must be a highly available IP packet forwarder.

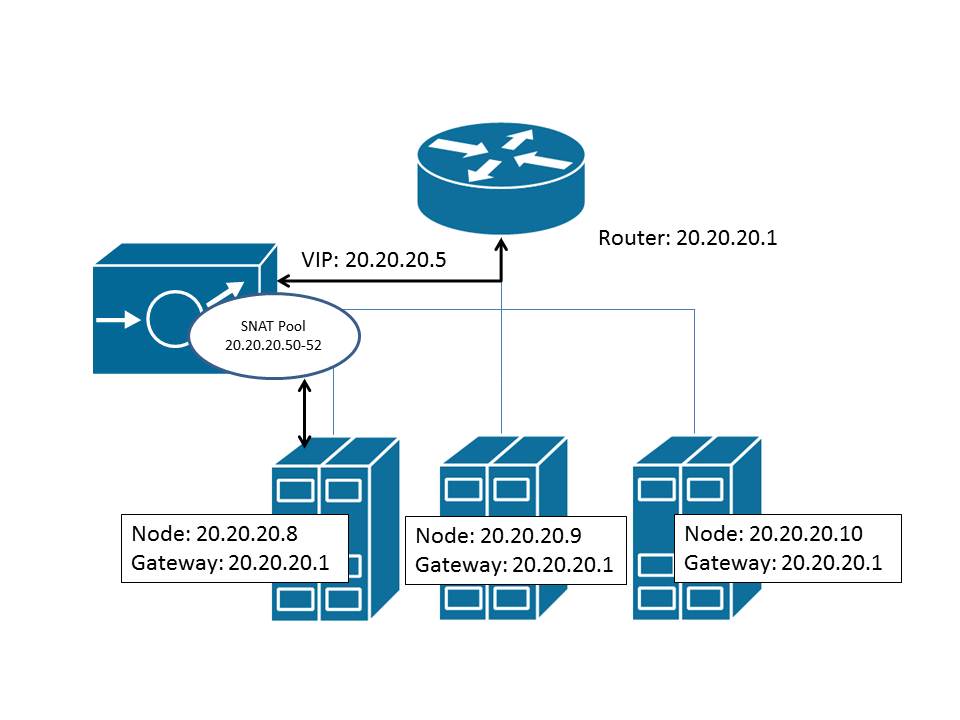

Alternatively, a second form of deployment, often termed one arm mode, utilizes source network address translation to force the route of the return traffic to the appropriate load balancing element. Source network address translation utilizes layer 4 source port multiplexing and specific source IP addresses to uniquely identify a network flow as belonging to a specific load balancing element. This is the same port based address translation used to provide Internet routing to multiple unroutable addresses within many homes. Source address translation overcomes the return path routing issues, as the return packets are sent to the translated address rather than the original client. Source address translation creates a need for the load balancing element to support multiple IP source addresses, usually in a pool, so that exhaustion of the multiplexed ephemeral layer 4 source ports does not limit the number of connections which can be taken for a service. In addition, source network address translation makes the traffic received by the node appear to originate from the translated IP address belonging to the load balancing element, rather than the originating client. This obscures the logging of client network addresses at the node itself, unless higher level protocol fields, like HTTP X-Forwarded-For headers, can be used to provide this information. Alternatively, the load balancing element often provides the logging for the service.
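The relationship between pool size and connection capacity can be made concrete with a small allocator. This is a sketch only: the `SnatPool` class is hypothetical, the default port range of 1024 to 65535 is an assumption (real devices choose their own ephemeral ranges), and the linear search is chosen for clarity rather than speed.

```python
class SnatPool:
    """Hands out (SNAT address, source port) pairs per destination node."""

    def __init__(self, addresses, port_range=(1024, 65535)):
        self.addresses = list(addresses)
        self.low, self.high = port_range
        self.in_use = set()  # (snat_ip, snat_port, node_ip, node_port)

    def capacity_per_node(self) -> int:
        """Concurrent connections possible toward one node address and port."""
        return len(self.addresses) * (self.high - self.low + 1)

    def allocate(self, node: tuple) -> tuple:
        """Return a free (snat_ip, snat_port) for a connection to `node`."""
        for ip in self.addresses:
            for port in range(self.low, self.high + 1):
                key = (ip, port, *node)
                if key not in self.in_use:
                    self.in_use.add(key)
                    return ip, port
        raise RuntimeError("SNAT pool exhausted for node %s:%s" % node)

print(SnatPool(["10.0.0.5"]).capacity_per_node())              # 64512
print(SnatPool(["10.0.0.5", "10.0.0.6"]).capacity_per_node())  # 129024
```

Under these assumptions each additional pool address adds another full port range of capacity toward any single node, which is the reason pools are preferred over a single translation address.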

As mentioned, connection managers are often used not just for load balancing, but as dedicated IP network address translators. Network address translation is used as a multitenant network address space overloading scheme, a security mechanism, and as a means to conserve network address space by using unroutable addresses. The Quantum L3 Routing and NAT network node documented in the Quantum Administrator's Guide is such a device. While the aim of that extension was to support the replacement of nova's network service, it is a connection manager nonetheless, as it tracks state at the connection layer. Basic connection based load balancing is a natural addition to this Quantum network integration. [A brief note on terminology: in the Quantum Administrator's Guide, destination network addresses, which are often termed the VIP or virtual service in a load balancer, are referred to as floating IP addresses. For the load balancing industry, a floating IP address is typically an IP address for which the active load balancing element answers ARP requests and which can migrate between HA elements. It provides IP mobility. The two uses of the term should not be confused. See the section on HA requirements for more information regarding IP mobility addresses.]

For Quantum to support a provider network load balancing service in routed mode, it must support allocation of IP network addresses for virtual service endpoints and addresses from a different network subnet for the nodes supplying the service. In addition, the network load balancing element must be given the default gateway address for the nodes providing the service. This requires that the network load balancing element be adjacent to the network nodes at the MAC layer. For a Quantum controlled integration in routed mode, the load balancing element need only be provisioned on the same tenant network as the nodes themselves to support the network adjacency requirement. As with the L3 Routing and NAT extension in Quantum today, assumption of the default gateway IP address will mean changing the typical DHCP settings to use the appropriate load balancing device.
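The routed mode addressing constraints above lend themselves to a simple pre-provisioning check. The function name `check_routed_mode` and its parameters are hypothetical; the sketch uses only Python's standard `ipaddress` module and encodes just the rules stated in this section.

```python
import ipaddress

def check_routed_mode(vip, node_subnet, gateway, nodes):
    """Return a list of problems with a proposed routed mode layout."""
    problems = []
    subnet = ipaddress.ip_network(node_subnet)
    # The virtual service endpoint comes from a different subnet than the nodes.
    if ipaddress.ip_address(vip) in subnet:
        problems.append("VIP %s is inside the node subnet %s" % (vip, subnet))
    # The gateway address held by the load balancer must be adjacent to the nodes.
    if ipaddress.ip_address(gateway) not in subnet:
        problems.append("gateway %s is not in the node subnet %s" % (gateway, subnet))
    for node in nodes:
        if ipaddress.ip_address(node) not in subnet:
            problems.append("node %s is not in the node subnet %s" % (node, subnet))
    return problems

print(check_routed_mode("198.51.100.10", "10.0.0.0/24", "10.0.0.1",
                        ["10.0.0.11", "10.0.0.12"]))  # []
```

An empty list means the layout satisfies the stated constraints; it says nothing about whether the DHCP settings actually hand out the load balancer's address as the default gateway.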

For Quantum to support a provider network load balancing service in one arm mode, it must support allocation of IP network addresses for the virtual service endpoints and support one or multiple IP addresses to be used for the source network address translations. The addresses used for the virtual service endpoint and the source network address translations must be routable from the nodes supplying the service.

Stateful Proxies

To offload more and more of the application deployment complexities to the load balancing device, various higher level proxies became requirements. For TCP WAN optimizations, dual TCP stack full L4 proxies became desirable. For client side SSL offload, session and presentation layer proxies were required. To support higher level message based load balancing, full application level proxies became necessary. Load balancing devices became content caching proxies, security gateways, identity context enforcement points, and message transformation engines. In addition to this level of on-the-wire 'middleware', data plane integrated business logic provided advanced traffic steering, security, and content rewriting. This level of application stream control became the mainstay for ISV enterprise software deployments.

Use of higher level proxies has the same MAC, network, and transport level dependencies as the connection management gateways. They too are deployed in both routed and one arm modes. In addition to stateful connection management requirements, stateful proxies usually require additional connectivity to external services. This often includes the presence of Internet connectivity for data and signature updates, reputation or identity service querying, and call home alerting. Full proxy deployments become dependent on provider based AAA systems, NTP, DNS, and a host of other systems which are considered infrastructure for modern enterprise applications.

The network dependencies to integrate a stateful proxy network load balancer into a Quantum network are the same as for the stateful connection management gateways, with the exception of additional interfaces for dependent services. As the additional services are probably in the domain of future OpenStack integrated services, a mode of operation where the load balancing gateway is fully connected to each of the networks illustrated in the Quantum Administrator's Guide, including the provider management network, the shared public network, and the virtual networks for tenants, is likely a requirement for all full proxy deployments.

Highly Available Load Balancing Clusters

By the nature of their task, load balancing devices use the network for high availability and scaling of both tenant data traffic as well as their own management and control planes. The integrated Quantum network will need to support the high availability and scaling techniques used by load balancing devices and clusters. This section will discuss the high availability and clustering techniques most often used by load balancing devices.

Additional Network Interfaces

Load balancing clusters require additional network interfaces for control plane traffic. Best practice deployments of IP unicast or multicast communications for heartbeats, stateful mirroring, provisioning synchronization, and security traffic dictate dedicated networking for these functions. This is done to separate this highly sensitive cluster traffic from the data plane traffic being load balanced. In the Quantum Administrator's Guide, a separate management network is set up to allow inter-service provisioning information to be exchanged. While this may well be sufficient for security and provisioning data, it will likely still be desirable to separate heartbeats and stateful mirroring traffic onto dedicated network interfaces.

For management plane traffic, it is also common to see a floating HA interface being used to lessen the need for multi-master replication between device configurations. This means yet more network addressing for each of the cluster members. It is often a requirement that this HA management traffic not be addressed on the same subnet as device specific, non-HA, management traffic.

For the data plane traffic, best practice deployments suggest that each layer of the network stack be redundant and scalable. Below is a consideration of both MAC and networking layer redundancy and high availability techniques commonly used with network load balancing devices.

MAC Layer Redundancy and High Availability

Starting with Ethernet link redundancy and scaling, link aggregation is a best practice feature for physical network load balancing devices. As this is a localized mechanism, it is likely outside of the realm of the Quantum network, but needs to be considered in the device setup of a load balancer device.

For Quantum controlled virtualized load balancing devices, the link level redundancy functionality is usually found in the hypervisor's networking stack and uplink interfaces. Typically at least network interface teaming is supplied, if not full link aggregation control. For advanced integrations where virtual machines are allowed to communicate directly with hardware networking contexts, like SR-IOV deployments, some form of link aggregation support will be required. This would affect the bare metal setup of the servers and their network interfaces themselves.

Between the MAC and networking layers, HA clustering typically supports the notion of MAC address mobility. This includes the ability to notify the network when a MAC address has moved from one cluster member to another (typically seen as a port). MAC mobility is used to provide virtual service mobility within the cluster and service takeover in the event of a failure. MAC addressing is typically provided out of a locally administered MAC address space and generated for each cluster grouping requiring such mobility. There can be multiple such locally administered addresses per MAC broadcast domain. Typically, notification comes in the form of gratuitous ARP in IPv4 and the proper ICMPv6 notifications in IPv6.
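As an illustration of what "locally administered" means in practice, the following sketch generates a random unicast MAC address with the locally administered bit set. The function name is hypothetical, and actual devices use their own generation schemes; only the bit layout of the first octet is standard.

```python
import random

def locally_administered_mac(rng=random) -> str:
    """Generate a random unicast, locally administered MAC address.

    In the first octet, bit 0x02 marks the address as locally administered
    and bit 0x01 (the multicast bit) must be clear for a unicast address.
    """
    first = (rng.randrange(256) | 0x02) & 0xFE
    rest = [rng.randrange(256) for _ in range(5)]
    return ":".join("%02x" % octet for octet in [first, *rest])

print(locally_administered_mac())
```

A Quantum controlled integration would need some way to learn or accept such generated addresses on the ports assigned to the cluster.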

In a Quantum provider network, MAC mobility is handled between the load balancing device and the local networking device to which it is attached. Quantum would not be aware of the process.

In a Quantum controlled network, many different ports will need to be assigned to a load balancer, up to one per mobile MAC address in the cluster. Load balancing devices generate MAC addresses for this mobility functionality. Quantum will need to understand these MAC addresses and make sure network connectivity works appropriately for MAC mobility. It bears mentioning that reverse ARP techniques (RARP) are no longer sufficient, as hardware networking devices require the update of MAC, IP, and port information to guarantee port level failover. Load balancing devices have abandoned RARP and use gratuitous ARP (GARP). In a Quantum controlled network, understanding of port forwarding of frames, MAC mobility, and appropriate GARP response will be expected in order to deploy MAC mobility in the network load balancer.
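For readers unfamiliar with the wire format, the sketch below builds the bytes of a gratuitous ARP announcement for a mobile address. It uses the request form of GARP (operation 1, sender and target IP both set to the announced address); some devices send the reply form instead. The function names and the example MAC and IP values are hypothetical, and the sketch only constructs the frame rather than sending it.

```python
import socket
import struct

BROADCAST = b"\xff" * 6

def mac_to_bytes(mac: str) -> bytes:
    return bytes.fromhex(mac.replace(":", ""))

def build_garp_request(mac: str, ip: str) -> bytes:
    """Build an Ethernet frame carrying a gratuitous ARP request."""
    sender_mac = mac_to_bytes(mac)
    sender_ip = socket.inet_aton(ip)
    # Ethernet header: broadcast destination, source MAC, EtherType 0x0806 (ARP)
    ethernet = struct.pack("!6s6sH", BROADCAST, sender_mac, 0x0806)
    arp = struct.pack(
        "!HHBBH6s4s6s4s",
        1,            # hardware type: Ethernet
        0x0800,       # protocol type: IPv4
        6, 4,         # hardware and protocol address lengths
        1,            # operation: request
        sender_mac, sender_ip,
        b"\x00" * 6,  # target hardware address is unused in a GARP request
        sender_ip,    # target IP equals sender IP, which makes it gratuitous
    )
    return ethernet + arp

frame = build_garp_request("02:00:5e:10:00:01", "10.0.0.1")
print(len(frame), frame.hex())
```

Switches that see this broadcast relearn the port for the source MAC, and hosts that see it update their ARP cache entry for the address, which together provide the MAC, IP, and port update the failover depends on.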

Network Layer High Availability

At the network layer, clustering typically involves the use of floating, or mobile, IP addresses. Each cluster member typically has a stationary address in each IP subnet used for local forwarding, and then the cluster will have several addresses which are mobile across the cluster itself. These mobile addresses can be used for a highly available IP forwarding gateway address, source network address translation pooling, monitor probing addresses, and of course as the endpoint for a virtual service which terminates a client network connection.

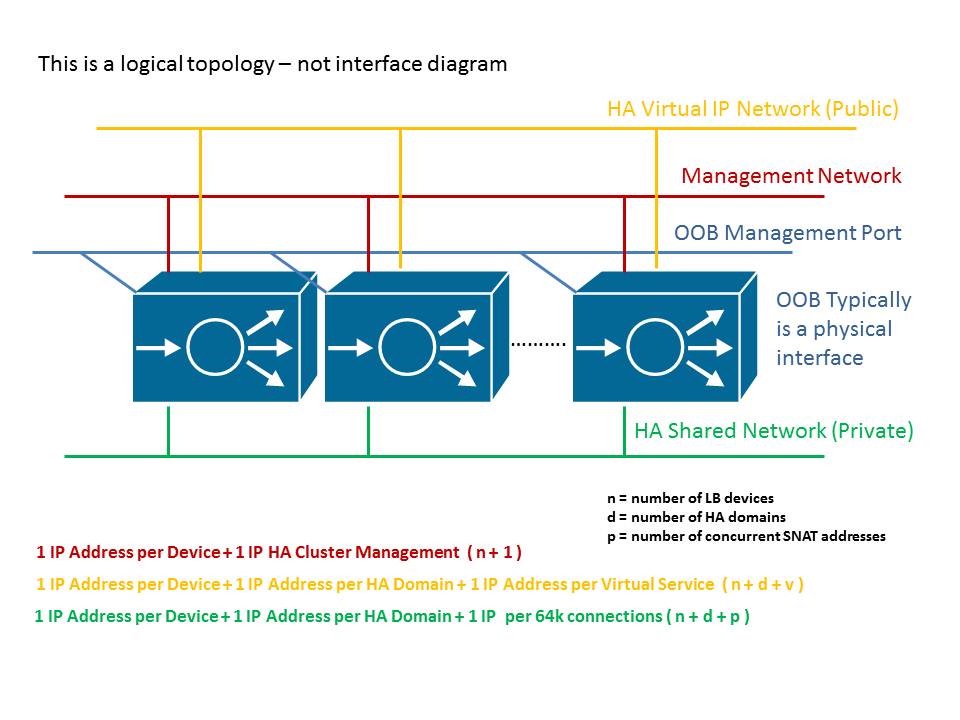

As Quantum's core interaction with the network layer is currently only to function as the address management solution, the primary concern for either a Quantum provider network or a Quantum controlled integration is the acquiring of the necessary IP addresses for the load balancing devices and cluster.

Device setup for each logical network, as well as the provisioning of tenant virtual services, will involve an interaction with Quantum to supply the needed IP addresses. Some device clusters require contiguous addressing; however, this is no longer very common. If contiguous addressing in a subnet is required, the number of network addresses needed will have to be known before the load balancing devices are deployed, and Quantum consulted for the appropriate addresses.
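Where contiguous addressing is required, the search for a suitable block might look like the following sketch. It is not Quantum's allocation logic: the function `find_contiguous` is hypothetical, and the list of already allocated addresses is assumed to be supplied by the caller.

```python
import ipaddress

def find_contiguous(cidr, allocated, count):
    """Return the first run of `count` consecutive free host addresses, or None."""
    used = {ipaddress.ip_address(a) for a in allocated}
    run = []
    for host in ipaddress.ip_network(cidr).hosts():
        if host in used:
            run = []  # an allocated address breaks the run
            continue
        run.append(host)
        if len(run) == count:
            return [str(h) for h in run]
    return None

print(find_contiguous("10.0.0.0/28", ["10.0.0.1", "10.0.0.3"], 3))
# ['10.0.0.4', '10.0.0.5', '10.0.0.6']
```

A `None` result before deployment signals that the subnet cannot satisfy the cluster's contiguous requirement as currently allocated.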

In a routing mode load balancing deployment, the presence of a floating IP address as the default gateway for the service nodes becomes a critical networking component as it is the only means for the nodes to communicate beyond their own local networks. This means this IP address must be highly available. So the mechanisms for both MAC and network level mobility must be supported.

Network layer redundancy may also include the use of dynamic routing updates between network elements in the Quantum network. Anycast addressing, route health injection (RHI), as well as the advertising of routes behind load balancing devices are all common. While Quantum networks do not currently consider dynamic routing to be under their control, any additional extensions in this area must consider the use of dynamic routing updates as a form of high availability for service clusters.

Quantum Network Load Balancing Connectivity Requirements

While reduced requirements may be achievable by removing redundancy or HA functionality from the solution, what is proposed is a requirement set that would allow for a variety of load balancing solutions to integrate at the MAC and network layers for their management, control, and data plane traffic.

The deployment itself is matched to the networking design described in the Quantum Administrator's Guide. This includes the following notions:

- Management Network for inter-service provisioning communications

- Shared Network for provided networking which can be common to one or many tenants

- Data Network containing all traffic to tenant nodes providing a service

Any additional networking requirements for load balancing devices will be assumed as deployed from the data networking topologies. They may not be utilized for tenant data traffic, but will be supported out of that topology space according to Quantum's understanding.

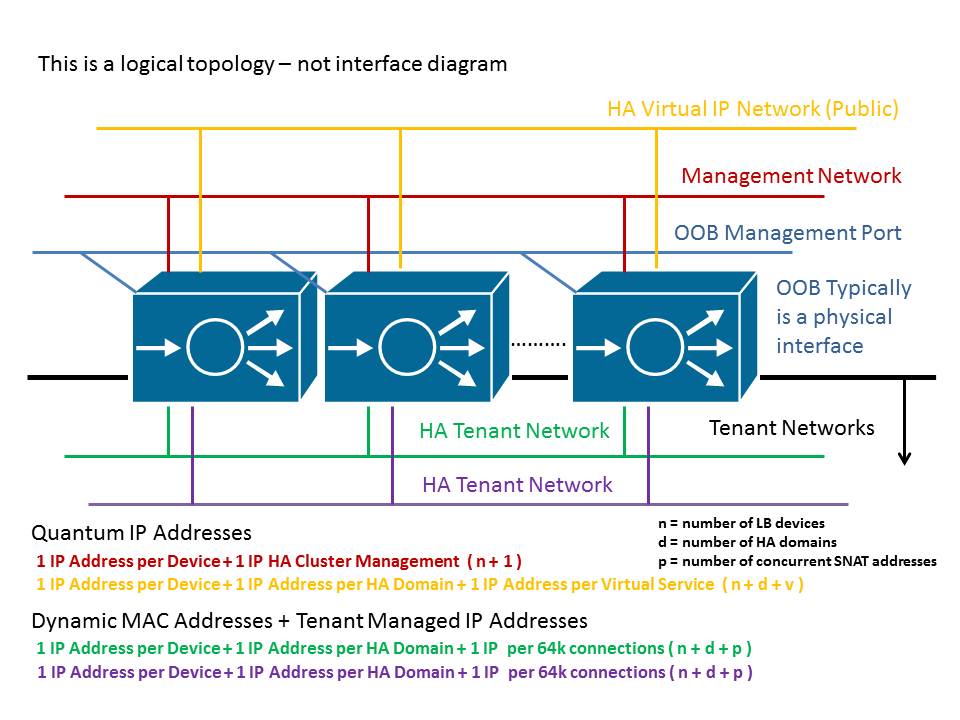

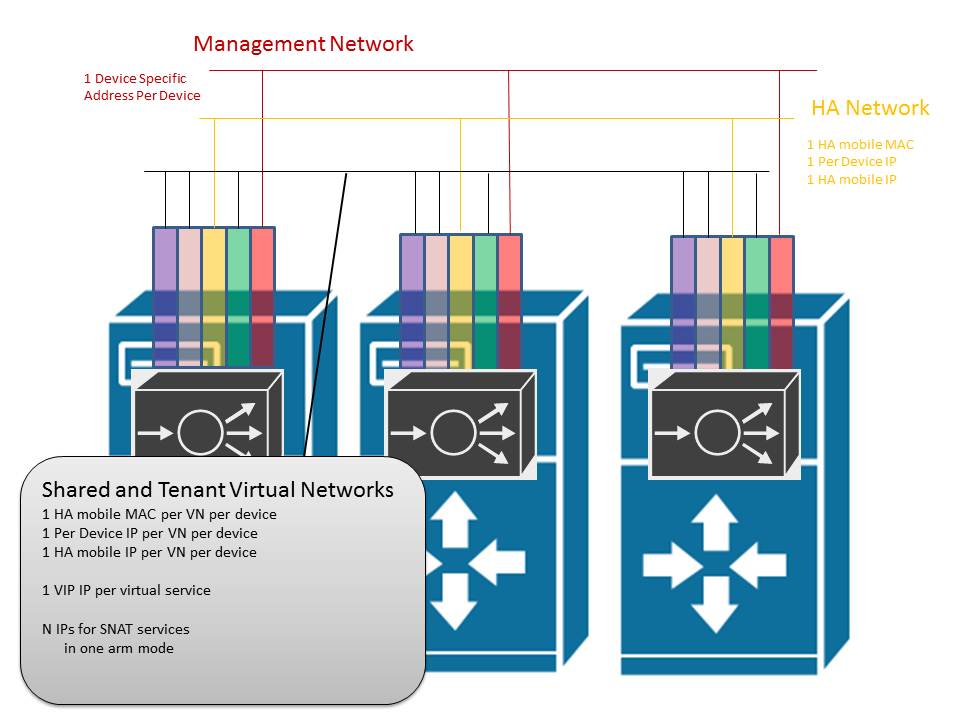

Load Balancing Device Provisioning Requirements

Each load balancing device will require a network interface and an IP address from the management network. This will be used for the automation of processes distinct to the load balancing device. Each load balancing device will likely require provisioning of network dependent management services like DNS, NTP, AAA services, and routing protocols.

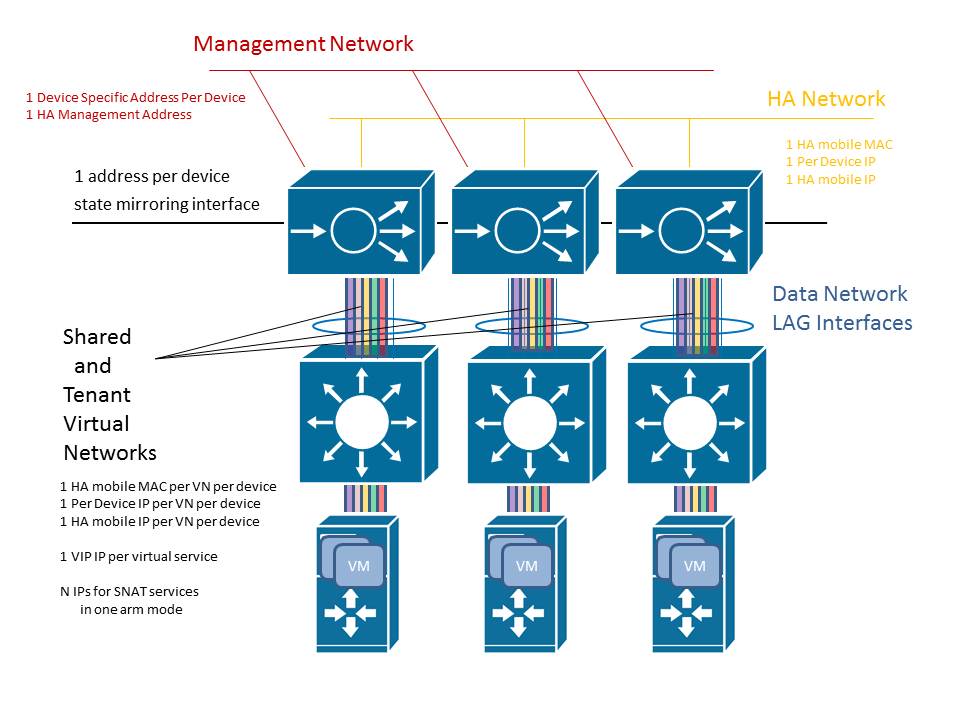

Each load balancing device will require a separate network interface and IP address for the use of inter-cluster heartbeats, state mirroring, and configuration synchronization. This should be a separate network provisioned from the data network space. In addition, devices may require the use of IP layer multicast between these interfaces. Appropriate multicast addressing may also need to be provisioned, and possibly managed by Quantum. In addition to per device addressing, one or more floating, or mobile, IP addresses will need to be assigned to the cluster. These addresses provide master HA endpoints for provisioning the load balancing device cluster.

Each load balancer will require one or more interfaces with appropriate aggregation at the MAC layer to support tenant data networking. This should be an interface provisioned from the data network space.

In the Quantum Administrator's Guide, shared networks are defined separately because of the difference in provisioning of addresses and interfaces.

Each shared network will require a VLAN, tunnel, or whatever MAC virtualization is supported per device.

For each shared network, the load balancing cluster will require a generated MAC address for each network redundancy group. This is the MAC address used in the GARP or ICMPv6 process for MAC mobility.

Each shared network will require an IP address allocated to each load balancing device in the shared subnet.

Each shared network will require an IP address per network redundancy group for the load balancing cluster.

Per Tenant Provisioning Requirements

For each tenant defined virtual network, each load balancing device will require the setup of the virtual network at the MAC layer. This can be a VLAN, a tunnel, or whatever MAC layer virtualization is supported in the load balancing device.

For each tenant defined virtual network, the load balancing cluster will require a generated MAC address for each network redundancy group. This is the MAC address used in the GARP or ICMPv6 process for MAC mobility.

For each tenant defined virtual network, each load balancing device will require an IP address from the tenant IP subnet address space.

For each tenant defined virtual network, the load balancing cluster will require an IP address per network redundancy group. This is the address used to support the use of the cluster as a highly available forwarding gateway. This address too comes from the tenant subnet address space.

For each tenant defined virtual network, the load balancer cluster will require an address per virtual service endpoint used to terminate client connections on that virtual network. This is the VIP or virtual service address.
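The per tenant requirements above can be tallied mechanically when sizing a tenant subnet. This is a rough sketch: the function and its dictionary keys are hypothetical names, and it counts only the items listed in this section.

```python
def tenant_network_requirements(devices, redundancy_groups, virtual_services):
    """Tally what one tenant virtual network needs from Quantum."""
    return {
        "l2_segments_per_device": 1,           # VLAN, tunnel, or similar
        "generated_macs": redundancy_groups,   # one mobile MAC per redundancy group
        "device_ips": devices,                 # one stationary address per device
        "gateway_ips": redundancy_groups,      # HA forwarding gateway addresses
        "vip_ips": virtual_services,           # one address per virtual service
        "total_ips": devices + redundancy_groups + virtual_services,
    }

# Hypothetical two device cluster, one redundancy group, three virtual services
print(tenant_network_requirements(2, 1, 3)["total_ips"])  # 6
```

Source address translation pools and monitor probing addresses, where used, would add to this total.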

Quantum Provider Network Load Balancer Connectivity Requirements

Utilizing a load balancing device, physical or virtual, in a provider network integration involves deploying devices whose connectivity to the network is not through fully managed Quantum ports. This does not mean they will not require Quantum logical networking provisioning to make the service available to OpenStack tenant virtual machines.

Supporting load balancing device clusters will mean supporting a management address for each device and an HA management address per cluster. Likely this would be the same management network used by Quantum and other OpenStack services. Additionally, if session mirroring is required, the device vendor best practice might dictate the creation of a secondary management network for the transport of network state between devices. This means an additional separate management network in its own layer 2 domain.

Virtual services defined on shared networks will require an interface per device, one floating address per HA domain for routing, and one floating address per defined virtual service. This segment must support dynamic MAC learning and the use of dynamically generated MAC addresses for device failover.

Lastly, the device must have connectivity to the OpenStack tenant virtual machines themselves. This could be as simple as having their address space be routable to the load balancing device, or it could be as complex as having the load balancing device require separate L2 adjacent networks defined per tenant. These network interfaces too will require dynamic MAC learning and the use of dynamically generated MAC addresses for failover of load balancing addresses used as layer three forwarding gateways for the tenant virtual machines.

Quantum Controlled Network Load Balancer Connectivity Requirements

If the load balancing devices themselves are under the control of Quantum, likely as virtual machines today, their network connectivity will be expressed as Quantum ports. This does not mean that any of the requirements for the load balancing devices considered above have been removed, only that they will be managed by Quantum. The requirements for device management connectivity, the use of shared networks for virtual services (VIPs), HA capabilities on shared or tenant networks, and state mirroring still exist.

Any requirements to support dynamic MAC addresses, or to follow failover via GARP, need to be considered and supported in order for device cluster functionality to continue to work. In addition, other guest virtual machine requirements, such as the need to run certain load balancing devices on different hypervisors or quality of service performance requirements for synchronization and state mirroring interfaces, would require deeper integration into OpenStack services like Nova to assure the load balancing device needs are met.

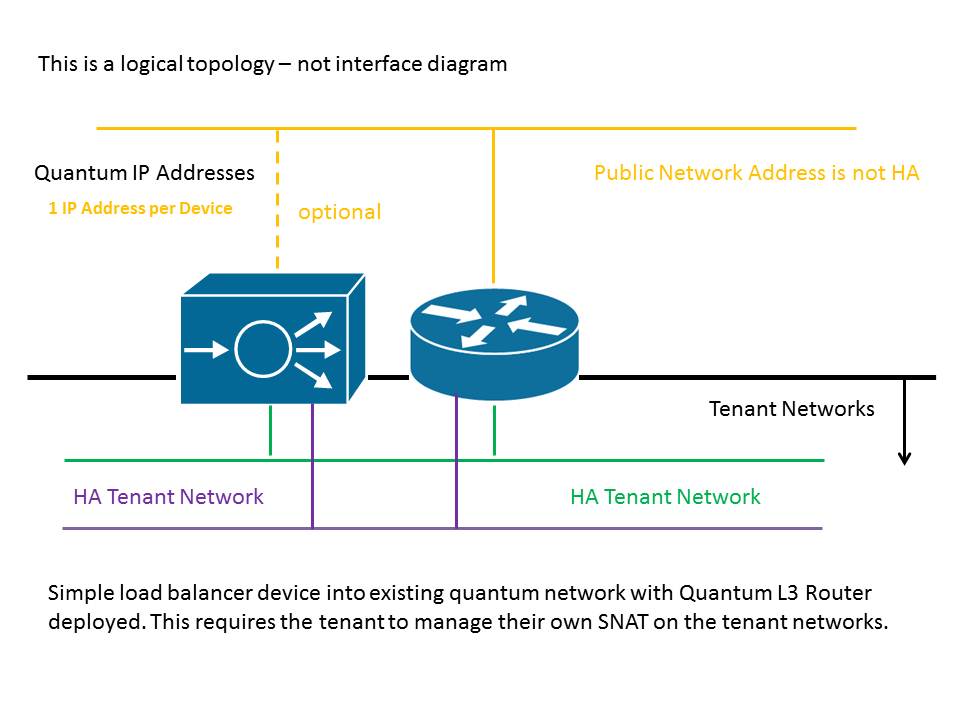

One-Arm Provider Networks

[Text about requirement for provider integration goes here]

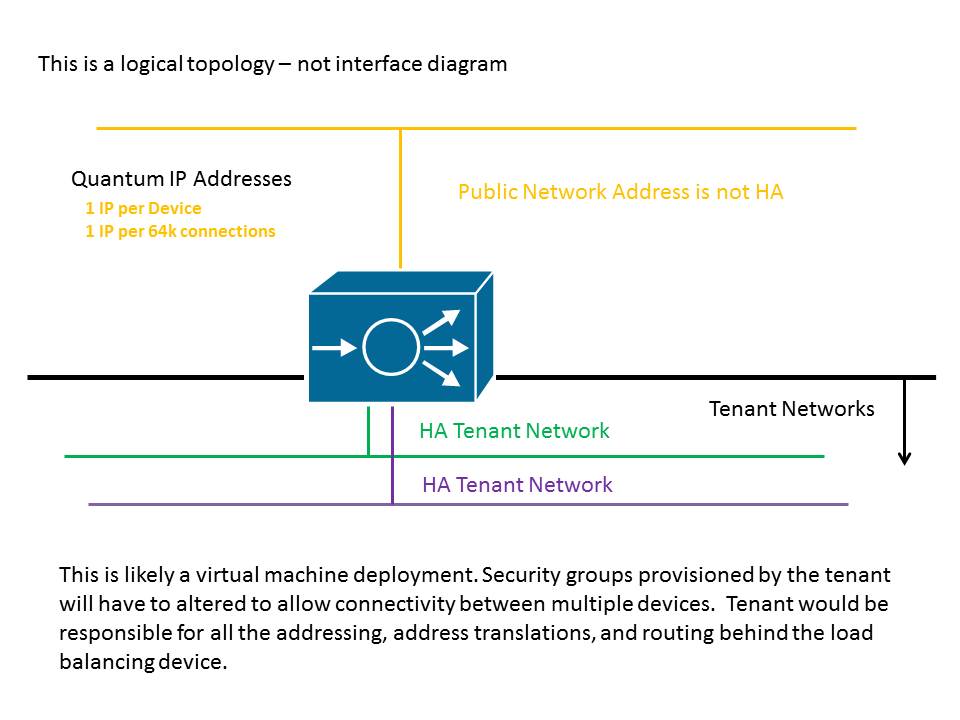

One-Arm Quantum Controlled

[Text about requirement for Quantum controlled integration goes here]

One-Arm Provider Networks with Quantum Controlled Tenant Networks

[Insert text]

Deploying A Single-Tenant Service Load Balancer

One-Arm Mode

[Insert Text]

Deploying Single-Tenant Devices as the Gateway for Quantum Tenant Networks

Routed Mode

[Insert Text]

One-Arm Mode

[Insert Text]