MultiClusterZones

- Launchpad Entry: NovaSpec:multi-cluster-in-a-region

- Created:

- Contributors: SandyWalsh

Summary

Zones are logical groupings of Nova Services and VM Hosts. Not all Zones need to contain Hosts; they may also contain other Zones to permit more manageable organizational structures. Our vision for Zones also allows for multiple root nodes (top-level Zones) so business units can partition the hosts in different ways for different purposes (e.g. geographical zones vs. functional zones).

This proposal will outline our understanding of the issues surrounding Multi-Cluster and discuss some implementation ideas.

Discussion

Please direct all feedback / discussion to the mailing list or the following Etherpad: http://etherpad.openstack.org/multiclusterdiscussion

I will maintain this page to reflect the feedback. -SandyWalsh

See Also

Older notes: http://etherpad.openstack.org/multicluster and http://etherpad.openstack.org/multicluster2

Release Note

todo

Rationale

In order to scale Nova to 1 million host machines and 60 million guest instances, we need a scheme to divide and conquer the effort.

Assumptions

Design

Let's have a look at how Nova hangs together currently.

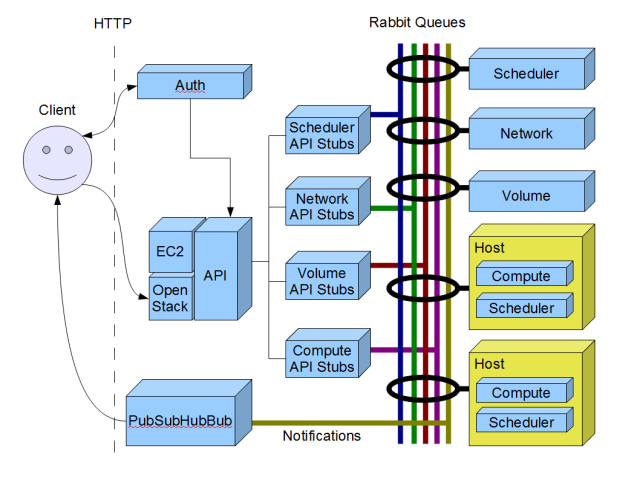

There is a collection of Nova services that communicate with each other via AMQP (currently RabbitMQ). Each service has its own Queue for incoming messages. As a convenience, there is also a set of Service API stubs which handle the marshaling of commands onto these queues. There is one Service API per Service. The outside world communicates with Nova via one of the public-facing APIs (currently EC2 and Rackspace/OpenStack over HTTP). Before a client can talk to the public-facing API it must authenticate against the Nova Auth Service. Once authenticated, the Auth Service will tell the Client which API Service to use. This means that we can stand up many API Services and delegate the caller to the most appropriate one. The API service does very little processing of the request. Instead, it uses the Service Stub to put the message on the appropriate Queue and the related Service handles the request.

There are currently about a half-dozen Nova Services in use. These include:

- API Service - as described above

- Scheduler Service - the first stop for events from the API. The Scheduler Service is responsible for routing the request to the appropriate service. The current implementation doesn't do much. This Service will likely be affected the most by this proposal.

- Network Service - For handling Nova Networking issues.

- Volume Service - For handling Nova Disk Volume issues.

- Compute Service - For talking to the underlying hypervisor and controlling the guest instances.

and then there are the other services like Glance, Swift, etc.

This flow is shown in illustration below. Note that I have shown a proposed notification scheme at the bottom, but this currently isn't in Nova.

This architecture works fine for our existing deployments. But as we scale up, it will degrade in performance until it is unusable. Likewise it may fail due to hard limitations such as the number of network devices that are available on a subnet. We need to find a way to partition our hosts so that larger deployments are possible.

One method for doing so is by supporting "Zones".

Zones are logical groupings of Nova Services. Zones can contain other Zones (thus the Nested aspect of this proposal). As opposed to a conventional tree structure Zones may have multiple root nodes. If we only permitted a single root node, only one organizational scheme might be used. But different business groups may need to view the collection of Hosts from different angles. Operations may want to see the Hosts based on capabilities but end-users, sales or marketing may want to have them organized by Geography. Geography is the most common organizational scheme.

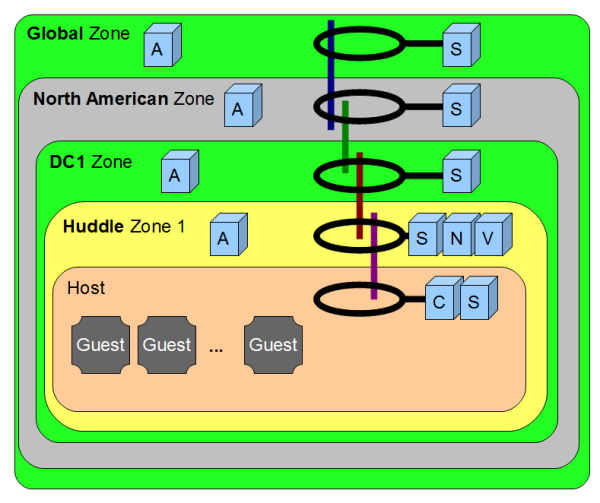

Within each Zone we may stand up a collection/subset of Nova Services and delegate commands between zones. Zones will communicate to each other via the AMQP network.

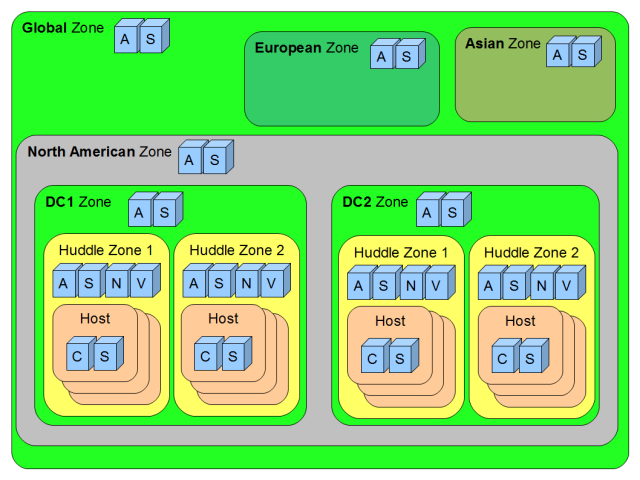

A sample Nested Zone deployment might look something like this:

(A = API Service, S = Scheduler Service, N = Network Service, V = Volume Service, etc.)

As you can see there is a single top-level Zone called the "Global" Zone. The Global Zone contains the North American, European and Asian Zones. Drilling into the North American Zone we see two Data Center (DC) Zones #1 & #2. Each DC has two Huddle Zones (to steal the Rackspace parlance) where the actual Host servers live. A Huddle Zone is limited in size to 200 Hosts due to networking restrictions.

Certainly the largest zones will be the DC Zones, which hold a large collection of Huddle Zones. We can assume, in a service provider deployment, that a DC Zone may contain 200 or more Huddle Zones. Assuming about 50-60 Guest instances per Host, a single DC could be responsible for as many as 200 Huddle Zones/DC * 200 Hosts/Huddle Zone * ~50 Guests/Host = ~2 million Guests/DC.
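The sizing estimate above can be checked with a few lines of arithmetic. This is only a back-of-the-envelope sketch: the per-Zone figures are the ones quoted in this section, the overall targets come from the Rationale, and the "DC Zones needed" numbers are derived here rather than stated anywhere in the proposal.

```python
# Figures quoted in the Design section.
HUDDLE_ZONES_PER_DC = 200
HOSTS_PER_HUDDLE_ZONE = 200
GUESTS_PER_HOST = 50  # low end of the 50-60 range

# Targets quoted in the Rationale section.
TARGET_HOSTS = 1_000_000
TARGET_GUESTS = 60_000_000

hosts_per_dc = HUDDLE_ZONES_PER_DC * HOSTS_PER_HUDDLE_ZONE
guests_per_dc = hosts_per_dc * GUESTS_PER_HOST


def ceil_div(a, b):
    """Integer division rounded up."""
    return -(-a // b)


# Derived, not from the proposal: DC Zones needed to reach each target.
dcs_for_hosts = ceil_div(TARGET_HOSTS, hosts_per_dc)
dcs_for_guests = ceil_div(TARGET_GUESTS, guests_per_dc)

print(f"hosts per DC:  {hosts_per_dc:,}")
print(f"guests per DC: {guests_per_dc:,}")
print(f"DC Zones for host target:  {dcs_for_hosts}")
print(f"DC Zones for guest target: {dcs_for_guests}")
```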

Our intention is to keep Hosts separated from a Zone's decision making responsibilities until the very last moment, thus keeping the working set as small as possible.

Inter-Zone Communication and Routing

As mentioned previously, AMQP is used for services to communicate with each other. Also, we mentioned that the Scheduler Service is used for routing requests between Services. The strategy for Multi-Cluster is for the Scheduler Service to route calls between Zones before handing the request off to its ultimate destination.

It was initially intended that each Zone would have its own AMQP Queue for receiving messages. There will be a Scheduler deployed in each Zone that can listen for requests coming from the parent Zone. This implied we needed to deploy our AMQP network so that only the inter-zone queues are replicated in the AMQP Cluster and not every queue in the network.

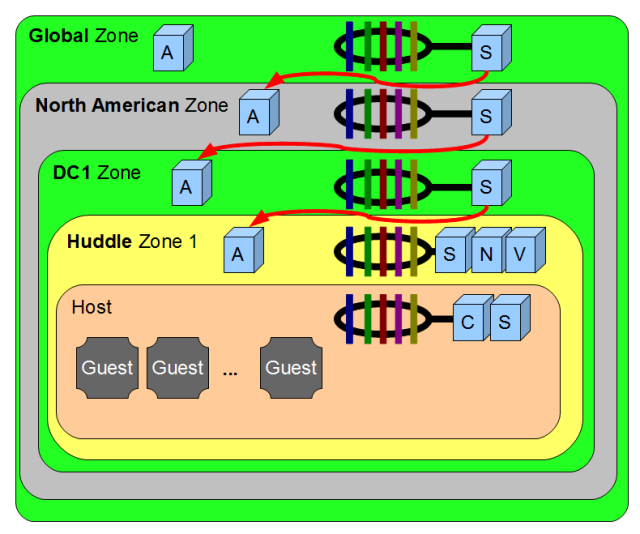

It turns out that this is not really a feasible approach. Configuring RabbitMQ to span a WAN does work, and the numbers we got from our DFW/ORD tests weren't that bad. But we did run into some quirky problems with Celery which only appeared in the WAN setup. Also, RabbitMQ does not support Cluster-to-Cluster communication without another tool called Shovel. While Shovel appears to be used in large production situations, the development effort around the project seems light, and depending solely on it could be a risk. The combination of factors suggests we might also want to consider performing Inter-Zone communication via our already existing API.

It's clunky, but we get nice isolation between Zones.

My proposal is to put the proper abstractions in place so we can use either approach, with a focus on the public API approach initially. Hopefully, someone will have more time down the road to dig into the Shovel route.
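One way to picture "the proper abstractions" is a small interface that hides whether a request travels over the public API or over a queue. The sketch below is purely illustrative: `ZoneChannel`, `PublicApiChannel`, `QueueChannel`, the `zone.<name>` queue naming and the example URL are all hypothetical names invented here, and the actual HTTP and AMQP calls are injected as plain callables so that nothing is assumed about Nova's or RabbitMQ's real interfaces.

```python
from abc import ABC, abstractmethod


class ZoneChannel(ABC):
    """Hypothetical interface a Scheduler could use to reach a child Zone."""

    @abstractmethod
    def send(self, zone_name, method, args):
        """Deliver a request to the named child Zone and return the reply."""


class PublicApiChannel(ZoneChannel):
    """Forwards the request through the child Zone's public HTTP API."""

    def __init__(self, api_urls, http_post):
        self.api_urls = api_urls    # zone name -> base URL of its API Service
        self.http_post = http_post  # injected callable(url, body) -> reply

    def send(self, zone_name, method, args):
        url = f"{self.api_urls[zone_name].rstrip('/')}/{method}"
        return self.http_post(url, args)


class QueueChannel(ZoneChannel):
    """Places the request on a per-Zone queue (stand-in for AMQP/Shovel)."""

    def __init__(self, publish):
        self.publish = publish      # injected callable(queue_name, message)

    def send(self, zone_name, method, args):
        message = {"method": method, "args": args}
        return self.publish(f"zone.{zone_name}", message)


# Usage with fake transports that only record what would be sent.
sent = []
api_channel = PublicApiChannel(
    {"dc1": "http://dc1.example.com/v1.0/"},
    http_post=lambda url, body: sent.append((url, body)) or "ok",
)
reply = api_channel.send("dc1", "servers", {"name": "test"})

queued = []
queue_channel = QueueChannel(publish=lambda q, msg: queued.append((q, msg)))
queue_channel.send("dc1", "servers", {"name": "test"})
```

With this shape, the Scheduler only depends on `ZoneChannel.send`, so switching from the public API route to the Shovel route later would mean swapping the channel object rather than rewriting the routing code.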

Should We Use The Queues Or The Public APIs?

What are the pros and cons of each approach?

Using AMQP for inter-zone communication:

Pros

- AMQP is made for this.

- Less Marshalling required, which means less code.

- Callbacks easily supported.

- Easier setup for Nova administrators.

Cons

- WAN RabbitMQ cluster required.

- RabbitMQ requires Shovel for inter-cluster communication.

- Some quirky error conditions arose during testing with Celery.

- Building a very monolithic structure (central db), which increases our dependencies on a single point of failure.

Using Public API for inter-zone communication:

Pros

- Easier deployments

- Communications are easier to understand for developers.

- Good isolation between Zones in the event of failures.

Cons

- Caller Authentication has to be stored and forwarded to each API Service for each call.

- No means for Callbacks or error notification on long-running operations other than the proposed PubSubHubBub Service.

- The expense of marshaling/unmarshaling each request from HTTP -> Rabbit -> HTTP -> Rabbit all the way down.

- Having to register not only the public API, but also the admin-only APIs at each layer. We would need to detect that a call is an admin-only call and route it to the proper API server.

Routing, Database Instances, Zones, Hosts & Capabilities

Each deployment of a Nova Zone has a complete copy of the Nova application suite. Each deployment also gets its own Database. The Schema of each database is the same for all deployments at all levels. The only difference between deployments is that, depending on the services running within a Zone, not all of the database tables may be used.

We will need to add a Zone table to the Nova DB which will contain all the child zones. Each Parent Zone will poll its children for Capabilities, Zone names and current state. Child Zones will have no concept of their parents. This will allow for multiple parent Zones and varying Zone hierarchies.
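A minimal sketch of such a Zone table and the downward poll, using an in-memory SQLite database, might look like the following. The column names are borrowed from the `zone-add(api_url, username, password)` client command listed under Sprint 1 Tasks; the function names `add_child_zone`, `remove_child_zone` and `poll_children`, the shape of the returned status dictionaries, and the injected `fetch_info` callable are assumptions made for illustration, not the Nova data model.

```python
import sqlite3


def create_schema(conn):
    # Only children are recorded; a Zone keeps no reference to its parents.
    conn.execute(
        "CREATE TABLE zones ("
        " id INTEGER PRIMARY KEY,"
        " api_url TEXT NOT NULL UNIQUE,"
        " username TEXT NOT NULL,"
        " password TEXT NOT NULL)"
    )


def add_child_zone(conn, api_url, username, password):
    cur = conn.execute(
        "INSERT INTO zones (api_url, username, password) VALUES (?, ?, ?)",
        (api_url, username, password),
    )
    return cur.lastrowid


def remove_child_zone(conn, zone_id):
    conn.execute("DELETE FROM zones WHERE id = ?", (zone_id,))


def poll_children(conn, fetch_info):
    """Ask every child Zone for its name, state and Capabilities.

    fetch_info(api_url, username, password) is injected so the sketch
    makes no assumption about how the child is actually contacted.
    """
    rows = conn.execute(
        "SELECT id, api_url, username, password FROM zones ORDER BY id"
    ).fetchall()
    status = {}
    for zone_id, api_url, username, password in rows:
        try:
            status[zone_id] = fetch_info(api_url, username, password)
        except OSError as exc:
            # A child that cannot be reached is reported, not dropped.
            status[zone_id] = {"state": "unreachable", "error": str(exc)}
    return status


# Usage with a fake fetcher: dc1 answers, dc2 is down.
def fake_fetch(api_url, username, password):
    if "dc2" in api_url:
        raise OSError("connection refused")
    return {"name": "dc1", "state": "up",
            "capabilities": {"can-run-windows": True}}


conn = sqlite3.connect(":memory:")
create_schema(conn)
dc1 = add_child_zone(conn, "http://dc1.example.com/v1.0/", "admin", "secret")
dc2 = add_child_zone(conn, "http://dc2.example.com/v1.0/", "admin", "secret")
status = poll_children(conn, fake_fetch)
```

Because the table lists children only, the same child Zone can be registered in the database of more than one parent, which is what allows multiple roots.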

Hosts do not have to live only at the leaf nodes. Hosts may live at any level. Hosts are contained within Zones. I think, but I'm not sure, that they may also belong to multiple zone trees. I need to expand on this.

As mentioned, Zones and Hosts have Capabilities. Capabilities are key-value pairs that indicate the types of resources contained within the Zone or Host. Capabilities are used to decide where to route requests.

The value portion of a Capability Key-Value pair is a (String/Float, Type) tuple. The Type field is used for coercing the two value fields (string/float). We have both a String and a Float field so we can do range comparisons in the Database. While this is expensive, we cannot predict in advance all the desired Capabilities and denormalize the table. (jaypipes offered this schema: http://pastie.org/1515576)

Some Keys will be binary in nature (is-enabled, can-run-windows, accept-migrations), while others will be float-based and change dynamically (especially the Host Capabilities), for example free-disk, average-load and number-of-instances.
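To make the (String/Float, Type) idea concrete, here is a small sketch of a Capabilities table with separate string and float columns and a range query against the float column. It is not the schema from the pastie link above; the table layout, the `owner` column, the type labels and the choice to store binary Capabilities as 1.0/0.0 are all assumptions made for this example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE capabilities ("
    " owner TEXT NOT NULL,"       # Zone or Host the Capability belongs to
    " key TEXT NOT NULL,"
    " str_value TEXT,"            # used when type is 'string'
    " float_value REAL,"          # used when type is 'float' or 'bool'
    " type TEXT NOT NULL,"        # tells the reader how to coerce the value
    " PRIMARY KEY (owner, key))"
)


def set_capability(conn, owner, key, value):
    """Store a value in whichever column matches its Python type."""
    if isinstance(value, bool):
        row = (owner, key, None, 1.0 if value else 0.0, "bool")
    elif isinstance(value, (int, float)):
        row = (owner, key, None, float(value), "float")
    else:
        row = (owner, key, str(value), None, "string")
    conn.execute(
        "INSERT OR REPLACE INTO capabilities VALUES (?, ?, ?, ?, ?)", row
    )


def owners_with_at_least(conn, key, minimum):
    """Range comparison done in the database on the float column."""
    rows = conn.execute(
        "SELECT owner FROM capabilities"
        " WHERE key = ? AND float_value >= ? ORDER BY owner",
        (key, minimum),
    )
    return [owner for (owner,) in rows]


set_capability(conn, "host-a", "free-disk", 120.0)
set_capability(conn, "host-b", "free-disk", 40.0)
set_capability(conn, "host-a", "can-run-windows", True)
set_capability(conn, "host-b", "hypervisor", "xen")

candidates = owners_with_at_least(conn, "free-disk", 100)
print(candidates)
```

Rows that only carry a string value have a NULL float column, so they simply never match a range query.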

The Host will publish its presence to the Scheduler Queue to let the schedulers know the current state of the Hosts. This way, no database entry is required for the Hosts and Hosts can go on/offline without causing database integrity problems.
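The presence scheme above could be sketched as an in-memory registry on the Scheduler side that is fed by Host announcements. The `HostRegistry` class, its method names and the idea of expiring a Host after a time-to-live without announcements are assumptions added for illustration; the proposal only says that Hosts publish their state and that no database row is needed.

```python
import time


class HostRegistry:
    """In-memory view of Hosts, built only from their announcements."""

    def __init__(self, ttl_seconds=60.0, clock=time.monotonic):
        self.ttl = ttl_seconds      # assumed expiry window, not from the spec
        self.clock = clock          # injectable so the sketch is testable
        self._hosts = {}            # host name -> (last_seen, capabilities)

    def handle_announcement(self, host, capabilities):
        """Called for each presence message taken off the Scheduler queue."""
        self._hosts[host] = (self.clock(), dict(capabilities))

    def live_hosts(self):
        """Hosts heard from within the TTL; silent Hosts just drop out."""
        now = self.clock()
        return {
            host: caps
            for host, (seen, caps) in self._hosts.items()
            if now - seen <= self.ttl
        }


# Usage with a fake clock: host-a goes silent and ages out.
now = [0.0]
registry = HostRegistry(ttl_seconds=60.0, clock=lambda: now[0])
registry.handle_announcement("host-a", {"free-disk": 120.0})
now[0] = 30.0
registry.handle_announcement("host-b", {"free-disk": 40.0})
now[0] = 75.0
live = registry.live_hosts()
```

Since nothing is persisted, a Host going offline leaves no stale row behind to clean up.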

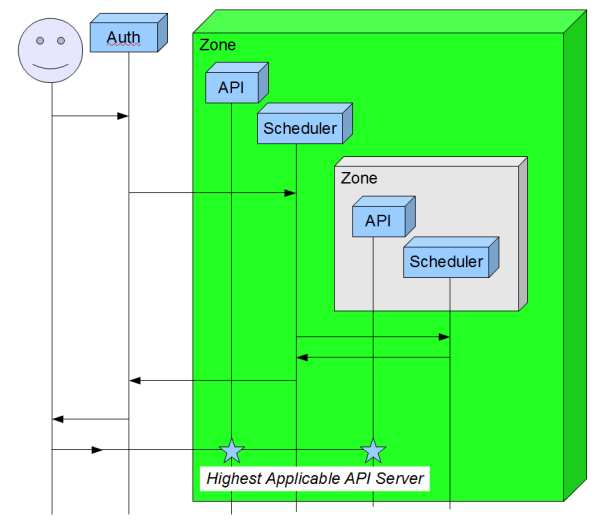

Selecting the Correct API Server

As we described earlier, the Auth Service tells the Client which API Service to use for subsequent operations. In order to reduce the chatter in the queues, we would like to send the client to the lowest-level Zone that contains all the instances managed by that client. It is our intention to use the same inter-zone communication channel for making this decision.

The Auth Service will always come in at the top level Zone and ask "Do you manage Client XXXX?" This request will sink down to each nested Zone and if a match is found return up. If more than one child responds "Yes", then the parent Zone API is returned. If a single child responds "Yes", that Zone's API Service is returned. If no child responds the Zone returns "No" and its parent Zone makes the decision.

This information may be cached in a later implementation. This can be pre-computed whenever an instance is created, migrated or deleted.
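The "Do you manage Client XXXX?" lookup described above amounts to a recursive search for the lowest Zone that covers all of a client's instances. The sketch below uses a hypothetical in-memory `Zone` tree instead of real inter-zone calls. One rule is an assumption added here: because Hosts may live at any level, a Zone that directly holds instances for the client answers with itself, even if only one child also says "Yes".

```python
from dataclasses import dataclass, field


@dataclass
class Zone:
    name: str
    api_url: str
    # Clients with instances on Hosts that sit directly in this Zone.
    clients: set = field(default_factory=set)
    children: list = field(default_factory=list)


def find_api_zone(zone, client):
    """Return the lowest Zone containing all of the client's instances.

    Returns None when neither this Zone nor any nested Zone manages
    the client (the "No" answer in the text).
    """
    answers = [find_api_zone(child, client) for child in zone.children]
    matches = [answer for answer in answers if answer is not None]

    if client in zone.clients or len(matches) > 1:
        return zone          # instances are spread out below this Zone
    if len(matches) == 1:
        return matches[0]    # a single child covers everything
    return None


# A cut-down version of the sample deployment from the Design section.
huddle1 = Zone("huddle1", "http://h1.example.com/", clients={"acme", "bravo"})
huddle2 = Zone("huddle2", "http://h2.example.com/", clients={"acme", "carol"})
dc1 = Zone("dc1", "http://dc1.example.com/", children=[huddle1, huddle2])
dc2 = Zone("dc2", "http://dc2.example.com/")
na = Zone("na", "http://na.example.com/", children=[dc1, dc2])
eu = Zone("eu", "http://eu.example.com/", clients={"bravo"})
root = Zone("global", "http://global.example.com/", children=[na, eu])

for client in ("acme", "bravo", "carol", "nobody"):
    zone = find_api_zone(root, client)
    print(client, "->", zone.name if zone else None)
```

Here "acme" is sent to dc1 (two Huddle Zones under the same DC), "bravo" to the Global Zone (instances on two continents), and "carol" straight to huddle2.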

User stories

todo

Implementation

This section should describe a plan of action (the "how") to implement the changes discussed.

The intention for Cactus is to work on the data model, API and related client tools in Sprint 1 (the first 3 weeks of Cactus) and then work on the inter-queue communication in Sprint 2.

Sprint 1 Tasks

- zone-add(api_url, username, password)

- zone-delete(zone)

- zone-list

- zone-details(zone)

Nova API

- GET /zones/

- GET /zones/#/detail

- POST /zones/

- PUT /zones/#

- DELETE /zones/#

DB

- add_zone(...)

- delete_zone(...)

Unresolved issues

This should highlight any issues that should be addressed in further specifications, and not problems with the specification itself; since any specification with problems cannot be approved.

BoF agenda and discussion

Use this section to take notes during the BoF; if you keep it in the approved spec, use it for summarising what was discussed and note any options that were rejected.

RabbitMQ Spike Results

Running VMware Ubuntu 10.10 instances (256M, 1 CPU each) on a 12G, quad core duo i7 PC.

https://github.com/SandyWalsh/rabbit-test

Parallel "add": Client running on Node 1 (Warn level)

No cluster (2.3)

1 Worker

- Finished 1/1 in 0:00:00.192947 (avg/req 0:00:00.192947)

- Finished 10/10 in 0:00:00.901358 (avg/req 0:00:00.090135)

- Finished 100/100 in 0:00:08.180311 (avg/req 0:00:00.081803)

- Finished 1000/1000 in 0:01:20.539726 (avg/req 0:00:00.080539)

- Finished 10000/10000 in 0:13:26.883584 (avg/req 0:00:00.080688)

- (didn't do more)

2 Node Cluster (2.3)

1 Worker/Node

- Finished 1/1 in 0:00:00.157986 (avg/req 0:00:00.157986)

- Finished 10/10 in 0:00:00.411800 (avg/req 0:00:00.041180)

- Finished 100/100 in 0:00:02.256900 (avg/req 0:00:00.022569)

- Finished 1000/1000 in 0:00:21.738734 (avg/req 0:00:00.021738)

- Finished 10000/10000 in 0:03:37.536059 (avg/req 0:00:00.021753)

- Finished 100000/100000 in 0:34:32.088134 (avg/req 0:00:00.020720)

3 Node Rabbit Cluster (1.8)

2 Ram nodes, 1 disk node

- Finished 1/1 in 0:00:02.347252 (avg/req 0:00:02.347252)

- Finished 100/100 in 0:00:03.300854 (avg/req 0:00:00.033008)

- Finished 1000/1000 in 0:00:17.758042 (avg/req 0:00:00.017758)

- Finished 10000/10000 in 0:02:50.025154 (avg/req 0:00:00.017002)

- Finished 100000/100000 in 0:29:52.013909 (avg/req 0:00:00.017920)

3 nodes - failure conditions

- Finished 1000/1000 in 0:00:16.667371 (avg/req 0:00:00.016667) - no failures

- Finished 1000/1000 in 0:00:24.568783 (avg/req 0:00:00.024568) - 1 worker killed, no loss

- Finished 1000/1000 in 0:00:35.739763 (avg/req 0:00:00.035739) - 2 workers killed, no loss

Killed a Rabbit Node - Boom: http://paste.openstack.org/show/630/ ... client fix required.

sudo rabbitmqctl stop_app

asksol says join() doesn't support the new error handling features of Kombu yet. Reapplying join() doesn't work.

2 nodes in DC

Both in DFW1. Worker on DFW1b

- Finished 1/1 in 0:00:00.235317 (avg/req 0:00:00.235317)

- Finished 10/10 in 0:00:00.346868 (avg/req 0:00:00.034686)

- Finished 100/100 in 0:00:01.812593 (avg/req 0:00:00.018125)

- Finished 1000/1000 in 0:00:17.602759 (avg/req 0:00:00.017602)

- Finished 10000/10000 in 0:02:54.620607 (avg/req 0:00:00.017462)

3 nodes spanning 3 DC's

2 in DFW1, 1 in ORD. Workers in DFW1b and ORD

Repeated problems with dropped connections.

- Finished 1/1 in 0:00:00.752433 (avg/req 0:00:00.752433)

- Finished 10/10 in 0:00:04.470553 (avg/req 0:00:00.447055)

- Finished 100/100 in 0:00:41.711515 (avg/req 0:00:00.417115)

- Finished 1000/1000 in 0:06:33.388602 (avg/req 0:00:00.393388)

4 nodes spanning 4 DC's

2 in DFW1, 2 in ORD. 3 workers: 1 in DFW1b, 1 in each ORD

Failed every time with AMQPConnectionException.
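The "Finished N/N in H:MM:SS" lines in these spike results share one format, so a short helper can turn them into requests per second for easier comparison between setups. This is a convenience sketch written for this page, not part of the rabbit-test repository; the function name and the regular expression are assumptions based only on the lines shown above.

```python
import re
from datetime import timedelta

# Matches e.g. "Finished 1000/1000 in 0:01:20.539726 (avg/req ...)".
RESULT_LINE = re.compile(
    r"Finished (\d+)/(\d+) in (\d+):(\d{2}):(\d{2})\.(\d+)"
)


def requests_per_second(line):
    """Convert one result line into a throughput figure."""
    match = RESULT_LINE.search(line)
    if match is None:
        raise ValueError(f"not a result line: {line!r}")
    done, _total, hours, minutes, seconds, fraction = match.groups()
    elapsed = timedelta(
        hours=int(hours),
        minutes=int(minutes),
        seconds=float(f"{seconds}.{fraction}"),
    ).total_seconds()
    return int(done) / elapsed


# 1000-request runs copied from the results above.
single = requests_per_second(
    "Finished 1000/1000 in 0:01:20.539726 (avg/req 0:00:00.080539)"
)
two_node = requests_per_second(
    "Finished 1000/1000 in 0:00:21.738734 (avg/req 0:00:00.021738)"
)
wan = requests_per_second(
    "Finished 1000/1000 in 0:06:33.388602 (avg/req 0:00:00.393388)"
)

print(f"no cluster, 1 worker:    {single:.1f} req/s")
print(f"2 node cluster:          {two_node:.1f} req/s")
print(f"3 nodes across DFW/ORD:  {wan:.1f} req/s")
```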