Designate/Blueprints/Server Pools/Archive

Current State

Phase One

| Gerrit Patch | [1] |
|---|---|
| Launchpad Blueprint | [2] |

Overview

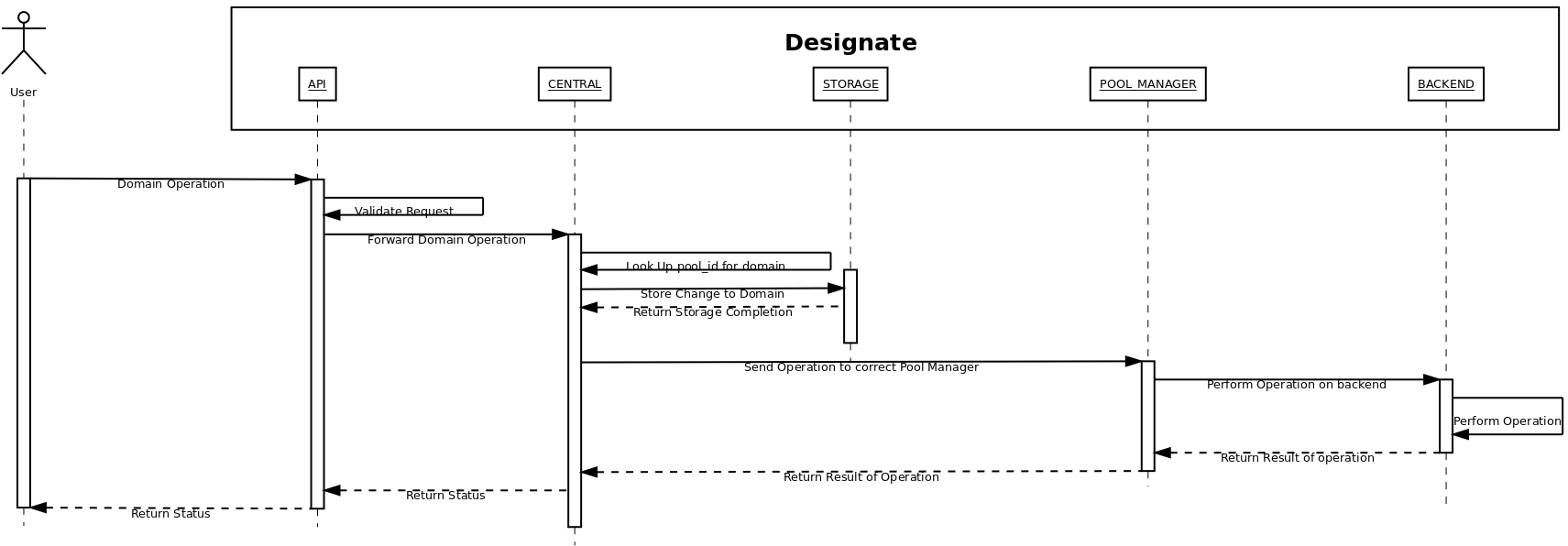

This is an initial change to the codebase to introduce the concept of a "server pool".

This introduced a new service that sits where the agent would traditionally have sat for bind9.

All servers and domains are now assigned to a "pool", which we will use in the future to submit changes to the right pools.

Phase One State Diagram

API v2 Changes

Servers are now a full sub resource of pools, and are accessed using HTTP requests like:

- GET http://designate-server:9001/v2/pools/pool_id/servers - get all servers in <pool_id>

- GET http://designate-server:9001/v2/pools/pool_id/servers/server_id - get <server_id> details

This was to avoid massive amounts of changes to SOA records for domains, and to allow us to notify backends [3] of just the server that changed.
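As a rough illustration of the sub-resource layout, the two request URLs above could be built like this. This is only a sketch: the helper names are hypothetical, and the base URL is simply the one used in the examples on this page.

```python
from urllib.parse import quote

# Base endpoint copied from the examples above; a real deployment will differ.
BASE_URL = "http://designate-server:9001/v2"


def pool_servers_url(pool_id: str) -> str:
    """URL that lists every server in the given pool (hypothetical helper)."""
    return f"{BASE_URL}/pools/{quote(pool_id, safe='')}/servers"


def pool_server_url(pool_id: str, server_id: str) -> str:
    """URL for a single server inside the given pool (hypothetical helper)."""
    return f"{pool_servers_url(pool_id)}/{quote(server_id, safe='')}"
```

Both helpers only format strings; issuing the GET request is left to whatever HTTP client is in use.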

Phase One Limitations

- Currently there is only support for one pool

- This is currently a synchronous operation - async will be in a later phase

- When designate is set up / upgraded for the first time, you will need to set up a default pool in the /etc/designate/designate.cfg file.

- There is a default pool created in the SQLAlchemy migrations, so this just means copying and pasting the pool_id into the right section in the config file.

Next Steps

Phase Two

| Gerrit Patch | N/A Currently |

|---|---|

| Launchpad Blueprint | [4] |

Overview

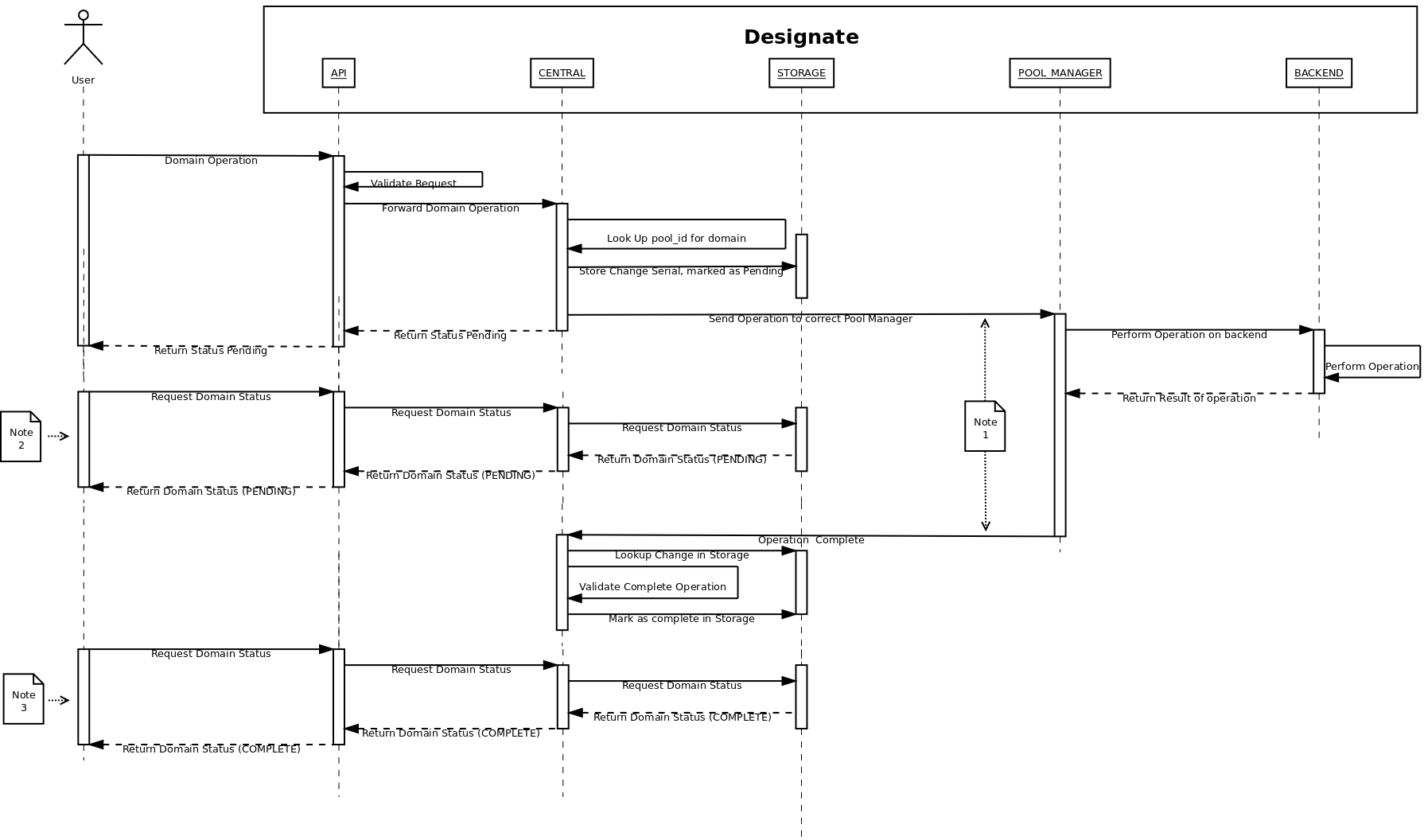

As part of phase two, we will introduce the concept of Async operations on backends, using the pool-manager interfaces.

There will be a couple of changes to the core of designate:

- There will be a new table added for tracking of async operations.

- Zone serial numbers will move to an incrementing integer (from the current date/time stamp format).

- All API responses for modifying operations on zones will move to 202 - Accepted.

- The response data for zone operations will include a status field, which will show PENDING.

- When the operation is complete, this will be changed to an ACTIVE status.
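The planned lifecycle can be sketched with a small in-memory model. This is an illustration under assumptions, not the planned implementation: the `Zone` class and both function names are hypothetical, and only the 202 code, the PENDING and ACTIVE values, and the incrementing serial come from the list above.

```python
from dataclasses import dataclass

PENDING = "PENDING"
ACTIVE = "ACTIVE"


@dataclass
class Zone:
    """Hypothetical in-memory zone; not the designate data model."""
    name: str
    serial: int = 1
    status: str = ACTIVE


def begin_update(zone: Zone) -> int:
    """Start a modifying operation.

    Bumps the serial by one, marks the zone PENDING, and returns the
    HTTP status code the API would answer with (202 Accepted).
    """
    zone.serial += 1
    zone.status = PENDING
    return 202


def complete_update(zone: Zone) -> None:
    """Mark the zone ACTIVE once the backend has applied the change."""
    zone.status = ACTIVE
```

A caller would see `begin_update` return immediately with 202, and the zone would only flip back to ACTIVE when `complete_update` runs later.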

Phase Two State Diagram

Notes

- These are two RPC calls with no return values (oslo.messaging casts rather than calls), meaning central is not blocked on the pool-manager operation

- This is an example API call while the backend is still working on the change

- This is an example API call on the completion of the change
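The difference between a cast and a call, as described in the first note, can be illustrated with a toy stand-in. This sketch does not use oslo.messaging; `FakeRpcServer` and its methods are hypothetical and only mimic the blocking and non-blocking behaviour.

```python
from collections import deque
from typing import Callable, Deque, List, Tuple


class FakeRpcServer:
    """Toy stand-in for a pool-manager endpoint; not the oslo.messaging API."""

    def __init__(self) -> None:
        self._queue: Deque[Tuple[Callable[..., object], tuple]] = deque()
        self.applied: List[str] = []

    def update_zone(self, zone_name: str) -> str:
        """The remote method: records the zone as applied."""
        self.applied.append(zone_name)
        return f"updated {zone_name}"

    def call(self, method: Callable[..., object], *args: object) -> object:
        """Blocking style: run the method now and hand back its result."""
        return method(*args)

    def cast(self, method: Callable[..., object], *args: object) -> None:
        """Fire-and-forget style: queue the work and return immediately."""
        self._queue.append((method, args))

    def process_pending(self) -> None:
        """Simulate the server working through queued casts later on."""
        while self._queue:
            method, args = self._queue.popleft()
            method(*args)
```

With `cast`, the caller gets nothing back and the work happens whenever `process_pending` runs, which is why central would not be blocked on the pool-manager.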

API v2 Changes

- Changing response code for async operations
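Because a modifying request would return before the backend has finished, a client would presumably need to poll the zone until its status changes. A minimal sketch of such a loop follows, assuming the zone body carries a `status` field as described for phase two; `fetch_zone` is a hypothetical callable supplied by the caller, not a designate client function.

```python
import time
from typing import Callable, Dict


def wait_until_active(
    fetch_zone: Callable[[], Dict[str, str]],
    attempts: int = 5,
    delay: float = 0.0,
) -> bool:
    """Poll until the zone reports ACTIVE or the attempts run out.

    fetch_zone is assumed to return the zone body as a dict.
    Returns True if ACTIVE was seen, False otherwise.
    """
    for _ in range(attempts):
        if fetch_zone().get("status") == "ACTIVE":
            return True
        time.sleep(delay)
    return False
```

The attempt count and delay are arbitrary defaults; a real client would pick values suited to how long backends take to apply changes.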

Database Changes

This section will document the example changes to the SQLAlchemy implementation.

Additional Tables

- Pending Operations
  - Resource ID - UUID
  - Resource Type - UUID
  - ChangeSetSerial - Integer (this name may need to be examined)
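To make the proposed table concrete, here is a sketch using the standard-library `sqlite3` module rather than SQLAlchemy. The table name, column names, and helper functions are assumptions for illustration; only the three fields and their listed types come from the outline above.

```python
import sqlite3
from typing import List

# Hypothetical schema mirroring the three fields listed above.
SCHEMA = """
CREATE TABLE pending_operations (
    resource_id       TEXT    NOT NULL,  -- UUID stored as text
    resource_type     TEXT    NOT NULL,  -- listed as UUID in the outline
    change_set_serial INTEGER NOT NULL
)
"""


def open_db() -> sqlite3.Connection:
    """Create an in-memory database containing the sketch table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(SCHEMA)
    return conn


def record_pending(conn: sqlite3.Connection, resource_id: str,
                   resource_type: str, serial: int) -> None:
    """Track one in-flight operation for a resource."""
    conn.execute(
        "INSERT INTO pending_operations VALUES (?, ?, ?)",
        (resource_id, resource_type, serial),
    )


def pending_serials(conn: sqlite3.Connection, resource_id: str) -> List[int]:
    """Return the serials still pending for a resource, oldest first."""
    rows = conn.execute(
        "SELECT change_set_serial FROM pending_operations "
        "WHERE resource_id = ? ORDER BY change_set_serial",
        (resource_id,),
    )
    return [row[0] for row in rows]
```

Keying rows by resource and serial would let several changes to the same zone be tracked at once, though whether that is the intent is not stated here.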

Phase Three

| Gerrit Patch | N/A Currently |

|---|---|

| Launchpad Blueprint | [5] |

Overview

This will need fleshing out, but will be the creation of a pools scheduler to allow for multiple pools and per-tenant pools.

Discussion

Please put any ideas for phase three (schedulers) here, and tag them with your user name so we know who to ask.

- Decide how much pools are exposed in the API (artom)
  - Use case example: private and public pools. Completely transparent, per-tenant pools work in this case (public and private tenant).
  - Use case example: single tenant wants multiple pools. No choice but to expose pools in the API so they can control which zones go to which pool.
  - Can we support both of the above simultaneously?

- Look at nova's scheduler to see if there is reusable code (graham)

Questions

- Could you add what the request to create/get a zone would look like? I am trying to understand if you specify the pool id only in the request or if you specify both a pool id and a server id? (vinod)

- The request to get a zone would remain the same. Until Phase 3, the creation of zones would remain the same - they would be assigned to the default pool.

- At that point, for most users I would imagine it would remain the same, unless they wanted to specify the pool for this domain. - (graham)

- Would each zone in a pool have all the servers in the pool as NS records? How would adding a new server or deleting/updating a server in a pool affect existing zones in the pool? (vinod)

- Yes. It would require a change to the NS records for each zone in the pool - (graham)

- How is the authoritative name server in the SOA record for a zone file specified? Does the tenant creating the zone dictate this? When the server is deleted/updated do we go update all the relevant zones? (vinod)

- I would imagine it would come from the Pool. We would have to update all the zones as above - (graham)

- Earlier above, you mentioned "This was to avoid massive amounts of changes to SOA records for domains". An example of how this is achieved would be helpful for me to understand this. (vinod)

- Instead of re-creating all the servers (delete all and rewrite), it can change just one. That is probably misphrased, to be honest; it should be "massive changes", as the number of changes would be the same, just smaller in size - (graham)

- How do we specify the tenant/pool relationship? Can any tenant create/update/delete a zone in any pool? (vinod)

- Unknown, I need to get feedback on what people want.

- I would envisage public pools, which the scheduler would distribute general zones to, and then private / per-tenant ones, to allow people to have internal DNS records, say in VPCs or non-public environments - (graham)

- Can zones be moved from one pool to another pool? If yes, how does the user do this - do we need to add new apis for this? How would the NS servers/auth name server in the SOA record change in this case? (vinod)

- That is a good question. I think that needs some discussion. Would this be a useful feature? - (graham)

- In the Phase Two diagram, you show a request going from Central to Pool Manager with the text as "Send operation to correct Pool Manager". Is this the pool id or pool manager? Would there be one pool manager managing all the various pools/pool ids like one central service or would there be multiple pool managers? (vinod)

- No, there is one pool-manager per pool. - (graham)

- In Phase2 & 3, Async Response (202 Accepted), the current planned implementation has a "Status" field added to the Domains and Records tables in Central that is updated based on the response from the backend. How do we go about returning details of errors if the operation failed for some reason? (eankutse)

- In Phase2 & 3, Async Response (202 Accepted), we should consider returning a Location header that points to the URL of the resource (that is being created). The user can check the status of the operation using this. (eankutse)

- Not directly related to achieving Async but should we consider following recommendations from http://docs.openstack.org/api/openstack-compute/2/content/LinksReferences.html and add "self" and "bookmark" links in the final response body after the resource is available? (eankutse)
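One way the last two suggestions could look is sketched below. Everything here is an assumption for illustration: the function names, the `/zones/<id>` path, and the body layout are hypothetical, and only the 202 code, the PENDING status, the Location header idea, and the "self" and "bookmark" link names come from the discussion.

```python
from typing import Dict, Tuple


def accepted_response(
    base_url: str, zone_id: str
) -> Tuple[int, Dict[str, str], Dict[str, object]]:
    """Build a 202 response whose Location header points at the new zone."""
    zone_url = f"{base_url}/zones/{zone_id}"  # hypothetical resource path
    headers = {"Location": zone_url}
    body: Dict[str, object] = {"id": zone_id, "status": "PENDING"}
    return 202, headers, body


def with_links(
    body: Dict[str, object], self_url: str, bookmark_url: str
) -> Dict[str, object]:
    """Return a copy of the body with 'self' and 'bookmark' links added."""
    linked = dict(body)
    linked["links"] = [
        {"rel": "self", "href": self_url},
        {"rel": "bookmark", "href": bookmark_url},
    ]
    return linked
```

A client could follow the Location header to check the status, and the links would only be attached once the resource is available.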