Revision as of 02:59, 10 January 2020

Tacker - OpenStack NFV Orchestration

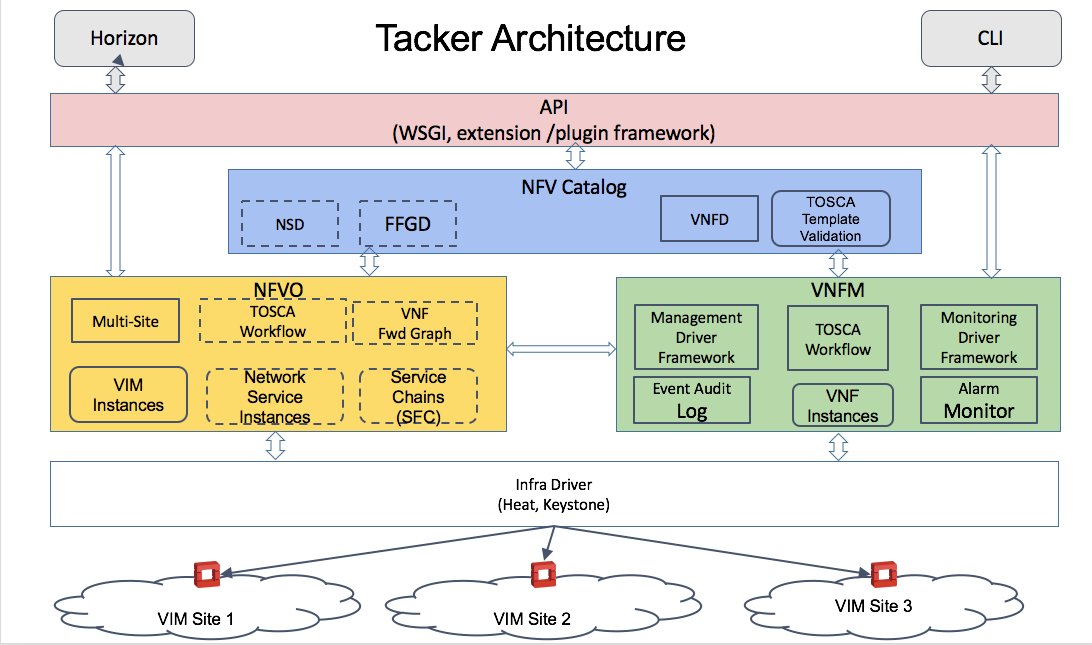

Tacker is an official OpenStack project building a Generic VNF Manager (VNFM) and an NFV Orchestrator (NFVO) to deploy and operate Network Services and Virtual Network Functions (VNFs) on an NFV infrastructure platform such as OpenStack. It is based on the ETSI MANO Architectural Framework and provides a functional stack to orchestrate Network Services end-to-end using VNFs.

High-Level Architecture

NFV Catalog

- VNF Descriptors

- Network Services Descriptors

- VNF Forwarding Graph Descriptors

VNFM

- Basic life-cycle of VNF (create/update/delete)

- Enhanced platform-aware (EPA) placement of high-performance NFV workloads

- Health monitoring of deployed VNFs

- Auto Healing / Auto Scaling VNFs based on Policy

- Facilitate initial configuration of VNF
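The policy-based auto-healing item above can be illustrated with a small sketch. This is not Tacker code: `HealPolicy`, `decide_actions`, and the `"respawn"` action label are hypothetical names chosen for the example, and the rule (heal after N consecutive failed health checks) is only one plausible policy.

```python
from dataclasses import dataclass


@dataclass
class HealPolicy:
    """Hypothetical policy: heal after this many consecutive failed checks."""
    max_failures: int = 3


def decide_actions(health_checks, policy):
    """Return the action chosen after each health check result.

    health_checks is an iterable of booleans (True means the VNF responded).
    """
    actions = []
    consecutive_failures = 0
    for healthy in health_checks:
        if healthy:
            consecutive_failures = 0
            actions.append("none")
            continue
        consecutive_failures += 1
        if consecutive_failures >= policy.max_failures:
            actions.append("respawn")
            consecutive_failures = 0  # the replacement instance starts clean
        else:
            actions.append("none")
    return actions


checks = [True, False, False, False, True, False]
print(decide_actions(checks, HealPolicy(max_failures=3)))
```

Requiring several consecutive failures before acting is a common way to avoid healing a VNF because of a single lost probe; the threshold would be tuned per VNF.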

NFVO

- Templatized end-to-end Network Service deployment using decomposed VNFs

- VNF placement policy – ensure efficient placement of VNFs

- VNFs connected using an SFC - described in a VNF Forwarding Graph Descriptor

- VIM Resource Checks and Resource Allocation

- Ability to orchestrate VNFs across Multiple VIMs and Multiple Sites (POPs)

Documentation

https://docs.openstack.org/tacker/

Install Guide

https://docs.openstack.org/tacker/latest/#installation

What does Tacker solve?

Challenges of NFV and MANO

To maximize the benefit of virtualization with NFV, operators must resolve challenges in both the initial phase and the expansion phase of introducing NFV into commercial networks.

In the initial phase, operators must resolve "integration" issues between VNFs and the NFVI, because the two are strongly coupled to ensure carrier SLAs. Depending on the characteristics of the VNF and the NFVI, operators must tune performance using various virtualization support techniques, determine VM allocation rules from NFVI failure points, consider O&M for both VNF and MANO, and integrate the VNFM with the VIM to realize all of this. This integration work is a heavy burden because operators and the providers of MANO and 3GPP systems have to discuss every use case without a reference implementation.

In the expansion phase, operators face "combination" issues among VNF, NFVI, VIM, and VNFM that grow with the number of VNF and NFVI instances. Because the product lifecycles of IA servers, SDN, storage, VNF applications (APLs), VIM, and VNFM all differ, operators must consider test combinations, migration patterns, and the dependencies of each product version. Every upgrade therefore continues to require great effort to integrate these components across a very large number of combinations.
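To give a feel for how quickly the "combination" problem grows, here is a minimal sketch that counts and enumerates the version combinations an operator might have to test. The component names follow the paragraph above, but every version label and count is a made-up assumption for illustration only.

```python
from itertools import product
from math import prod

# Hypothetical supported versions per component (illustrative values only).
VERSIONS = {
    "ia_server": ["gen1", "gen2"],
    "sdn": ["1.0", "1.1", "2.0"],
    "storage": ["a", "b"],
    "vnf_apl": ["r10", "r11", "r12"],
    "vim": ["x", "y"],
    "vnfm": ["x", "y"],
}


def count_combinations(versions):
    """Number of distinct full-stack combinations (product of list sizes)."""
    return prod(len(options) for options in versions.values())


def iter_combinations(versions):
    """Yield each full-stack combination as a dict of component -> version."""
    names = list(versions)
    for combo in product(*(versions[name] for name in names)):
        yield dict(zip(names, combo))


print(count_combinations(VERSIONS))  # 2 * 3 * 2 * 3 * 2 * 2 = 144
print(next(iter_combinations(VERSIONS)))
```

Adding a single new version to any one component multiplies the total, which is why the integration effort keeps growing as the deployment expands.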

Technical Issues

Visualizing virtual resources and physical servers for analysis

Decoupling software from hardware also requires finer root-cause analysis tools. The lack of such tools forced us to maintain detailed mappings between virtualized and physical resources (e.g. which VM is running where and how VMs are internally connected) using detailed in-house ID schemes. We had to visualize everything in the NFVI to feel confident about operations. This maintenance process is quite cumbersome, and automation is necessary. The OpenStack Blazar project is expected to ease the resource guarantee problem, which is a necessity in telecom.
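The kind of virtual-to-physical mapping described above can be sketched as a small index from physical host to the VMs and VNFs placed on it. The inventory records, host names, and function names below are hypothetical; a real deployment would populate such an index from the VIM rather than from a hard-coded list.

```python
from collections import defaultdict

# Hypothetical inventory records: (vm_id, physical_host, vnf_name).
PLACEMENTS = [
    ("vm-1", "host-a", "vnf-x"),
    ("vm-2", "host-a", "vnf-y"),
    ("vm-3", "host-b", "vnf-x"),
]


def build_host_index(placements):
    """Group (vm_id, vnf_name) pairs by the physical host they run on."""
    index = defaultdict(list)
    for vm_id, host, vnf_name in placements:
        index[host].append((vm_id, vnf_name))
    return index


def impacted_vnfs(index, failed_host):
    """Return the sorted names of VNFs that have a VM on the failed host."""
    return sorted({vnf_name for _vm_id, vnf_name in index.get(failed_host, [])})


index = build_host_index(PLACEMENTS)
print(impacted_vnfs(index, "host-a"))
```

With such an index, a hardware alarm on one server can be translated directly into the list of affected VNFs, which is the root-cause step that otherwise relies on manually maintained ID schemes.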

Differences in how VNFs handle virtual resources

Different VNFs consume infrastructure resources in different ways (e.g. booting, QoS control points, affinity rules, creation of maintenance networks, configuration methods). We had to write per-VNF operation procedure documents to reduce errors in these processes. Because VNF-to-MANO interactions differed, instantiation, update/upgrade, and all other VNF-related operations differed as well. Such processes also need to be automated. Drawing on this detailed documentation, which reflects knowledge gained from running multi-vendor VNFs in a commercial network, we have already contributed our findings on information elements, state migration, and failover sequences of VNFs to ETSI NFV standardization. These differences in VNF operation procedures are expected to disappear once VNF vendors develop their products based on ETSI NFV standards.

Refactoring existing functionality for containers

Container technology requires big changes to operators' legacy networks, yet operators cannot make big jumps in operations. Operators' networks have maintained high SLAs through detailed point-to-point network designs and feature-rich, stateful VNF applications (APLs). Operators will move these architectures to containers while maintaining carrier SLAs at low cost.

| Aspect | Virtualized | Containerized |
|---|---|---|
| Network | Point-to-point, based on VLANs and static IP addresses | Flat network based on DNS and QoS |
| State | Each VM keeps state in memory and shared storage. | Processing is decoupled from the DB, backed by highly functional storage. |
| Monitoring | The VNF APL collects fault and performance information. | An outside function collects fault and performance information. |
| Configuration | The EMS and VNF manage a large amount of configuration to provide service. | The VNF APL gets its configuration from an outside function. |
| Operation | White-box operation using static design, e.g. IP address, configuration, status, faults, and performance | Black-box operation using DHCP and outside functions |
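The "State" row of the table can be illustrated with a minimal sketch in which workers keep no session state of their own and read it from a shared store instead. `ExternalStore` is a hypothetical in-memory stand-in for an external database, and `StatelessWorker` is likewise an illustrative name, not part of any VNF or Tacker API.

```python
class ExternalStore:
    """In-memory stand-in for a database shared by all workers (hypothetical)."""

    def __init__(self):
        self._data = {}

    def get(self, key, default=None):
        return self._data.get(key, default)

    def put(self, key, value):
        self._data[key] = value


class StatelessWorker:
    """Processes requests without holding any session state locally."""

    def __init__(self, name, store):
        self.name = name
        self.store = store

    def handle(self, session_id):
        # Read, update, and write back the per-session counter in the store.
        count = self.store.get(session_id, 0) + 1
        self.store.put(session_id, count)
        return count


store = ExternalStore()
worker_a = StatelessWorker("a", store)
worker_b = StatelessWorker("b", store)

worker_a.handle("session-1")
worker_a.handle("session-1")
# worker_a can now be replaced; worker_b continues the same session.
print(worker_b.handle("session-1"))
```

Because the counter lives in the store, any replica can serve the next request, which is what allows containers to be restarted or rescheduled without losing session state. A real store would also need to handle concurrent updates, which this sketch ignores.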

Use Cases

vCE

The Tacker API can be used by an SP's OSS/BSS or an NFV Orchestrator to deploy VNFs in the SP's network to deliver agile network services for remote customer networks.

vCPE

The Tacker API can be used by an SP's OSS/BSS or an NFV Orchestrator to manage OpenStack-enabled remote CPE devices and deploy VNFs that provide network services locally at the customer site.

vPE

The Tacker API can be used by an SP's OSS/BSS or an NFV Orchestrator to deploy VNFs within the SP's network to virtualize existing network services into a Virtual Function.

ETSI NFV SPECS

TOSCA for NFV

Tacker uses TOSCA for VNF meta-data definition. Within TOSCA, Tacker uses the NFV profile schema.

- TOSCA YAML

- TOSCA NFV Profile:

- Latest spec is available here: https://www.oasis-open.org/committees/document.php?document_id=56577&wg_abbrev=tosca

- Current latest (as of Oct 2015) is: https://www.oasis-open.org/committees/download.php/56577/tosca-nfv-v1.0-wd02-rev03.doc
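As a rough illustration of what a TOSCA-based VNF descriptor contains, the sketch below holds a small VNFD as a Python dictionary (the shape a TOSCA YAML file would have once parsed) and checks that every requirement points at a node that exists. The node type names and property values are assumptions based on commonly seen legacy Tacker samples and should be verified against the Tacker documentation; `dangling_references` is a hypothetical helper, not a Tacker or TOSCA parser function.

```python
# A VNFD as it might look after parsing TOSCA YAML (all values are assumptions).
VNFD = {
    "tosca_definitions_version": "tosca_simple_profile_for_nfv_1_0_0",
    "description": "Illustrative single-VDU VNF",
    "topology_template": {
        "node_templates": {
            "VDU1": {
                "type": "tosca.nodes.nfv.VDU.Tacker",
                "properties": {"image": "example-image", "flavor": "m1.tiny"},
            },
            "CP1": {
                "type": "tosca.nodes.nfv.CP.Tacker",
                "properties": {"management": True},
                "requirements": [
                    {"virtualLink": {"node": "VL1"}},
                    {"virtualBinding": {"node": "VDU1"}},
                ],
            },
            "VL1": {
                "type": "tosca.nodes.nfv.VL",
                "properties": {"network_name": "net_mgmt", "vendor": "Tacker"},
            },
        }
    },
}


def dangling_references(vnfd):
    """Return (node, requirement, target) triples whose target node is missing."""
    nodes = vnfd["topology_template"]["node_templates"]
    missing = []
    for name, node in nodes.items():
        for requirement in node.get("requirements", []):
            for req_name, target in requirement.items():
                if target["node"] not in nodes:
                    missing.append((name, req_name, target["node"]))
    return missing


print(dangling_references(VNFD))
```

The descriptor ties a connection point (CP1) to both a compute unit (VDU1) and a virtual link (VL1); a check like this catches typos in those references before a template is onboarded.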

Tacker + SFC (Service Function Chaining)

The proposal to enable SFC for Tacker is captured in these slides:

https://docs.google.com/presentation/d/18AGaiysVgHOd_fIHVpObMO7zUkMjJGOQ98CUwkxU1xo/edit?usp=sharing

Weekly Meetings and Mailing List

IRC Channel: #tacker

Meetings: Tuesday 08:00 UTC [Weekly] @ #openstack-meeting

Tags: [NFV] [Tacker]

Meeting Minutes

http://eavesdrop.openstack.org/meetings/tacker/

Repos

Reviews

Tacker Open Code and Spec Reviews

Bugs

https://bugs.launchpad.net/tacker

Points of contact

- Launchpad project page: https://launchpad.net/tacker

- IRC meeting information: https://wiki.openstack.org/wiki/Meetings/Tacker

- IRC channel on Freenode:

#tacker

Quick Links

| OpenStack Summit Tacker Talks | https://www.openstack.org/videos/search?search=Tacker |

| Team | Team Members |

| Tacker API doc | https://developer.openstack.org/api-ref/nfv-orchestration/ |

| Tacker Client doc | https://docs.openstack.org/ocata/cli-reference/tacker.html |

| Tacker Installation | https://wiki.openstack.org/wiki/Tacker/Installation |