Difference between revisions of "Neutron/ServiceInsertionAndChaining"


Revision as of 23:26, 21 January 2016


Contributors

- Cathy Zhang (Project Lead)

- Louis Fourie

- Paul Carver

- Vikram

- Mohankumar

- Rao Fei

- Xiaodong Wang

- Ramanjaneya Reddy Palleti

- Stephen Wong

- Igor Duarte Cardoso

- Prithiv

- Akihiro Motoki

- Swaminathan Vasudevan

Weekly IRC Project Meeting Information

Every Thursday 1700 UTC on #openstack-meeting-4 https://wiki.openstack.org/wiki/Meetings/ServiceFunctionChainingMeeting

Overview

Service Function Chaining (SFC) is a mechanism for overriding the basic destination-based forwarding that is typical of IP networks. It is conceptually related to Policy Based Routing in physical networks, but it is typically thought of as a Software Defined Networking technology. It is often used in conjunction with security functions, although it may be used for a broader range of features. Fundamentally, SFC is the ability to steer network packet flows through a network via a path other than the one that would be chosen by routing table lookups on the packet's destination IP address. It is most commonly used in conjunction with Network Function Virtualization, when recreating in a virtual environment a series of network functions that would traditionally have been implemented as a collection of physical network devices connected in series by cables.

A very simple example of a service chain would be one that forces all traffic from point A to point B to go through a firewall, even though the firewall is not literally between points A and B from a routing table perspective.

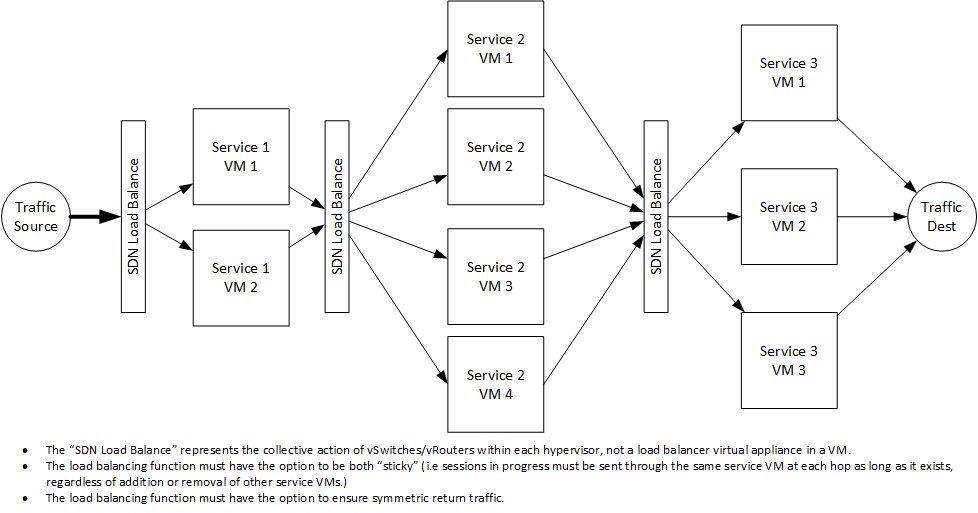

A more complex example is an ordered series of functions, each implemented in multiple VMs, such that traffic must flow through one VM at each hop in the chain but the network uses a hashing algorithm to distribute different flows across multiple VMs at each hop.
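The hash-based distribution described above can be sketched in a few lines of shell. This is only a conceptual illustration, not how the Open vSwitch data path does it: the helper name `pick_hop_instance`, the flow key format, and the use of `cksum` as the hash are all assumptions made for the example.

```shell
#!/bin/sh
# pick_hop_instance FLOW_KEY INSTANCE...
# Hypothetical helper: maps a flow key (for example
# "src_ip:src_port-dst_ip:dst_port-proto") to one of the instances at a hop.
# The same key always selects the same instance, so a flow stays on one VM.
pick_hop_instance() {
    flow_key=$1
    shift
    count=$#
    [ "$count" -gt 0 ] || return 1
    # cksum prints "<crc> <byte count>"; keep only the CRC as the hash value.
    crc=$(printf '%s' "$flow_key" | cksum | cut -d ' ' -f 1)
    # Drop (crc mod count) instances from the front; the next one is the pick.
    shift $(( crc % count ))
    printf '%s\n' "$1"
}
```

Calling `pick_hop_instance "10.0.0.1:1234-10.0.0.2:80-tcp" vm1 vm2 vm3` repeatedly returns the same VM name each time, while different flow keys spread across the three names.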

An API and initial reference implementation of Service Function Chaining is being developed for Neutron during the Liberty cycle.

- The API specification may be found here: https://github.com/openstack/networking-sfc/blob/master/doc/source/api.rst

- The Blueprint for the overall effort may be found here: https://blueprints.launchpad.net/neutron/+spec/openstack-service-chain-framework

- The Blueprint for the initial API work may be found here: https://blueprints.launchpad.net/neutron/+spec/neutron-api-extension-for-service-chaining

- The reviews related to the initial API work may be found here: https://review.openstack.org/#/q/topic:networking-sfc,n,z

networking-sfc installation steps and testbed setup

If you have previous networking-sfc patches installed on your testbed, do the following to get the updated patch set:

1. Clean up networking-sfc

- cd /opt/stack

- rm -rf networking-sfc

2. Clone networking-sfc into a local repository

3. Get the unmerged networking-sfc code patches (missing db migration files, common driver manager, SFC OVS driver, OVS agent) into the local repository

- cd networking-sfc

- git fetch https://review.openstack.org/openstack/networking-sfc refs/changes/88/249488/17 && git merge FETCH_HEAD

(or the latest patch set, if there is one newer than the PS17 shown here; the final number in the ref is the patch set)

4. cd /opt/stack/networking-sfc and run "sudo python setup.py install"

5. cd ~/devstack/ and run unstack.sh and stack.sh
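The ref passed to `git fetch` in step 3 follows Gerrit's standard layout: `refs/changes/`, then the last two digits of the change number, then the change number, then the patch set. A small helper can build it when a newer patch set appears. The function name `gerrit_change_ref` is made up for this sketch.

```shell
#!/bin/sh
# gerrit_change_ref CHANGE_NUMBER PATCH_SET
# Hypothetical helper: prints the Gerrit ref for a change and patch set,
# i.e. refs/changes/<last two digits of change>/<change>/<patch set>.
gerrit_change_ref() {
    change=$1
    patch_set=$2
    # %02d zero-pads the shard, so change 1205 yields "05".
    printf 'refs/changes/%02d/%s/%s\n' "$(( change % 100 ))" "$change" "$patch_set"
}
```

For the change used on this page, `gerrit_change_ref 249488 17` prints `refs/changes/88/249488/17`, which can be passed to `git fetch https://review.openstack.org/openstack/networking-sfc` in place of the literal ref.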

If you have not installed networking-sfc on your testbed before, then do the following to get the patch code set

1. Install Ubuntu 14.04

- install git and configure user.email and user.name

- sudo apt-get install software-properties-common

- sudo add-apt-repository cloud-archive:liberty

2. clone networking-sfc into local repository

- sudo mkdir /opt/stack

- sudo chown stack:stack /opt/stack

- cd /opt/stack/

- git clone git://git.openstack.org/openstack/networking-sfc.git

3. get networking-sfc unmerged code patches (add missing db migration files, Common driver manager, SFC OVS Driver, OVS agent) into local repository

- cd networking-sfc

- git fetch https://review.openstack.org/openstack/networking-sfc refs/changes/88/249488/17 && git merge FETCH_HEAD

(or the latest patch set, if there is one newer than the PS17 shown here; the final number in the ref is the patch set)

4. download devstack

- cd /opt/stack

- git clone git://git.openstack.org/openstack-dev/devstack.git -b stable/liberty

5. Copy local.conf into the devstack directory and add one of the following lines:

- enable_plugin networking-sfc git://git.openstack.org/openstack/networking-sfc stable/liberty

OR

- enable_plugin networking-sfc /opt/stack/networking-sfc

6. run stack.sh

- cd devstack

- ./stack.sh

If it reports errors, read the error messages and fix the underlying problems before running it again.

7. Install OVS 2.3.2 or OVS 2.4. Note that the OVS version should match its supported Linux kernel version in order for the OVS to work properly.

8. Run unstack.sh and stack.sh

- ./unstack.sh

- ./stack.sh
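Step 7 names OVS 2.3.2 and OVS 2.4 as the versions to install. The sketch below checks a version string against that list. It assumes that any 2.4.x point release counts as "OVS 2.4", and that the first line printed by `ovs-vsctl --version` ends with the version number (as in `ovs-vsctl (Open vSwitch) 2.4.0`). Both function names are hypothetical.

```shell
#!/bin/sh
# ovs_version_supported VERSION
# Returns 0 when VERSION is 2.3.2 or a 2.4 release (assumption: any 2.4.x).
ovs_version_supported() {
    case "$1" in
        2.3.2|2.4|2.4.*) return 0 ;;
        *) return 1 ;;
    esac
}

# ovs_version_from_banner
# Reads "ovs-vsctl --version" output on stdin and prints the last field of
# the first line, which is expected to be the version number.
ovs_version_from_banner() {
    head -n 1 | awk '{ print $NF }'
}
```

On a host with OVS installed, this could be used as `ovs_version_supported "$(ovs-vsctl --version | ovs_version_from_banner)" || echo "unexpected OVS version"`. It does not check the kernel compatibility mentioned in step 7.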

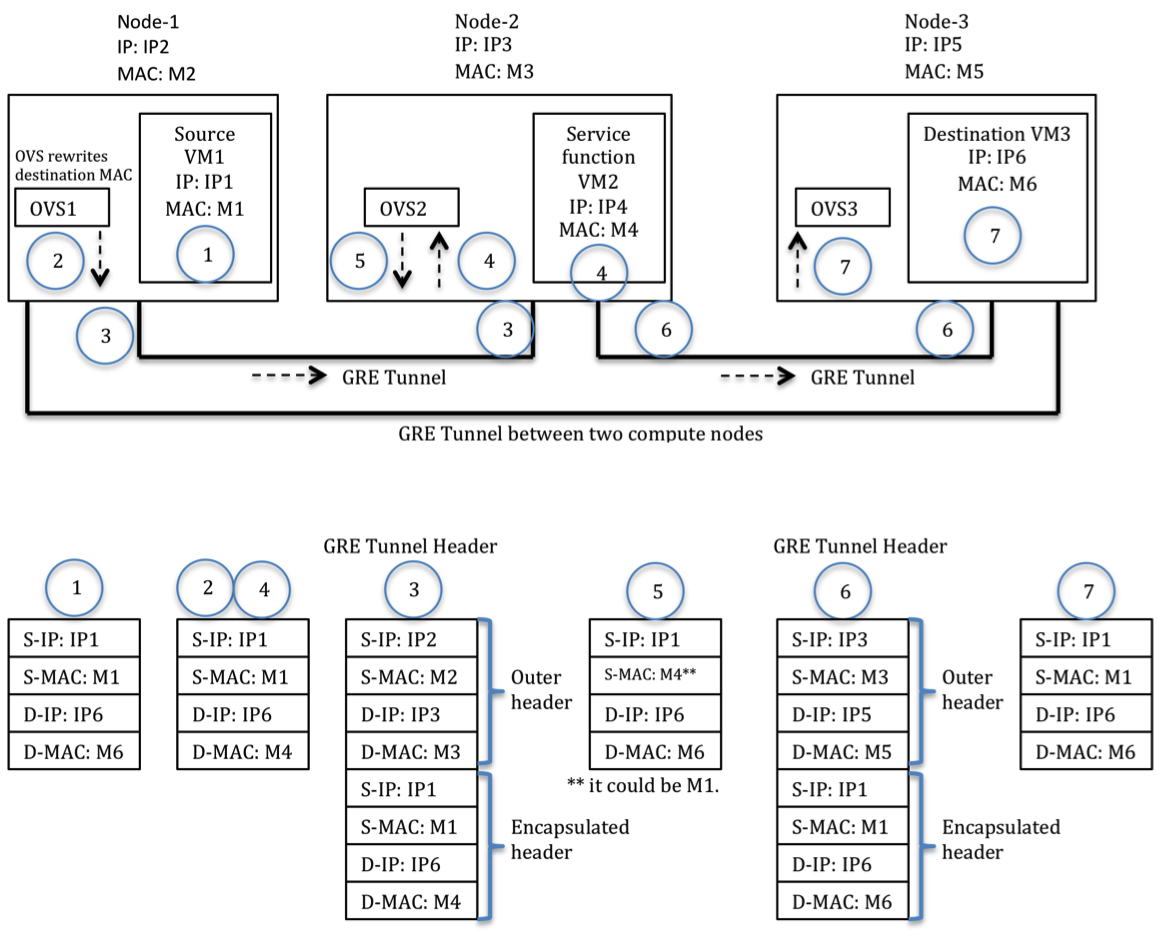

In our one-server testbed, all OpenStack components run on one physical server. We created three VMs: a source VM, a Service Function VM, and a destination VM.

Data Path Encapsulation Flow

The initial reference implementation will be based on programming Open vSwitch with flow table entries that override the default MAC-based forwarding and instead forward frames based on criteria defined via the Neutron SFC API. It will also be possible for third-party SDN implementations with Neutron integration and SFC capabilities (e.g. Contrail or Nuage) to program their respective forwarding planes based on the Neutron SFC API, but this depends on the respective vendors updating their SDN controller integration with Neutron.

Multi-Host Installation

Terminology:

- H1 – Physical Host 1 (Controller + Network + Compute)

- H2 – Physical Host 2 (Compute)

- H3 – Physical Host 3 (Compute)

- SRC – Source

- SF1 – Service Function 1

- SF2 – Service Function 2

- DST – Destination

SRC DST | | H1=============H2===============H3 | | SF1 SF2

Important Note:

Currently, with OVS 2.4.0, the kernel module drops MPLS-over-tunnel packets that hit the flood entry.

This can be worked around by enabling l2population.

Please see steps below for detailed setup instructions.

Step 1: Prepare H1 (Controller + Network + Compute)

Step 1.1:

local.conf for H1:

[[local|localrc]]

SERVICE_TOKEN=abc123

ADMIN_PASSWORD=abc123

MYSQL_PASSWORD=abc123

RABBIT_PASSWORD=abc123

SERVICE_PASSWORD=$ADMIN_PASSWORD

HOST_IP=10.145.90.160

SERVICE_HOST=10.145.90.160

SYSLOG=True

SYSLOG_HOST=$HOST_IP

SYSLOG_PORT=516

LOGFILE=$DEST/logs/stack.sh.log

LOGDAYS=2

disable_service tempest

RECLONE=no

PIP_UPGRADE=False

MULTI_HOST=TRUE

- Disable Nova Networking

disable_service n-net

- Disable Nova Compute

#disable_service n-cpu

- Neutron - Networking Service

enable_service q-svc

enable_service q-agt

enable_service q-dhcp

enable_service q-l3

enable_service q-meta

enable_service neutron

- Cinder

disable_service c-api

disable_service c-sch

disable_service c-vol

- Disable security groups

Q_USE_SECGROUP=False

LIBVIRT_FIREWALL_DRIVER=nova.virt.firewall.NoopFirewallDriver

enable_plugin networking-sfc /opt/stack/networking-sfc

Step 1.2: ./stack.sh from your /opt/stack/devstack

Step 1.3: Once devstack finishes, overwrite the key Neutron configuration files manually. The same settings can probably be applied directly through local.conf instead, but the procedure here edits the following files by hand:

- /etc/neutron/plugins/ml2/ml2_conf.ini

- /etc/neutron/l3_agent.ini

- /etc/neutron/dhcp_agent.ini

- /etc/neutron/dnsmasq-neutron.conf

/etc/neutron/plugins/ml2/ml2_conf.ini

[ml2]

tenant_network_types = vxlan

extension_drivers = port_security

type_drivers = local,flat,vlan,gre,vxlan

mechanism_drivers = openvswitch,linuxbridge,l2population

[ml2_type_flat]

flat_networks=public

[ml2_type_vxlan]

vni_ranges = 8192:100000

[securitygroup]

enable_security_group = False

enable_ipset = False

firewall_driver = neutron.agent.firewall.NoopFirewallDriver

[agent]

tunnel_types = vxlan

l2_population = True

arp_responder = True

root_helper_daemon = sudo /usr/local/bin/neutron-rootwrap-daemon /etc/neutron/rootwrap.conf

root_helper = sudo /usr/local/bin/neutron-rootwrap /etc/neutron/rootwrap.conf

[ovs]

datapath_type = system

tunnel_bridge = br-tun

bridge_mappings = public:br-ex

local_ip = 192.168.2.160

/etc/neutron/l3_agent.ini

[DEFAULT]

l3_agent_manager = neutron.agent.l3_agent.L3NATAgentWithStateReport

external_network_bridge = br-ex

interface_driver = neutron.agent.linux.interface.OVSInterfaceDriver

ovs_use_veth = False

use_namespaces = True

debug = True

verbose = True

[AGENT]

root_helper_daemon = sudo /usr/local/bin/neutron-rootwrap-daemon /etc/neutron/rootwrap.conf

root_helper = sudo /usr/local/bin/neutron-rootwrap /etc/neutron/rootwrap.conf

/etc/neutron/dhcp_agent.ini

[DEFAULT]

dhcp_agent_manager = neutron.agent.dhcp_agent.DhcpAgentWithStateReport

interface_driver = neutron.agent.linux.interface.OVSInterfaceDriver

ovs_use_veth = False

use_namespaces = True

debug = True

verbose = True

dnsmasq_config_file = /etc/neutron/dnsmasq-neutron.conf

[AGENT]

root_helper_daemon = sudo /usr/local/bin/neutron-rootwrap-daemon /etc/neutron/rootwrap.conf

root_helper = sudo /usr/local/bin/neutron-rootwrap /etc/neutron/rootwrap.conf

/etc/neutron/dnsmasq-neutron.conf

dhcp-option-force=26,1450
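DHCP option 26 is the interface MTU, so the line above tells guests to use an MTU of 1450. The page does not say why 1450 was chosen. A likely reading is a 1500-byte underlay minus the 50 bytes of VXLAN-over-IPv4 encapsulation, and the sketch below works through that arithmetic. The 1500-byte underlay and both function names are assumptions.

```shell
#!/bin/sh
# vxlan_guest_mtu UNDERLAY_MTU
# Subtracts what VXLAN over IPv4 adds inside the underlay IP packet:
# outer IPv4 20 + UDP 8 + VXLAN 8 + inner Ethernet 14 = 50 bytes.
vxlan_guest_mtu() {
    overhead=$(( 20 + 8 + 8 + 14 ))
    printf '%d\n' "$(( $1 - overhead ))"
}

# dnsmasq_mtu_option UNDERLAY_MTU
# Prints the dnsmasq line that forces DHCP option 26 (interface MTU).
dnsmasq_mtu_option() {
    printf 'dhcp-option-force=26,%d\n' "$(vxlan_guest_mtu "$1")"
}
```

With a 1500-byte underlay, `dnsmasq_mtu_option 1500` reproduces the line used on this page. An underlay with a different MTU would need a correspondingly different value.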

Step 1.4: After modifying the above files, restart the following services: q-svc, q-agt, q-dhcp, and q-l3.

Your Host1 (controller + network + compute) is now ready for multi-node operation.
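Step 1.3 edits the Neutron files by hand after stack.sh finishes. DevStack's local.conf also supports `[[post-config|...]]` meta-sections, which merge settings into a service configuration file while stack.sh runs. The sketch below appends such a section for a few of the ML2 agent settings. It has not been tested against this setup, so treat it as a starting point. The function name `append_ml2_post_config` is hypothetical, and it relies on DevStack's `Q_PLUGIN_CONF_FILE` variable holding the ML2 config path.

```shell
#!/bin/sh
# append_ml2_post_config LOCAL_CONF LOCAL_IP
# Hypothetical helper: appends a post-config meta-section to LOCAL_CONF so
# that devstack writes the tunnel settings itself during stack.sh.
append_ml2_post_config() {
    local_conf=$1
    local_ip=$2
    {
        # Keep $Q_PLUGIN_CONF_FILE literal; devstack expands it at run time.
        printf '%s\n' '[[post-config|/$Q_PLUGIN_CONF_FILE]]'
        printf '%s\n' '[agent]' 'tunnel_types = vxlan' \
            'l2_population = True' 'arp_responder = True'
        printf '%s\n' '[ovs]' "local_ip = $local_ip"
    } >> "$local_conf"
}
```

For H1 this would be called as `append_ml2_post_config /opt/stack/devstack/local.conf 192.168.2.160` before running stack.sh. Settings that DevStack itself writes later in the run may still override values set this way, so the resulting ml2_conf.ini should be compared against the listing in Step 1.3.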

Step 2: Prepare H2 (Compute)

Step 2.1:

local.conf for H2 (the password values must match those set on H1, because H2 connects to the database and message queue running there):

SERVICE_TOKEN=cisco123123

ADMIN_PASSWORD=cisco123123

MYSQL_PASSWORD=cisco123123

RABBIT_PASSWORD=cisco123123

DATABASE_PASSWORD=cisco123123

SERVICE_PASSWORD=$ADMIN_PASSWORD

DATABASE_TYPE=mysql

HOST_IP=10.145.90.166

SERVICE_HOST=10.145.90.160

SYSLOG=True

SYSLOG_HOST=$HOST_IP

SYSLOG_PORT=516

MYSQL_HOST=$SERVICE_HOST

RABBIT_HOST=$SERVICE_HOST

Q_HOST=$SERVICE_HOST

GLANCE_HOSTPORT=$SERVICE_HOST:9292

NOVA_VNC_ENABLED=True

NOVNCPROXY_URL="http://$SERVICE_HOST:6080/vnc_auto.html"

VNCSERVER_LISTEN=$HOST_IP

VNCSERVER_PROXYCLIENT_ADDRESS=$VNCSERVER_LISTEN

LOGFILE=$DEST/logs/stack.sh.log

LOGDAYS=2

disable_service tempest

RECLONE=no

PIP_UPGRADE=False

MULTI_HOST=TRUE

- Disable Nova Networking

disable_service n-net

disable_service neutron

- Neutron - Networking Service

ENABLED_SERVICES=n-cpu,q-agt

- Disable security groups

Q_USE_SECGROUP=False

LIBVIRT_FIREWALL_DRIVER=nova.virt.firewall.NoopFirewallDriver

enable_plugin networking-sfc /opt/stack/networking-sfc

Step 2.2: ./stack.sh from your /opt/stack/devstack

Step 2.3: Overwrite the /etc/neutron/plugins/ml2/ml2_conf.ini as follows

/etc/neutron/plugins/ml2/ml2_conf.ini

[ml2]

tenant_network_types = vxlan

extension_drivers = port_security

type_drivers = local,flat,vlan,gre,vxlan

mechanism_drivers = openvswitch,linuxbridge,l2population

[ml2_type_vxlan]

vni_ranges = 8192:100000

[securitygroup]

enable_security_group = True

enable_ipset = False

#firewall_driver = neutron.agent.linux.iptables_firewall.OVSHybridIptablesFirewallDriver

firewall_driver = neutron.agent.firewall.NoopFirewallDriver

[agent]

tunnel_types = vxlan

root_helper_daemon = sudo /usr/local/bin/neutron-rootwrap-daemon /etc/neutron/rootwrap.conf

root_helper = sudo /usr/local/bin/neutron-rootwrap /etc/neutron/rootwrap.conf

l2_population = True

arp_responder = True

[ovs]

datapath_type = system

tunnel_bridge = br-tun

local_ip = 192.168.2.166

Step 2.4: After modifying the above file, restart the q-agt service.

Step 3: Prepare H3 (Compute) in the same way as H2, using H3's own addresses for HOST_IP and local_ip.

Step 4: Your 3-node multi-host setup is now ready to be configured for service chaining.
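Before configuring service chaining, it may be worth confirming on each host that the l2population workaround from the Important Note is actually in place. The sketch below reads ml2_conf.ini and checks the two settings used on this page: `l2population` listed in `mechanism_drivers` under `[ml2]`, and `l2_population = True` under `[agent]`. The helper names `ini_get` and `l2pop_enabled` are made up for this example, and the parser only handles simple `key = value` lines.

```shell
#!/bin/sh
# ini_get FILE SECTION KEY
# Prints the value of KEY inside [SECTION], or nothing if it is absent.
ini_get() {
    awk -v section="[$2]" -v key="$3" '
        /^[ \t]*\[/ { gsub(/[ \t]/, ""); current = $0; next }
        current == section {
            eq = index($0, "=")
            if (eq == 0) next
            name = substr($0, 1, eq - 1)
            value = substr($0, eq + 1)
            gsub(/^[ \t]+|[ \t]+$/, "", name)
            gsub(/^[ \t]+|[ \t]+$/, "", value)
            if (name == key) { print value; exit }
        }
    ' "$1"
}

# l2pop_enabled FILE
# Returns 0 when FILE enables l2population in both [ml2] and [agent].
l2pop_enabled() {
    drivers=$(ini_get "$1" ml2 mechanism_drivers | tr -d ' ')
    flag=$(ini_get "$1" agent l2_population)
    case ",$drivers," in
        *,l2population,*) ;;
        *) return 1 ;;
    esac
    [ "$flag" = "True" ] || [ "$flag" = "true" ]
}
```

Running `l2pop_enabled /etc/neutron/plugins/ml2/ml2_conf.ini` on H1, H2, and H3 should succeed on all three. This only inspects the file, so the agents still need to have been restarted after the edit.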