PeriodicSecurityChecks

Design specification

Introduction

The decision about whether a particular node belongs in the trusted pool is made by performing security checks against the node. If the node passes the check, it is automatically moved to the trusted pool (if it is not already there); if it fails the check, it is removed from the trusted pool.

Currently only one type of security check is implemented in OpenStack: static integrity checks performed by the OpenAttestation component using TPM/IMA technology. Moreover, OpenAttestation checks each node only once, when the node boots.

Our project has two main high-level goals:

- Extend OpenStack so that the OpenStack administrator can add any custom security checks against the computing nodes, not only OpenAttestation checks. This gives the administrator more flexibility in defining the criteria that decide which nodes are trusted and which are not.

- Provide a common interface that a check must implement to be pluggable in the sense of the first goal. An example implementation of this interface should be provided, which developers can reuse to implement their own checks.

The following components make up the core functionality of our system. This is a high-level description refined from the objectives listed above; the layout of the components follows naturally from the existing OpenStack architecture and our high-level objectives.

- Nova Scheduler Extension

Nova is the core OpenStack component responsible for managing VM instances and computing pools. One of our goals is to develop an extension for this component that schedules and triggers the execution of custom security checks against the computing nodes. This extension should communicate with the Horizon Extension to receive the new checks added by the administrator, as well as the scheduling profiles the administrator selects, so that it can actually schedule the necessary checks.

- Security Checks Interface

We aim to simplify the development of new security checks. For this purpose we will create a common interface that every new check must implement to be pluggable into the Nova Extension. We also plan to provide an example check implementation (adapting OpenAttestation) that other developers can copy when implementing their own checks.
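To make the idea of a common check interface concrete, here is a minimal Python sketch. The names `SecurityCheck`, `check()`, `AlwaysTrustedCheck`, `DenyListCheck`, and `run_checks()` are hypothetical and not part of Nova, and the policy that a node is trusted only if it passes every configured check is an assumption made for illustration.

```python
from abc import ABC, abstractmethod


class SecurityCheck(ABC):
    """Hypothetical common interface that every pluggable security check implements."""

    # Unique name the administrator uses to refer to the check (assumed field).
    name = "unnamed"

    @abstractmethod
    def check(self, host: str) -> bool:
        """Run the check against one computing node; True means the node passed."""


class AlwaysTrustedCheck(SecurityCheck):
    """Trivial example check, usable as a template for real checks."""

    name = "always_trusted"

    def check(self, host: str) -> bool:
        return True


class DenyListCheck(SecurityCheck):
    """Example check that fails any host on an administrator-supplied deny list."""

    name = "deny_list"

    def __init__(self, denied_hosts):
        self.denied_hosts = set(denied_hosts)

    def check(self, host: str) -> bool:
        return host not in self.denied_hosts


def run_checks(checks, host):
    """Assumed policy: a node is trusted only if it passes every configured check."""
    return all(c.check(host) for c in checks)
```

With `checks = [AlwaysTrustedCheck(), DenyListCheck(["node-2"])]`, `run_checks(checks, "node-1")` is `True` and `run_checks(checks, "node-2")` is `False`. Using an abstract base class means a check that forgets to implement `check()` fails at instantiation time rather than when the scheduler first runs it.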

System Architecture

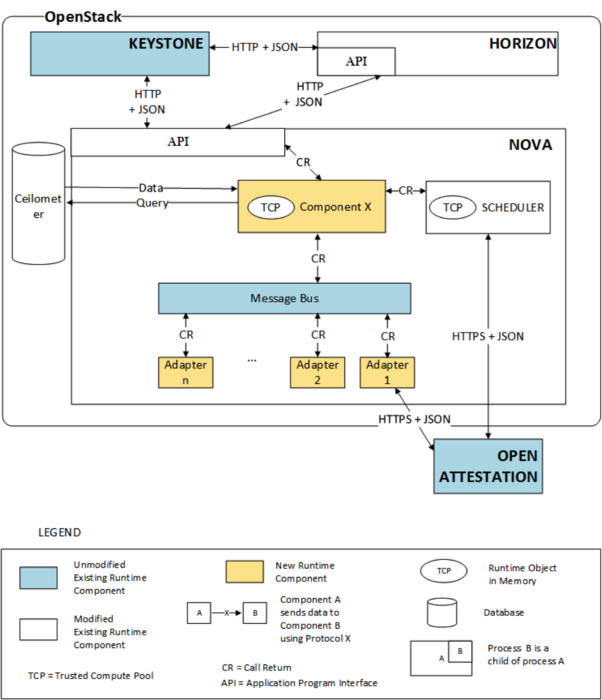

A dynamic view of the OpenStack components involved in the described operations is presented in Figure 1.

Figure 1. Dynamic view of OpenStack components involved

The new component will serve as an extension of the Trusted Computing Pool maintained inside the Nova Scheduler. To achieve this, only one file in Nova needs to change: "trusted_filter.py". The new component will act as an interface between the checks scheduled by the user and the original Trusted Computing Pool. The results of the performed checks will be stored in Ceilometer and will directly affect only the new component's own Trusted Computing Pool, without touching the original Trusted Computing Pool inside the Nova Scheduler. After a set timeout, the Trusted Computing Pool inside the Nova Scheduler is updated from the new component's Trusted Computing Pool. Conversely, whenever an action is performed on the Trusted Computing Pool inside the Nova Scheduler, the same update is applied to the new component's Trusted Computing Pool. This design prevents the new component from affecting current OpenStack operations and keeps the Trusted Computing Pool available even if many checks are scheduled or the new component fails.
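The two-pool arrangement can be sketched as a "shadow" pool that absorbs check results and is periodically copied into Nova's pool. This is a minimal illustration under stated assumptions: Nova's pool is modelled as a plain Python `set` of host names, `ShadowTrustedPool` and its methods are hypothetical names, and the periodic timer that would call `sync_to()` is left out.

```python
import threading


class ShadowTrustedPool:
    """Hypothetical sketch of the new component's own Trusted Computing Pool.

    Check results only ever modify this shadow copy; sync_to() pushes its
    state into Nova's pool, which in the design happens after a timeout.
    """

    def __init__(self):
        self._lock = threading.Lock()  # checks and syncs may run in different threads
        self._trusted = set()

    def record_result(self, host, passed):
        # A passing node joins the pool; a failing node is removed from it.
        with self._lock:
            if passed:
                self._trusted.add(host)
            else:
                self._trusted.discard(host)

    def mirror_nova_action(self, host, trusted):
        # Changes made directly to Nova's pool are replayed on the shadow pool.
        self.record_result(host, trusted)

    def sync_to(self, nova_pool):
        # Replace the contents of Nova's pool (assumed to be a set) with a snapshot.
        with self._lock:
            snapshot = set(self._trusted)
        nova_pool.clear()
        nova_pool.update(snapshot)
```

Because Nova's pool changes only inside `sync_to()`, a burst of check results or a crash of the new component leaves Nova with its last synchronized state instead of a half-updated or unavailable pool.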

System Design

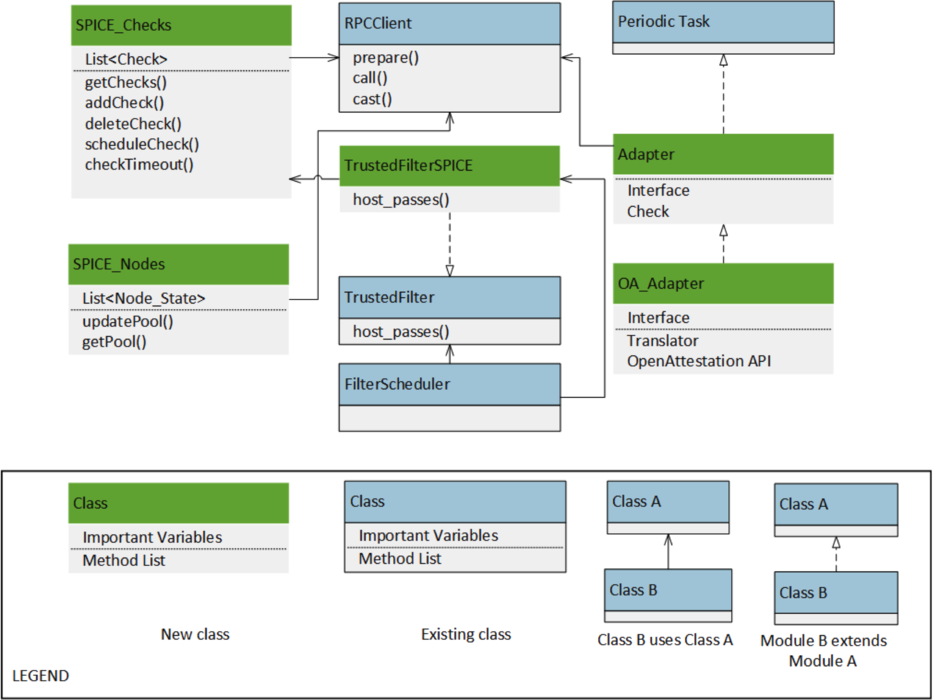

A static view of the modules in Nova is presented in Figure 2.

Figure 2. Static view of modules in Nova

The part of the system that resides in Nova has three responsibilities: maintaining the list of nodes and their trust state, maintaining the list of checks and their schedule, and performing the checks. We therefore introduced three classes: SPICE_Checks, SPICE_Nodes, and Adapter. They communicate with each other over the Message Bus; the data flow between them is described in more detail in the dynamic view.

Dividing the functionality into three classes by responsibility makes each class highly cohesive. The decision to use the Message Bus, described earlier, provides loose coupling between the static modules. Together, these structures promote maintainability and modifiability.

The SPICE_Checks class is responsible for maintaining the list of checks, their schedule, and their timeouts.

The SPICE_Nodes class is responsible for maintaining the list of nodes and their trust state.
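The division of state between SPICE_Checks and SPICE_Nodes can be illustrated with a small in-memory sketch. The field names (`interval_s`, `timeout_s`, `last_run`), the method names, and the rule that a node is trusted only when at least one check has run and every recorded check passed are all assumptions for illustration; the message-bus wiring between the classes is omitted.

```python
from dataclasses import dataclass


@dataclass
class ScheduledCheck:
    name: str
    interval_s: float      # how often the check should run (assumed field)
    timeout_s: float       # how long a single run may take (assumed field)
    last_run: float = 0.0  # timestamp of the last run; 0 means never


class SPICE_Checks:
    """Sketch: list of checks, their schedule, and their timeouts."""

    def __init__(self):
        self._checks = {}

    def add(self, check: ScheduledCheck):
        self._checks[check.name] = check

    def due(self, now: float):
        """Names of checks whose interval has elapsed since their last run."""
        return sorted(c.name for c in self._checks.values()
                      if now - c.last_run >= c.interval_s)

    def mark_run(self, name: str, now: float):
        self._checks[name].last_run = now


class SPICE_Nodes:
    """Sketch: list of nodes and their trust state."""

    def __init__(self):
        self._state = {}  # host -> {check name: passed?}

    def update(self, host: str, check_name: str, passed: bool):
        self._state.setdefault(host, {})[check_name] = passed

    def is_trusted(self, host: str) -> bool:
        # Assumed policy: at least one result recorded and all results passed.
        results = self._state.get(host)
        return bool(results) and all(results.values())
```

Keeping the schedule and the trust state in separate objects mirrors the split of responsibilities described above: the scheduler side never needs to know how trust is derived, and the node side never needs to know when checks run.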

The RPCClient class (https://wiki.openstack.org/wiki/Oslo/Messaging) is responsible for performing remote procedure calls in a client-server fashion over the message bus, which is already part of OpenStack. RabbitMQ is typically used as the broker that lets OpenStack components communicate in a loosely coupled fashion. Using the message bus brings the following benefits:

- Decoupling between client and server (for example, the client does not need to know where the server is).

- Asynchronous communication between client and server (for example, the server does not need to be running at the time of the remote call).
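The two benefits listed above can be demonstrated with a toy in-process bus built on Python's standard `queue` module. This is explicitly a stand-in, not oslo.messaging: in OpenStack these roles are played by oslo.messaging's `RPCClient` (whose `cast()` is fire-and-forget and whose `call()` waits for a reply) and an RPC server that dispatches messages to endpoint objects. `InProcessBus`, `NodesEndpoint`, and `report_result` are hypothetical names.

```python
import queue
from collections import defaultdict


class InProcessBus:
    """Toy stand-in for the OpenStack message bus (not oslo.messaging itself).

    Messages are addressed to a topic rather than to a server, and they wait
    in a queue until a consumer drains them.
    """

    def __init__(self):
        self._queues = defaultdict(queue.Queue)

    def cast(self, topic, method, **kwargs):
        # Fire-and-forget: the sender neither knows about nor waits for a consumer.
        self._queues[topic].put((method, kwargs))

    def consume(self, topic, endpoint):
        # Deliver every queued message to endpoint.<method>(**kwargs).
        q = self._queues[topic]
        delivered = 0
        while True:
            try:
                method, kwargs = q.get_nowait()
            except queue.Empty:
                return delivered
            getattr(endpoint, method)(**kwargs)
            delivered += 1


class NodesEndpoint:
    """Hypothetical server-side endpoint, e.g. the SPICE_Nodes side."""

    def __init__(self):
        self.results = []

    def report_result(self, host, check, passed):
        self.results.append((host, check, passed))
```

The client only names a topic (`bus.cast("spice_nodes", "report_result", host="node-1", check="oa", passed=True)`), which shows location decoupling, and the message survives until an endpoint is attached and `consume()` runs, which shows asynchronous delivery.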

Periodic Task is a utility class that is a common part of OpenStack across different services.

Adapter is an abstract class that extends Periodic Task and adds two layers: an interface to the Message Bus and the check functions.
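The layering of Adapter on top of Periodic Task can be sketched as follows. `PeriodicTask` here is a minimal stand-in that only mimics run-at-most-every-N-seconds behaviour; OpenStack's real helper (the Oslo periodic_task module) works with a decorator and is not reproduced. The `publish` callable represents the Message Bus layer (for example, a wrapper around an RPC `cast`), and all method and parameter names are hypothetical.

```python
from abc import ABC, abstractmethod


class PeriodicTask:
    """Minimal stand-in for OpenStack's periodic-task helper (not the real one)."""

    def __init__(self, spacing_s):
        self.spacing_s = spacing_s
        self._last_run = None

    def run_periodic_tasks(self, now):
        # Run the task if it has never run or its spacing has elapsed.
        if self._last_run is None or now - self._last_run >= self.spacing_s:
            self._last_run = now
            self.periodic_task()
            return True
        return False

    def periodic_task(self):
        raise NotImplementedError


class Adapter(PeriodicTask, ABC):
    """Sketch of the abstract Adapter: periodic scheduling, bus interface, check."""

    def __init__(self, spacing_s, hosts, publish):
        super().__init__(spacing_s)
        self.hosts = list(hosts)
        self.publish = publish  # Message Bus layer, e.g. a wrapper around cast()

    @abstractmethod
    def check(self, host) -> bool:
        """Check layer: implemented by concrete adapters such as OA_Adapter."""

    def periodic_task(self):
        # Run the check on every host and publish each result on the bus.
        for host in self.hosts:
            self.publish(host=host, check=type(self).__name__,
                         passed=self.check(host))
```

A concrete adapter only has to implement `check()`; the scheduling and the publishing of results are inherited, which is what lets third-party checks be plugged in without touching the rest of the system.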

OA_Adapter is an implementation of the abstract Adapter class; it is responsible for using the OpenAttestation service as a check in our system.
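The core of OA_Adapter is turning an OpenAttestation attestation result into per-host pass/fail values. The standalone sketch below assumes the response shape that Nova's TrustedFilter reads from the attestation service's PollHosts call, a JSON object with a `hosts` list whose entries carry `host_name` and `trust_lvl` (`"trusted"` or `"untrusted"`); verify this against the deployed OpenAttestation version. The HTTP request itself is injected as a hypothetical `poll_hosts` callable, and the class does not inherit from Adapter so that the block runs on its own.

```python
import json


def parse_poll_hosts_response(body: str) -> dict:
    """Map host name -> passed? from an attestation response body.

    Assumed shape: {"hosts": [{"host_name": ..., "trust_lvl": "trusted", ...}]}
    """
    data = json.loads(body)
    return {entry["host_name"]: entry.get("trust_lvl") == "trusted"
            for entry in data.get("hosts", [])}


class OA_Adapter:
    """Standalone sketch; a real OA_Adapter would extend the abstract Adapter."""

    def __init__(self, poll_hosts):
        # poll_hosts(hosts) -> raw JSON text; a hypothetical injected HTTP call.
        self.poll_hosts = poll_hosts

    def check_all(self, hosts):
        results = parse_poll_hosts_response(self.poll_hosts(hosts))
        # Hosts missing from the response are treated as failing (conservative choice).
        return {h: results.get(h, False) for h in hosts}
```

Treating a host that the attestation service did not report as untrusted is a deliberate fail-closed choice: a silent attestation failure should never move a node into the trusted pool.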

The TrustedFilter class (http://docs.openstack.org/developer/nova/api/nova.scheduler.filters.trusted_filter.html) is responsible for supporting Trusted Computing Pools. This filter schedules tasks on a host only if the integrity (trust) of that host matches the trust requested in the `extra_specs` for the flavor. The `extra_specs` contain a key/value pair whose key is `trust`. The value of this pair (`trusted`/`untrusted`) must match the integrity of the host (obtained from the Attestation service) before the task can be scheduled on that host. Tasks are actually scheduled by the FilterScheduler class (http://docs.openstack.org/grizzly/openstack-compute/admin/content/filter-scheduler.html), which uses the configured filters to filter out "bad" machines.
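The interplay between the FilterScheduler and TrustedFilter described above can be sketched without Nova. In this illustration hosts are plain names rather than Nova's host-state objects, attested levels come from a dictionary instead of the Attestation service, and `TrustedFilterSketch` and `filter_hosts()` are hypothetical names. The flavor key `trust:trusted_host` is the scoped form of the `trust` key that Nova's implementation reads from `extra_specs`.

```python
class TrustedFilterSketch:
    """Mimics the TrustedFilter rule: requested trust must match the attested level."""

    # Scoped flavor key read by Nova's TrustedFilter.
    TRUST_KEY = "trust:trusted_host"

    def __init__(self, attested_levels):
        self.attested_levels = attested_levels  # host -> "trusted" / "untrusted"

    def host_passes(self, host, filter_properties):
        extra = filter_properties.get("instance_type", {}).get("extra_specs", {})
        requested = extra.get(self.TRUST_KEY)
        if not requested:
            return True  # the flavor does not ask for any trust level
        return self.attested_levels.get(host) == requested


def filter_hosts(hosts, filters, filter_properties):
    """Keep only the hosts that every configured filter accepts."""
    return [h for h in hosts
            if all(f.host_passes(h, filter_properties) for f in filters)]
```

For a flavor with `extra_specs = {"trust:trusted_host": "trusted"}`, only hosts attested as `"trusted"` survive; a flavor without the key leaves the host list unchanged by this filter.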

We will create our own filter class, TrustedFilterSPICE, which defines its own version of the host_passes() method that uses the results of our checks instead of relying only on OpenAttestation checks.
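A possible shape for TrustedFilterSPICE is sketched below: it keeps the `host_passes()` contract of the original filter but derives a host's trust level from SPICE check results instead of a single attestation lookup. The per-host results dictionary (as SPICE_Nodes might maintain it) and the rule "trusted only if at least one check ran and all passed" are assumptions; a real version would subclass Nova's filter base class and receive host-state objects rather than host names.

```python
class TrustedFilterSPICE:
    """Sketch: same host_passes() contract, but trust comes from SPICE check results."""

    TRUST_KEY = "trust:trusted_host"  # flavor key read by Nova's TrustedFilter

    def __init__(self, check_results):
        # host -> {check name: passed?}, e.g. as maintained by SPICE_Nodes (assumed)
        self.check_results = check_results

    def _level(self, host):
        results = self.check_results.get(host, {})
        # Assumed policy: trusted only if at least one check ran and all passed.
        return "trusted" if results and all(results.values()) else "untrusted"

    def host_passes(self, host, filter_properties):
        extra = filter_properties.get("instance_type", {}).get("extra_specs", {})
        requested = extra.get(self.TRUST_KEY)
        if not requested:
            return True  # the flavor does not request a trust level
        return self._level(host) == requested
```

Because only the source of the trust level changes, the FilterScheduler can use TrustedFilterSPICE in place of TrustedFilter without any change to flavors that already request `trusted` or `untrusted` hosts.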

Schedule

We plan to start development in June 2014 and, by the end of July, to implement the core scheduling functionality for the administrator, the framework for plugging in more checks, viewing of check results, and the integration of OpenAttestation [1] as the first plugin to our module. If these core functions are completed ahead of schedule, we have also planned some additional features, such as verification of plugged-in checks.

We aim to include our functionality in the Juno release, scheduled for October 16, 2014.

Testing

Our team will later provide a series of tests verifying that the new component works as intended. Performance testing will be carried out to verify that the performance quality attributes are satisfied.

Testing Setup Guide

This section will be completed as the module is developed.

Scope

Only the administrator can use the new component, so we place the responsibility for plugging in valid checks on the administrator.

References

[1] "OpenAttestation." OpenStack Wiki. N.p., n.d. Web. 23 May 2014. <https://wiki.openstack.org/wiki/OpenAttestation>.