Neutron/Networking-vSphere

Overview

OVSvApp Solution for ESX based deployments

A cloud operator who wants to run OpenStack on vSphere using only open source components currently has to rely on nova-network. There is no viable open source reference implementation for ESX deployments that lets the operator use the advanced networking capabilities that Neutron provides.

The OVSvApp solution addresses this gap. It gives cloud operators a Neutron-supported solution for vSphere deployments in the form of a service VM, called the OVSvApp VM, which steers the ESX tenant VMs' traffic through it. Customers can host VMs on ESX/ESXi hypervisors, with port groups created dynamically on a Distributed Virtual Switch or Virtual Standard Switch, and have their traffic steered through the OVSvApp VM. The OVSvApp VM provides the underlying VLAN and VXLAN infrastructure for communication between tenant VMs, as well as Security Group features based on OpenStack.

The value-add of this solution is faster deployment on ESX environments, together with minimal effort when adding new OpenStack features such as DVR, LBaaS and VPNaaS.

OVSvApp Benefits:

- Allows vendors to migrate the ESX workloads they have invested in to a cloud.

- Allows vendors to deploy ESX-based clouds with native OpenStack, with little or no learning curve.

- Allows vendors to leverage some of the advanced networking capabilities that Neutron provides.

- Removes the need to rely on nova-network (which is deprecated).

- Does not require special licenses from any vendor to deploy, run and manage.

- Aligned with the OpenStack Kilo release.

- Available upstream in OpenStack Neutron under the project “openstack/networking-vsphere”: https://github.com/openstack/networking-vsphere

Architecture of OVSvApp Solution

OVSvApp VM:

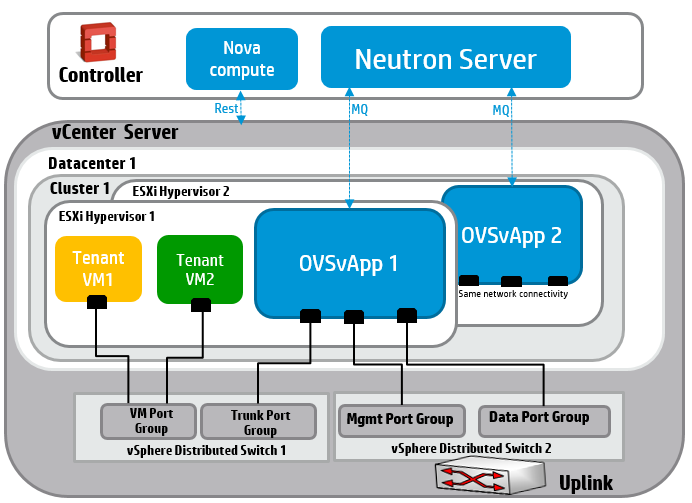

The OVSvApp solution comprises a service VM called the OVSvApp VM (shown in blue), hosted on each ESXi hypervisor within a cluster, and two vSphere Distributed Switches (VDS). The OVSvApp VM runs Ubuntu 12.04 LTS or later as its guest operating system and has Open vSwitch 2.1.0 or later installed. It also runs an agent called the OVSvApp agent.

For VLAN provisioning, two VDS per datacenter are required; for VXLAN, two VDS per cluster are required. The first VDS (named vSphere Distributed Switch 1) does not need any uplinks, so it has no external network connectivity, but it provides connectivity between the tenant VMs and the OVSvApp VM. Each tenant VM is associated with a port group (VLAN). The tenant VMs’ data traffic reaches the OVSvApp VM via their respective port groups and then through another port group, the Trunk Port group, with which the OVSvApp VM is associated. The Trunk Port group is defined with “VLAN type” set to “VLAN Trunking” and “VLAN trunk range” set to the VLAN ranges of the tenant VMs’ traffic, exclusive of the management VLAN as explained below.

The second VDS (named vSphere Distributed Switch 2) has one or two uplinks and provides management and data connectivity to the OVSvApp VM. The OVSvApp VM is also associated with two other port groups. The Management Port group is defined with “VLAN type” set to “None”, or to “VLAN” with a specific VLAN ID in “VLAN ID”, or to “VLAN Trunking” with “VLAN trunk range” set to the range of management VLANs. The Data Port group is defined with “VLAN type” set to “VLAN Trunking” and “VLAN trunk range” set to the VLAN ranges of the tenant VMs’ traffic, exclusive of the management VLAN. The management VLAN and the data VLANs can share the same uplink or use different uplinks, and those uplink ports can be part of the same VDS or of separate VDS.
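The requirement that the Trunk and Data port group ranges exclude the management VLAN can be checked mechanically before the port groups are created. The following is a minimal sketch and not part of networking-vsphere; the function name `trunk_ranges_exclude` and the VLAN numbers in the usage note are hypothetical.

```python
def trunk_ranges_exclude(trunk_ranges, management_vlans):
    """Return True if no management VLAN falls inside any trunk range.

    trunk_ranges: iterable of (low, high) inclusive VLAN ID pairs.
    management_vlans: iterable of VLAN IDs reserved for management.
    """
    trunk_ranges = list(trunk_ranges)
    for low, high in trunk_ranges:
        # 802.1Q VLAN IDs usable for traffic lie between 1 and 4094.
        if not (1 <= low <= high <= 4094):
            raise ValueError(f"invalid VLAN range: {low}-{high}")
    return not any(
        low <= vlan <= high
        for vlan in management_vlans
        for low, high in trunk_ranges
    )
```

For example, `trunk_ranges_exclude([(100, 200)], [10])` accepts the configuration, while a management VLAN of 150 would be rejected because it falls inside the tenant trunk range.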

Nova Compute:

The nova compute component is the nova-compute service for ESX. Only one instance of this service needs to run for the entire ESX deployment (unlike KVM, where the nova-compute service must run on every KVM host). This single instance can run either on the OpenStack controller node itself or on any other service node in your cloud. The nova-compute service includes the OVSvApp Nova VCDriver, which is customized for the OVSvApp solution.

Neutron Server:

The Neutron server provides tenant network and port information to the OVSvApp agent. It contains the thin OVSvApp ML2 mechanism driver.

Components of OVSvApp VM

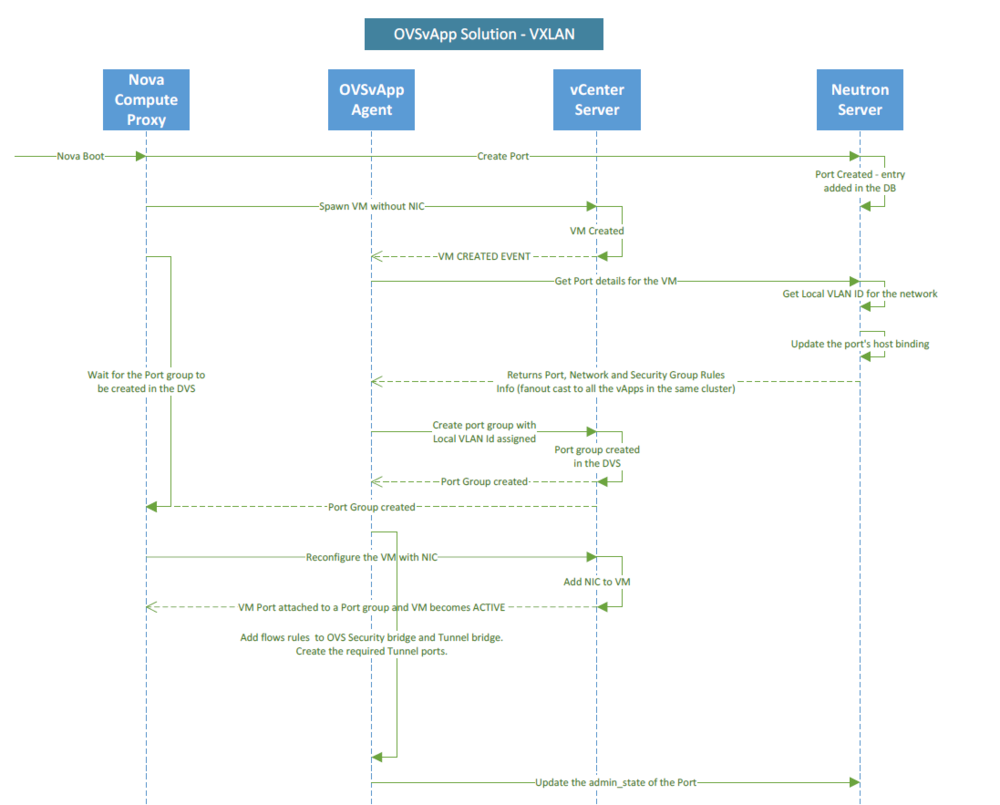

The OVSvApp VM runs the OVSvApp L2 agent, which waits for cluster events such as "VM_CREATE", "VM_DELETE" and "VM_UPDATE" from vCenter Server and acts on them. The OVSvApp agent also communicates with the Neutron server to obtain information such as the port details of each VM and the Security Group rules associated with each of its ports, and uses this information to program FLOWs into the Open vSwitch instance within the OVSvApp VM.
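The event-driven behaviour described here can be pictured as a dispatch table keyed by event name. The sketch below is illustrative only and is not the OVSvApp agent's code: the class `AgentSketch`, its methods, and the `fetch_ports` callable (a stand-in for a query to the Neutron server) are hypothetical, and "programming flows" is reduced to recording port IDs in a dictionary.

```python
class AgentSketch:
    """Toy model of an agent reacting to vCenter cluster events."""

    def __init__(self, fetch_ports):
        # fetch_ports(vm_id) stands in for asking Neutron for a VM's ports.
        self._fetch_ports = fetch_ports
        # vm_id -> port IDs for which flows are considered programmed.
        self.flows = {}
        self._handlers = {
            "VM_CREATE": self._refresh,
            "VM_UPDATE": self._refresh,
            "VM_DELETE": self._remove,
        }

    def handle(self, event_type, vm_id):
        """Dispatch one event; return False if the event type is unknown."""
        handler = self._handlers.get(event_type)
        if handler is None:
            return False
        handler(vm_id)
        return True

    def _refresh(self, vm_id):
        # Create and update both (re)load the port details for the VM.
        self.flows[vm_id] = list(self._fetch_ports(vm_id))

    def _remove(self, vm_id):
        # Deleting an unknown VM is treated as a no-op.
        self.flows.pop(vm_id, None)
```

A caller would construct it with any callable that returns port IDs, for example `AgentSketch(lambda vm_id: ["port-" + vm_id])`, and feed it events as they arrive.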

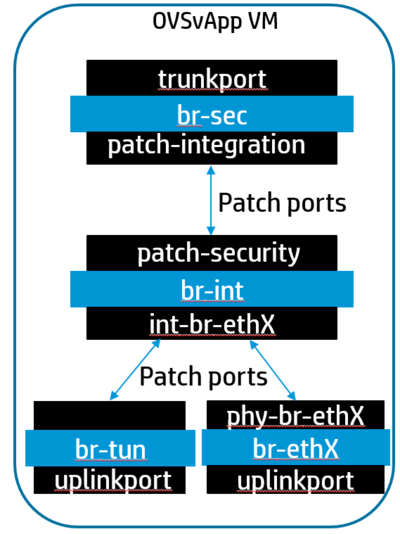

Open vSwitch comprises three OVS bridges: the Security Bridge (br-sec), the Integration Bridge (br-int), and either the Physical Connectivity Bridge (br-ethx) in the case of VLAN or the Tunnel Bridge (br-tun) in the case of VXLAN.

Security Bridge (br-sec) receives tenant VM traffic, and Security Group rules are applied there at the VM port level. It contains Open vSwitch FLOWs, based on the tenant’s OpenStack Security Group rules, that either allow or block traffic from the tenant VMs. An Open vSwitch based firewall driver provides the Security Groups functionality, similar to the iptables firewall driver used on KVM compute nodes.

Integration Bridge (br-int) connects the Security Bridge to the Physical Connectivity Bridge or the Tunnel Bridge. It exists so that the solution can reuse as much of the existing OpenStack Open vSwitch L2 agent functionality as possible.

Physical Connectivity Bridge (br-ethx) provides connectivity (VLAN provisioning) to the physical network interface cards.

Tunnel Bridge (br-tun) is used for establishing VXLAN tunnels to forward tenant traffic on the network.

VLAN/VXLAN provisioning

To scale to 2^24 networks (the size of the 24-bit VXLAN VNI space), an indirect mapping is used: each global VNI (VXLAN Network Identifier) or global VLAN ID is mapped to a local VLAN ID, and the mapping applies within a cluster. A given global VNI or global VLAN can therefore map to different local VLAN IDs in different clusters. On the vCenter side, the local VLAN is associated with the port group created for that global VNI or global VLAN.
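The indirect mapping can be illustrated with a small per-cluster allocator. This is a sketch under stated assumptions rather than the project's implementation: the class name `LocalVlanAllocator`, the lowest-free-ID allocation policy, and the example segment IDs are all hypothetical.

```python
class LocalVlanAllocator:
    """Maps a global segment ID (VNI or global VLAN) to a cluster-local VLAN."""

    def __init__(self, vlan_min=1, vlan_max=4094):
        # Stored in descending order so that pop() hands out the lowest ID first.
        self._free = list(range(vlan_max, vlan_min - 1, -1))
        self._by_segment = {}

    def allocate(self, segment_id):
        """Return the local VLAN for segment_id, assigning one if needed."""
        if segment_id in self._by_segment:
            return self._by_segment[segment_id]
        if not self._free:
            raise RuntimeError("no free local VLAN IDs in this cluster")
        vlan = self._free.pop()
        self._by_segment[segment_id] = vlan
        return vlan

    def release(self, segment_id):
        """Return the segment's local VLAN to the pool, if it had one."""
        vlan = self._by_segment.pop(segment_id, None)
        if vlan is not None:
            self._free.append(vlan)


# One allocator per cluster: the same global VNI may get different local VLANs.
cluster_a = LocalVlanAllocator()
cluster_b = LocalVlanAllocator()
cluster_b.allocate(9000)            # cluster B already hosts another network
vlan_in_a = cluster_a.allocate(5001)
vlan_in_b = cluster_b.allocate(5001)
```

Because each cluster only needs local VLAN IDs for the networks that actually have VMs in it, the 4094 usable VLAN IDs limit the networks present per cluster, not the total number of networks in the cloud.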

Security Groups

Security Groups are traffic filtering rules applied on tenant VM ports. The Open vSwitch based firewall driver (OVSFirewallDriver) programs Open vSwitch FLOWs on the Security Bridge to enforce them: it takes the security rules of each port, as provided by the Neutron server, translates them into Open vSwitch rules, and programs the Security Bridge accordingly. A thread within the OVSvApp agent continuously monitors for security rule updates and applies them to the Security Bridge.
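To make the translation step concrete, the sketch below turns a simplified IPv4 security group rule into flow strings in `ovs-ofctl` match syntax. It is an illustration only and does not reproduce what OVSFirewallDriver actually emits: the function `rule_to_flows`, the priorities, the choice of `actions=normal`, and the one-flow-per-port expansion of port ranges are assumptions made for brevity. The rule keys follow the Neutron security group rule fields (`direction`, `protocol`, `port_range_min`, `port_range_max`, `remote_ip_prefix`).

```python
# Assumed lowest-priority fallback: anything not explicitly allowed is dropped.
DEFAULT_DROP = "priority=0,actions=drop"


def rule_to_flows(rule, vm_ip, priority=100):
    """Translate one simplified IPv4 security group rule into flow strings."""
    protocol = rule.get("protocol")  # "tcp", "udp", "icmp" or None (any IP)
    match = [f"priority={priority}", protocol or "ip"]
    remote = rule.get("remote_ip_prefix")

    if rule["direction"] == "ingress":
        # Traffic towards the VM, optionally restricted to a remote source.
        match.append(f"nw_dst={vm_ip}")
        if remote:
            match.append(f"nw_src={remote}")
    else:
        # Traffic from the VM, optionally restricted to a remote destination.
        match.append(f"nw_src={vm_ip}")
        if remote:
            match.append(f"nw_dst={remote}")

    low = rule.get("port_range_min")
    high = rule.get("port_range_max")
    if protocol in ("tcp", "udp") and low is not None:
        high = low if high is None else high
        # Naive expansion: one flow per destination port in the range.
        return [
            ",".join(match + [f"tp_dst={port}", "actions=normal"])
            for port in range(low, high + 1)
        ]
    return [",".join(match + ["actions=normal"])]


ssh_rule = {
    "direction": "ingress",
    "protocol": "tcp",
    "port_range_min": 22,
    "port_range_max": 22,
    "remote_ip_prefix": "10.0.0.0/24",
}
ssh_flows = rule_to_flows(ssh_rule, "192.168.1.5")
```

Expanding a wide port range into one flow per port grows the flow table quickly, so a real driver would need a more compact encoding; the sketch keeps the naive form to stay readable.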

vMotion

VMware Distributed Resource Scheduler (DRS) uses vMotion to live-migrate VMs within a cluster. To support vMotion, the OVSvApp solution populates the same FLOWs on all OVSvApp VMs within a cluster: when vCenter Server boots a tenant VM on an ESXi hypervisor, the FLOWs for that VM are added not only on its hosting OVSvApp VM but also, concurrently, on all other OVSvApp VMs within the cluster. When the tenant VM is later moved by vMotion from one ESXi hypervisor to another, the necessary FLOWs are already in place and network connectivity is uninterrupted. The VMware prerequisites for vMotion must also be met.
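The pre-population idea can be modelled in a few lines. The sketch below is a toy model, not the agent's code: the class `ClusterSketch`, its methods, and the host names are hypothetical, and a "flow table" is simply the set of VM IDs for which flows exist on a host's OVSvApp VM.

```python
class ClusterSketch:
    """Toy model of one cluster with an OVSvApp VM per ESXi host."""

    def __init__(self, hosts):
        # host -> set of VM IDs whose flows are present on that host.
        self.flow_tables = {host: set() for host in hosts}
        self.vm_location = {}

    def boot_vm(self, vm_id, host):
        """Place a VM on a host and add its flows on every host in the cluster."""
        if host not in self.flow_tables:
            raise KeyError(f"unknown host: {host}")
        self.vm_location[vm_id] = host
        for table in self.flow_tables.values():
            table.add(vm_id)

    def vmotion(self, vm_id, target_host):
        """Move a VM; no flow programming is needed at migration time."""
        if vm_id not in self.flow_tables[target_host]:
            raise RuntimeError("flows missing on target host")
        self.vm_location[vm_id] = target_host


cluster = ClusterSketch(["esx-1", "esx-2", "esx-3"])
cluster.boot_vm("vm-42", "esx-1")
cluster.vmotion("vm-42", "esx-3")
```

The trade-off in this design is that every OVSvApp VM carries flows for every tenant VM in the cluster, in exchange for not having to program anything on the migration path.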

Deployment

Using Devstack

Enabling in Devstack:

https://github.com/openstack/networking-vsphere/tree/master/devstack

Devstack settings:

https://github.com/openstack/networking-vsphere/blob/master/devstack/settings

References

Source Code: https://github.com/openstack/networking-vsphere

OVSvApp Solution White Paper: https://github.com/hp-networking/ovsvapp/blob/master/OVSvApp_Solution.pdf

Blueprint for ESX with VLAN as per Kilo – neutron-specs specification: https://blueprints.launchpad.net/neutron/+spec/ovsvapp-solution-for-esx-deployments

OVSvApp Solution: ESX with VLAN: https://github.com/openstack/networking-vsphere/blob/master/specs/kilo/ovsvapp_esx_vlan.rst

Blueprint for ESX with VXLAN as per Kilo – neutron-specs specification: https://blueprints.launchpad.net/neutron/+spec/ovsvapp-esxi-vxlan

OVSvApp Solution: ESX with VXLAN: https://github.com/openstack/networking-vsphere/blob/master/specs/kilo/ovsvapp_esx_vxlan.rst

Open vSwitch-based Security Groups: Open vSwitch Implementation of FirewallDriver: https://blueprints.launchpad.net/neutron/+spec/ovs-firewall-driver