Meteos/Meteos2.0

Meteos 2.0

Machine Learning Infrastructure As A Service

Mission

To host and provide infrastructure for machine learning technologies, and to expose those technologies through robust, opaque APIs, so that consumers can use the machine learning intelligence and producers can provide machine learning algorithms.

Meteos 1.0 Vs 2.0

Meteos 1.0 concentrated on wrapping Apache Spark ML inside an OpenStack wrapper and offering it as an OpenStack service. To that extent, the ML intelligence itself was implemented via OpenStack. As a side effect, Meteos became tightly coupled to a single ML technology, which also complicated the roadmap for adding other ML technologies such as TensorFlow (TF) and PyTorch.

In reality, OpenStack is best suited to providing infrastructure rather than the intelligence. Hence, in Meteos 2.0, we are re-imagining the project to do what OpenStack is good at: providing infrastructure in a highly efficient way.

Scope

- Infrastructure (CPU, GPU, memory, NICs) for
  - Serving pre-trained ML models
  - Training ML models
  - Executing data ingestion, filtering, and data processing pipelines
- APIs and SDKs for
  - Connecting user programs (a.k.a. ML Apps) to ML models served via OpenStack
  - Uploading pre-trained ML models for serving prediction / classification requests
  - Uploading ML tasks / jobs as part of distributed, cloud-based training
  - Uploading / downloading datasets, weights, etc. for utility functions
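The upload and download operations listed above can be illustrated with a small in-memory stand-in. This is only a sketch under stated assumptions: the class `InMemoryModelStore` and its `upload` / `download` methods are hypothetical names invented for illustration, not part of any Meteos API, and the rule that every upload creates a new version is an assumption based on the page's statement that uploaded models can be versioned.

```python
from collections import defaultdict
from typing import Optional


class InMemoryModelStore:
    """Hypothetical stand-in for a model store; not a real Meteos class."""

    def __init__(self) -> None:
        # Maps a model name to its uploaded payloads, oldest first.
        self._versions = defaultdict(list)

    def upload(self, name: str, payload: bytes) -> int:
        """Store a serialized model and return its 1-based version number."""
        self._versions[name].append(payload)
        return len(self._versions[name])

    def download(self, name: str, version: Optional[int] = None) -> bytes:
        """Return the requested version, or the latest one if none is given."""
        versions = self._versions.get(name)
        if not versions:
            raise KeyError(f"unknown model: {name}")
        if version is None:
            return versions[-1]
        if not 1 <= version <= len(versions):
            raise KeyError(f"unknown version {version} of model {name}")
        return versions[version - 1]
```

A real store would persist the payloads in an object store and enforce access control; the sketch only shows the versioned upload / download contract.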

Out of Scope

- Tight integration to any specific ML technology

- Design & Development of any ML algorithm

- Algorithm marketplace

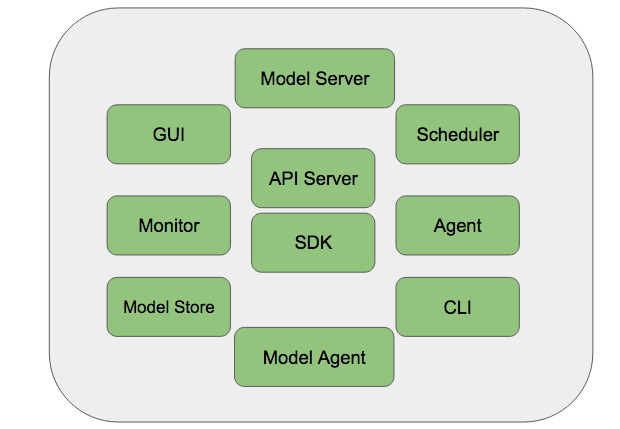

High-Level Components

| Component | Description |
|---|---|
| ML Stack Store | A store that maintains ML technology stacks, based on HOT (Heat Orchestration Template) files. Any new technology stack (TF, PyTorch, etc.) can be introduced as an ML Stack and provisioned via Meteos 2.0. |
| API Server | Front-end API server that listens to both REST and SDK traffic. All external communication is routed and load-balanced through this endpoint. |
| Model Server | A server that serves hosted ML models. Pre-trained models can be uploaded and made available for consumption via the Model Server. It wraps model consumption as a RESTful service and exposes APIs (with unique endpoints) for clients to consume the ML intelligence. It also deploys each ML model on its respective ML stack and does the housekeeping needed to expose the models in a simple way. |
| Model Store | An object store based on the Protobuf / ONNX format that hosts uploaded models in a highly available manner. Uploaded models can be versioned, access-protected, abstracted, and updated on demand. The Model Server communicates with the Model Store to download and host requested models. |
| Scheduler | Oversees the allocation of ML infrastructure to training and serving needs. Based on the requirements in each serving or training request, such as ML stack, CPU/GPU, memory, and I/O latency, the Scheduler picks a suitable Meteos worker node to serve the request. The Scheduler also helps the Model Server pick a suitable machine for serving pre-trained models. |
| Agent | A helper process that runs alongside each Meteos node. It collaborates with the Scheduler and Model Server to perform operations. |
| Model Agent | Same as the Agent, but serves Model Store requests. |
| Monitor | Monitors the entire Meteos operation and provides a bird's-eye view of it as part of the Horizon Dashboard. |
| SDKs | Python and Golang (future) client utilities that connect to Meteos in a more native way. |
| CLI & GUI | Interaction tools. |
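The Scheduler's node selection can be sketched as a filter followed by a ranking step. The code below is a minimal illustration, not the Meteos implementation: the `WorkerNode` and `Request` types, their field names, and the best-fit tie-break are all assumptions introduced here. Only the matching criteria (ML stack, CPU/GPU, memory, I/O latency) come from the Scheduler description.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, Optional


@dataclass(frozen=True)
class WorkerNode:
    """Hypothetical view of a Meteos worker node, as an Agent might report it."""
    name: str
    ml_stacks: FrozenSet[str]  # ML stacks provisioned on the node
    free_cpus: int
    free_gpus: int
    free_memory_gb: int
    io_latency_ms: float


@dataclass(frozen=True)
class Request:
    """Hypothetical serving or training request."""
    ml_stack: str
    cpus: int
    gpus: int
    memory_gb: int
    max_io_latency_ms: float


def fits(node: WorkerNode, req: Request) -> bool:
    """True if the node satisfies every requirement of the request."""
    return (
        req.ml_stack in node.ml_stacks
        and node.free_cpus >= req.cpus
        and node.free_gpus >= req.gpus
        and node.free_memory_gb >= req.memory_gb
        and node.io_latency_ms <= req.max_io_latency_ms
    )


def pick_node(nodes: Iterable[WorkerNode], req: Request) -> Optional[WorkerNode]:
    """Return the best-fitting node, or None if no node qualifies."""
    candidates = [n for n in nodes if fits(n, req)]
    if not candidates:
        return None
    # Assumed policy: prefer the node with the least spare capacity
    # (GPUs first, then CPUs, then memory) so larger nodes stay available.
    return min(
        candidates,
        key=lambda n: (
            n.free_gpus - req.gpus,
            n.free_cpus - req.cpus,
            n.free_memory_gb - req.memory_gb,
        ),
    )
```

Best fit is just one possible policy. A real scheduler might instead spread load across nodes or weigh I/O latency more heavily, and the ranking key is the only part that would change.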