Barbican/Discussion-Plugin-Design

This wiki page is a work in progress, intended to get contributors thinking about how to manage Barbican plugins and workflows for the Juno effort and beyond.

This page explores design concepts for Barbican plugin interfaces. Barbican currently uses plugins to interface with cryptographic resources such as hardware security modules (HSMs). This page also discusses how the plugin approach could accommodate the planned addition of SSL certificate generation and management to the orders resource.

Overview

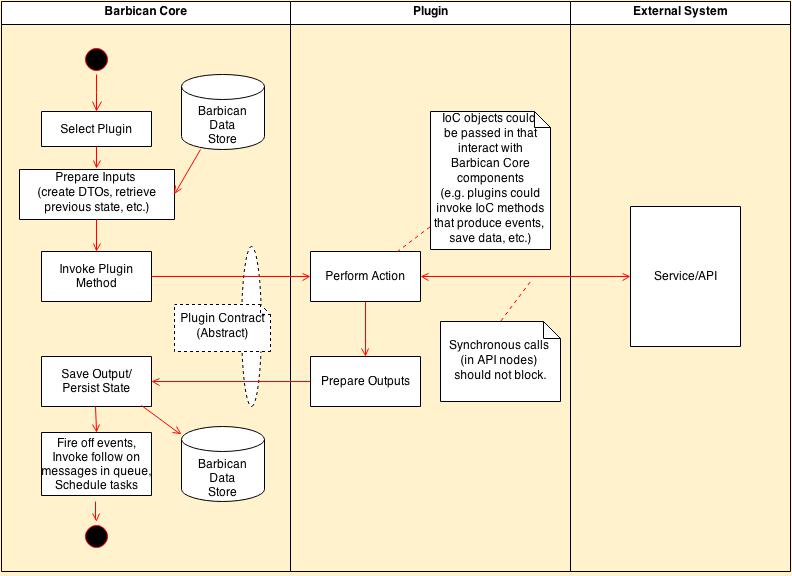

The figure above depicts a generic plugin dataflow within Barbican. Note the separation of 'core' Barbican functionality (available in the main Barbican repository and representing work done on behalf of plugins) from 'plugin' functionality that performs some type of work, which might include interaction with external services. Plugins can be invoked synchronously (such as for encryption, decryption or validation) or asynchronously (such as for order processing). The source code for these plugins may or may not be available in the Barbican code base.

Focusing on Barbican Core, it must be able to select a plugin from more than one potential implementation based on some criteria, such as choosing the first one that supports a feature. Plugin selection is discussed in a later section.
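As an illustration of the 'first one to support a feature' criterion, here is a minimal sketch. The class names, the supports() method and the feature strings are all hypothetical and are not taken from the Barbican code base.

```python
class PluginNotFound(Exception):
    """Raised when no configured plugin supports the requested feature."""


class SoftwarePlugin:
    # Hypothetical plugin; the feature names are illustrative only.
    def supports(self, feature):
        return feature in {"encrypt", "decrypt"}


class HsmPlugin:
    # Hypothetical plugin that supports one extra feature.
    def supports(self, feature):
        return feature in {"encrypt", "decrypt", "generate_key"}


def select_plugin(plugins, feature):
    """Return the first plugin, in configured order, that supports `feature`."""
    for plugin in plugins:
        if plugin.supports(feature):
            return plugin
    raise PluginNotFound(feature)


plugins = [SoftwarePlugin(), HsmPlugin()]
chosen = select_plugin(plugins, "generate_key")  # falls through to HsmPlugin
```

With this approach the order of the configured plugin list acts as a priority, so a deployment could prefer one implementation simply by listing it first.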

Barbican must then provide inputs to the plugin to do its work. If the plugin is stateful across multiple calls to the plugin, then Barbican should store this state on the plugin's behalf, keying this data to a flow instance such as a specific order process. Note that Barbican may also pass an 'inversion of control' (IoC) component into the plugin, which would allow the plugin to interact with Barbican services (such as event generation) without knowledge of how Barbican implements these services.

When the plugin is invoked, it performs its work, which may include interacting with an external service. For synchronous workflows (such as Barbican API processing), these service calls should be made as fast as possible, since the response to the client is blocked until they complete.

Once a plugin returns, Barbican Core can persist the results. State can also be persisted into the Barbican Core data store if required for follow-on plugin calls (such as extended workflow processing of a given SSL certificate). Barbican Core could also support plugins that need to be called again on a scheduled basis.

Asynchronous Order Processing Plugins

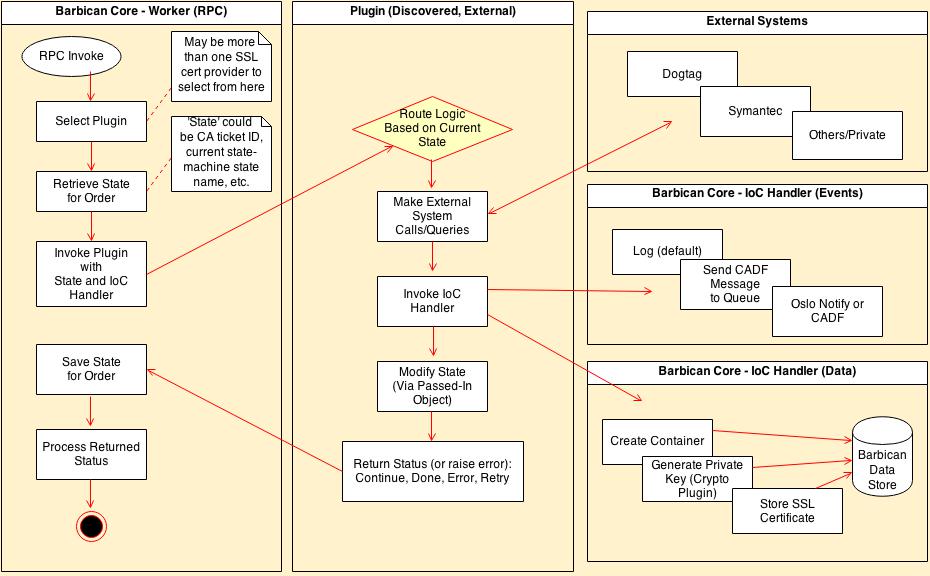

The Overview section detailed Barbican plugin flows. This section adds more detail for asynchronous order processing flows, especially for SSL certificate generation that involves interacting with a certificate authority (CA). The figure above depicts asynchronous processing by the Barbican Core worker process, invoked via RPC calls from the oslo.messaging queue service.

For SSL certificate generation, more than one vendor plugin may be available, such as for Dogtag or Symantec. Hence the order's details should include which vendor to use as the selection criterion, or else Barbican should support specifying a default vendor/plugin to use. The same vendor plugin could be used to validate inputs (especially the many fields needed for a CSR) as well as for asynchronous worker-side order processing.

Barbican then retrieves any state associated with a given order instance, probably via order metadata stored along with the order record. The plugin could define this state as key/value pairs, for example. Since the same plugin may be called multiple times for the same order instance, this persisted state might include a state machine state name that directs which business logic to use within the vendor plugin. If the order instance needs to 'link' to an external system's order reference (such as for Symantec), this could be stored in the metadata as well (as determined by the plugin).

Next, Barbican Core must provide IoC components that allow plugins to perform system interactions (such as database updates and event notification) without directly accessing these critical core components. As depicted in the figure, one IoC handler could present specific methods such as 'notify_ssl_cert_is_ready()', which Barbican Core handles as simple log messages for out-of-the-box deployments, or else as CADF messages sent via oslo incubator or Ceilometer for external systems to consume in deployment/company-specific ways. Another IoC handler could 'wrap' data model operations such as 'generate_private_key()', which Barbican Core would implement as a generate/encrypt/store operation in the crypto package.
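The event-notification handler idea could be sketched as follows. Only the method name notify_ssl_cert_is_ready() comes from the text above; the classes, the order_id argument and the recording handler are assumptions made for illustration and do not describe an existing Barbican interface.

```python
import logging

LOG = logging.getLogger(__name__)


class LoggingEventHandler:
    """Hypothetical out-of-the-box handler: events become log messages."""

    def notify_ssl_cert_is_ready(self, order_id):
        LOG.info("SSL certificate ready for order %s", order_id)


class RecordingEventHandler:
    """Hypothetical alternative a deployment could swap in.

    A real deployment might emit CADF messages here; this sketch only
    records the events in memory.
    """

    def __init__(self):
        self.events = []

    def notify_ssl_cert_is_ready(self, order_id):
        self.events.append(("ssl_cert_ready", order_id))


class ExampleCertPlugin:
    """Plugin that knows the handler's interface, not its implementation."""

    def issue_certificate(self, order_id, events):
        # Interaction with the CA would happen here.
        events.notify_ssl_cert_is_ready(order_id)


handler = RecordingEventHandler()
ExampleCertPlugin().issue_certificate("order-123", handler)
```

Because the plugin only calls the method on whatever handler it is given, Barbican Core could change how events are delivered without any change to plugin code.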

The order processing plugin can then be invoked, perhaps routing flow based on the previous state information, such as for state machine processing of SSL certificate orders. The plugin might respond with a status that the Barbican Core logic could use to determine what to do with the plugin next. For example, 'Done' might indicate that order processing is completed, 'Continue' might mean persist the plugin state with the order for a future plugin call (say, one invoked by a scheduled batch update from the CA), and 'Retry' might mean call the plugin again at a future time to retry an operation.
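A minimal sketch of this status-driven flow follows, assuming the plugin keeps a state name in the order's metadata. The three status names come from the text above; the state names, the metadata keys, the order status strings and the function names are hypothetical.

```python
import enum


class Status(enum.Enum):
    DONE = "Done"
    CONTINUE = "Continue"
    RETRY = "Retry"


class ExampleCertPlugin:
    """Hypothetical two-step plugin driven by a persisted state name."""

    def process(self, order_meta):
        state = order_meta.get("state", "submit")
        if state == "submit":
            # Pretend the CSR was sent to the CA; remember its reference.
            order_meta["ca_reference"] = "ca-ref-1"
            order_meta["state"] = "await_cert"
            return Status.CONTINUE
        if state == "await_cert":
            order_meta["state"] = "complete"
            return Status.DONE
        # Unknown state: ask Core to call again later.
        return Status.RETRY


def handle_order(plugin, order):
    """Core-side dispatch on the status returned by the plugin."""
    status = plugin.process(order["meta"])
    if status is Status.DONE:
        order["status"] = "ACTIVE"
    elif status is Status.CONTINUE:
        order["status"] = "PENDING"  # meta stays with the order for the next call
    elif status is Status.RETRY:
        order["retries"] = order.get("retries", 0) + 1
    return status


order = {"status": "PENDING", "meta": {}}
first = handle_order(ExampleCertPlugin(), order)   # submits, asks to continue
second = handle_order(ExampleCertPlugin(), order)  # finishes from saved state
```

Note that a fresh plugin instance is used for each call: all state that matters lives in the order's metadata, which is what allows any worker to pick up the next step.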

Scheduled and Batch Processing

The discussion so far has focused on synchronous and asynchronous invocation of plugins for a given workflow or order instance, but some processes might require batch processing across multiple instances. For example, SSL certificate processing may involve requesting status from a CA on a scheduled basis. This status might arrive as a batch of multiple SSL certificate order statuses at once, so Barbican would need to iterate through these order statuses and invoke plugin tasks for them individually. The plugin might provide a batch method that Barbican Core could invoke on a scheduled basis, passing in a callback function that the plugin calls for each order instance seen in the batch. Barbican Core would implement the callback by enqueueing a plugin RPC task for a worker node to process.
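The batch-plus-callback arrangement could look roughly like this. It is a sketch only: the method name check_batch_status(), the task dictionary layout and the use of an in-memory queue in place of the RPC queue are all assumptions.

```python
import queue


class ExampleCaPlugin:
    """Hypothetical plugin whose CA reports many order statuses at once."""

    def check_batch_status(self, on_order_status):
        # A real plugin would fetch this batch from the CA.
        batch = [("order-1", "issued"), ("order-2", "pending")]
        for order_id, ca_status in batch:
            on_order_status(order_id, ca_status)


task_queue = queue.Queue()  # stands in for the RPC queue in this sketch


def enqueue_order_update(order_id, ca_status):
    """Core-supplied callback: one worker task per order in the batch."""
    task_queue.put(
        {"task": "update_order", "order_id": order_id, "ca_status": ca_status}
    )


# Barbican Core would run this on a schedule.
ExampleCaPlugin().check_batch_status(enqueue_order_update)
```

The plugin never touches the queue directly; it only calls the function it was handed, which keeps queueing details inside Barbican Core.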

To implement the scheduled processes, Barbican could use the Nova approach, which applies oslo-incubator's periodic_task decorator to Service methods that should be scheduled. It uses Eventlet greenpools under the hood. Currently the worker servers extend oslo's Service, so they are the logical place for the scheduled processes to reside as well. A concern here, though, is that for reliability each of the multiple workers should be able to schedule tasks such as the SSL batch status task above. If these separately scheduled tasks in turn enqueue single-order update tasks, more than one worker could end up processing the same order instance.

Plugin Source Code Organization

The source code for 'Barbican Core' is found in the stackforge/barbican repository. It includes the logic supporting the left-hand side of the figures above, and an abstract base class defining the interactions with the plugins in the middle of the diagrams. Core should also always include simple, standalone example plugin implementations that are enabled out-of-the-box on local installations. These should not require network access to function, should be well unit-tested, and should provide a good example to developers of new plugins.
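To make the split between an abstract contract and a simple default plugin concrete, here is a hedged sketch. The class and method names are hypothetical; Barbican's actual base classes may look quite different.

```python
import abc


class CertificatePluginBase(abc.ABC):
    """Hypothetical contract that Core would define for plugins."""

    @abc.abstractmethod
    def supports(self, request_type):
        """Return True if this plugin can handle `request_type`."""

    @abc.abstractmethod
    def issue_certificate(self, order_id, order_meta):
        """Process one order and return a result dictionary."""


class SimpleCertificatePlugin(CertificatePluginBase):
    """Standalone example: needs no network access or extra libraries."""

    def supports(self, request_type):
        return request_type == "simple"

    def issue_certificate(self, order_id, order_meta):
        # Returns a placeholder instead of contacting a CA.
        return {"order_id": order_id, "certificate": "placeholder-cert"}


plugin = SimpleCertificatePlugin()
result = plugin.issue_certificate("order-1", {})
```

Using abc means an incomplete plugin fails at instantiation time rather than partway through an order, which is convenient for the unit tests the default plugins are expected to have.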

Beyond these simple default plugins, however, it is less obvious how to manage the source code of specific plugin implementations. On one hand, it is convenient to bundle with Core the source code for plugin implementations that are likely to be used in production Barbican installations. For example, Barbican Core currently includes PKCS11 and Dogtag based crypto plugins. On the other hand, these plugins usually depend on libraries that are not part of the OpenStack global requirements, and therefore have to accommodate out-of-the-box deployments that don't have those dependencies installed. Hence thorough unit testing is more difficult (it relies on patching) and the code logic is a bit more complicated because it must deal with missing imports.
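The 'missing imports' complication typically takes the shape below. This is a sketch under stated assumptions: hypothetical_vendor_hsm_lib is a placeholder name rather than a real package, and the plugin and fake library classes are invented for illustration. Passing the library in as an argument is one alternative to patching in tests.

```python
try:
    # Placeholder name for an optional vendor dependency; not a real package.
    import hypothetical_vendor_hsm_lib as hsm_lib
except ImportError:
    hsm_lib = None


class VendorCryptoPlugin:
    """Hypothetical bundled plugin that tolerates a missing dependency."""

    def __init__(self, lib=hsm_lib):
        if lib is None:
            raise RuntimeError("vendor library is not installed")
        self._lib = lib

    def encrypt(self, data):
        return self._lib.encrypt(data)


class FakeLib:
    """Test double standing in for the vendor library."""

    @staticmethod
    def encrypt(data):
        return data[::-1]  # not real encryption, just a visible transformation


plugin = VendorCryptoPlugin(lib=FakeLib)
ciphertext = plugin.encrypt(b"abc")
```

The module still imports cleanly when the vendor library is absent, so an out-of-the-box deployment only fails if it actually tries to enable this plugin.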

Another option is to create separate git repositories for the plugin implementation source files, with a dependency on the Barbican Core source base, for example to extend the abstract plugin contracts. This approach would simplify the Barbican Core code base, but would require integrating multiple repositories for testing purposes. It would also require mechanisms to extend Barbican to include these external dependencies at package time. This is explored in the next section.

Discovering 3rd Party Plugins

With the bundled crypto plugin implementations that Barbican Core includes now (such as PKCS11 and Dogtag), activating them for usage just requires including their dependencies in the deployed Python package or deployment, and then enabling them via configurations in the /etc/barbican/barbican-api.conf file. Stevedore provides the ability to load these plugins and then to select/use them at run time.

For plugins developed outside of Barbican Core, Stevedore could still be used. In addition to installing non-OpenStack dependencies and adding configuration items to barbican-api.conf, this would require adding a setup.cfg file that defines the new plugin namespaces, aliases and classpaths. A new custom-deployment package could then be created and installed.

Another option is to use a plugin module discovery process similar to the Heat project's Resource discovery. Heat defines a folder location that is searched for new Resources, in the form of Python source files that extend a base Resource. A similar approach could be used to discover plugin implementations.
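A folder-based discovery step of this kind could be sketched with the standard library alone. This is not Heat's implementation: the PluginBase class, the discover_plugins() function and the trick of injecting the base class into each loaded module are assumptions made to keep the example self-contained. In practice a plugin file would import the base class from Barbican Core.

```python
import importlib.util
import inspect
import pathlib
import tempfile
import textwrap


class PluginBase:
    """Hypothetical base class that discovered plugins must extend."""


def discover_plugins(folder, base=PluginBase):
    """Import every .py file in `folder` and collect subclasses of `base`."""
    found = []
    for path in sorted(pathlib.Path(folder).glob("*.py")):
        spec = importlib.util.spec_from_file_location(path.stem, path)
        module = importlib.util.module_from_spec(spec)
        # Sketch-only shortcut: make the contract visible to the plugin file.
        module.PluginBase = base
        spec.loader.exec_module(module)
        for _, obj in inspect.getmembers(module, inspect.isclass):
            if issubclass(obj, base) and obj is not base:
                found.append(obj)
    return found


# Demonstration: write one plugin file into a temporary folder and find it.
with tempfile.TemporaryDirectory() as folder:
    source = textwrap.dedent(
        """
        class VendorPlugin(PluginBase):
            name = "vendor"
        """
    )
    pathlib.Path(folder, "vendor_plugin.py").write_text(source)
    plugin_names = [cls.__name__ for cls in discover_plugins(folder)]
```

Compared with the Stevedore route, this needs no packaging step, but it does execute whatever Python files are present in the folder, so the folder's permissions would matter in a real deployment.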