TripleO/TuskarJunoInitialDiscussion

Overview

This information is based on discussions from the TripleO mid-cycle Icehouse meetup in Sunnyvale.

One point stressed independently by many people is that Tuskar should take advantage of existing OpenStack services. Although we made strides toward this philosophy in the Icehouse development cycle, several discussions described how we might go further down this path. Following these suggestions changes both the current architecture and feature planning.

Re-Architecture

Cloud Planning and Swift

In Icehouse, user-configurable cloud planning is represented by the state kept in the Tuskar API: the roles, the role counts, the stack parameters, etc. At deploy time, this information is passed down and used to construct a Heat template, which is then used to create a stack that is deployed by Heat. The template does not exist separately from the deployed stack.

However, if we take a step back, a Heat template is by intent a plan. If we can simply use a Heat template for cloud planning, we eliminate the need for a stateful Tuskar API. The problem is that Heat only stores the plan (template) within the context of the deployment (stack); it does not separate the two. But there is no reason why we cannot create a template to capture the plan and then store it elsewhere.

Using Swift for template storage solves this problem. At a high level, this would work as follows:

- during planning stages, the template is written and updated in Swift

- when a user deploys a cloud, the template is read from Swift and directly used to create the Heat stack.

Additional notes:

- Swift will store the Heat template and deployment "answers" (parameters, config options)

- Swift object IDs are stored in template metadata

- A parser (in the Tuskar service) is needed to break apart and write the template and answers

- UI does not edit template directly; it edits values that the parser exposes, and the parser reconstitutes the template

- Removes need for stateful Tuskar API

- Principally a Tuskar API (Tuskar service) change; UI may not be greatly affected

- Swift has built-in versioning (future consideration)
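The flow described above could be sketched roughly as follows. This is only an illustration under several assumptions: `FakeObjectStore` is an in-memory stand-in for a Swift container rather than a real Swift client, the template is a simplified JSON-style dict, the "answers" are taken to be parameter defaults, and every function name here (`split_plan`, `merge_plan`, `save_plan`, `update_answer`, `load_plan`) is hypothetical. Objects are found by a naming convention in this sketch, not by IDs stored in template metadata.

```python
import copy
import json


class FakeObjectStore:
    """In-memory stand-in for a Swift container (assumption, not Swift's API)."""

    def __init__(self):
        self._objects = {}

    def put(self, name, body):
        self._objects[name] = body

    def get(self, name):
        return self._objects[name]


def split_plan(template):
    """Break a template apart into its skeleton and its answers."""
    skeleton = copy.deepcopy(template)
    answers = {}
    for name, spec in skeleton.get("parameters", {}).items():
        # Pull each default out so it can be edited without touching the template.
        if "default" in spec:
            answers[name] = spec.pop("default")
    return skeleton, answers


def merge_plan(skeleton, answers):
    """Reconstitute a deployable template from the skeleton and answers."""
    template = copy.deepcopy(skeleton)
    for name, value in answers.items():
        template["parameters"][name]["default"] = value
    return template


def save_plan(store, plan_name, template):
    """Planning stage: write the template and answers to the store."""
    skeleton, answers = split_plan(template)
    store.put(plan_name + "/template.json", json.dumps(skeleton))
    store.put(plan_name + "/answers.json", json.dumps(answers))


def update_answer(store, plan_name, key, value):
    """What a UI edit might do: change one exposed value, not the template."""
    answers = json.loads(store.get(plan_name + "/answers.json"))
    answers[key] = value
    store.put(plan_name + "/answers.json", json.dumps(answers))


def load_plan(store, plan_name):
    """Deploy time: read both objects back and rebuild the template."""
    skeleton = json.loads(store.get(plan_name + "/template.json"))
    answers = json.loads(store.get(plan_name + "/answers.json"))
    return merge_plan(skeleton, answers)
```

In a real service, the dict returned by `load_plan` would be handed to Heat to create the stack; that call is left out here because it needs a running Heat endpoint.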

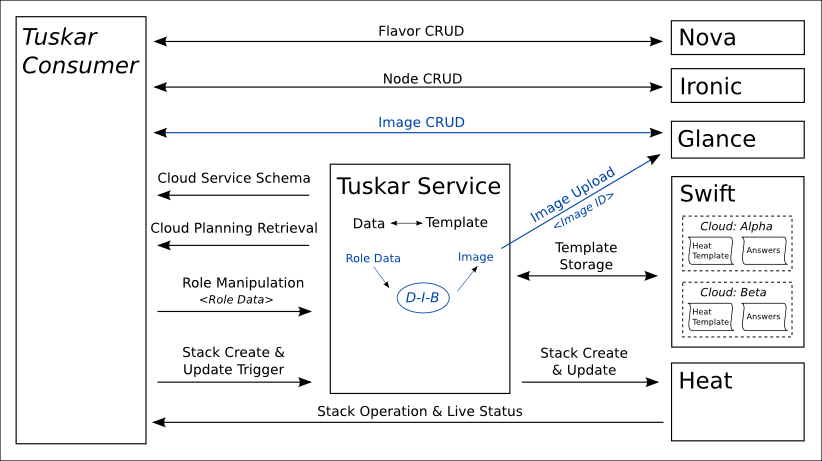

Architecture Diagram

This diagram details Tuskar's proposed interactions with OpenStack services:

Feature Planning

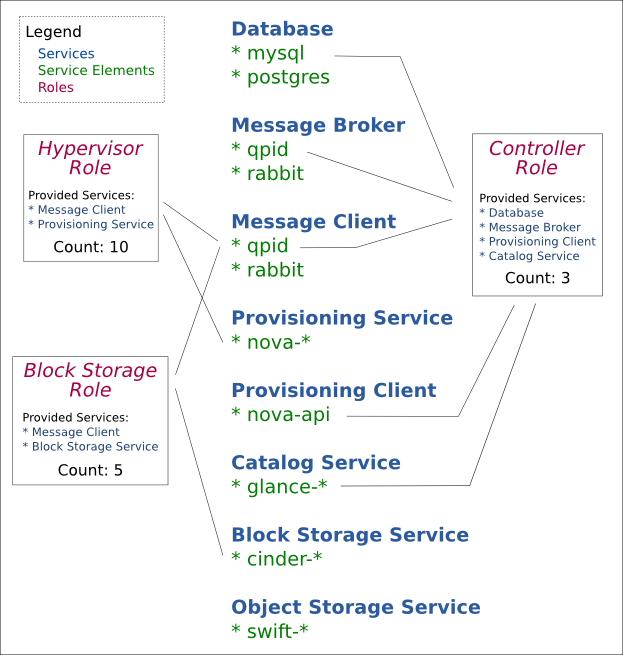

Services

Service is a new concept for Tuskar. A service represents a cloud function, such as a provisioning service, storage service, message broker, or database server. A service is fulfilled by a service element; for example, a user can fulfill the message broker service by choosing either the qpid-server service element or the rabbitmq-server service element.

With the service concept in play, roles are updated as follows: instead of being associated with images, they are associated with a list of service elements. The user can then either supply an appropriate image for those elements or use diskimage-builder to create a custom image.

This provides greater flexibility for the Tuskar user when designing the scalable components of a cloud. For example, instead of being limited to a Controller role, the user can separate out the network components of that role into a Network role and scale it individually.

Tuskar can still provide role defaults or suggestions corresponding to current default roles, as well as an "All-in-One" role.
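As a rough illustration of the relationship between services, service elements, and roles, the sketch below models a role as a list of service elements and splits the network portion of a Controller role into its own role. The class and function names are hypothetical, and apart from rabbitmq-server the element names are placeholders; none of this reflects an agreed Tuskar data model.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ServiceElement:
    name: str     # element that fulfills the service, e.g. "rabbitmq-server"
    service: str  # cloud function it fulfills, e.g. "message broker"


@dataclass
class Role:
    name: str
    elements: list = field(default_factory=list)

    def services(self):
        """Return the sorted names of the services this role provides."""
        return sorted({element.service for element in self.elements})

    def element_names(self):
        """Return element names, e.g. as input for building a custom image."""
        return [element.name for element in self.elements]


def split_role(role, new_name, services):
    """Move the elements fulfilling `services` out of `role` into a new role."""
    moved = [e for e in role.elements if e.service in services]
    kept = [e for e in role.elements if e.service not in services]
    return Role(role.name, kept), Role(new_name, moved)


# Placeholder elements for illustration only.
rabbitmq = ServiceElement("rabbitmq-server", "message broker")
database = ServiceElement("example-database", "database server")
network = ServiceElement("example-network", "network")

controller = Role("Controller", [rabbitmq, database, network])
controller, network_role = split_role(controller, "Network", {"network"})
```

After the split, the Network role carries only the network element and can be given its own node count, while the Controller role keeps the remaining elements.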

Other

One outcome of the discussions is the idea that Tuskar should be developed based on existing OpenStack functionality. This means that Tuskar should accept current limitations and not try to design around them. If we wish to surpass a limitation, we need to work within the related OpenStack project.

From a practical standpoint, this implies that Tuskar development will need to be further distributed within various OpenStack projects.

Heterogeneous node support

Requirements for this include:

- Add Node attributes (rack, data center) - Ironic (node metadata?)

- Update scheduler filters for more than just 'exact match' - Nova

- Multiple flavors per role - Heat/Tuskar
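To make the "more than just exact match" requirement concrete, the sketch below contrasts an exact-match check with one that accepts any node meeting or exceeding the numeric requirements while still requiring equality on non-numeric attributes such as rack. It is a standalone illustration with hypothetical names and attribute keys; it does not use or represent Nova's scheduler filter interface.

```python
def exact_match(node, flavor):
    """Accept a node only if every flavor attribute matches exactly."""
    return all(node.get(key) == wanted for key, wanted in flavor.items())


def at_least_match(node, flavor):
    """Accept a node whose numeric attributes meet or exceed the flavor."""
    for key, wanted in flavor.items():
        have = node.get(key)
        if have is None:
            return False
        is_number = isinstance(wanted, (int, float)) and not isinstance(wanted, bool)
        if is_number:
            # Numeric attributes (CPUs, RAM) may exceed the requirement.
            if have < wanted:
                return False
        elif have != wanted:
            # Non-numeric attributes (rack, data center) must still be equal.
            return False
    return True


# Hypothetical node and flavor descriptions.
node = {"cpus": 8, "ram_mb": 16384, "rack": "rack-1"}
flavor = {"cpus": 4, "ram_mb": 8192, "rack": "rack-1"}
```

With these values, `exact_match(node, flavor)` rejects the node because it has more resources than requested, while `at_least_match(node, flavor)` accepts it.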

Deployment updates

Use cases include:

- Scaling down

- Updating deployment plan (node profile, flavor)

Heat is having similar discussions, so we should follow Heat.

Deployment configuration

- High availability - TripleO

- Network management - Neutron and Heat

- Metrics - Ceilometer and Heat

Node management

We would like to use Ironic, and we would like to allow for auto-discovery of nodes. This depends entirely on Ironic.

Auditing of operations

This feature really belongs to a more general OpenStack service that does not yet exist. It is not a high priority for us, but if it becomes one, it should not be solved within Tuskar.

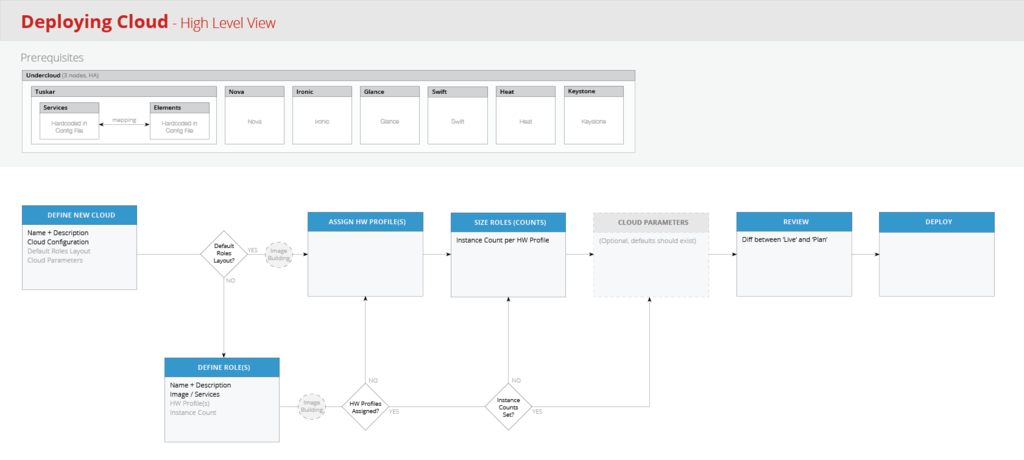

User Workflow

More details will follow.