Scheduled-images-service

- Launchpad Entry: QonoS scheduling service

- Created: 29 Oct 2012

- Contributors: Alex Meade, Eddie Sheffield, Andrew Melton, Iccha Sethi, Nikhil Komawar, Brian Rosmaita


Obsolete

The original blueprint for backups within Nova was superseded by a newer blueprint for scheduled images. The new blueprint was assigned to Brian Rosmaita from Rackspace, and was implemented by Rackspace as a Nova API extension. The completed code was rejected by Russell Bryant on 2013-05-02 in favor of a more general scheduling approach. Adrian Otto from Rackspace proposed the EventScheduler on 2013-05-02 to address the general-purpose use case. For Rackspace's implementation of this feature, see the Rackspace API extension.

Summary

This blueprint introduces a new service, QonoS, for scheduling periodic snapshots of an instance. Although periodic snapshots could be implemented very quickly using cron or as one of Nova's existing periodic tasks, such an implementation would not be reliable.

Service responsibilities include:

- Create scheduled tasks

- Perform scheduled tasks

- Handle rescheduling failed jobs

- Maintain persistent schedules

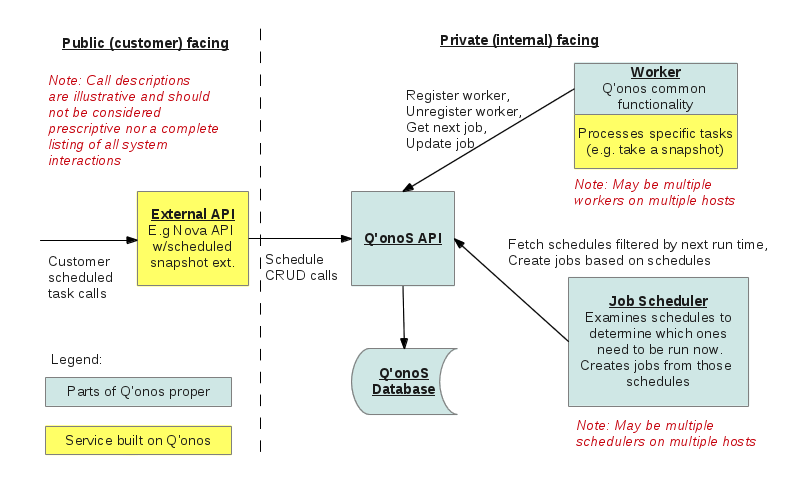

Overall System Diagram:

Scalability

Creating a new, self-standing service allows for scaling the feature independently of the rest of the system.

Reliability

Users of the API may come to rely on this feature either working every time or notifying them when it fails.

It is important to have a scheduling service that understands concepts such as instances and tenants if there is any desire to recover from errors or to make performance decisions based on that information. A generic 'cron' service, by contrast, knows nothing about instances or images.

For example, listing the schedules of a particular tenant is much more efficient if the tenant ID is stored in its own DB column rather than inside a blob.
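To make the column-versus-blob point concrete, here is a minimal sketch using Python's standard sqlite3 module. The table and column names are hypothetical and are not the blueprint's schema. With tenant_id in its own indexed column, the database can answer the query from the index; with the tenant stored only inside a JSON blob, every row has to be read and decoded by the application.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical table with tenant_id promoted to an indexed column.
conn.execute("CREATE TABLE schedules_col (id INTEGER PRIMARY KEY, tenant_id TEXT, body TEXT)")
conn.execute("CREATE INDEX idx_tenant ON schedules_col (tenant_id)")
# Hypothetical table where the tenant lives only inside a JSON blob.
conn.execute("CREATE TABLE schedules_blob (id INTEGER PRIMARY KEY, body TEXT)")

for i in range(1000):
    tenant = f"tenant-{i % 10}"
    body = json.dumps({"tenant_id": tenant, "job_type": "snapshot"})
    conn.execute("INSERT INTO schedules_col (tenant_id, body) VALUES (?, ?)", (tenant, body))
    conn.execute("INSERT INTO schedules_blob (body) VALUES (?)", (body,))


def list_by_column(tenant_id):
    # The database can satisfy this filter using the tenant_id index.
    rows = conn.execute(
        "SELECT id FROM schedules_col WHERE tenant_id = ? ORDER BY id", (tenant_id,))
    return [r[0] for r in rows]


def list_by_blob(tenant_id):
    # Every row must be fetched and its JSON decoded in application code.
    rows = conn.execute("SELECT id, body FROM schedules_blob ORDER BY id")
    return [r[0] for r in rows if json.loads(r[1])["tenant_id"] == tenant_id]
```

Both functions return the same IDs; only the column version lets the database do the filtering.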

System Picked Schedules

Instead of letting the user pick the time of a snapshot, the system can make (potentially) informed decisions about how to spread out the schedules. This prevents cases where most users pick midnight and produce too much load at that time.
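One simple way the system could spread schedules, sketched under stated assumptions: the day is divided into hypothetical hourly slots, and each new schedule goes to the currently least-loaded slot. The blueprint does not say how QonoS picks times; pick_slot and SLOTS_PER_DAY are illustrative names only.

```python
from collections import Counter

SLOTS_PER_DAY = 24  # hypothetical granularity: one slot per hour


def pick_slot(existing_slots):
    """Return the hour (0-23) that currently has the fewest schedules.

    Ties go to the earliest hour, so the choice is deterministic.
    """
    load = Counter(existing_slots)
    return min(range(SLOTS_PER_DAY), key=lambda hour: (load[hour], hour))


# Left to themselves, users might all pick midnight; the system spreads them.
assigned = []
for _ in range(48):
    assigned.append(pick_slot(assigned))
```

With this policy, 48 schedules created in a row land two per hour instead of all at midnight. A real service might weight slots by expected snapshot size rather than by simple count.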

Design

Entities

- Schedule
  - the general description of what the service will do
  - looks something like:

        {
          "tenant_id" : <tenantId>,
          "schedule_id" : <scheduleId>,
          "job_type" : <keyword>,
          "metadata" : {
            // all the information for this job_type
            "key" : "value"
          },
          "schedule" : <the schedule info, exact format TBD>
        }

- Job
  - a particular instance of a scheduled job_type, such as 'snapshot'
  - i.e., this is the thing that will be executed by a worker

- Worker
  - a process that performs a Job
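The Schedule and Job entities can be sketched as Python dataclasses. The field types, the default status "queued", and the helper job_from_schedule are assumptions for illustration; the blueprint leaves the schedule format TBD, so it is modeled here as a plain string.

```python
import uuid
from dataclasses import dataclass, field
from typing import Any, Dict, Optional


@dataclass
class Schedule:
    """General description of recurring work (hypothetical field types)."""
    tenant_id: str
    job_type: str                      # e.g., 'snapshot'
    schedule: str                      # exact format TBD; a plain string here
    metadata: Dict[str, Any] = field(default_factory=dict)
    schedule_id: str = field(default_factory=lambda: str(uuid.uuid4()))


@dataclass
class Job:
    """One concrete run of a Schedule; this is what a Worker executes."""
    schedule_id: str
    tenant_id: str
    job_type: str
    metadata: Dict[str, Any]
    status: str = "queued"             # hypothetical initial status
    worker_id: Optional[str] = None    # set once a Worker claims the Job
    job_id: str = field(default_factory=lambda: str(uuid.uuid4()))


def job_from_schedule(s: Schedule) -> Job:
    # Copy the metadata so that editing the Job cannot alter its Schedule.
    return Job(schedule_id=s.schedule_id, tenant_id=s.tenant_id,
               job_type=s.job_type, metadata=dict(s.metadata))
```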

The QonoS scheduling service has the following functional components:

- API
  - handles communication, both external requests and internal communication
  - creates the schedule for a request and stores it in the DB
  - the only job_type we will implement is 'scheduled_image'

- Job Maker
  - creates Jobs from schedules; the idea is that the Jobs table will contain only Jobs that are ready to be executed in the current time period

- Job Monitor
  - keeps the Job table updated

- Worker Monitor
  - looks for dead workers

- Worker
  - executes a Job and keeps the Job's 'status' updated
  - works on a "best effort" basis: if it encounters an error, it logs the error and terminates the Job
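The Job Maker's role, turning schedules that are due into Jobs, can be sketched as follows. Because the blueprint leaves the schedule format TBD, this sketch assumes each schedule row carries a hypothetical next_run timestamp and a fixed interval in seconds; ScheduleRow and make_jobs are illustrative names.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ScheduleRow:
    schedule_id: str
    next_run: float      # epoch seconds; hypothetical column
    interval: float      # seconds between runs (assumed fixed, > 0)


def make_jobs(schedules: List[ScheduleRow], now: float) -> List[str]:
    """Return the schedule_ids that need a Job now, advancing each next_run.

    Missed periods are collapsed: a schedule that is several periods late
    yields a single Job rather than one per missed period.
    """
    due = []
    for s in schedules:
        if s.interval <= 0:
            raise ValueError(f"schedule {s.schedule_id} has a non-positive interval")
        if s.next_run <= now:
            due.append(s.schedule_id)
            # Skip ahead to the first run time strictly after now.
            while s.next_run <= now:
                s.next_run += s.interval
    return due
```

Calling make_jobs once per time period keeps the Jobs table limited to work that is ready now, which matches the intent stated for the Job Maker.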

API

CRUD for schedules

POST /v1/schedules

GET /v1/schedules

GET /v1/schedules/{scheduleId}

DELETE /v1/schedules/{scheduleId}

PUT /v1/schedules/{scheduleId}

The request body for POST and PUT will be roughly the Schedule entity described above. POST returns the new scheduleId.
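The schedule CRUD semantics (POST returns the new scheduleId; PUT replaces the entity) can be sketched with an in-memory store. ScheduleStore and its method names are hypothetical; each method is annotated with the HTTP call it would back, and no particular web framework is assumed.

```python
import uuid
from typing import Any, Dict, List, Optional


class ScheduleStore:
    """In-memory stand-in for the DB behind /v1/schedules (hypothetical)."""

    def __init__(self) -> None:
        self._rows: Dict[str, Dict[str, Any]] = {}

    def create(self, body: Dict[str, Any]) -> str:                 # POST /v1/schedules
        schedule_id = str(uuid.uuid4())
        self._rows[schedule_id] = {**body, "schedule_id": schedule_id}
        return schedule_id

    def list_for_tenant(self, tenant_id: str) -> List[Dict[str, Any]]:  # GET /v1/schedules
        return [r for r in self._rows.values() if r["tenant_id"] == tenant_id]

    def get(self, schedule_id: str) -> Optional[Dict[str, Any]]:   # GET /v1/schedules/{id}
        return self._rows.get(schedule_id)

    def update(self, schedule_id: str, body: Dict[str, Any]) -> bool:  # PUT /v1/schedules/{id}
        if schedule_id not in self._rows:
            return False
        # The id in the URL wins over any id supplied in the body.
        self._rows[schedule_id] = {**body, "schedule_id": schedule_id}
        return True

    def delete(self, schedule_id: str) -> bool:                    # DELETE /v1/schedules/{id}
        return self._rows.pop(schedule_id, None) is not None
```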

CRUD for jobs

GET /v1/jobs

GET /v1/jobs/{jobId}

DELETE /v1/jobs/{jobId}

GET /v1/jobs/{jobId}/status

GET /v1/jobs/{jobId}/heartbeat

PUT /v1/jobs/{jobId}/status

* status in request body

PUT /v1/jobs/{jobId}/heartbeat

* heartbeat for this job (exact format TBD) in request body

NOTES:

- There is no POST; the Job Maker handles job creation.

- The worker will mark the job status as 'done' (or an equivalent final status) when it finishes.

- The /status and /heartbeat calls may be combined into a single call; this is not yet decided.
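If /status and /heartbeat were combined, one call could refresh the heartbeat and optionally set a status. The sketch below assumes that combined form; JobRecord, report, and the status names are hypothetical, and rejecting updates to finished jobs is a design choice made here, not something the blueprint specifies.

```python
import time
from dataclasses import dataclass
from typing import Optional

TERMINAL = {"done", "error"}   # hypothetical final status names


@dataclass
class JobRecord:
    job_id: str
    status: str = "queued"
    last_touched: float = 0.0


def report(job: JobRecord, status: Optional[str] = None,
           now: Optional[float] = None) -> None:
    """Combined status + heartbeat update.

    Every call refreshes last_touched (the heartbeat); a status, if given,
    is applied as well. Jobs that have already finished reject updates.
    """
    if job.status in TERMINAL:
        raise ValueError(f"job {job.job_id} already finished as {job.status!r}")
    job.last_touched = time.time() if now is None else now
    if status is not None:
        job.status = status
```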

CRUD for workers

GET /v1/workers

GET /v1/workers/{workerId}

GET /v1/workers/{workerId}/jobs/next

* return job info, format TBD

POST /v1/workers

* called when a worker is instantiated; returns a workerId so that the system can keep track of the worker

DELETE /v1/workers/{workerId}

* should be called by the worker when it shuts down cleanly
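The worker calls (register, fetch the next job, unregister) can be modeled in memory to show the one property that matters most: two workers polling at once must never be handed the same job. WorkerRegistry and its methods are hypothetical; here a threading.Lock provides atomicity, whereas a DB-backed service would need an equivalent atomic claim in the database.

```python
import itertools
import threading
from collections import deque
from typing import Deque, Dict, Optional, Set


class WorkerRegistry:
    """Hypothetical in-memory model of the /v1/workers calls."""

    def __init__(self) -> None:
        self._ids = itertools.count(1)
        self._workers: Set[int] = set()
        self._pending: Deque[str] = deque()   # job ids awaiting a worker
        self._assigned: Dict[str, int] = {}   # job id -> worker id
        self._lock = threading.Lock()

    def register(self) -> int:                           # POST /v1/workers
        with self._lock:
            worker_id = next(self._ids)
            self._workers.add(worker_id)
            return worker_id

    def unregister(self, worker_id: int) -> None:        # DELETE /v1/workers/{id}
        with self._lock:
            self._workers.discard(worker_id)

    def add_job(self, job_id: str) -> None:             # done by the Job Maker
        with self._lock:
            self._pending.append(job_id)

    def next_job(self, worker_id: int) -> Optional[str]:  # GET /v1/workers/{id}/jobs/next
        # Holding the lock makes "take a job and assign it" a single step.
        with self._lock:
            if worker_id not in self._workers:
                raise KeyError(f"unknown worker {worker_id}")
            if not self._pending:
                return None
            job_id = self._pending.popleft()
            self._assigned[job_id] = worker_id
            return job_id

    def assigned_to(self, job_id: str) -> Optional[int]:
        with self._lock:
            return self._assigned.get(job_id)
```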

Service

The service shall consist of a set of API nodes, worker nodes, and a DB.

API - Provides a RESTful interface for adding schedules to the DB

Worker - References schedules in the DB to schedule and perform jobs

DB - Tracks schedules and currently executing jobs

Database

- schedules

- jobs

- job faults
  - must record enough information to be useful for diagnosing failures
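A possible shape for the three tables, written as SQLite DDL driven from Python's sqlite3 module. Every column name is an assumption chosen to support the flows described in this document (per-tenant listing, heartbeats, retries, fault records); the blueprint does not define a schema. Note that SQLite enforces foreign keys only after PRAGMA foreign_keys is enabled on the connection.

```python
import sqlite3

SCHEMA = """
CREATE TABLE schedules (
    id          TEXT PRIMARY KEY,
    tenant_id   TEXT NOT NULL,          -- a real column, so per-tenant listing can use an index
    job_type    TEXT NOT NULL,
    schedule    TEXT NOT NULL,          -- exact format TBD
    next_run    INTEGER NOT NULL,       -- epoch seconds (hypothetical)
    metadata    TEXT NOT NULL DEFAULT '{}'
);
CREATE INDEX schedules_tenant ON schedules (tenant_id);
CREATE TABLE jobs (
    id           TEXT PRIMARY KEY,
    schedule_id  TEXT NOT NULL REFERENCES schedules (id) ON DELETE CASCADE,
    status       TEXT NOT NULL,
    worker_id    TEXT,
    last_touched INTEGER,               -- heartbeat used to detect dead workers
    retry_count  INTEGER NOT NULL DEFAULT 0
);
CREATE TABLE job_faults (
    id          INTEGER PRIMARY KEY AUTOINCREMENT,
    job_id      TEXT NOT NULL REFERENCES jobs (id) ON DELETE CASCADE,
    worker_id   TEXT,
    occurred_at INTEGER NOT NULL,
    message     TEXT NOT NULL           -- enough detail to diagnose the failure
);
"""


def open_db(path: str = ":memory:") -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    conn.execute("PRAGMA foreign_keys = ON")   # SQLite enforces FKs only when enabled
    conn.executescript(SCHEMA)
    return conn
```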

Implementation

The typical flow through the system is as follows:

- User makes a request to the Nova extension

- Nova extension passes the request to the API

- API picks the time of day for the schedule

- API adds the schedule entry to the DB

- Worker polls the DB for schedules needing action

- Worker creates a job entry in the DB

- Worker initiates the image snapshot

- Worker waits for completion while updating the 'last_touched' field in the job table (to indicate that the Worker has not died)

- Worker updates the DB to show that the job has been completed

- Worker resumes polling until a schedule needs action
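The worker steps above can be sketched as one pass of a polling loop. All six callables are hypothetical hooks standing in for the DB and the Nova snapshot call; the blueprint does not define these interfaces, and the 5-second poll interval is an arbitrary assumption.

```python
import time
from typing import Callable, Optional


def run_one_job(
    claim_due_schedule: Callable[[], Optional[str]],
    create_job: Callable[[str], str],
    start_snapshot: Callable[[str], str],
    snapshot_finished: Callable[[str], bool],
    touch_job: Callable[[str], None],
    finish_job: Callable[[str], None],
    poll_interval: float = 5.0,
) -> Optional[str]:
    """Run one pass of the worker flow; return the job id, or None if idle."""
    schedule_id = claim_due_schedule()          # poll DB for schedules needing action
    if schedule_id is None:
        return None
    job_id = create_job(schedule_id)            # create a job entry in the DB
    image_id = start_snapshot(schedule_id)      # initiate the image snapshot
    while not snapshot_finished(image_id):
        touch_job(job_id)                       # update last_touched: "still alive"
        time.sleep(poll_interval)
    finish_job(job_id)                          # mark the job as completed
    return job_id
```

A worker process would call run_one_job in a loop, sleeping for a while whenever it returns None, which corresponds to the final step of polling until another schedule needs action.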

Edge cases:

Worker dies in the middle of a job:

- A different worker will see that the job has not been updated in a while and take over, performing any cleanup it can.

- Jobs record where they left off and which image they were working on (this allows a job whose worker died in the middle of an upload to be resumed).
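Detecting and taking over a dead worker's job reduces to comparing last_touched against a timeout. In this sketch the 300-second timeout, the dictionary keys, and the function name are assumptions. Progress fields such as 'image_id' are left untouched so the new worker can resume or clean up. In a shared DB the takeover would need to be a conditional update (for example, matching on the old last_touched value) so that two workers cannot both claim the same stale job.

```python
from typing import Dict, List


def reclaim_stale_jobs(jobs: List[Dict], worker_id: str, now: float,
                       timeout: float = 300.0) -> List[Dict]:
    """Take over unfinished jobs whose heartbeat is older than `timeout` seconds."""
    reclaimed = []
    for job in jobs:
        stale = now - job["last_touched"] > timeout
        if job["status"] not in ("done", "error") and stale:
            job["worker_id"] = worker_id     # the new owner
            job["last_touched"] = now        # fresh heartbeat for the new owner
            reclaimed.append(job)
    return reclaimed
```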

Image upload fails:

- Retry a limited number of times; after that, leave the image in an error state.
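The retry rule can be sketched as a bounded loop. Here `upload` is a hypothetical hook that raises on failure, the limit of three attempts is an assumption (the blueprint does not fix a number), and "active"/"error" are illustrative state names.

```python
from typing import Callable


def upload_with_retries(upload: Callable[[], None], max_attempts: int = 3) -> str:
    """Try `upload` up to max_attempts times and return the image's final state."""
    for _ in range(max_attempts):
        try:
            upload()
            return "active"
        except Exception:
            pass  # a real worker would log the failure here before retrying
    return "error"  # retries exhausted: leave the image in an error state
```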

Instance no longer exists:

- Remove the schedule for the instance.
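A minimal sketch of this check, run before creating a job for a due schedule. The 'instance_id' metadata key and both callables are hypothetical; the sketch only shows the rule that a schedule whose instance is gone is deleted rather than executed.

```python
from typing import Callable, Dict


def handle_due_schedule(schedule: Dict, instance_exists: Callable[[str], bool],
                        delete_schedule: Callable[[str], None]) -> bool:
    """Return True if a job should be created for this schedule.

    If the instance named in the schedule's metadata no longer exists, the
    schedule is deleted instead, so it never fires again.
    """
    instance_id = schedule["metadata"]["instance_id"]
    if not instance_exists(instance_id):
        delete_schedule(schedule["schedule_id"])
        return False
    return True
```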