Difference between revisions of "Sahara/PluggableProvisioning"


Revision as of 14:20, 26 April 2013


Overview

The Savanna Pluggable Provisioning Mechanism aims to deploy Hadoop clusters and integrate them with third-party vendor management tools such as Cloudera Management Console, Hortonworks Ambari, and Intel Hadoop Distribution.

Additionally, the mechanism introduces two kinds of objects: node processes and node types.

A Node Process is a process that can run on a node in the cluster. The supported node processes are:

- management / mgmt

- jobtracker / jt

- namenode / nn

- tasktracker / tt

- datanode / dn

A Node Type describes which node processes (one or several) run on a specific node of the cluster. Here are some node types:

- mgmt

- jt+nn

- jt

- nn

- tt+dn

- tt

- dn
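To make the relation between node types and node processes concrete, here is a minimal Python sketch. It assumes a node type is written as process abbreviations joined by `+` (as in `jt+nn`); the names `NODE_PROCESSES` and `parse_node_type` are hypothetical and not part of Savanna.

```python
# Hypothetical mapping of the abbreviations listed above to full process names.
NODE_PROCESSES = {
    "mgmt": "management",
    "jt": "jobtracker",
    "nn": "namenode",
    "tt": "tasktracker",
    "dn": "datanode",
}


def parse_node_type(node_type: str) -> frozenset:
    """Split a node type such as "jt+nn" into the set of full process names.

    Raises ValueError if an abbreviation is not a known node process.
    """
    processes = set()
    for abbrev in node_type.split("+"):
        abbrev = abbrev.strip()
        if abbrev not in NODE_PROCESSES:
            raise ValueError(f"unknown node process: {abbrev!r}")
        processes.add(NODE_PROCESSES[abbrev])
    return frozenset(processes)


if __name__ == "__main__":
    for node_type in ["mgmt", "jt+nn", "tt+dn"]:
        print(node_type, "->", sorted(parse_node_type(node_type)))
```

Representing a node type as a set means `jt+nn` and `nn+jt` describe the same node, which matches how the document uses both spellings.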

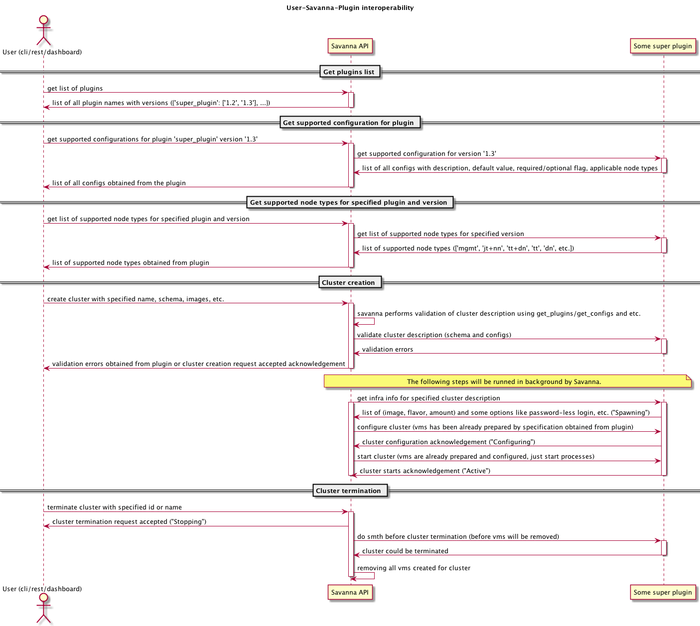

User-Savanna-Plugin interoperability

The Savanna Pluggable Mechanism consists of three components:

- Image Registry;

- VM Manager;

- Plugins.

Component responsibilities:

- Image Registry:
  - register images in Savanna;
  - add/remove tags to/from images;
  - get images by tags;
- VM Manager:
  - launch/terminate VMs;
  - get VM status;
  - SSH/SCP/etc. access to VMs;
- Plugins:
  - get extra configs (specific to the concrete plugin);
  - launch/terminate clusters;
  - add/remove nodes;
  - validation operations.
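The Image Registry and VM Manager responsibilities listed above can be sketched as Python abstract interfaces, with a small in-memory registry for illustration. All class and method names here are hypothetical, and "get images by tags" is assumed to mean "images carrying every requested tag".

```python
from abc import ABC, abstractmethod


class ImageRegistry(ABC):
    """Hypothetical interface for the Image Registry responsibilities."""

    @abstractmethod
    def register_image(self, image_id: str, tags: list, description: str = "") -> None: ...

    @abstractmethod
    def add_tags(self, image_id: str, tags: list) -> None: ...

    @abstractmethod
    def remove_tags(self, image_id: str, tags: list) -> None: ...

    @abstractmethod
    def get_images_by_tags(self, tags: list) -> list: ...


class VMManager(ABC):
    """Hypothetical interface for the VM Manager responsibilities."""

    @abstractmethod
    def launch(self, flavor: str, image_id: str) -> str: ...  # returns a VM id

    @abstractmethod
    def terminate(self, vm_id: str) -> None: ...

    @abstractmethod
    def get_status(self, vm_id: str) -> str: ...

    @abstractmethod
    def execute(self, vm_id: str, command: str) -> str: ...  # e.g. a command run over SSH


class InMemoryImageRegistry(ImageRegistry):
    """Toy registry that keeps image tags in a dict instead of Glance."""

    def __init__(self):
        self._images = {}  # image_id -> {"tags": set of str, "description": str}

    def register_image(self, image_id, tags, description=""):
        self._images[image_id] = {"tags": set(tags), "description": description}

    def add_tags(self, image_id, tags):
        self._images[image_id]["tags"].update(tags)

    def remove_tags(self, image_id, tags):
        self._images[image_id]["tags"].difference_update(tags)

    def get_images_by_tags(self, tags):
        # Assumption: an image matches only if it carries all requested tags.
        wanted = set(tags)
        return sorted(i for i, info in self._images.items() if wanted <= info["tags"])
```

Keeping these as interfaces lets a plugin use the registry and VM helpers optionally, without depending on how Savanna implements them.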

Zones of responsibility

- Savanna:
  - provides resources and infrastructure (pre-configured VMs, DNS, etc.);
  - cluster topologies, node and storage placement;
  - cluster/Hadoop/tooling configurations and state storage;
- Plugins:
  - cluster monitoring;
  - installation and management of additional tools (Pig, Hive, etc.);
  - final cluster configuration and Hadoop management;
  - adding/removing nodes to/from the cluster (using resources prepared by Savanna).

Workflows

Cluster creation workflow for the user:

- get the list of plugins;
- specify the cluster name;
- choose the plugin version and Hadoop version (only minor variations);
- specify the cluster configuration:
  - choose a common cluster configuration if needed;
  - specify flavors for the job tracker and name node;
  - [optional] choose a flavor for the management node (if applicable);
  - add worker nodes with a specific node type (data node, task tracker, or data node + task tracker) and flavor (each can be added several times with different flavors or templates);
  - [optional] fetch the list of custom templates and override the cluster configuration using these templates;
  - [optional] override some cluster parameters;
- launch the cluster;
- Savanna performs basic validation and passes the cluster configuration to the plugin;
- the plugin validates the request and, if it is valid, generates an infrastructure request;
- the infrastructure request contains:
  - a list of tuples (flavor, image, number of instances);
  - a list of actions to perform after the machines start, e.g. setting up password-less SSH and DNS;
- Savanna creates and prepares the infrastructure and passes its description to the plugin;
- the plugin launches the Hadoop cluster.
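The infrastructure request described in this workflow could look roughly like the following Python sketch. `InfraRequest`, `build_infra_request`, the node-group tuple layout, and the action names `passwordless_ssh` and `setup_dns` are all hypothetical; flavor and image names are example values only.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class InfraRequest:
    """Hypothetical container for the infrastructure request a plugin returns."""
    instances: list = field(default_factory=list)  # (flavor, image, count) tuples
    post_start_actions: list = field(default_factory=list)  # run once VMs are up


def build_infra_request(node_groups, actions=("passwordless_ssh", "setup_dns")):
    """Merge node groups that need the same (flavor, image) into one tuple.

    node_groups: iterable of (node_type, flavor, image, count) tuples, e.g.
    ("tt+dn", "m1.large", "base-img", 3). The node type does not change which
    VMs are requested, only how the plugin configures them later.
    """
    counts = Counter()
    for _node_type, flavor, image, count in node_groups:
        if count <= 0:
            raise ValueError(f"count must be positive, got {count}")
        counts[(flavor, image)] += count
    instances = [(flavor, image, n) for (flavor, image), n in sorted(counts.items())]
    return InfraRequest(instances=instances, post_start_actions=list(actions))
```

Merging by (flavor, image) keeps the request small; the plugin still has to remember which VM gets which node type when it configures the cluster.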

Savanna - plugins interoperability workflow:

- User fetches extra cluster configs from Savanna API (Savanna delegates this call to the concrete provisioning plugin);

- User launches cluster (adds/removes nodes) using Savanna API;

- Savanna parses the request and runs common validations on it;

- Savanna determines which provisioning plugin should be used;

- Savanna runs plugin-specific validation for the current operation;

- Savanna creates (modifies) cluster object in DB, returns response to user and starts background job that will provision and launch cluster;

- User receives response with info about created (modified) cluster from Savanna API;

- In the background, Savanna calls the “launch cluster” (or add/remove nodes) method of the provisioning plugin;

- The plugin receives the cluster configuration and can start VMs from tagged images, optionally using the VM Manager and Image Registry;

- The VM Manager provides helpers for SSH/SCP/etc. access to VMs;

- The plugin should configure and start the third-party vendor management tool on the management VM, and this tool will control the Hadoop cluster;

- The plugin can update the cluster status and info to expose information about the cluster.
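The Savanna side of this workflow (common validation, plugin selection, plugin validation, storing the cluster, then provisioning in the background) can be sketched in Python as follows. A plain dict stands in for the DB and a thread stands in for the background job; `handle_launch_request`, `common_validate`, the status strings, and the plugin method name `launch_cluster` are hypothetical.

```python
import threading


class ValidationError(Exception):
    pass


def common_validate(request):
    # Hypothetical checks shared by all plugins.
    for key in ("name", "plugin", "hadoop_version"):
        if not request.get(key):
            raise ValidationError(f"missing field: {key}")


def handle_launch_request(request, plugins, db):
    """Return (cluster record, background thread) following the steps above."""
    common_validate(request)
    plugin = plugins.get(request["plugin"])  # determine the provisioning plugin
    if plugin is None:
        raise ValidationError(f"unknown plugin: {request['plugin']}")
    plugin.validate_cluster(request)  # plugin-specific validation

    cluster = {"name": request["name"], "plugin": request["plugin"], "status": "Validated"}
    db[cluster["name"]] = cluster  # create the cluster object in the "DB"

    def provision():
        cluster["status"] = "Provisioning"
        try:
            plugin.launch_cluster(cluster)  # hypothetical "launch cluster" method
            cluster["status"] = "Active"
        except Exception as exc:  # expose failures through the cluster status
            cluster["status"] = f"Error: {exc}"

    worker = threading.Thread(target=provision, daemon=True)
    worker.start()
    # The caller can respond to the user now, before provisioning finishes.
    return cluster, worker
```

Returning before provisioning completes mirrors the workflow: the user gets the cluster record immediately and watches its status change as the plugin works.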

Python API level functions

Provisioning plugin functions:

- get_versions() - get all Hadoop versions that can be used with the plugin

- get_configs() - list of all configs supported by the plugin, with descriptions, defaults, and the node process to which each config applies

- get_supported_types() - list of all supported NodeTypes, for example, nn+jt and tt+dn

- validate_cluster(cluster_description) - custom validation

- get_infra(cluster_description) - the plugin should return a list of tuples (flavor, image, count, config=“reset_pswd, generate_keys, etc.”)

- configure_cluster(cluster_description, vms)

- start_cluster(cluster_description, vms)

- on_terminate_cluster(cluster_description)
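The plugin functions above map naturally onto a Python abstract base class. The sketch below is one possible shape under stated assumptions: `ProvisioningPluginBase`, `ToyPlugin`, and the dict layout of `cluster_description` are hypothetical, and treating `validate_cluster` and `on_terminate_cluster` as optional hooks with no-op defaults is a design choice, not something the list specifies.

```python
from abc import ABC, abstractmethod


class ProvisioningPluginBase(ABC):
    """Sketch of the plugin interface listed above (signatures are assumptions)."""

    @abstractmethod
    def get_versions(self):
        """Hadoop versions usable with this plugin."""

    @abstractmethod
    def get_configs(self):
        """Configs with description, default and applicable node process."""

    @abstractmethod
    def get_supported_types(self):
        """Supported node types, e.g. "nn+jt" and "tt+dn"."""

    def validate_cluster(self, cluster_description):
        """Custom validation hook; accepts everything by default."""

    @abstractmethod
    def get_infra(self, cluster_description):
        """List of (flavor, image, count, config) tuples."""

    @abstractmethod
    def configure_cluster(self, cluster_description, vms): ...

    @abstractmethod
    def start_cluster(self, cluster_description, vms): ...

    def on_terminate_cluster(self, cluster_description):
        """Hook called when the cluster is terminated; no-op by default."""


class ToyPlugin(ProvisioningPluginBase):
    """Hypothetical plugin; node_groups is keyed by node type."""

    def get_versions(self):
        return ["1.1.1"]

    def get_configs(self):
        return [{"name": "dfs.replication", "default": 3,
                 "node_process": "namenode", "description": "Default block replication"}]

    def get_supported_types(self):
        return ["nn+jt", "tt+dn"]

    def validate_cluster(self, cluster_description):
        unknown = set(cluster_description["node_groups"]) - set(self.get_supported_types())
        if unknown:
            raise ValueError(f"unsupported node types: {sorted(unknown)}")

    def get_infra(self, cluster_description):
        return [(group["flavor"], cluster_description["image"], group["count"], "generate_keys")
                for group in cluster_description["node_groups"].values()]

    def configure_cluster(self, cluster_description, vms):
        self.configured = list(vms)  # a real plugin would push configs over SSH

    def start_cluster(self, cluster_description, vms):
        self.started = True  # a real plugin would start the management tool here
```

Marking only the core methods abstract means a minimal plugin cannot be instantiated without them, while the validation and termination hooks stay optional.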

The Image Registry will provide the ability to set Glance image properties that store information about an image, for example:

- _savanna_tag_<tag-name>: True

- _savanna_description: “short description”

- _savanna_os: “ubuntu-12.04-x86_64”

- _savanna_hadoop: “hadoop-1.1.1”
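The property naming scheme above can be illustrated with a few pure-Python helpers that build and read such a property dict; no Glance client calls are made. The helper names are hypothetical, and the sketch assumes tag values are stored as the string `"True"` (it also accepts a boolean `True` when reading).

```python
TAG_PREFIX = "_savanna_tag_"


def to_glance_properties(tags, description=None, os_name=None, hadoop=None):
    """Build the property dict described above (a plain dict, not a Glance call)."""
    props = {TAG_PREFIX + tag: "True" for tag in tags}
    optional = {
        "_savanna_description": description,
        "_savanna_os": os_name,
        "_savanna_hadoop": hadoop,
    }
    props.update({key: value for key, value in optional.items() if value is not None})
    return props


def tags_from_properties(props):
    """Recover the tag names from an image's properties."""
    return sorted(key[len(TAG_PREFIX):] for key, value in props.items()
                  if key.startswith(TAG_PREFIX) and str(value) == "True")


def images_with_tags(images, wanted):
    """Filter {image_id: properties} to images carrying every wanted tag."""
    wanted = set(wanted)
    return sorted(image_id for image_id, props in images.items()
                  if wanted <= set(tags_from_properties(props)))
```

Encoding each tag as its own property key means adding or removing a tag is a single property update, and images can be matched on tags without parsing a combined tag string.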

Image Registry functions:

- cluster image-related properties:
  - base image info (applied to all nodes in cluster):
    - base_image_tag
    - base_image_id
  - management image info (applied to management node only):
    - management_image_tag
    - management_image_id


- ability to register image with some tags and description

- ability to add/remove tag to/from image
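One way the cluster image properties above might be resolved into concrete image ids is sketched below in Python. Two rules here are assumptions, not taken from the text: an explicit `*_image_id` takes precedence over a `*_image_tag`, and the management node falls back to the base image when no management image is given. `resolve_image`, `resolve_cluster_images`, and the `images_by_tag` lookup callable are hypothetical.

```python
def resolve_image(image_id, image_tag, images_by_tag):
    """Return an explicit image id if given, else the first image with the tag.

    images_by_tag: hypothetical callable mapping a tag to a sorted list of ids.
    Returns None when neither an id nor a tag is provided.
    """
    if image_id:
        return image_id  # assumption: an explicit id wins over a tag
    if image_tag:
        matches = images_by_tag(image_tag)
        if not matches:
            raise LookupError(f"no image tagged {image_tag!r}")
        return matches[0]
    return None


def resolve_cluster_images(cluster, images_by_tag):
    """Resolve base and management images for a cluster description dict."""
    base = resolve_image(cluster.get("base_image_id"),
                         cluster.get("base_image_tag"), images_by_tag)
    if base is None:
        raise ValueError("a base image id or tag is required")
    mgmt = resolve_image(cluster.get("management_image_id"),
                         cluster.get("management_image_tag"), images_by_tag)
    # Assumption: without a management image, the management node uses the base image.
    return {"base": base, "management": mgmt or base}
```

Resolving tags to ids once, before VMs are requested, keeps the infrastructure request concrete even if image tags change later.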