Difference between revisions of "KeyManager"


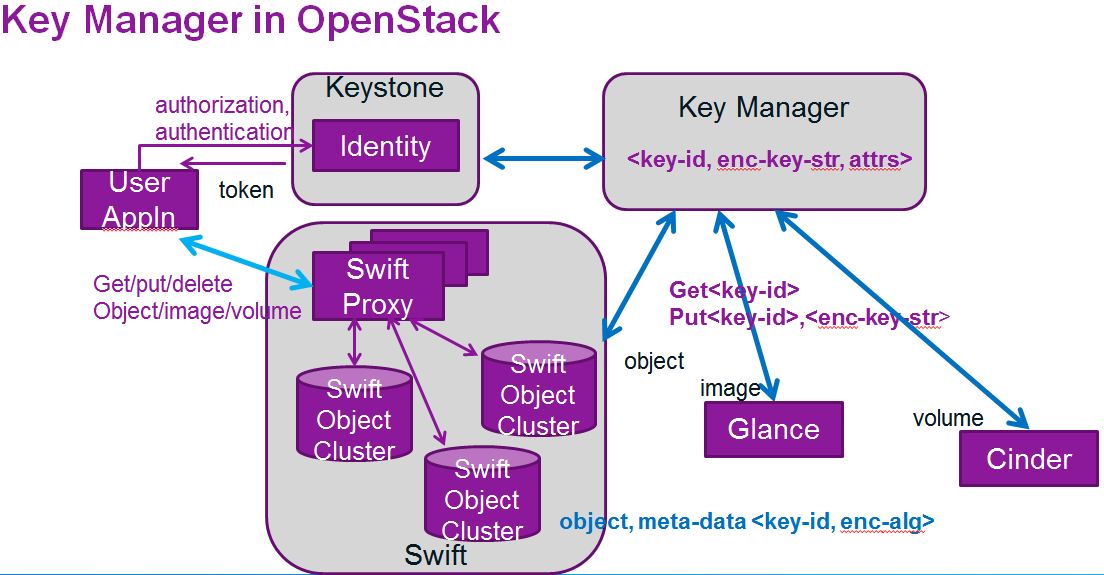

[[File:Key-manager.JPG||Key Manager in OpenStack]]

Revision as of 23:37, 6 March 2013


Key Manager

Server side encryption with key management would make data protection more readily available, enable harnessing of any special hardware encryption support on the servers, make available a larger set of encryption algorithms, and reduce client maintenance effort. Amazon and Google's object storage systems provide transparent data encryption. Recently interest has grown in [[1]] to provide server side encryption in Cinder (volume) [1], Swift (object) [2], and Glance (snapshot).

Protecting data involves not only encryption support but also key management: creating, storing, protecting, and providing ready access to the encryption keys. The keys would need to be stored on a device separate from the one housing the data they seek to protect. Key management could be a separate OpenStack service or a sub-service of Keystone, OpenStack's identity service.

The keys themselves would ideally be random, of the desired length, carry associated meta data such as ownership, and be encrypted before being stored.

Security Model

- Protection of data at rest: the encrypted data and the encryption keys are held in separate locations. Stealing the data disk still leaves the data protected.

- Keys opaque: The keys themselves are encrypted using "Master" keys.

- Master Key Protection: Master keys are protected in hardware using Trusted Platform Module (TPM) technology. Keys are released only to trusted host machines (those whose BIOS and initial boot sequences are measurably good).

- Secure Master Key Transmission: TPM technology is used to transfer master keys between cooperating services and between sibling services (in the case of horizontal scaling).

- Support Dual Locking: High value data could be protected with a user/project/domain specific key and a service key. This is akin to using two keys such as with bank safe-deposit boxes, a bank key and a customer key.

- Limited Knowledge: The Key Manager will not maintain a mapping between keys and encrypted entities. Instead, each encrypted entity will carry a key-id in its meta data (a pointer to the key to be used to unlock it). With dual keys, however, the customer key is not referenced; it is implicit as part of the authentication.

- Limited Access: Authorization and access control mechanisms limit access to keys.

- Protection from denial of service: multiple replicas of key manager.

- Data Isolation: Should some audit or law enforcement authority demand access for a certain customer, data belonging to other customers is not exposed because they use different keys.

Keys in use

Swift (object storage) example: assume an object X is stored in encrypted form on the Swift object store. Let enc-object-x be the encrypted representation of object X. Then the Swift file system would contain: enc-object-x, with meta_data: <enc:true, algorithm:aes-cbc, key-id: 1234567899 >

Similarly, an encrypted Cinder volume might be represented as Volume<id>, meta_data: <enc:true, algorithm:aes-xts, key-id:abcdefghijklmnopqrstuvxyz>
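As a rough illustration of the two records above, the sketch below models the meta data as plain Python dictionaries and shows how a service might work out which key to request. The dictionary layout and the helper name `key_request_for` are assumptions made for this example; they are not part of Swift or Cinder.

```python
# Hypothetical in-memory records mirroring the two examples above.
swift_object = {
    "name": "enc-object-x",
    "meta_data": {"enc": True, "algorithm": "aes-cbc", "key-id": "1234567899"},
}
cinder_volume = {
    "name": "Volume<id>",
    "meta_data": {
        "enc": True,
        "algorithm": "aes-xts",
        "key-id": "abcdefghijklmnopqrstuvxyz",
    },
}


def key_request_for(entity):
    """Return (key_id, algorithm) for an encrypted entity, or None if it is not encrypted.

    The entity only carries a pointer (key-id) to its key, never the key itself.
    """
    meta = entity.get("meta_data", {})
    if not meta.get("enc"):
        return None
    return meta["key-id"], meta["algorithm"]
```

The Key Manager never sees this mapping; the service reads the key-id from the entity and presents it, with its token, when asking for the key.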

Encryption Algorithm

The available encryption algorithms would be obtained by the OpenStack services by directly querying the libraries used to provide encryption support. They would also be offered as options during user/project/domain creation, to set defaults. They may further be offered with each entity creation (though this could get too chatty for high-volume data such as objects). Typical options would be RSA, AES, DES, etc.

Design Considerations

High Availability

Think of the key manager as a dictionary mapping <key-ids> to <key-strings>. The keys have to be as accessible as the objects they encrypt. The Key Manager backing store could be something along the lines of Swift, which provides high availability and redundancy by way of Swift proxies and multiple replication sites. Alternatively, the backing store could be mirrored databases. Ideally the mirrors or replication sites should be in different geographical zones.

For security, keys and the data they seek to protect should not be co-resident on the same physical device. Given this constraint, should one take a Swift backing store solution approach, it may be simple to introduce a separate Swift cluster to store the keys. The storage needs just for the keys would be less than the typical storage needs of Swift for object and snapshot/image storage.

Other high availability solutions that are typically used instead of Swift in a production environment also meet the needs of the Key Manager storage.

Opaque Keys

Keys while in storage will be encrypted for security. This calls for master keys to encrypt the key strings.

Protecting Master Keys

Master keys are long-lived, are used to encrypt a large number of keys, and require strong protection. These criteria recommend that master keys be readily accessible, stored locally, and held as securely as possible. Trusted Compute Platform storage meets these requirements. [3]

Restricted Service Access

To improve security, access to the Key Manager service will be restricted to OpenStack services only, that is, bearers of service/admin tokens. Further, Compute Nodes (the least trusted of the hosts) will not be provided access, which is also the reason behind the no-db-compute feature.

Restricted Key Access

Keys are owned by the service that creates them, and access to such keys is limited to the service introducing them.

The exception to the above is the wider scope keys that are used in dual locking, that is, the User/Project/Domain keys, which belong to the Identity Service, which is a part of Keystone. Keystone's Trust feature will be used to delegate access to such keys to the encryption service needing them. Delegation comes with an expiry period. Delegation also brings with it a need for services to access the Keystone Identity service master key. Transfer of the Keystone Identity master key from one service to another can be securely performed using TPM symmetric key sharing protocols. [4]

Side Benefits

- Communication between the service and the key manager does not need to be further encrypted using SSL or HTTPS because the keys flying between them are at all times encrypted. The decrypted key string would at any time reside only on the service that seeks to save or use it.

- Keys used by different OpenStack services could reside in a single storage system, but if one service were to be compromised, the keys from other services would still be safe.

- Further, should there be a desire to change a master key, only keys stored by that service need to be re-encrypted. The actual data that they were used to encrypt does not need to be re-encrypted.
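The opaque-key idea and the last benefit above can be sketched as follows. This is a minimal illustration only: the function names (`wrap`, `unwrap`, `rotate_master_key`) are hypothetical, and the cipher is a stand-in built from an HMAC-SHA256 keystream so that the example runs with the Python standard library. A real deployment would use a vetted key-wrapping or authenticated encryption scheme, which the source does not specify.

```python
import hashlib
import hmac
import secrets

NONCE_LEN = 16


def _keystream(master_key: bytes, nonce: bytes, length: int) -> bytes:
    """Stand-in keystream (HMAC-SHA256 in counter mode); for illustration only."""
    out = b""
    counter = 0
    while len(out) < length:
        block = nonce + counter.to_bytes(4, "big")
        out += hmac.new(master_key, block, hashlib.sha256).digest()
        counter += 1
    return out[:length]


def wrap(master_key: bytes, key_string: bytes) -> bytes:
    """Encrypt a key-string under a master key; the result is what gets stored."""
    nonce = secrets.token_bytes(NONCE_LEN)
    stream = _keystream(master_key, nonce, len(key_string))
    return nonce + bytes(a ^ b for a, b in zip(key_string, stream))


def unwrap(master_key: bytes, wrapped: bytes) -> bytes:
    """Recover the key-string; only the service holding the master key can do this."""
    nonce, body = wrapped[:NONCE_LEN], wrapped[NONCE_LEN:]
    stream = _keystream(master_key, nonce, len(body))
    return bytes(a ^ b for a, b in zip(body, stream))


def rotate_master_key(store: dict, old_master: bytes, new_master: bytes) -> None:
    """Re-wrap every stored key; data encrypted with those keys is untouched."""
    for key_id in list(store):
        store[key_id] = wrap(new_master, unwrap(old_master, store[key_id]))


# Usage: one service's keys, re-keyed from an old master key to a new one.
old_master = secrets.token_bytes(32)
new_master = secrets.token_bytes(32)
data_key = secrets.token_bytes(32)
store = {"1234567899": wrap(old_master, data_key)}
rotate_master_key(store, old_master, new_master)
```

After the rotation the stored entry can be unwrapped only with the new master key, and it still yields the same data key, so no object or volume needs to be re-encrypted.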

Key Manager in OpenStack

Keys

- API

get <key-id> <authorization-token>

put <key-id> <encrypted-key-string> <authorization-token>

delete <key-id> <authorization-token>

update <key-id> <authorization-token>
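A minimal sketch of these four calls, assuming a hash-table backing store, might look like the following. The class name `KeyManagerStub`, the token check, and the error types are assumptions for illustration. The source does not say what `update` changes, so this sketch assumes it replaces the stored encrypted key string and therefore takes that string as an extra argument.

```python
class KeyManagerStub:
    """Hypothetical in-memory key manager: a dictionary of key-id -> encrypted key string."""

    def __init__(self, valid_tokens):
        # Assumption: tokens are checked against a fixed set; a real service
        # would validate them with the identity service instead.
        self._valid_tokens = set(valid_tokens)
        self._keys = {}

    def _authorize(self, token):
        if token not in self._valid_tokens:
            raise PermissionError("invalid authorization token")

    def get(self, key_id, token):
        self._authorize(token)
        return self._keys[key_id]  # raises KeyError if the key-id is unknown

    def put(self, key_id, encrypted_key_string, token):
        self._authorize(token)
        if key_id in self._keys:
            raise ValueError("key-id already exists; use update")
        self._keys[key_id] = encrypted_key_string

    def delete(self, key_id, token):
        self._authorize(token)
        del self._keys[key_id]

    def update(self, key_id, encrypted_key_string, token):
        # Assumption: update replaces the stored encrypted key string.
        self._authorize(token)
        if key_id not in self._keys:
            raise KeyError(key_id)
        self._keys[key_id] = encrypted_key_string
```

The stub only ever handles encrypted key strings, in keeping with the opaque-keys design; encryption and decryption stay with the calling service.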

Key Scope:

- Per entity (entity could be a volume, an object, a VM image/snapshot)

- Per user

- Per project (within a domain)

- Per domain

For strong encryption, typically a key is used in conjunction with an initialization vector (IV). The per-entity key would serve as an IV. It could be used alone or in conjunction with a wider scoped key, such as a domain scope key.

Key-Size

- 128, 192, 256, .. 2048 .. longer or shorter (possibly used with padding).

Some algorithms require longer keys, so we support a wide range.
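As a small sketch of generating random key strings across this range of sizes, the example below uses Python's standard `secrets` module. The function name `generate_key` and the restriction to whole bytes are assumptions made for the example.

```python
import secrets


def generate_key(bits: int) -> bytes:
    """Return a random key-string of the requested size in bits.

    Assumption: sizes are whole bytes (multiples of 8 bits).
    """
    if bits <= 0 or bits % 8 != 0:
        raise ValueError("key size must be a positive multiple of 8 bits")
    return secrets.token_bytes(bits // 8)


# Usage: the sizes listed above.
keys_by_size = {bits: generate_key(bits) for bits in (128, 192, 256, 2048)}
```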

Phased Implementation

Master Key Handling

A phase I implementation would use the Python Key Ring in the first pass. This is readily available through OpenStack Common today. Phase II would move to the full-fledged TPM solution.

Key Flow

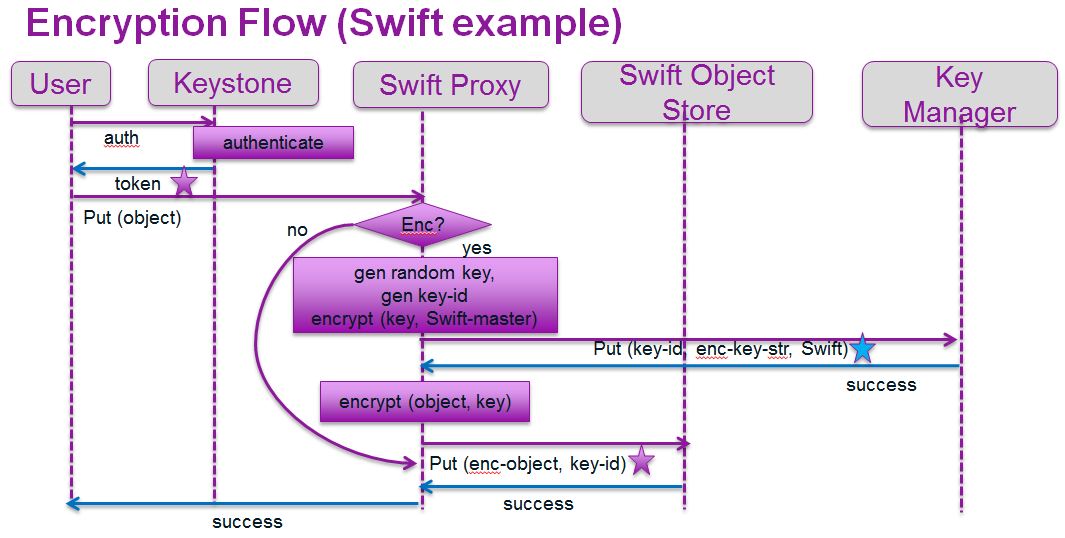

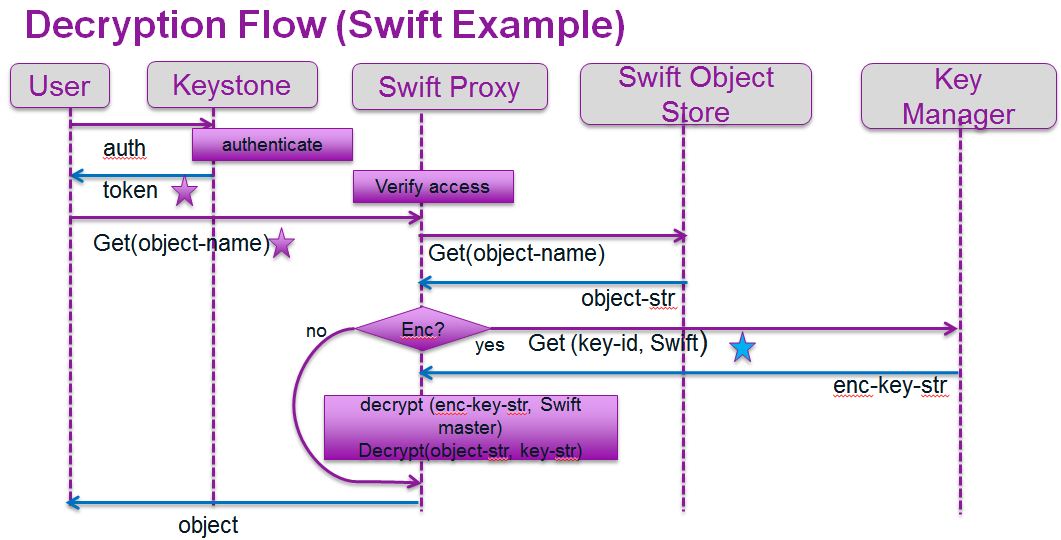

The figures below illustrate how the Key Manager fits into the regular flow of putting and getting an object in Swift. For simplicity, caching of keys and secondary key handling (for dual locking) is omitted.

Caching Keys

Key Manager’s keys need to be accessible at the same level as the objects they encrypt, to ensure ready access. The keys themselves could be cached at the service endpoint using them with an expiration equal to or less than that of the access token lifetime used to obtain them. Caching reduces network traffic and the load on the key manager. With dual keys, where the wider scope key is obtained through access delegation, the lifetime would be that of the delegation period.
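The expiry rule described above can be sketched as a small cache kept at the service endpoint. The class name `KeyCache`, its method names, and the injectable clock are assumptions for illustration; the only rule taken from the text is that a cached key must not outlive the access token (or delegation) used to obtain it.

```python
import time


class KeyCache:
    """Hypothetical endpoint-side cache of key strings with expiry."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._entries = {}  # key_id -> (key_string, expires_at)

    def store(self, key_id, key_string, token_seconds_left, max_ttl=None):
        # Never cache longer than the remaining token (or delegation) lifetime.
        ttl = token_seconds_left
        if max_ttl is not None:
            ttl = min(ttl, max_ttl)
        self._entries[key_id] = (key_string, self._clock() + ttl)

    def lookup(self, key_id):
        """Return the cached key string, or None if it is absent or has expired."""
        entry = self._entries.get(key_id)
        if entry is None:
            return None
        key_string, expires_at = entry
        if self._clock() >= expires_at:
            del self._entries[key_id]
            return None
        return key_string
```

On a miss, the service would fetch the key from the Key Manager again with a fresh token, which is where the reduction in network traffic and key manager load comes from.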

Concerns and Questions

- With another service, the Key Manager, in the picture, we have another component that could fail. But encryption will always need keys to be maintained, so this would be a cost of the feature. Caching keys mitigates some of the problems, and using TPM protects the keys while they are being saved and transmitted by way of encryption, so that they are only decrypted at the point of use.

- Do we need to support KMIP in the Key Manager? If the keys are just for end-user encryption, perhaps not. However, if we desire to use the key manager to save private and public keys of the services in OpenStack, KMIP would be good to have.

- Data transfer overhead: Swift uses Rsync for file transfer during replication. Any encryption algorithm that uses some form of block cipher chaining or new initialization vector each time would result in the object representation changing drastically on each update. This would result in a larger network payload for transmission.

- Unauthorized key deletion: If we use a Swift-based system to store keys, an attacker who breaks into a Swift key storage node could insert tombstone records to mimic a legitimate deletion, and the keys would then be deleted by a reaper task. This would be no new security hazard beyond what Swift deals with today. Perhaps we could introduce a check that a request to delete a key was logged before the key is deleted.

- Wary of losing control of encryption key(s): Support the use case where the end user provides the encryption key (and stores a copy of their own key, and is responsible for maintaining safety of the key). The said key will not then be saved in the Key Manager.

- Do we need an IV (initialization vector) for each object encrypted? Yes, if we take the approach of a common key for a project or domain. In this case the IV would need to be encrypted, and could be stored against a key-id. We could specify "compound-encryption" to imply the use of a master key in conjunction with the IV (accessed via the iv-id attached to the object meta-data).

- No re-keying in phase 1: background tasks for object re-keying, such as that mentioned in the Mirantis blog, are not addressed.

Implementation versions

Phase 1: Develop a stub Key Manager service and specify encryption parameters in the URL.

The key manager could just be a hash table in the first version, to get all the APIs specified and implemented and to get the plumbing correct. Support a single, most popular encryption algorithm. This would fully implement object encryption.

Phase 2: Make the Key Manager a Swift instance, with multiple zones for storage. This would support true HA and fault tolerance.

Phase 3: Support multiple encryption algorithms. For instance, volume encryption may prefer XTS, an encryption strategy that uses the sector address.

Phase 4: Reaper routine to change a master key for a service.

Glossary

Key-string: A string of bits used to encrypt data. Ideally auto-generated using a random number generator that exploits entropy. Intel's hardware random number generator is a high speed source of quality randomness.

Key-id: a unique ID used to index a key-string in the system. The key-id will be attached as meta data to the encrypted object/volume.

Master-key: a key-string used to encrypt the keys (key-strings) before saving in the key manager, saved in trusted storage at the service end-point.

TPM: Trusted Platform Module

References

- [1] https://blueprints.launchpad.net/nova/+spec/encrypt-cinder-volumes

- [2] http://www.mirantis.com/blog/openstack-swift-encryption-architecture

- [3] http://opensecuritytraining.info/IntroToTrustedComputing_files/Day1-7-tpm-keys.pdf

- [4] http://shazkhan.files.wordpress.com/2010/10/http__www-trust-rub-de_media_ei_lehrmaterialien_trusted-computing_keyreplication_.pdf