Heat/AutoScaling

Contents

- 1 Heat Autoscaling now and beyond

- 1.1 Now

- 1.2 Dependencies

- 1.3 When a stack is created with these resources the following happens:

- 1.4 When an alarm is triggered in watchrule.py the following happens:

- 1.5 Beyond

- 1.6 Use Cases

- 1.7 General Ideas

- 1.8 AutoScaling

- 1.9 Using AutoScale from Heat templates

- 1.10 When an alarm is triggered in Ceilometer the following happens:

- 1.11 The AutoScaling Resources

- 1.12 Outstanding Questions

- 1.13 Authentication

- 1.14 Securing Webhooks

Heat Autoscaling now and beyond

AS = AutoScaling

Now

The AWS AS is broken into a number of logical objects:

- AS group (heat/engine/resources/autoscaling.py)

- AS policy (heat/engine/resources/autoscaling.py)

- AS Launch Config (heat/engine/resources/autoscaling.py)

- Cloud Watch Alarms (heat/engine/resources/cloud_watch.py, heat/engine/watchrule.py)

Dependencies

Note that the in-template resource dependencies are:

- Alarm

- Group

- Policy

- Group

- Launch Config

- [Load Balancer] - optional

- Group

This means the creation order should be [LB, LC, Group, Policy, Alarm].
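The creation order can be derived mechanically from the dependencies with a topological sort. The following is a minimal sketch, not Heat's actual dependency code; the exact edges in `DEPENDS_ON` (in particular Alarm depending on Policy) are an assumption based on how AWS-style templates usually reference each other.

```python
from graphlib import TopologicalSorter

# Each key depends on the resources in its value set.
# ASSUMPTION: these edges are illustrative, not copied from Heat.
DEPENDS_ON = {
    "Alarm": {"Policy", "Group"},
    "Policy": {"Group"},
    "Group": {"LaunchConfig", "LoadBalancer"},
    "LaunchConfig": set(),
    "LoadBalancer": set(),  # optional in a real template
}


def creation_order(depends_on):
    """Return resource names ordered so that dependencies come first."""
    return list(TopologicalSorter(depends_on).static_order())


order = creation_order(DEPENDS_ON)
print(order)
```

The Load Balancer and Launch Config have no dependencies, so either may come first; Group, Policy, and Alarm always follow in that order.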

When a stack is created with these resources the following happens:

- Alarm: the alarm rule is written into the DB

- Policy: nothing interesting

- LaunchConfig: it is just storage

- Group: the Launch config is used to create the initial number of servers.

- the new server starts posting samples back to the cloud watch API

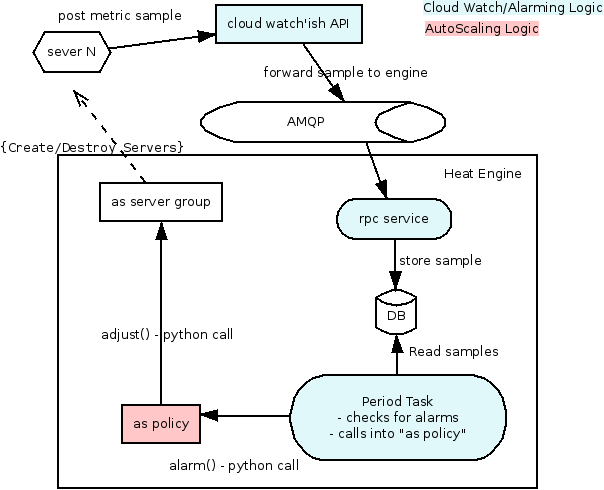

When an alarm is triggered in watchrule.py the following happens:

- the periodic task runs the watch rule

- when an alarm is triggered it calls (python call) the policy resource (policy.alarm())

- the policy figures out whether it needs to adjust the group size; if it does, it calls (again via Python) group.adjust()
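The call chain above is entirely in-process. A minimal sketch of it follows; the classes are hypothetical stand-ins, and their constructors and the threshold comparison are assumptions rather than the real signatures in watchrule.py or autoscaling.py.

```python
class Group:
    """Stand-in for the AS group resource; tracks only its size."""

    def __init__(self, size, min_size, max_size):
        self.size = size
        self.min_size = min_size
        self.max_size = max_size

    def adjust(self, change):
        # Clamp the new size to the group's bounds.
        self.size = max(self.min_size, min(self.max_size, self.size + change))


class Policy:
    """Stand-in for the AS policy resource."""

    def __init__(self, group, change):
        self.group = group
        self.change = change

    def alarm(self):
        g = self.group
        target = max(g.min_size, min(g.max_size, g.size + self.change))
        # Only call into the group if its size would actually change.
        if target != g.size:
            g.adjust(self.change)


class WatchRule:
    """Stand-in for a watch rule: fires when a sample exceeds a threshold."""

    def __init__(self, threshold, policy):
        self.threshold = threshold
        self.policy = policy

    def evaluate(self, sample):
        if sample > self.threshold:
            self.policy.alarm()  # plain Python call, no API round trip
            return True
        return False


def run_periodic_task(rules, sample):
    """Evaluate every rule against the latest sample."""
    return [rule.evaluate(sample) for rule in rules]


group = Group(size=1, min_size=1, max_size=3)
rule = WatchRule(threshold=80, policy=Policy(group, change=1))
run_periodic_task([rule], sample=95)  # alarm fires, group grows
run_periodic_task([rule], sample=10)  # below threshold, nothing happens
```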

Beyond

The following blueprint and its dependents currently reflect the design laid out in this document: https://blueprints.launchpad.net/heat/+spec/heat-autoscaling

Use Cases

- Users want to use AutoScale without using Heat templates.

- Users want to use AutoScale *with* Heat templates.

- Administrators or automated processes want to add or remove *specific* instances from a scaling group (for example, when a node was compromised or had a critical error).

- Users want to scale arbitrary resources.

General Ideas

- Implement scaling groups, policies, and monitoring integration in a separate API

- That separate API will be usable by end-users directly, or via Heat templates.

- That API will create a Heat template and its own Heat stack whenever an AutoScalingGroup is created within it.

- As events happen which trigger a policy that changes the number of instances in a scaling group, the AutoScale API will generate a new template, and update-stack the stack that it manages.

AutoScaling

AutoScaling will be delegated to a service external to Heat (but implemented inside the Heat project/codebase). It will be responsible for AutoScalingGroups and ScalingPolicies. Monitoring services (e.g. Ceilometer) will communicate with the AutoScaling service to execute policies, and the AutoScaling service will execute those policies by updating a stack in Heat.

The communication works as follows:

- When AutoScaling resources are created in Heat, they will register the data with the AutoScaling service via POSTs to its API. This includes the AutoScalingGroup and the ScalingPolicy.

- When Ceilometer (or any other monitoring service) hits an AutoScaling webhook, the AutoScaling service will execute the associated policy (unless it's on cooldown).

- During policy execution, the AutoScaling service will talk to Heat to manipulate the stack that lives within Heat.

Using AutoScale from Heat templates

The following new resources will be added:

- OS::Heat::AutoScalingGroup: Invokes the AS API to create a group.

- OS::Heat::AutoScalingPolicy: Invokes the AS API to create a policy.

- OS::Heat::AutoScalingAlarm: First invokes the AS API to create a webhook-type trigger, then creates a Ceilometer alarm that points at the webhook URL.

When an alarm is triggered in Ceilometer the following happens:

- Ceilometer will invoke the webhook associated with the alarm (served by the AS API)

- the AS Policy figures out whether it needs to adjust the group size; if it does, it updates the internal Heat template and posts an update-stack on the stack that it manages.
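Since policy execution is skipped while the policy is on cooldown, the webhook handler needs to remember when it last ran. A minimal sketch, assuming a hypothetical `PolicyExecutor` class and an `update_stack` callable that stands in for the real call to Heat:

```python
import time


class PolicyExecutor:
    """Runs a policy when its webhook is hit, honouring a cooldown."""

    def __init__(self, cooldown, update_stack, clock=time.monotonic):
        self.cooldown = cooldown          # seconds between executions
        self.update_stack = update_stack  # hypothetical stand-in for Heat's update-stack
        self.clock = clock                # injectable so the logic is testable
        self.last_run = None

    def on_webhook(self):
        now = self.clock()
        if self.last_run is not None and now - self.last_run < self.cooldown:
            return False  # still cooling down; ignore this trigger
        self.update_stack()
        self.last_run = now
        return True


# Usage with a fake clock so the example is deterministic.
fake_now = [0.0]
calls = []
executor = PolicyExecutor(
    cooldown=60,
    update_stack=lambda: calls.append("update-stack"),
    clock=lambda: fake_now[0],
)
first = executor.on_webhook()   # executes
fake_now[0] = 30.0
second = executor.on_webhook()  # ignored: inside the cooldown
fake_now[0] = 61.0
third = executor.on_webhook()   # executes again
```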

The AutoScaling Resources

ScalingGroup

A scaling group that can manage the scaling of arbitrary Heat resources.

- Properties:

- name

- max_size

- min_size

- cooldown

- resources: The mapping of resources that will be duplicated in order to scale.

The 'resources' mapping is duplicated for each scaling unit. For example, if the 'resources' property is specified as follows:

    resources:
      my_web_server:
        type: AWS::EC2::Instance
        ...

then if we scale to "2", the concrete resources included in the private stack's template will be as follows:

    my_web_server-1:
      type: AWS::EC2::Instance
      ...
    my_web_server-2:
      type: AWS::EC2::Instance
      ...

Multiple resources are supported and are scaled in lockstep. For example, if the 'resources' property is specified as follows:

    resources:
      my_web_server:
        type: AWS::EC2::Instance
        ...
      my_db_server:
        type: AWS::EC2::Instance
        ...

then the resulting template (when scaled to "2") will be:

    my_web_server-1: ...
    my_db_server-1: ...
    my_web_server-2: ...
    my_db_server-2: ...
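The duplication described above can be sketched as a small function. This is an illustration only: `expand_resources` is a hypothetical helper, and the `-<n>` suffix simply follows the naming used in the examples.

```python
import copy


def expand_resources(resources, count):
    """Duplicate every entry in `resources` once per scaling unit.

    Names get a "-<n>" suffix starting at 1.
    """
    expanded = {}
    for unit in range(1, count + 1):
        for name, definition in resources.items():
            # Deep copy so each unit can be modified independently.
            expanded[f"{name}-{unit}"] = copy.deepcopy(definition)
    return expanded


resources = {
    "my_web_server": {"type": "AWS::EC2::Instance"},
    "my_db_server": {"type": "AWS::EC2::Instance"},
}
scaled = expand_resources(resources, 2)
for name in scaled:
    print(name)
```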

ScalingPolicy

A scaling policy describes a particular type of change to a scaling group, such as "change capacity by -1", "change capacity by +10%", or "set capacity to 5".

- Properties:

- name

- group_id: the ID of the group that this policy will affect

- cooldown

- change: a number that has an effect based on change_type.

- change_type: one of "change_in_capacity", "percentage_change_in_capacity", or "exact_capacity" -- describes what this policy does (and the meaning of "change")
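To make the three change_type values concrete, here is a minimal sketch of how a new group size could be computed. `apply_policy` is a hypothetical helper; rounding percentage changes away from zero and clamping to the group's min_size and max_size are assumptions, not decisions recorded in this design.

```python
import math


def apply_policy(current, change, change_type, min_size, max_size):
    """Return the group size after applying a policy to `current`."""
    if change_type == "change_in_capacity":
        target = current + change
    elif change_type == "percentage_change_in_capacity":
        delta = current * change / 100.0
        # ASSUMPTION: round away from zero so a small percentage
        # still moves the group by at least one unit.
        target = current + (math.ceil(delta) if delta > 0 else math.floor(delta))
    elif change_type == "exact_capacity":
        target = change
    else:
        raise ValueError(f"unknown change_type: {change_type!r}")
    # ASSUMPTION: the result is clamped to the group's bounds.
    return max(min_size, min(max_size, target))


print(apply_policy(4, -1, "change_in_capacity", 1, 10))
print(apply_policy(10, 10, "percentage_change_in_capacity", 1, 20))
print(apply_policy(4, 5, "exact_capacity", 1, 10))
```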

WebHookTrigger

Represents a revocable webhook endpoint for executing a policy.

For example, when you create a webhook for a policy, a new URL endpoint will be created in the form of http://as-api/webhooks/<random_hash>. When that URL is requested, the policy will be executed.

- Properties:

- policy_id: The ID of the policy.

- Attributes:

- webhook_url: The webhook URL.
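A minimal sketch of creating and revoking such endpoints follows. `WebhookRegistry` is a hypothetical in-memory class; the base URL is taken from the example above, and the token length is an assumption.

```python
import secrets

AS_API_BASE = "http://as-api/webhooks"  # base from the example URL above


class WebhookRegistry:
    """Maps random URL tokens to policy IDs (in memory, for illustration)."""

    def __init__(self):
        self._policy_by_token = {}

    @staticmethod
    def _token(webhook_url):
        return webhook_url.rsplit("/", 1)[-1]

    def create(self, policy_id):
        token = secrets.token_hex(32)  # ASSUMPTION: 256 bits of randomness
        self._policy_by_token[token] = policy_id
        return f"{AS_API_BASE}/{token}"

    def lookup(self, webhook_url):
        """Return the policy ID for a URL, or None if unknown or revoked."""
        return self._policy_by_token.get(self._token(webhook_url))

    def revoke(self, webhook_url):
        """Remove the endpoint; return True if it existed."""
        return self._policy_by_token.pop(self._token(webhook_url), None) is not None


registry = WebhookRegistry()
url = registry.create("policy-1")
```

After `registry.revoke(url)`, a request to the same URL no longer resolves to a policy.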

ScheduleTrigger

Defines a trigger that will execute the policy at scheduled times. Useful for scaling up before regular expected load.

- Properties:

- policy_id: The ID of the policy.

- cron: a cron-style schedule string (optional; exclusive with 'at')

- at: an at-style schedule string (optional; exclusive with 'cron')
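The exclusivity of 'cron' and 'at' could be checked when the trigger is created. The sketch below uses a hypothetical `validate_schedule_trigger` function; requiring that one of the two is present is an assumption, since the properties above only say each is optional.

```python
def validate_schedule_trigger(properties):
    """Validate ScheduleTrigger properties and return the schedule kind."""
    if "policy_id" not in properties:
        raise ValueError("policy_id is required")
    has_cron = properties.get("cron") is not None
    has_at = properties.get("at") is not None
    if has_cron and has_at:
        raise ValueError("'cron' and 'at' are mutually exclusive")
    if not (has_cron or has_at):
        # ASSUMPTION: a trigger without any schedule is rejected.
        raise ValueError("one of 'cron' or 'at' must be set")
    return "cron" if has_cron else "at"


kind = validate_schedule_trigger({"policy_id": "p1", "cron": "0 8 * * 1-5"})
print(kind)
```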

Outstanding Questions

- Load Balancer integration: How will LB integration work? In CFN's autoscaling resource, there is a reference to the load balancer (that the user must create) in the AutoScalingGroup. In Heat's implementation, that load balancer is updated to refer to the instance IDs of the instances that are dynamically created. The AS API service will not have access to that Load Balancer resource living in the original stack, so how will it update the LB?

- More general inter-stack references: There are certainly other cases where a resource being autoscaled will need to have a relationship with a resource living in the user's stack. It would be nice if we can find a solution that solves the load balancer case along with the more general problem.

Authentication

- How do we authenticate the request from Ceilometer to AS?

- is this a special unprivileged user "ceilometer-alarmer" that we trust?

- The AS API should have access to a Trust for the user who owns the resources it manages, and pass that Trust to Heat.

Securing Webhooks

Many systems just treat the webhook URL as a secret (with a big random UUID in it, generated *per client*). I think this is actually fine, but it has two problems we can easily solve:

- URLs can be seen in many places other than the actual SSL stream, for example in the logs of the AutoScale HTTP server.

- it's susceptible to replay attacks (if an attacker sniffs one request, they can resend it to repeat the same operation, such as scaling up or down)

The first one is easy to solve by putting some important data into the POST body. The second one can be solved with a nonce that has a timestamp component.

The API for creating a webhook in the autoscale server should return two things, the webhook URL and a random signing secret. When Ceilometer (or any client) hits the webhook URL, it should do the following:

- include a "timestamp" argument with the current timestamp

- include another random nonce

- sign the request with the signing secret

(to solve the first problem from above, the timestamp and nonce should be in the POST request body instead of the URL)

And anytime the AS service receives a webhook it should:

- verify the signature

- ensure that the timestamp is reasonably recent (no more than a few minutes old, and no more than a few minutes into the future)

- check to see if the timestamp+nonce has been used recently (we only need to store the nonces used within that "reasonable" time window)

On top of all of this, of course, webhooks should be revocable.
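The signing and verification steps above can be sketched with an HMAC over the POST body. This is an illustration under stated assumptions: HMAC-SHA256, a JSON body, the five-minute `MAX_SKEW` window, and the names `sign_request` and `WebhookVerifier` are all hypothetical choices, not part of the design.

```python
import hashlib
import hmac
import json
import secrets
import time

MAX_SKEW = 300  # seconds; ASSUMPTION for the "reasonable" window


def sign_request(secret, now=None):
    """Client side: build a POST body with timestamp and nonce, then sign it."""
    body = json.dumps(
        {
            "timestamp": int(time.time() if now is None else now),
            "nonce": secrets.token_hex(16),
        },
        sort_keys=True,
    ).encode()
    signature = hmac.new(secret, body, hashlib.sha256).hexdigest()
    return body, signature


class WebhookVerifier:
    """Server side: signature, freshness, and replay checks."""

    def __init__(self, secret, max_skew=MAX_SKEW):
        self.secret = secret
        self.max_skew = max_skew
        self._seen = {}  # (timestamp, nonce) -> timestamp

    def verify(self, body, signature, now=None):
        now = time.time() if now is None else now
        expected = hmac.new(self.secret, body, hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, signature):
            return False
        payload = json.loads(body)
        ts, nonce = payload["timestamp"], payload["nonce"]
        if abs(now - ts) > self.max_skew:
            return False  # too old or too far in the future
        # Only nonces inside the window need to be remembered.
        self._seen = {
            key: seen_ts
            for key, seen_ts in self._seen.items()
            if now - seen_ts <= self.max_skew
        }
        if (ts, nonce) in self._seen:
            return False  # replayed request
        self._seen[(ts, nonce)] = ts
        return True


secret = b"signing-secret-returned-at-webhook-creation"
body, signature = sign_request(secret, now=1000)
verifier = WebhookVerifier(secret)
accepted = verifier.verify(body, signature, now=1010)
replayed = verifier.verify(body, signature, now=1020)
```

Here the first call is accepted and the identical second call is rejected as a replay. Keeping the timestamp and nonce in the body rather than the URL also keeps them out of server access logs.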

[Qu] If we do this in the context of Heat (DB not accessible from the API daemon):

- We are going to have to send all webhooks to the heat-engine for verification.

- This is because we can't check the UUID in the API, which makes a DoS attack very easy. Any idea on how to solve this?

[An] This doesn't sound like a unique problem; it should be solved by rate limiting, as other parts of OpenStack do.

[Qu] Why make Autoscale a separate service?

[An] To clarify, service = REST server (to me)

Initially because someone wanted it separate (rackers). But I think it is the right approach long term.

Heat should not be in the business of implementing too many services internally, but rather having resources to orchestrate them.

monitoring <> Xaas.policy <> heat.resource.action()

Some cool things we could do with this:

- better instance HA (restarting servers when they are ill) - and smarter logic defining what is "ill"

- autoscaling

- energy saving (could be linked to autoscaling)

- automated backup (calling snapshots at regular time periods)

- autoscaling using shelving? (maybe for faster response)

I guess we could put all this into one service (an all purpose policy service)?