Edge Computing Group/Edge Reference Architectures


Latest revision as of 10:54, 1 June 2020


Objective

Define a reference architecture for edge and far edge deployments including OpenStack services and other open source components as building blocks.

"Edge" is a term whose definition varies with the particular problem a deployer is attempting to solve. Because these perspectives differ so widely, there are few aligned user stories to share with the OpenStack and StarlingX communities.

Overview

"The most mature view of edge computing is that it is offering application developers and service providers cloud computing capabilities, as well as an IT service environment at the edge of a network." - Cloud Edge Computing: Beyond the Data Center by the OSF Edge Computing Group

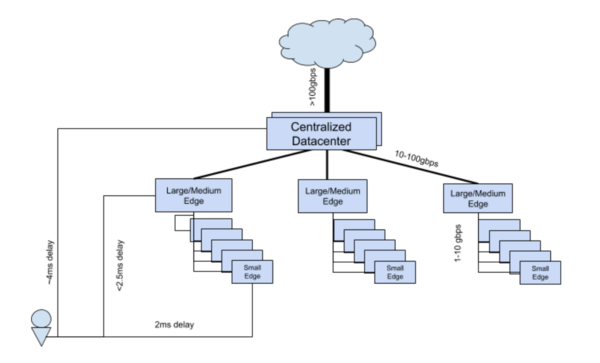

"We define Edge computing as an infrastructure deployment focused on reducing latency between an application and its consumer by increasing geographical proximity to the consumer." - Denver PTG (2018) definition

Tiers of computing sites

The table below captures the discussions at the PTG and refers to the definitions we created earlier in collaboration with the OPNFV Edge Cloud Project, as described in their whitepaper.

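| OpenStack Denver PTG (2018) | OPNFV Edge Cloud Project | Edge Glossary |
|---|---|---|
| **Central Datacenter**: A large centralized facility located within 100ms of consumers. Typically one deployer will have fewer than 10 of these. | | |
| **Edge Site**: A smaller site with delay 2.5ms - 4ms. One deployer could have hundreds of these. | Medium Edge/Large Edge | Aggregation Edge Layer |
| **Far Edge Site/Cloudlet**: A smaller site with delay around 2ms. One deployer could have thousands of these. | Small Edge | Access Edge Layer |
| **Fog computing**: Devices physically adjacent to the consumer (typically within the same building), within 1-2ms of consumers. One deployer could have tens of thousands of these. | | |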
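The mapping between the three vocabularies can also be kept as data, which makes it easy to translate a term from one community into another. The following is a minimal Python sketch, not part of the reference architecture itself: the `SiteTier` class, its field names, and the `find_tier` helper are assumptions made for illustration, while the tier names, latency figures, and site counts come from the PTG tier definitions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SiteTier:
    """One tier of computing site; field names are illustrative only."""
    ptg_name: str                         # OpenStack Denver PTG (2018) term
    latency_note: str                     # latency figure from the PTG definition
    typical_count: str                    # how many sites one deployer might run
    opnfv_terms: tuple = ()               # OPNFV Edge Cloud Project equivalents
    glossary_term: Optional[str] = None   # Edge Glossary equivalent, if any


TIERS = (
    SiteTier("Central Datacenter", "within 100ms of consumers", "fewer than 10"),
    SiteTier("Edge Site", "delay 2.5ms - 4ms", "hundreds",
             ("Medium Edge", "Large Edge"), "Aggregation Edge Layer"),
    SiteTier("Far Edge Site/Cloudlet", "delay around 2ms", "thousands",
             ("Small Edge",), "Access Edge Layer"),
    SiteTier("Fog computing", "within 1-2ms of consumers", "tens of thousands"),
)


def find_tier(term: str) -> Optional[SiteTier]:
    """Return the tier that any of the three vocabularies calls `term`."""
    wanted = term.strip().lower()
    for tier in TIERS:
        names = (tier.ptg_name, *tier.opnfv_terms, tier.glossary_term or "")
        if wanted in (n.lower() for n in names if n):
            return tier
    return None
```

For example, `find_tier("Small Edge")` returns the "Far Edge Site/Cloudlet" entry, and an unknown term returns `None`.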

User Stories

There isn't a one size fits all solution to infrastructure. One must select a design pattern which best addresses their needs.

The following patterns have been developed to address specific user stories in edge compute architecture. They assume the deployer has tens of regional datacenters, 50+ edge sites, and hundreds or thousands of far edge cloudlets.

User Stories are tracked in Storyboard

The team is working on defining related Glance and Keystone scenarios.

Design decisions

- Use a bare metal installation of OpenStack

- Do not use control plane HA

- Use vanilla ML2 OvS Neutron

Deployment Scenarios

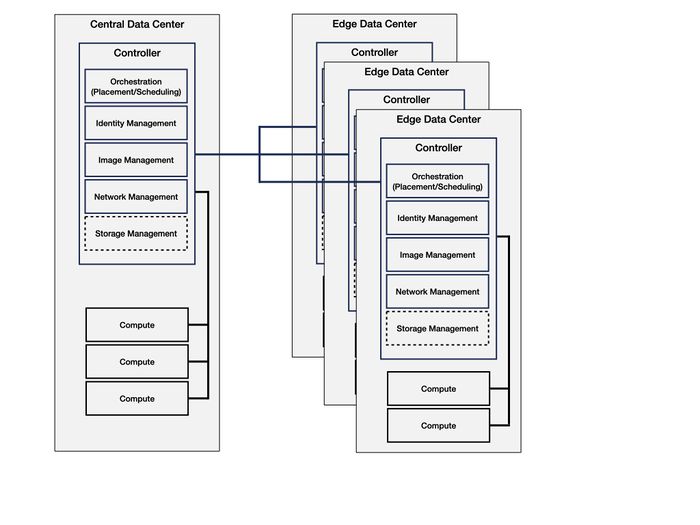

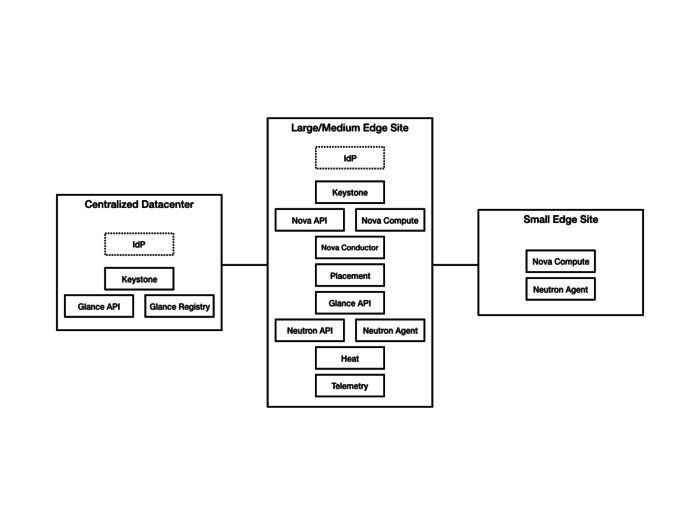

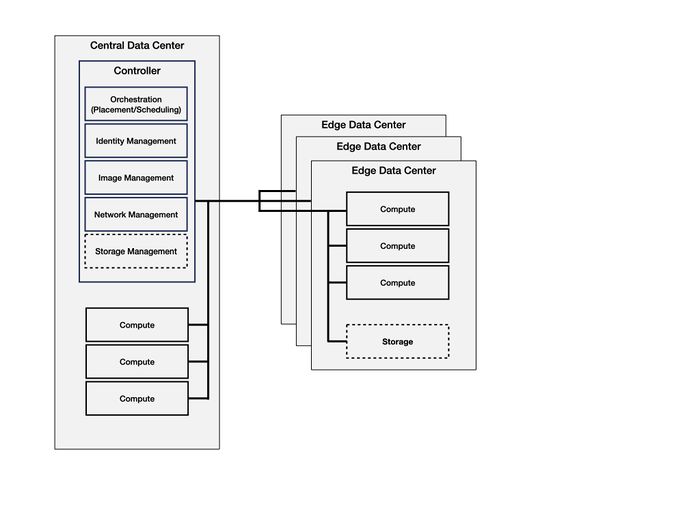

Distributed Control Plane Scenario

This design describes an architecture in which an independent control plane is placed within each edge site. A deployment of this type benefits from greater autonomy in the event of a network partition between the edge site and the main datacenter. This comes at the cost of greater effort in maintaining a large number of independent control planes.

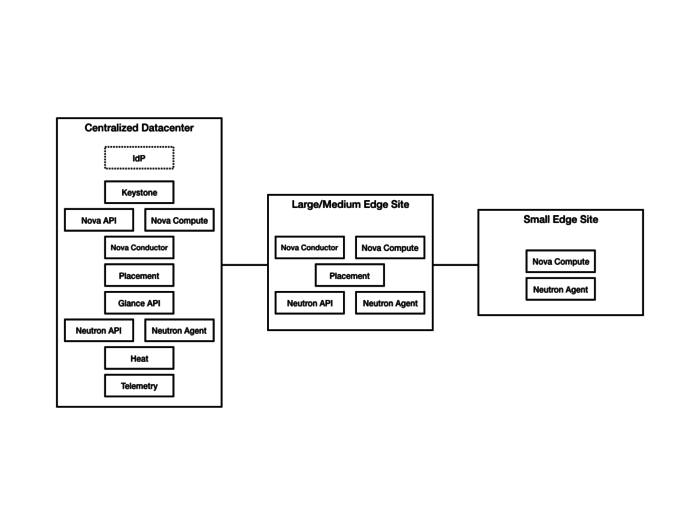

OpenStack Example

While we defined multiple layers of edge sites in a hierarchical structure, not all of them need to be present in order to apply the minimal reference architecture. In a distributed control plane scenario, a deployment without the 'small edge' nodes is also a valid architecture option, as depicted below.

Note: The IdP node at the edge site is optional in general, but it is required if the edge site must be fully autonomous in the event of network isolation, that is, if local users at the edge site must be able to authenticate locally with their normal user ID and credentials in order to manage the edge site and its workloads.

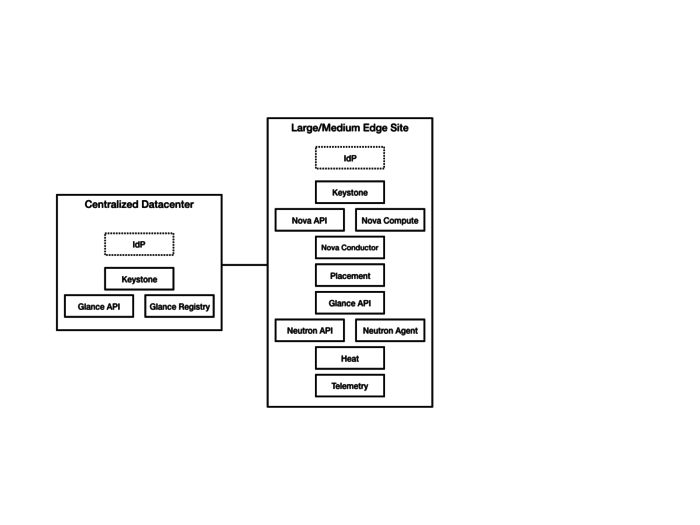

Centralized Control Plane Scenario

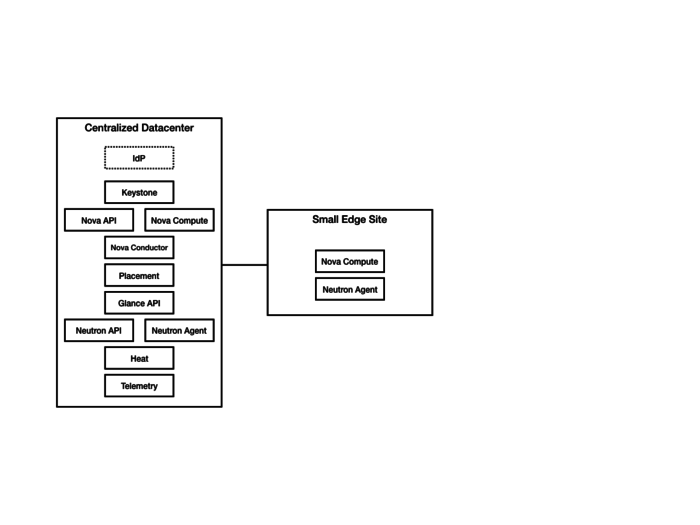

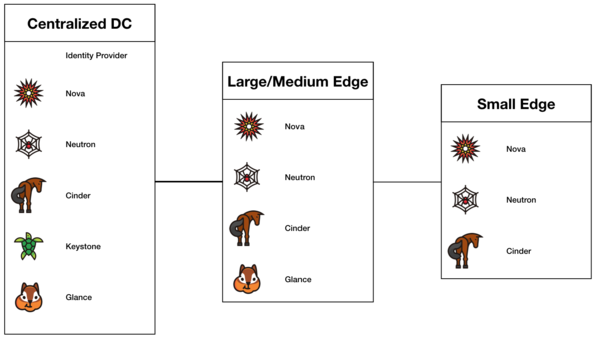

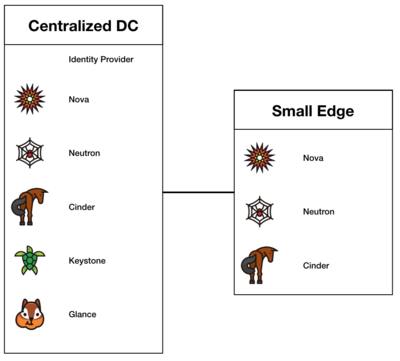

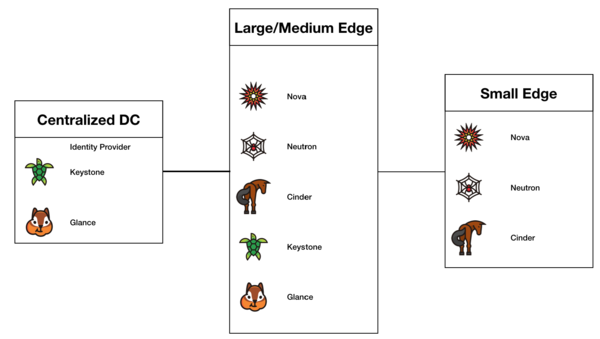

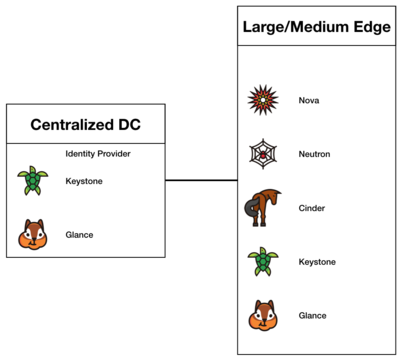

This design describes an architecture in which edge and far edge cloudlets are managed from a control plane in a main datacenter. A deployment of this type enjoys simplified management of compute resources across regions. This comes at the cost of losing the ability to manage instances in edge or far edge cloudlets during a network partition between the regional datacenter and the edge.
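The trade-off between the two scenarios can be summarised in a few lines of Python. This is a sketch under stated assumptions, not a model of any OpenStack service: `ControlPlane`, `can_manage_edge_site`, and `edge_control_planes` are hypothetical names, and the functions encode only what the scenario descriptions and the IdP note say.

```python
from enum import Enum


class ControlPlane(Enum):
    DISTRIBUTED = "distributed"   # independent control plane in each edge site
    CENTRALIZED = "centralized"   # edge sites managed from the main datacenter


def can_manage_edge_site(scenario: ControlPlane, link_up: bool,
                         local_idp: bool = False) -> bool:
    """Can local users manage the edge site with their normal credentials?

    Hypothetical helper that encodes only the trade-off described in the text.
    """
    if link_up:
        # No partition: both scenarios can be managed normally.
        return True
    if scenario is ControlPlane.DISTRIBUTED:
        # The local control plane keeps running, but users can only
        # authenticate locally if an IdP node is present at the edge site.
        return local_idp
    # Centralized: management requests cannot reach the main datacenter.
    return False


def edge_control_planes(scenario: ControlPlane, edge_sites: int) -> int:
    """Simplified maintenance cost: control planes serving the edge sites.

    Assumption: one per edge site when distributed, one in total when
    centralized. Real deployments may differ.
    """
    return edge_sites if scenario is ControlPlane.DISTRIBUTED else 1
```

With the 50+ edge sites assumed in the user stories, the distributed scenario means maintaining at least 50 independent control planes, in exchange for manageability during a partition.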

OpenStack Example

While we defined multiple layers of edge sites in a hierarchical structure, not all of them need to be present in order to apply the minimal reference architecture. In a centralized control plane scenario, a deployment without the 'large/medium edge' nodes is also a valid architecture option, as illustrated below.

In this case the centralized DC has all the required control plane services, so the large/medium edge site does not need to be present, unless the use case demands it, for the expected functionality to be available on the small edge site.
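The tier combinations that the two OpenStack examples explicitly call valid can be written down as a small check. The sketch below is illustrative only: the tier labels, the `OMITTABLE` table, and `is_described_topology` are assumptions introduced here, and a `False` result means the combination is not described on this page, not that it cannot work.

```python
# Tier labels follow the OPNFV terms used in the text; treating exactly these
# three as the full hierarchy is an assumption made for this sketch.
ALL_TIERS = frozenset({"central datacenter", "large/medium edge", "small edge"})

# Tiers the text explicitly allows a deployment to omit, per scenario.
OMITTABLE = {
    "distributed": frozenset({"small edge"}),
    "centralized": frozenset({"large/medium edge"}),
}


def is_described_topology(scenario: str, tiers_present: set) -> bool:
    """True if the text describes this combination of tiers as valid."""
    present = frozenset(tiers_present)
    # Assumption: a central datacenter is always part of the deployment.
    if not present <= ALL_TIERS or "central datacenter" not in present:
        return False
    missing = ALL_TIERS - present
    return missing <= OMITTABLE[scenario]
```

For example, `is_described_topology("centralized", {"central datacenter", "small edge"})` is true, which matches the case where the centralized DC serves the small edge sites directly.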