Blueprint-networking-vpp-taas

Purpose of this BP

The goal of this document is to propose a TaaS (Tap as a Service) driver implementation for networking-vpp. The current OVS driver for Tap as a Service uses OpenFlow rules. With the port mirroring plugin in VPP, we should be able to implement the same feature, using the same API.

Relationship with other blueprints

TaaS has no blueprint, but here are some useful links:

As we leverage the existing TaaS model, we rely mainly on it. The main points are:

- tenant isolation

- selection of the direction of the captured packets (ingress, egress, both)
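The two points above can be illustrated with a minimal Python sketch. The class and field names (`TapService`, `TapFlow`, `Direction`, `validate_flow`) are hypothetical and chosen for illustration only; they are not taken from the TaaS code base, and the tenant check is just one assumed way of enforcing isolation.

```python
from dataclasses import dataclass
from enum import Enum


class Direction(Enum):
    """Direction of the packets to capture (names are illustrative)."""
    INGRESS = "ingress"
    EGRESS = "egress"
    BOTH = "both"


@dataclass(frozen=True)
class TapService:
    id: str
    tenant_id: str
    port_id: str  # port that receives the mirrored traffic


@dataclass(frozen=True)
class TapFlow:
    id: str
    tenant_id: str
    source_port: str  # port whose traffic is mirrored
    tap_service_id: str
    direction: Direction


def validate_flow(flow: TapFlow, service: TapService) -> None:
    """Reject a flow that targets another service or another tenant."""
    if flow.tap_service_id != service.id:
        raise ValueError("tap flow does not reference this tap service")
    if flow.tenant_id != service.tenant_id:
        # Assumed isolation rule: a flow may only feed a service
        # owned by the same tenant.
        raise PermissionError("tap flow and tap service belong to different tenants")
```

A flow created by `tenant-a` against a service owned by `tenant-a` passes silently, while the same flow created by another tenant raises `PermissionError`.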

Use cases

Same as TaaS: debug, probe, IDS, etc.

Possible implementations

Two sides of the implementation should be considered:

- the VPP part, on which the Python API is built, needs to have the port mirroring feature enabled. This is not addressed in this document.

- the Neutron plugin part, which is the subject of this document

Two main possibilities exist to implement this feature:

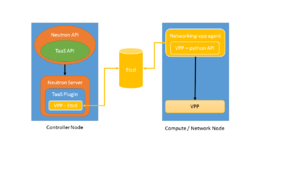

- Implement a TaaS driver side by side with the existing networking-vpp ML2 plugin

- Implement at the TaaS plugin level, leveraging the work done on etcd to store all required information, as well as the integration with the VPP Python API.

In both cases, we should stick to the existing TaaS API to ensure cross-project compatibility.

In the latter case, the TaaS server can update the etcd database used by networking-vpp using a new root path. The existing etcd paths are:

- /networking-vpp/nodes/<node-name>/ports/

- /networking-vpp/state/<node-name>/ports/

- /networking-vpp/state/<node-name>/physnets/

We may add TaaS information per node under '/networking-vpp/nodes/<node-name>/taas/'. Upon notification of a new element under this path, the networking-vpp agent will configure VPP accordingly and report the state under '/networking-vpp/state/<node-name>/taas/'.
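The desired-state / reported-state exchange described above could look roughly like the following sketch. It uses a plain dictionary as a stand-in for etcd so that it runs without any etcd client. The helper names, the element naming under `taas/`, the JSON payload and the `"status"` field are all assumptions made for illustration; only the two path prefixes come from this document.

```python
import json

ROOT = "/networking-vpp"


def taas_config_prefix(node: str) -> str:
    """Subtree where the TaaS server writes the desired state for a node."""
    return f"{ROOT}/nodes/{node}/taas/"


def taas_state_prefix(node: str) -> str:
    """Subtree where the agent reports what it has configured."""
    return f"{ROOT}/state/{node}/taas/"


def agent_sync(store: dict, node: str) -> list:
    """Mirror every desired TaaS element for `node` into the state subtree.

    `store` is an in-memory stand-in for etcd (key -> JSON string).
    Returns the list of state keys that were written.
    """
    cfg = taas_config_prefix(node)
    state = taas_state_prefix(node)
    reported = []
    # Snapshot the matching keys first, since the loop writes to `store`.
    for key in sorted(k for k in store if k.startswith(cfg)):
        element = key[len(cfg):]
        desired = json.loads(store[key])
        # A real agent would program VPP port mirroring at this point.
        store[state + element] = json.dumps(
            {**desired, "status": "ACTIVE"}, sort_keys=True
        )
        reported.append(state + element)
    return reported
```

In a real deployment the agent would react to etcd watch notifications on the configuration prefix rather than scanning the whole key space.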

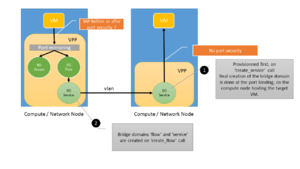

In order to support a meshed TaaS (i.e. multiple flows to multiple services), a possible implementation is the following:

- the vhost-user interface is spanned to a loopback interface; this loop0 belongs to the 'flow' bridge domain

- we copy the packets from the flow bridge domain to the 'service' bridge domain, which includes:

  - the appropriate VLAN sub-interface

  - possibly the destination vhost-user.
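The meshed layout described above can be modelled as a small planning function. This is only a sketch of the bookkeeping, not of the VPP calls: it assumes one 'flow' bridge domain per mirrored source port and one 'service' bridge domain per tap service, and the `flow-bd:` / `service-bd:` labels are hypothetical names, not VPP identifiers.

```python
from collections import defaultdict


def plan_mesh(flows):
    """Compute the mirroring plan for (source_port, service_id) pairs.

    Returns (span, copies):
      span   maps each source port to the flow bridge domain that its
             loopback interface joins
      copies maps each flow bridge domain to the sorted list of service
             bridge domains that receive a copy of its packets
    """
    span = {}
    copies = defaultdict(set)
    for source_port, service_id in flows:
        flow_bd = f"flow-bd:{source_port}"  # assumed: one flow BD per port
        span[source_port] = flow_bd
        copies[flow_bd].add(f"service-bd:{service_id}")
    return span, {bd: sorted(targets) for bd, targets in copies.items()}
```

With this model a port mirrored to two services appears once in `span` and has two entries in `copies`, which is what makes the many-to-many case possible without spanning the same vhost-user interface twice.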