Baremetal/Historical

- Launchpad Entry: NovaSpec:general-bare-metal-provisioning-framework


Summary

This page contains information of historical interest to the GeneralBareMetalProvisioningFramework.

Code Added

- [nova/nova/virt/baremetal/*](https://github.com/NTTdocomo-openstack/nova/tree/master/nova/virt/baremetal)

- [nova/nova/tests/baremetal/*](https://github.com/NTTdocomo-openstack/nova/tree/master/nova/tests/baremetal)

- [nova/bin/bm_deploy_server](https://github.com/NTTdocomo-openstack/nova/blob/master/bin/bm_deploy_server)

- [nova/bin/nova-bm-manage](https://github.com/NTTdocomo-openstack/nova/blob/master/bin/nova-bm-manage)

- [nova/nova/scheduler/baremetal_host_manager.py](https://github.com/NTTdocomo-openstack/nova/blob/master/nova/scheduler/baremetal_host_manager.py)

Code Changed

- [nova/compute/manager.py](https://github.com/NTTdocomo-openstack/nova/blob/master/nova/compute/manager.py)

- [nova/compute/resource_tracker.py](https://github.com/NTTdocomo-openstack/nova/blob/master/nova/compute/resource_tracker.py)

- [nova/manager.py](https://github.com/NTTdocomo-openstack/nova/blob/master/nova/manager.py)

- [nova/scheduler/driver.py](https://github.com/NTTdocomo-openstack/nova/blob/master/nova/scheduler/driver.py)

- [nova/scheduler/filter_scheduler.py](https://github.com/NTTdocomo-openstack/nova/blob/master/nova/scheduler/filter_scheduler.py)

- [nova/scheduler/host_manager.py](https://github.com/NTTdocomo-openstack/nova/blob/master/nova/scheduler/host_manager.py)

- [nova/tests/compute/test_compute.py](https://github.com/NTTdocomo-openstack/nova/blob/master/nova/tests/compute/test_compute.py)

- [nova/virt/driver.py](https://github.com/NTTdocomo-openstack/nova/blob/master/nova/virt/driver.py)

- [nova/tests/test_virt_drivers.py](https://github.com/NTTdocomo-openstack/nova/blob/master/nova/tests/test_virt_drivers.py)

(1) (Merged) Updated scheduler and compute for multiple bare-metal capabilities: https://review.openstack.org/13920

- nova/compute/manager.py

- nova/compute/resource_tracker.py

- nova/manager.py

- nova/scheduler/driver.py

- nova/scheduler/filter_scheduler.py

- nova/scheduler/host_manager.py

- nova/tests/compute/test_compute.py

- nova/tests/test_virt_drivers.py

- nova/virt/driver.py

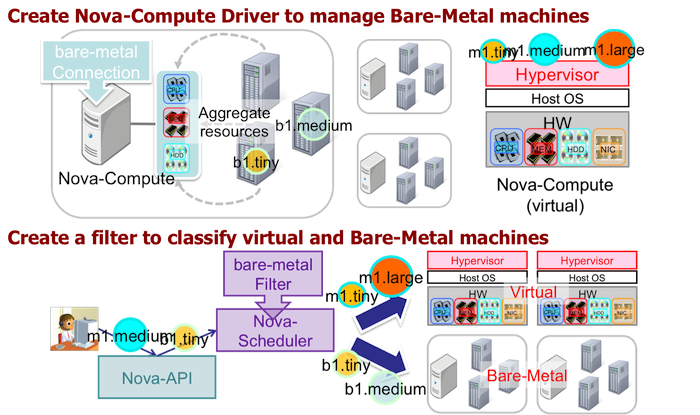

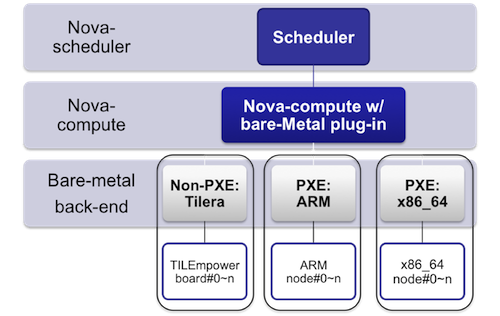

- This patch updates the scheduler and compute code to support multiple bare-metal capabilities. In bare-metal provisioning, a single bare-metal nova-compute manages multiple bare-metal nodes on which instances are provisioned. A compute_nodes entry in the Nova DB must be created for each bare-metal node so that the scheduler can choose an appropriate bare-metal node for an instance. With this patch, one service entry with multiple bare-metal compute_nodes entries is registered when the bare-metal nova-compute starts, and each bare-metal node is given a distinct node name. For this purpose, we extended FilterScheduler to store <nodename> in the Nova DB's instance_system_metadata, and the bare-metal nova-compute uses <host> and <hypervisor_hostname>, rather than <host> alone, to identify the bare-metal node on which to provision the instance. In addition, 'capability' is extended from a dictionary to a list of dictionaries to describe the capabilities of the multiple bare-metal nodes.
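A minimal sketch of the data-shape change described above, in Python (the language Nova is written in). It assumes each bare-metal node's capability is a dictionary carrying `hypervisor_hostname`, `vcpus`, `memory_mb` and `local_gb`; these key names, the node names `bm-node-1`/`bm-node-2`, and the `node` metadata key are illustrative assumptions, not the exact fields used by the patch. The helper returns the (host, node) pair the scheduler would need to record so that nova-compute can find the right node.

```python
from typing import Any, Dict, List, Optional, Tuple

# One capability dict per bare-metal node (previously a host reported a
# single dict). Key names are illustrative assumptions.
Capability = Dict[str, Any]


def candidate_nodes(host: str, capabilities: List[Capability]) -> List[Tuple[str, str]]:
    """Flatten one host's capability list into (host, nodename) candidates."""
    return [(host, cap["hypervisor_hostname"]) for cap in capabilities]


def choose_node(host: str, capabilities: List[Capability], vcpus: int,
                memory_mb: int, root_gb: int) -> Optional[Dict[str, str]]:
    """Return the (host, node) pair to record for the instance, or None.

    Uses first fit for brevity. The returned dict stands in for what the
    scheduler stores in instance_system_metadata; the key names are assumed.
    """
    for cap in capabilities:
        if (cap["vcpus"] >= vcpus and cap["memory_mb"] >= memory_mb
                and cap["local_gb"] >= root_gb):
            return {"host": host, "node": cap["hypervisor_hostname"]}
    return None


# Hypothetical report from a bare-metal nova-compute running on host "bm1".
caps = [
    {"hypervisor_hostname": "bm-node-1", "vcpus": 64, "memory_mb": 16218, "local_gb": 917},
    {"hypervisor_hostname": "bm-node-2", "vcpus": 4, "memory_mb": 6144, "local_gb": 64},
]
print(candidate_nodes("bm1", caps))  # [('bm1', 'bm-node-1'), ('bm1', 'bm-node-2')]
print(choose_node("bm1", caps, vcpus=2, memory_mb=4096, root_gb=10))
# {'host': 'bm1', 'node': 'bm-node-1'}
```

First fit keeps the example short; any placement policy could be substituted without changing the list-of-dicts shape.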

(2) (Merged) Added separate bare-metal MySQL DB: https://review.openstack.org/10726

- nova/virt/baremetal/bmdb/*

- nova/tests/baremetal/bmdb/*

- Previously, bare-metal provisioning used text files to store information about bare-metal machines. This patch uses a MySQL database instead. The DB is designed to support PXE and non-PXE boot methods, heterogeneous hypervisor types, and multiple architectures. Using MySQL makes maintenance and upgrades easier than using text files. The DB for bare-metal nodes is implemented separately from the main Nova DB and can reside on any machine. The location of the DB and its server must be specified as a flag in nova.conf (as is done for Glance). There are two reasons for this approach. First, the information needed for bare-metal nodes differs from that for non-bare-metal nodes; with a separate database, the bare-metal schema can be customized without affecting the main Nova DB. Second, fault tolerance can be built into bare-metal nova-compute. Since one bare-metal nova-compute manages multiple bare-metal nodes, fault tolerance of the bare-metal nova-compute node is very important, and a separate DB allows fault tolerance to be achieved independently of the main Nova DB. Replication of the bare-metal DB and the fault-tolerance implementation are not part of this patch. The implementation follows the design of Nova and its DB as closely as possible. The bare-metal driver must be updated to use this DB.
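The separation can be illustrated with two independent connections, one standing in for the main Nova DB and one for the bare-metal DB. This sketch uses in-memory SQLite instead of MySQL so it runs anywhere, and its table and column names are hypothetical (modeled on the fields printed by the node and interface listings later on this page), not the schema introduced by the patch.

```python
import sqlite3

# Stand-ins: the main Nova DB and the separate bare-metal DB. In a real
# deployment the latter is the MySQL DB named by baremetal_sql_connection.
nova_db = sqlite3.connect(":memory:")
bm_db = sqlite3.connect(":memory:")

# Hypothetical schema modeled on the node/interface listing columns.
bm_db.executescript("""
CREATE TABLE bm_nodes (
    id INTEGER PRIMARY KEY,
    service_host TEXT NOT NULL,
    instance_id TEXT,
    cpus INTEGER NOT NULL,
    memory_mb INTEGER NOT NULL,
    local_gb INTEGER NOT NULL,
    pm_address TEXT,
    pm_user TEXT,
    terminal_port INTEGER,
    prov_mac_address TEXT,
    prov_vlan INTEGER
);
CREATE TABLE bm_interfaces (
    id INTEGER PRIMARY KEY,
    bm_node_id INTEGER NOT NULL REFERENCES bm_nodes(id),
    mac_address TEXT NOT NULL,
    datapath_id TEXT,
    port_no INTEGER
);
""")

bm_db.executemany(
    "INSERT INTO bm_nodes (service_host, cpus, memory_mb, local_gb, pm_address,"
    " pm_user, terminal_port, prov_mac_address) VALUES (?, ?, ?, ?, ?, ?, ?, ?)",
    [("bm1", 64, 16218, 917, "10.0.2.1", "test", 0, "98:4b:e1:67:9a:4c"),
     ("bm1", 4, 6144, 64, "172.27.2.116", "test", 8000, "98:4b:e1:11:22:33")],
)
bm_db.commit()


def free_nodes(conn: sqlite3.Connection, service_host: str) -> list:
    """IDs of nodes managed by service_host that have no instance yet."""
    rows = conn.execute(
        "SELECT id FROM bm_nodes WHERE service_host = ? AND instance_id IS NULL"
        " ORDER BY id", (service_host,))
    return [row[0] for row in rows]


print(free_nodes(bm_db, "bm1"))  # [1, 2]
# The main DB is untouched: it has no bare-metal tables at all.
print(nova_db.execute(
    "SELECT name FROM sqlite_master WHERE name = 'bm_nodes'").fetchone())  # None
```

Because the bare-metal tables live behind their own connection, they can be changed, moved to another machine, or replicated without touching the main Nova schema, which is the motivation given above.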

(6) (Merged) Added bare-metal host manager: https://review.openstack.org/11357

- nova/scheduler/baremetal_host_manager.py

- The bare-metal host manager is used when multiple bare-metal instances are created by one request. It determines whether a nova-compute manages VMs or bare-metal nodes. For VM instance provisioning, it simply calls the original host_manager. For bare-metal instance provisioning, BaremetalNodeState is used to track a bare-metal node's resources when an instance is provisioned to that node.
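A minimal sketch of why a separate node state is needed, assuming that a bare-metal node runs exactly one instance: placing any instance on it should make the whole node look full, whereas a VM host only loses what the instance type asks for. `HostStateSketch`, `BaremetalNodeStateSketch` and `make_state` are hypothetical names; this is not the code of BaremetalNodeState itself.

```python
from dataclasses import dataclass


@dataclass
class HostStateSketch:
    """Simplified resource view of a VM host: instances share the host."""
    free_ram_mb: int
    free_disk_mb: int
    vcpus_total: int
    vcpus_used: int = 0

    def consume_from_instance(self, memory_mb: int, disk_mb: int, vcpus: int) -> None:
        # A VM takes only what its instance type asks for.
        self.free_ram_mb -= memory_mb
        self.free_disk_mb -= disk_mb
        self.vcpus_used += vcpus


class BaremetalNodeStateSketch(HostStateSketch):
    """Simplified resource view of a single bare-metal node.

    Assumes one instance per node, so any placement consumes the whole node.
    """

    def consume_from_instance(self, memory_mb: int, disk_mb: int, vcpus: int) -> None:
        self.free_ram_mb = 0
        self.free_disk_mb = 0
        self.vcpus_used = self.vcpus_total


def make_state(is_baremetal: bool, **resources: int) -> HostStateSketch:
    """Pick the state class the way the host manager picks VM vs bare-metal."""
    cls = BaremetalNodeStateSketch if is_baremetal else HostStateSketch
    return cls(**resources)


vm = make_state(False, free_ram_mb=8192, free_disk_mb=81920, vcpus_total=4)
bm = make_state(True, free_ram_mb=6144, free_disk_mb=65536, vcpus_total=4)
for state in (vm, bm):
    state.consume_from_instance(memory_mb=4096, disk_mb=10240, vcpus=2)
print(vm.free_ram_mb, vm.vcpus_used)  # 4096 2
print(bm.free_ram_mb, bm.vcpus_used)  # 0 4
```

When one request creates several bare-metal instances, this is what keeps the second instance off the node the first one already took.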


(3) A script for bare-metal node management: https://review.openstack.org/#/c/11366/

- bin/nova-baremetal-manage

- doc/source/man/nova-baremetal-manage.rst

- nova/tests/baremetal/test_nova_baremetal_manage.py

- This script allows the system administrator to manage bare-metal nodes. It implements bare-metal node creation/deletion, PXE IP address creation/listing, bare-metal interface creation/deletion/listing, and other routines, and updates the bare-metal DB accordingly.

(4) Updated bare-metal provisioning framework: https://review.openstack.org/11354

- etc/nova/rootwrap.d/baremetal_compute.filters

- nova/tests/baremetal/test_driver.py

- nova/tests/baremetal/test_volume_driver.py

- nova/virt/baremetal/baremetal_states.py

- nova/virt/baremetal/driver.py

- nova/virt/baremetal/fake.py

- nova/virt/baremetal/interfaces.template

- nova/virt/baremetal/utils.py

- nova/virt/baremetal/vif_driver.py

- nova/virt/baremetal/volume_driver.py

- A new bare-metal driver is implemented in this patch. This patch does not include the Tilera or PXE back-ends, which will be provided by subsequent patches. With this driver, nova-compute registers multiple bare-metal node entries. It periodically updates the capabilities of the bare-metal nodes and reports them as a list of capabilities.

(5) Added PXE back-end for bare-metal: https://review.openstack.org/11088

- nova/tests/baremetal/test_ipmi.py

- nova/tests/baremetal/test_pxe.py

- nova/virt/baremetal/ipmi.py

- nova/virt/baremetal/pxe.py

- nova/virt/baremetal/vlan.py

- nova/virt/baremetal/doc/pxe-bm-installation.rst

- nova/virt/baremetal/doc/pxe-bm-instance-creation.rst

- This patch includes only the PXE back-end with IPMI. The documents describe how to install and configure bare-metal nova-compute to support PXE back-end nodes: the packages needed for installation, how to create a bare-metal instance type, how to register bare-metal nodes and NICs, and how to run an instance. They also describe the Nova flags used to configure bare-metal nova-compute.

(7) Add Tilera back-end for baremetal: https://review.openstack.org/#/c/16608/

- etc/nova/rootwrap.d/baremetal_compute.filters

- nova/tests/baremetal/test_tilera.py

- nova/tests/baremetal/test_tilera_pdu.py

- nova/virt/baremetal/tilera.py

- nova/virt/baremetal/tilera_pdu.py

- nova/virt/baremetal/doc/tilera-bm-installation.rst

- nova/virt/baremetal/doc/tilera-bm-instance-creation.rst

- This patchset was split out from (4) at the request of Nova core members.

(8) PXE bare-metal provisioning helper server: https://review.openstack.org/#/c/15830/

- bin/nova-baremetal-deploy-helper

- etc/nova/rootwrap.d/baremetal-deploy-helper.filters

- nova/tests/baremetal/test_nova_baremetal_deploy_helper.py

- doc/source/man/nova-baremetal-deploy-helper.rst

NOTE: This section is out of date and needs to be updated based on code which has landed in Grizzly trunk. -Devananda, 2013-01-18

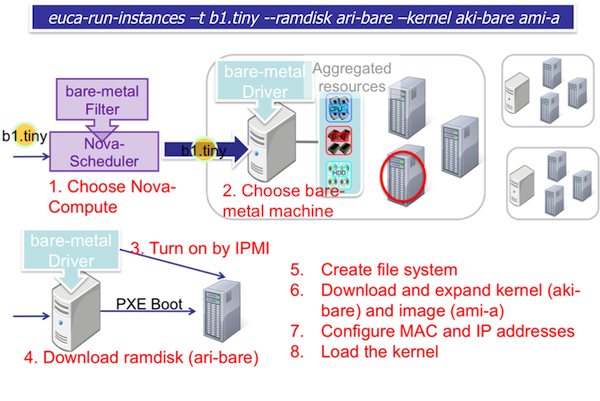

1) A user requests a baremetal instance.

- Non-PXE (Tilera):

euca-run-instances -t tp64.8x8 -k my.key ami-CCC

- PXE

euca-run-instances -t bm.small --kernel aki-AAA --ramdisk ari-BBB ami-CCC

2) nova-scheduler selects a baremetal nova-compute with the following configuration.

- Here we assume the following placeholders:

$IP: IP address of the machine running MySQL for the bare-metal DB (127.0.0.1 in this example; change it if the DB runs on a different machine)

$ID: MySQL user ID

$Password: MySQL password
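A small helper for filling in the `baremetal_sql_connection` template with these placeholders. It percent-encodes the user and password so characters such as `@`, `:` or `/` cannot be mistaken for URL delimiters; whether encoded credentials are decoded correctly depends on the DB layer that reads the flag, so if in doubt choose a password without such characters. The function name and the sample credentials are hypothetical.

```python
from urllib.parse import quote


def baremetal_sql_connection(user: str, password: str, ip: str,
                             db_name: str = "nova_bm") -> str:
    """Fill in the mysql://$ID:$Password@$IP/nova_bm template.

    user and password are percent-encoded so that characters such as '@',
    ':' or '/' cannot be mistaken for URL delimiters.
    """
    return "mysql://%s:%s@%s/%s" % (quote(user, safe=""), quote(password, safe=""),
                                    ip, db_name)


print(baremetal_sql_connection("nova", "s3cr@t/pw", "127.0.0.1"))
# mysql://nova:s3cr%40t%2Fpw@127.0.0.1/nova_bm
```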

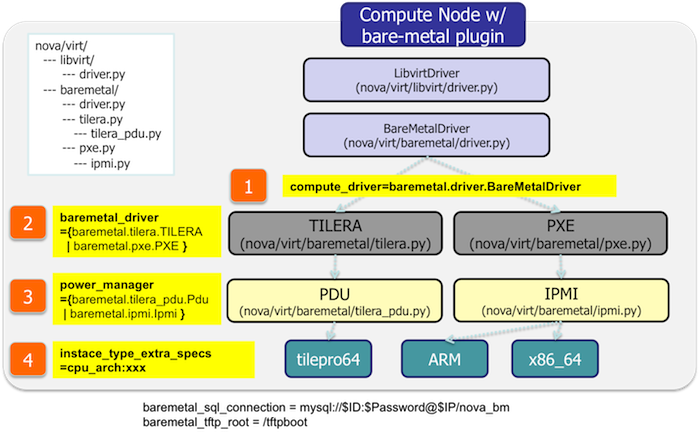

- Non-PXE (Tilera) [nova.conf]:

baremetal_sql_connection=mysql://$ID:$Password@$IP/nova_bm

compute_driver=nova.virt.baremetal.driver.BareMetalDriver

baremetal_driver=nova.virt.baremetal.tilera.TILERA

power_manager=nova.virt.baremetal.tilera_pdu.Pdu

instance_type_extra_specs=cpu_arch:tilepro64

baremetal_tftp_root = /tftpboot

scheduler_host_manager=nova.scheduler.baremetal_host_manager.BaremetalHostManager

- PXE [nova.conf]:

baremetal_sql_connection=mysql://$ID:$Password@$IP/nova_bm

compute_driver=nova.virt.baremetal.driver.BareMetalDriver

baremetal_driver=nova.virt.baremetal.pxe.PXE

power_manager=nova.virt.baremetal.ipmi.Ipmi

instance_type_extra_specs=cpu_arch:x86_64

baremetal_tftp_root = /tftpboot

scheduler_host_manager=nova.scheduler.baremetal_host_manager.BaremetalHostManager

baremetal_deploy_kernel = xxxxxxxxxx

baremetal_deploy_ramdisk = yyyyyyyy
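The two nova.conf examples differ mainly in the driver, the power manager, the CPU architecture, and the two deploy-image flags that only the PXE example sets. A minimal sketch that checks a nova.conf fragment for those flags with Python's `configparser`; the checklist is derived from the examples above and is an assumption, not an official list of required options (`xxxxxxxxxx`/`yyyyyyyy` remain placeholders).

```python
import configparser

# PXE example from above, wrapped in the [DEFAULT] section nova.conf uses.
PXE_CONF = """
[DEFAULT]
baremetal_sql_connection=mysql://$ID:$Password@$IP/nova_bm
compute_driver=nova.virt.baremetal.driver.BareMetalDriver
baremetal_driver=nova.virt.baremetal.pxe.PXE
power_manager=nova.virt.baremetal.ipmi.Ipmi
instance_type_extra_specs=cpu_arch:x86_64
baremetal_tftp_root = /tftpboot
scheduler_host_manager=nova.scheduler.baremetal_host_manager.BaremetalHostManager
baremetal_deploy_kernel = xxxxxxxxxx
baremetal_deploy_ramdisk = yyyyyyyy
"""

# Assumed checklist: flags set in both examples, plus the PXE-only ones.
COMMON_FLAGS = {"baremetal_sql_connection", "compute_driver", "baremetal_driver",
                "power_manager", "instance_type_extra_specs",
                "baremetal_tftp_root", "scheduler_host_manager"}
PXE_ONLY_FLAGS = {"baremetal_deploy_kernel", "baremetal_deploy_ramdisk"}


def missing_flags(conf_text: str, pxe: bool) -> set:
    """Return the checklist flags that the [DEFAULT] section does not set."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(conf_text)
    present = set(parser.defaults())
    required = COMMON_FLAGS | (PXE_ONLY_FLAGS if pxe else set())
    return required - present


print(missing_flags(PXE_CONF, pxe=True))  # set()
```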

3) The bare-metal nova-compute selects a bare-metal node from its pool based on the node's hardware resources and the instance type (number of CPUs, memory, disk).
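The text does not say which selection policy is used. The sketch below swaps in a simple best-fit policy (among nodes that satisfy the instance type, take the smallest) so that large nodes stay free for large requests. The node and instance-type values come from the examples later on this page; the class and function names are hypothetical, and swap is ignored for simplicity.

```python
from typing import List, NamedTuple, Optional


class BareMetalNode(NamedTuple):
    node_id: int
    cpus: int
    memory_mb: int
    local_gb: int


class InstanceType(NamedTuple):
    vcpus: int
    memory_mb: int
    root_gb: int
    ephemeral_gb: int


def fits(node: BareMetalNode, itype: InstanceType) -> bool:
    # Swap is ignored here for simplicity.
    return (node.cpus >= itype.vcpus
            and node.memory_mb >= itype.memory_mb
            and node.local_gb >= itype.root_gb + itype.ephemeral_gb)


def select_node(pool: List[BareMetalNode], itype: InstanceType) -> Optional[BareMetalNode]:
    """Best fit: among nodes that fit, take the smallest one, ordered by
    CPUs, then memory, then disk."""
    candidates = [node for node in pool if fits(node, itype)]
    if not candidates:
        return None
    return min(candidates, key=lambda n: (n.cpus, n.memory_mb, n.local_gb))


# Pool and instance types taken from the examples on this page.
pool = [BareMetalNode(1, 64, 16218, 917), BareMetalNode(2, 4, 6144, 64)]
bm_small = InstanceType(vcpus=2, memory_mb=4096, root_gb=10, ephemeral_gb=20)
tp64_8x8 = InstanceType(vcpus=64, memory_mb=16218, root_gb=917, ephemeral_gb=0)
print(select_node(pool, bm_small).node_id)  # 2
print(select_node(pool, tp64_8x8).node_id)  # 1
```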

4) Deployment images and configuration are prepared.

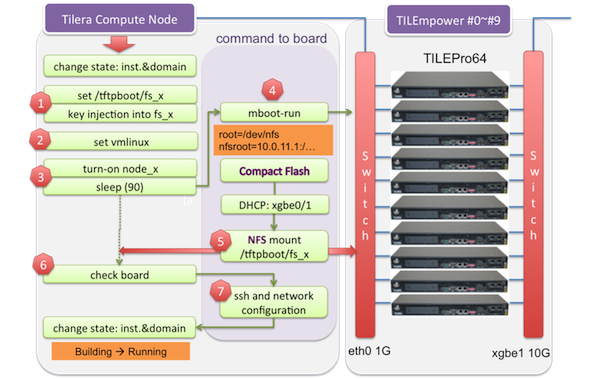

- Non-PXE (Tilera):

- The file system with the injected key is prepared, and then an NFS directory is configured for the bare-metal node. The kernel is already stored on the CF (CompactFlash) card of each Tilera board, and no ramdisk is used for Tilera bare-metal nodes. For NFS mounting, /tftpboot/fs_x (x=node_id) must be set up before launching instances.

- PXE:

- The deployment kernel and ramdisk, as well as the user-specified kernel and ramdisk, are placed on the TFTP server, and PXE is configured for the bare-metal host.
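PXELINUX (from the syslinux package installed below) looks for a per-host file named `pxelinux.cfg/01-<MAC>` before falling back to generic names, which is one way to give a single bare-metal host its own boot configuration. A minimal sketch of computing that path and writing a bare-bones config that boots a deployment kernel and ramdisk; the `deploy/kernel` and `deploy/ramdisk` paths are hypothetical, and a real configuration would also pass kernel arguments on the `append` line.

```python
import posixpath
import re

_MAC_RE = re.compile(r"^([0-9a-f]{2}:){5}[0-9a-f]{2}$")


def pxe_config_path(tftp_root: str, mac: str) -> str:
    """Per-host PXELINUX config file for the NIC with the given MAC.

    PXELINUX first looks for pxelinux.cfg/01-<mac>, where "01" is the ARP
    hardware type for Ethernet and the MAC is lower-case with dashes.
    """
    mac = mac.lower()
    if not _MAC_RE.match(mac):
        raise ValueError("not a MAC address: %r" % mac)
    return posixpath.join(tftp_root, "pxelinux.cfg", "01-" + mac.replace(":", "-"))


def deploy_pxe_config(kernel_path: str, ramdisk_path: str) -> str:
    """Minimal PXELINUX config that boots a deployment kernel and ramdisk.

    Paths are relative to the TFTP root; extra kernel arguments are omitted.
    """
    return ("default deploy\n"
            "label deploy\n"
            "  kernel {}\n"
            "  append initrd={}\n").format(kernel_path, ramdisk_path)


# MAC of the PXE NIC from the node registration example on this page.
print(pxe_config_path("/tftpboot", "98:4b:e1:11:22:33"))
# /tftpboot/pxelinux.cfg/01-98-4b-e1-11-22-33
print(deploy_pxe_config("deploy/kernel", "deploy/ramdisk"))
```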

5) The baremetal nova-compute powers on the baremetal node through:

- Non-PXE (Tilera): PDU(Power Distribution Unit)

- PXE: IPMI
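For the PXE back-end, powering a node on amounts to sending an IPMI chassis power command to the node's BMC, using the address and credentials registered with `--pm_address`, `--pm_user` and `--pm_password`. A minimal sketch that only builds the `ipmitool` argument list; it assumes the BMC accepts IPMI v2.0 over LAN (`-I lanplus`), and the function name is hypothetical. The Tilera PDU path is not covered.

```python
from typing import List

_ACTIONS = ("on", "off", "cycle", "status")


def ipmitool_power_cmd(address: str, user: str, password: str, action: str) -> List[str]:
    """Build an ipmitool argument list for a chassis power operation.

    Assumes the BMC accepts IPMI v2.0 over LAN ("-I lanplus"). Passing the
    password with -P exposes it in the process list; ipmitool's -f option,
    which reads the password from a file, avoids that.
    """
    if action not in _ACTIONS:
        raise ValueError("unsupported power action: %s" % action)
    return ["ipmitool", "-I", "lanplus", "-H", address, "-U", user,
            "-P", password, "chassis", "power", action]


# --pm_address/--pm_user/--pm_password values from the PXE/IPMI node example.
cmd = ipmitool_power_cmd("172.27.2.116", "test", "password", "on")
print(" ".join(cmd))
# To actually run it: subprocess.run(cmd, check=True)
```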

6) The image is deployed to the bare-metal node.

- Non-PXE (Tilera): nova-compute mounts the AMI into the NFS directory corresponding to the ID of the selected Tilera bare-metal node.

- PXE: The host boots the deployment kernel and ramdisk, and the baremetal nova-compute writes the AMI to the host's local disk via iSCSI.

7) The bare-metal node is booted.

- Non-PXE (Tilera):

- PXE:

Packages A: Non-PXE (Tilera)

- This procedure is for RHEL. Reading 'tilera-bm-instance-creation.txt' may make this document easier to understand.

- TFTP, NFS, EXPECT, and Telnet installation:

$ yum install nfs-utils.x86_64 expect.x86_64 tftp-server.x86_64 telnet

- TFTP configuration:

$ cat /etc/xinetd.d/tftp

# default: off

# description: The tftp server serves files using the trivial file transfer \

# protocol. The tftp protocol is often used to boot diskless \

# workstations, download configuration files to network-aware printers, \

# and to start the installation process for some operating systems.

service tftp

{

socket_type = dgram

protocol = udp

wait = yes

user = root

server = /usr/sbin/in.tftpd

server_args = -s /tftpboot

disable = no

per_source = 11

cps = 100 2

flags = IPv4

}

$ /etc/init.d/xinetd restart

- NFS configuration:

$ mkdir /tftpboot

$ mkdir /tftpboot/fs_x (x: the id of tilera board)

$ cat /etc/exports

/tftpboot/fs_0 tilera0-eth0(sync,rw,no_root_squash,no_all_squash,no_subtree_check)

/tftpboot/fs_1 tilera1-eth0(sync,rw,no_root_squash,no_all_squash,no_subtree_check)

/tftpboot/fs_2 tilera2-eth0(sync,rw,no_root_squash,no_all_squash,no_subtree_check)

/tftpboot/fs_3 tilera3-eth0(sync,rw,no_root_squash,no_all_squash,no_subtree_check)

/tftpboot/fs_4 tilera4-eth0(sync,rw,no_root_squash,no_all_squash,no_subtree_check)

/tftpboot/fs_5 tilera5-eth0(sync,rw,no_root_squash,no_all_squash,no_subtree_check)

/tftpboot/fs_6 tilera6-eth0(sync,rw,no_root_squash,no_all_squash,no_subtree_check)

/tftpboot/fs_7 tilera7-eth0(sync,rw,no_root_squash,no_all_squash,no_subtree_check)

/tftpboot/fs_8 tilera8-eth0(sync,rw,no_root_squash,no_all_squash,no_subtree_check)

/tftpboot/fs_9 tilera9-eth0(sync,rw,no_root_squash,no_all_squash,no_subtree_check)

$ sudo /etc/init.d/nfs restart

$ sudo /usr/sbin/exportfs
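The exports file above follows a fixed pattern: board x exports /tftpboot/fs_x to host tilerax-eth0 with identical options. A small generator for that pattern, assuming boards are numbered from 0 and that the tileraN-eth0 hostnames resolve as in the example; the function names are hypothetical.

```python
from typing import List

# Export options copied from the /etc/exports example above.
NFS_OPTIONS = "sync,rw,no_root_squash,no_all_squash,no_subtree_check"


def tilera_exports(num_boards: int, tftp_root: str = "/tftpboot") -> List[str]:
    """One /etc/exports line per Tilera board: <root>/fs_<id> for tilera<id>-eth0."""
    return ["%s/fs_%d tilera%d-eth0(%s)" % (tftp_root, i, i, NFS_OPTIONS)
            for i in range(num_boards)]


def tilera_fs_dirs(num_boards: int, tftp_root: str = "/tftpboot") -> List[str]:
    """The /tftpboot/fs_x directories that must exist before launching instances."""
    return ["%s/fs_%d" % (tftp_root, i) for i in range(num_boards)]


print("\n".join(tilera_exports(10)))
```

Generating both lists from one board count keeps the directories and the exports in step when boards are added.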

- TileraMDE install: TileraMDE-3.0.1.125620:

$ cd /usr/local/

$ tar -xvf tileramde-3.0.1.125620_tilepro.tar

$ tar -xjvf tileramde-3.0.1.125620_tilepro_apps.tar.bz2

$ tar -xjvf tileramde-3.0.1.125620_tilepro_src.tar.bz2

$ mkdir /usr/local/TileraMDE-3.0.1.125620/tilepro/tile

$ cd /usr/local/TileraMDE-3.0.1.125620/tilepro/tile/

$ tar -xjvf tileramde-3.0.1.125620_tilepro_tile.tar.bz2

$ ln -s /usr/local/TileraMDE-3.0.1.125620/tilepro/ /usr/local/TileraMDE

- Install the 32-bit libraries required to run TileraMDE:

$ yum install glibc.i686 glibc-devel.i686

Packages B: PXE

- This procedure is for Ubuntu 12.04 x86_64. Reading 'baremetal-instance-creation.txt' may make this document easier to understand.

- dnsmasq (PXE server for baremetal hosts)

- syslinux (bootloader for PXE)

- ipmitool (operate IPMI)

- qemu-kvm (only for qemu-img)

- open-iscsi (connect to iSCSI targets on bare-metal hosts)

- busybox (used in deployment ramdisk)

- tgt (used in deployment ramdisk)

- Example:

$ sudo apt-get install dnsmasq syslinux ipmitool qemu-kvm open-iscsi

$ sudo apt-get install busybox tgt

- Ramdisk for Deployment

- To create a deployment ramdisk, use 'baremetal-mkinitrd.sh' in [baremetal-initrd-builder](https://github.com/NTTdocomo-openstack/baremetal-initrd-builder):

$ cd baremetal-initrd-builder

$ ./baremetal-mkinitrd.sh <ramdisk output path> <kernel version>

$ ./baremetal-mkinitrd.sh /tmp/deploy-ramdisk.img 3.2.0-26-generic

working in /tmp/baremetal-mkinitrd.9AciX98N

368017 blocks

Register the kernel and the ramdisk with Glance.

$ glance add name="baremetal deployment ramdisk" is_public=true container_format=ari disk_format=ari < /tmp/deploy-ramdisk.img

Uploading image 'baremetal deployment ramdisk'

===========================================[100%] 114.951697M/s, ETA 0h 0m 0s

Added new image with ID: e99775cb-f78d-401e-9d14-acd86e2f36e3

$ glance add name="baremetal deployment kernel" is_public=true container_format=aki disk_format=aki < /boot/vmlinuz-3.2.0-26-generic

Uploading image 'baremetal deployment kernel'

===========================================[100%] 46.9M/s, ETA 0h 0m 0s

Added new image with ID: d76012fc-4055-485c-a978-f748679b89a9
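The IDs printed by Glance are presumably what the `baremetal_deploy_kernel` and `baremetal_deploy_ramdisk` flags in the PXE nova.conf example expect; that example shows them only as placeholders, so this mapping is an assumption. A minimal sketch that pulls the ID out of the `glance add` output shown above and prints the corresponding nova.conf lines.

```python
import re
from typing import Optional

# Matches the last line printed by 'glance add' in the transcripts above.
_IMAGE_ID_RE = re.compile(r"Added new image with ID: ([0-9a-f-]{36})")


def image_id_from_glance_output(output: str) -> Optional[str]:
    """Return the image ID reported by 'glance add', or None if absent."""
    match = _IMAGE_ID_RE.search(output)
    return match.group(1) if match else None


kernel_out = "Added new image with ID: d76012fc-4055-485c-a978-f748679b89a9"
ramdisk_out = "Added new image with ID: e99775cb-f78d-401e-9d14-acd86e2f36e3"
print("baremetal_deploy_kernel = %s" % image_id_from_glance_output(kernel_out))
print("baremetal_deploy_ramdisk = %s" % image_id_from_glance_output(ramdisk_out))
```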

- ShellInABox

- Baremetal nova-compute uses [ShellInABox](http://code.google.com/p/shellinabox/) so that users can access a baremetal host's console through a web browser.

- Build from source and install:

$ sudo apt-get install gcc make

$ tar xzf shellinabox-2.14.tar.gz

$ cd shellinabox-2.14

$ ./configure

$ sudo make install

- PXE Boot Server

- Prepare TFTP root directory:

$ sudo mkdir /tftpboot

$ sudo cp /usr/lib/syslinux/pxelinux.0 /tftpboot/

$ sudo mkdir /tftpboot/pxelinux.cfg

- Start dnsmasq. Example: start dnsmasq on eth1 with PXE and TFTP enabled:

$ sudo dnsmasq --conf-file= --port=0 --enable-tftp --tftp-root=/tftpboot --dhcp-boot=pxelinux.0 --bind-interfaces --pid-file=/dnsmasq.pid --interface=eth1 --dhcp-range=192.168.175.100,192.168.175.254

(You may need to stop and disable the dnsmasq service that the package starts by default:)

$ sudo /etc/init.d/dnsmasq stop

$ sudo update-rc.d dnsmasq disable
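The `--dhcp-range` in the dnsmasq example is inclusive on both ends. Since every PXE-booting bare-metal host needs a lease while it deploys, the range should presumably hold at least as many addresses as hosts that may boot on eth1 at the same time. A quick sketch of the arithmetic with Python's `ipaddress` module; the function name is hypothetical.

```python
import ipaddress


def dhcp_pool_size(start: str, end: str) -> int:
    """Number of addresses in an inclusive dnsmasq --dhcp-range start,end."""
    first = ipaddress.IPv4Address(start)
    last = ipaddress.IPv4Address(end)
    if last < first:
        raise ValueError("range end precedes range start")
    return int(last) - int(first) + 1


# The example above: --dhcp-range=192.168.175.100,192.168.175.254
print(dhcp_pool_size("192.168.175.100", "192.168.175.254"))  # 155
```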

- How to create an image:

- Example: create a partition image from ubuntu cloud images' Precise tarball:

$ wget http://cloud-images.ubuntu.com/precise/current/precise-server-cloudimg-amd64-root.tar.gz

$ dd if=/dev/zero of=precise.img bs=1M count=0 seek=1024

$ mkfs -F -t ext4 precise.img

$ sudo mount -o loop precise.img /mnt/

$ sudo tar -C /mnt -xzf ~/precise-server-cloudimg-amd64-root.tar.gz

$ sudo mv /mnt/etc/resolv.conf /mnt/etc/resolv.conf_orig

$ sudo cp /etc/resolv.conf /mnt/etc/resolv.conf

$ sudo chroot /mnt apt-get install linux-image-3.2.0-26-generic vlan open-iscsi

$ sudo mv /mnt/etc/resolv.conf_orig /mnt/etc/resolv.conf

$ sudo umount /mnt

Nova Directories

$ sudo mkdir /var/lib/nova/baremetal

$ sudo mkdir /var/lib/nova/baremetal/console

$ sudo mkdir /var/lib/nova/baremetal/dnsmasq

Baremetal Database

- Create the baremetal database and grant all privileges on it to the user specified by the 'baremetal_sql_connection' flag. Example:

$ mysql -p

mysql> create database nova_bm;

mysql> grant all privileges on nova_bm.* to '$ID'@'%' identified by '$Password';

mysql> exit

- Create tables:

$ bm_db_sync

Create Baremetal Instance Type

- First, create an instance type in the normal way.

$ nova-manage instance_type create --name=tp64.8x8 --cpu=64 --memory=16218 --root_gb=917 --ephemeral_gb=0 --flavor=6 --swap=1024 --rxtx_factor=1

$ nova-manage instance_type create --name=bm.small --cpu=2 --memory=4096 --root_gb=10 --ephemeral_gb=20 --flavor=7 --swap=1024 --rxtx_factor=1

(For --flavor, see the 'How to choose the value for flavor' section below.)

- Next, set the baremetal extra_spec on each instance type:

$ nova-manage instance_type set_key --name=tp64.8x8 --key cpu_arch --value 'tilepro64'

$ nova-manage instance_type set_key --name=bm.small --key cpu_arch --value 'x86_64'
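The `cpu_arch` key set here is meant to line up with the `instance_type_extra_specs=cpu_arch:...` flag each bare-metal nova-compute sets in nova.conf, so that tp64.8x8 lands on Tilera hosts and bm.small on x86_64 hosts. A minimal sketch of that matching with exact string comparison; this is a simplification, not Nova's capability filter, and the comma-separated multi-pair flag format is an assumption (only single pairs appear on this page).

```python
from typing import Dict


def parse_extra_specs_flag(flag_value: str) -> Dict[str, str]:
    """Parse 'key:value[,key:value...]' into a dict.

    Only the single 'cpu_arch:...' form appears on this page; the
    comma-separated multi-pair form is an assumption.
    """
    specs: Dict[str, str] = {}
    for item in flag_value.split(","):
        key, sep, value = item.partition(":")
        if not sep:
            raise ValueError("expected key:value, got %r" % item)
        specs[key.strip()] = value.strip()
    return specs


def extra_specs_match(extra_specs: Dict[str, str], capabilities: Dict[str, str]) -> bool:
    """True if every key/value set on the instance type equals the host's value."""
    return all(capabilities.get(key) == value for key, value in extra_specs.items())


# instance_type_extra_specs from the PXE nova.conf example.
pxe_host = parse_extra_specs_flag("cpu_arch:x86_64")
print(extra_specs_match({"cpu_arch": "x86_64"}, pxe_host))     # True  (bm.small)
print(extra_specs_match({"cpu_arch": "tilepro64"}, pxe_host))  # False (tp64.8x8)
```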

How to choose the value for flavor

- Run nova-manage instance_type list and find the maximum FlavorID in the output. Use the maximum FlavorID + 1 for the new instance_type.

$ nova-manage instance_type list

m1.medium: Memory: 4096MB, VCPUS: 2, Root: 40GB, Ephemeral: 0Gb, FlavorID: 3, Swap: 0MB, RXTX Factor: 1.0, ExtraSpecs {}

m1.small: Memory: 2048MB, VCPUS: 1, Root: 20GB, Ephemeral: 0Gb, FlavorID: 2, Swap: 0MB, RXTX Factor: 1.0, ExtraSpecs {}

m1.large: Memory: 8192MB, VCPUS: 4, Root: 80GB, Ephemeral: 0Gb, FlavorID: 4, Swap: 0MB, RXTX Factor: 1.0, ExtraSpecs {}

m1.tiny: Memory: 512MB, VCPUS: 1, Root: 0GB, Ephemeral: 0Gb, FlavorID: 1, Swap: 0MB, RXTX Factor: 1.0, ExtraSpecs {}

m1.xlarge: Memory: 16384MB, VCPUS: 8, Root: 160GB, Ephemeral: 0Gb, FlavorID: 5, Swap: 0MB, RXTX Factor: 1.0, ExtraSpecs {}

- In the example above, the maximum Flavor ID is 5, so use 6 and 7.
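The "maximum FlavorID + 1" rule is easy to script. A minimal sketch that scans `nova-manage instance_type list` output for `FlavorID: <n>` and returns the next free ID; the sample lines are abbreviated from the listing above to the fields needed here, and the function name is hypothetical.

```python
import re
from typing import Iterable

_FLAVOR_ID_RE = re.compile(r"FlavorID: (\d+)")


def next_flavor_id(list_output: Iterable[str]) -> int:
    """Return max(FlavorID) + 1 from 'nova-manage instance_type list' output."""
    ids = []
    for line in list_output:
        match = _FLAVOR_ID_RE.search(line)
        if match:
            ids.append(int(match.group(1)))
    return max(ids, default=0) + 1


# Abbreviated from the listing above (only the fields needed here).
SAMPLE = """\
m1.medium: Memory: 4096MB, VCPUS: 2, FlavorID: 3
m1.small: Memory: 2048MB, VCPUS: 1, FlavorID: 2
m1.large: Memory: 8192MB, VCPUS: 4, FlavorID: 4
m1.tiny: Memory: 512MB, VCPUS: 1, FlavorID: 1
m1.xlarge: Memory: 16384MB, VCPUS: 8, FlavorID: 5
"""
first = next_flavor_id(SAMPLE.splitlines())
print(first, first + 1)  # 6 7
```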

Start Processes

(Currently, you might have trouble if you run the processes as a user other than the superuser.)

$ sudo bm_deploy_server &

$ sudo nova-scheduler &

$ sudo nova-compute &

Register Baremetal Node and NIC

- First, register a baremetal node.

- non-PXE (Tilera): Next, register the baremetal node's NICs.

- PXE: First, register a baremetal node. In this step, one of the NICs must be specified as the PXE NIC. Ensure that the NIC is PXE-enabled and is selected as the primary boot device in the BIOS. Next, register all the NICs except the PXE NIC specified in the first step.

- To register a baremetal node, use 'nova-bm-manage node create'. It takes the parameters listed below.

--host: baremetal nova-compute's hostname

--cpus: number of CPU cores

--memory_mb: memory size in megabytes

--local_gb: local disk size in gigabytes

--pm_address: Tilera node's IP address / IPMI address

--pm_user: IPMI username

--pm_password: IPMI password

--prov_mac_address: Tilera node's MAC address / PXE NIC's MAC address

--terminal_port: TCP port for ShellInABox. Each node must use a unique TCP port. If you do not need console access, use 0.

Tilera example:

$ nova-bm-manage node create --host=bm1 --cpus=64 --memory_mb=16218 --local_gb=917 --pm_address=10.0.2.1 --pm_user=test --pm_password=password --prov_mac_address=98:4b:e1:67:9a:4c --terminal_port=0

PXE/IPMI example:

$ nova-bm-manage node create --host=bm1 --cpus=4 --memory_mb=6144 --local_gb=64 --pm_address=172.27.2.116 --pm_user=test --pm_password=password --prov_mac_address=98:4b:e1:11:22:33 --terminal_port=8000

- To verify the node registration, run 'nova-bm-manage node list':

$ nova-bm-manage node list

| ID | SERVICE_HOST | INSTANCE_ID | CPUS | Memory | Disk | PM_Address | PM_User | TERMINAL_PORT | PROV_MAC | PROV_VLAN |
|---|---|---|---|---|---|---|---|---|---|---|
| 1 | bm1 | None | 64 | 16218 | 917 | 10.0.2.1 | test | 0 | 98:4b:e1:67:9a:4c | None |
| 2 | bm1 | None | 4 | 6144 | 64 | 172.27.2.116 | test | 8000 | 98:4b:e1:11:22:33 | None |
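The node-create parameters map naturally onto a command-line parser. The sketch below is a hypothetical `argparse` parser mirroring the flags listed above, plus a check for the rule that each node needs a unique ShellInABox port (0 meaning no console); it is not nova-bm-manage's own implementation, and which flags are mandatory here is an assumption.

```python
import argparse
from typing import List


def build_node_create_parser() -> argparse.ArgumentParser:
    """Hypothetical parser mirroring the 'node create' parameters listed above.

    This is not nova-bm-manage's own implementation; it only shows which
    values a registration needs and their types.
    """
    parser = argparse.ArgumentParser(prog="node-create-sketch")
    parser.add_argument("--host", required=True)
    parser.add_argument("--cpus", type=int, required=True)
    parser.add_argument("--memory_mb", type=int, required=True)
    parser.add_argument("--local_gb", type=int, required=True)
    parser.add_argument("--pm_address", required=True)
    parser.add_argument("--pm_user", default="")
    parser.add_argument("--pm_password", default="")
    parser.add_argument("--prov_mac_address", required=True)
    parser.add_argument("--terminal_port", type=int, default=0)
    return parser


def check_terminal_ports(ports: List[int]) -> None:
    """Each node needs a unique ShellInABox port; 0 (no console) may repeat."""
    used = [port for port in ports if port != 0]
    if len(used) != len(set(used)):
        raise ValueError("duplicate terminal_port values in %r" % ports)


# The PXE/IPMI example from above.
args = build_node_create_parser().parse_args(
    "--host=bm1 --cpus=4 --memory_mb=6144 --local_gb=64"
    " --pm_address=172.27.2.116 --pm_user=test --pm_password=password"
    " --prov_mac_address=98:4b:e1:11:22:33 --terminal_port=8000".split())
print(args.cpus, args.memory_mb, args.terminal_port)  # 4 6144 8000
# Ports of both registered nodes (Tilera uses 0, PXE uses 8000).
check_terminal_ports([0, args.terminal_port])
```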

- To register a NIC, use 'nova-bm-manage interface create'. It takes the parameters listed below.

--node_id: ID of the baremetal node that owns this NIC (the first column of the 'nova-bm-manage node list' output)

--mac_address: this NIC's MAC address in the form xx:xx:xx:xx:xx:xx

--datapath_id: datapath ID of the OpenFlow switch this NIC is connected to

--port_no: OpenFlow port number this NIC is connected to

(--datapath_id and --port_no are used for network isolation. If you do not have an OpenFlow switch, set both to 0.)

Example: node 1, without OpenFlow:

$ nova-bm-manage interface create --node_id=1 --mac_address=98:4b:e1:67:9a:4e --datapath_id=0 --port_no=0

Example: node 2, with OpenFlow:

$ nova-bm-manage interface create --node_id=2 --mac_address=98:4b:e1:11:22:34 --datapath_id=0x123abc --port_no=24

- To verify the NIC registration, run 'bm_interface_list':

$ bm_interface_list

| ID | BM_NODE_ID | MAC_ADDRESS | DATAPATH_ID | PORT_NO |
|---|---|---|---|---|
| 1 | 1 | 98:4b:e1:67:9a:4e | 0x0 | 0 |
| 2 | 2 | 98:4b:e1:11:22:34 | 0x123abc | 24 |
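The interface examples mix a plain `0` and a hex `0x123abc` for `--datapath_id`, and the listing shows both back in hex. A minimal validation sketch for those values, assuming decimal or 0x-prefixed hex input and treating datapath ID 0 as "no OpenFlow switch" as described above; `parse_interface` is a hypothetical helper, not part of nova-bm-manage.

```python
import re

_MAC_RE = re.compile(r"^[0-9a-fA-F]{2}(:[0-9a-fA-F]{2}){5}$")


def parse_interface(mac_address: str, datapath_id: str, port_no: str) -> dict:
    """Validate values for 'interface create' (sketch, not the real tool).

    datapath_id accepts decimal or 0x-prefixed hex; datapath_id=0 and
    port_no=0 mean "no OpenFlow switch", as described above.
    """
    if not _MAC_RE.match(mac_address):
        raise ValueError("MAC must look like xx:xx:xx:xx:xx:xx: %r" % mac_address)
    dpid = int(datapath_id, 0)
    port = int(port_no)
    if dpid < 0 or port < 0:
        raise ValueError("datapath_id and port_no must be non-negative")
    return {"mac_address": mac_address.lower(), "datapath_id": dpid,
            "port_no": port, "openflow": dpid != 0}


node1 = parse_interface("98:4b:e1:67:9a:4e", "0", "0")
node2 = parse_interface("98:4b:e1:11:22:34", "0x123abc", "24")
print(node1["openflow"], node2["openflow"])  # False True
print(hex(node2["datapath_id"]), node2["port_no"])  # 0x123abc 24
```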

Run Instance

- Run an instance using the baremetal instance type. Make sure to use a kernel and image that support the bare-metal hardware (i.e., that contain drivers for it).

euca-run-instances -t tp64.8x8 -k my.key ami-CCC

euca-run-instances -t bm.small --kernel aki-AAA --ramdisk ari-BBB ami-CCC