Difference between revisions of "Auto-scaling SIG/Theory of Auto-Scaling"

Joseph Davis (talk | contribs) (Initial framework) |

(→Considerations and Guidelines) |

||

| (10 intermediate revisions by 2 users not shown) | |||

| Line 4: | Line 4: | ||

<fill in> | <fill in> | ||

| + | <what is the scope of auto-scaling, how does it differ from self-healing, what does it have in common with self-healing> | ||

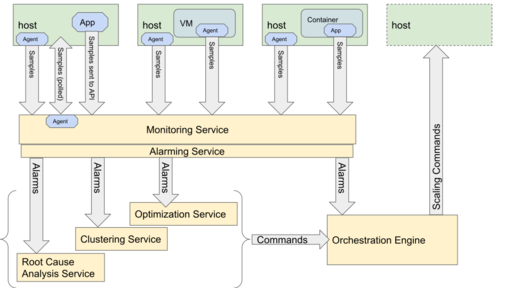

== Conceptual Diagram == | == Conceptual Diagram == | ||

| Line 9: | Line 10: | ||

[[File:OpenStack-Auto-Scaling.svg|Auto-Scaling Architecture Component Diagram]] | [[File:OpenStack-Auto-Scaling.svg|Auto-Scaling Architecture Component Diagram]] | ||

| − | + | == Components of Auto-Scaling == | |

| − | + | OpenStack offers a rich set of services to build, manage, orchestrate, and provision a cloud. This gives administrators some choices in how to best serve their customer's needs. | |

| − | + | * Scaling units - There are a number of components that can be controlled with Auto-Scaling. | |

| − | + | ** Compute Host | |

| − | + | ** VM running on a Compute Host | |

| − | + | ** Container running on a Compute Host | |

| − | + | ** Network Attached Storage | |

| − | + | ** Virtual Network Functions | |

| − | + | * Monitoring Service - either using an agent installed on the Scaling unit, or using a polling method to retrieve metrics | |

| − | + | ** [https://wiki.openstack.org/wiki/Monasca Monasca] | |

| − | + | ** [https://wiki.openstack.org/wiki/Telemetry Ceilometer from the Telemetry project] | |

| − | + | ** Prometheus | |

| − | + | * Alarming Service | |

| − | + | ** Monasca has a built in alarm thresholding service and notification service | |

| − | agent | + | ** [https://wiki.openstack.org/wiki/Telemetry Aodh from the Telemetry project] |

| − | + | * Decision Services - There are a number of services in OpenStack that can interpret metrics and alarms based on configured logic and produce commands to Orchestration Engines | |

| − | + | ** Congress | |

| − | + | ** Heat | |

| − | + | ** Vitrage | |

| − | + | ** Watcher | |

| − | + | * Orchestration Engines | |

| − | + | ** Heat | |

| − | + | ** Senlin is a clustering engine for OpenStack, and can orchestrate auto-scaling | |

| − | + | ** [https://wiki.openstack.org/wiki/Tacker Tacker] | |

| − | |||

| − | |||

| − | |||

| − | Heat - | ||

| − | |||

| − | |||

| − | |||

== Considerations and Guidelines == | == Considerations and Guidelines == | ||

* Monitoring takes resources, plan accordingly | * Monitoring takes resources, plan accordingly | ||

* Avoid scaling too quickly or too often | * Avoid scaling too quickly or too often | ||

| + | ** This can be done by specifying appropriate cooldown periods. | ||

| + | ** Another technique is to average the scaling metric over a longer time period to avoid reacting to sudden fluctuations | ||

* Don't expect instantaneous scaling (see above) | * Don't expect instantaneous scaling (see above) | ||

| + | ** Define thresholds to be predictive of scale needs, not reactive to a bad state | ||

* Be aware of where the logic for scaling is (alarm thresholds, decision services) | * Be aware of where the logic for scaling is (alarm thresholds, decision services) | ||

| + | * Define appropriate scaling limits in terms of minimum and maximum instances. | ||

| + | ** Minimum number of instances will prevent all the instances from being removed. | ||

| + | ** Maximum number of instances safeguards against provisioning too many resources that could adversely affect other workloads. | ||

| + | * Applications must be horizontally scalable in order to auto-scale the underlying instances. | ||

| + | ** Applications must be stateless or be able to drain existing stateful connections so that the underlying instances can be removed during a scale down. | ||

| + | ** Incoming requests must be dynamically load balanced among the instances running the application. | ||

| + | |||

| + | === Anecdotes and Stories === | ||

Latest revision as of 17:23, 28 May 2019


Theory of Auto-Scaling

General Description

<fill in> <what is the scope of auto-scaling, how does it differ from self-healing, what does it have in common with self-healing>

Conceptual Diagram

[Figure: Auto-Scaling Architecture Component Diagram (OpenStack-Auto-Scaling.svg)]

Components of Auto-Scaling

OpenStack offers a rich set of services to build, manage, orchestrate, and provision a cloud, so administrators have several options for how best to serve their customers' needs.

- Scaling units - the components that Auto-Scaling can control
  - Compute Host
  - VM running on a Compute Host
  - Container running on a Compute Host
  - Network Attached Storage
  - Virtual Network Functions
- Monitoring Service - retrieves metrics either through an agent installed on the scaling unit or by polling
  - [Monasca](https://wiki.openstack.org/wiki/Monasca)
  - [Ceilometer from the Telemetry project](https://wiki.openstack.org/wiki/Telemetry)
  - Prometheus
- Alarming Service
  - Monasca has a built-in alarm thresholding service and notification service
  - [Aodh from the Telemetry project](https://wiki.openstack.org/wiki/Telemetry)
- Decision Services - several OpenStack services can interpret metrics and alarms according to configured logic and issue commands to Orchestration Engines
  - Congress
  - Heat
  - Vitrage
  - Watcher
- Orchestration Engines
  - Heat
  - Senlin is a clustering engine for OpenStack, and can orchestrate auto-scaling
  - [Tacker](https://wiki.openstack.org/wiki/Tacker)
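The way these components hand off to one another can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `Alarm`, `evaluate_alarm`, `decide`, and `run_pipeline`, the `cpu_percent` metric name, and the 20/80 thresholds are all hypothetical and do not correspond to the API of Monasca, Aodh, Heat, Senlin, or any other OpenStack service.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Alarm:
    """Hypothetical alarm raised when a metric leaves its allowed range."""
    metric: str
    value: float
    state: str  # "high" or "low"


def evaluate_alarm(metric: str, value: float, low: float, high: float) -> Optional[Alarm]:
    """Alarming stage: turn a raw measurement into an alarm, or None if in range."""
    if value > high:
        return Alarm(metric, value, "high")
    if value < low:
        return Alarm(metric, value, "low")
    return None


def decide(alarm: Optional[Alarm]) -> int:
    """Decision stage: map an alarm to a change in the number of scaling units."""
    if alarm is None:
        return 0
    return 1 if alarm.state == "high" else -1


def run_pipeline(read_metric: Callable[[], float], scale: Callable[[int], None]) -> int:
    """One pass through monitoring -> alarming -> decision -> orchestration."""
    value = read_metric()  # monitoring stage (agent or polling)
    alarm = evaluate_alarm("cpu_percent", value, low=20.0, high=80.0)
    delta = decide(alarm)
    if delta != 0:
        scale(delta)  # orchestration stage receives the command
    return delta


commands = []
run_pipeline(lambda: 93.0, commands.append)  # a hot reading asks for one more unit
```

Keeping the stages as separate functions mirrors the point that the threshold logic and the decision logic may live in different services.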

Considerations and Guidelines

- Monitoring consumes resources; plan accordingly
- Avoid scaling too quickly or too often
  - This can be done by specifying appropriate cooldown periods.
  - Another technique is to average the scaling metric over a longer time period to avoid reacting to sudden fluctuations.
- Don't expect instantaneous scaling (see above)
  - Set thresholds that anticipate scaling needs rather than react to an already bad state.
- Be aware of where the logic for scaling is (alarm thresholds, decision services)
- Define appropriate scaling limits in terms of minimum and maximum instances.
  - A minimum number of instances prevents all the instances from being removed.
  - A maximum number of instances safeguards against provisioning so many resources that other workloads are adversely affected.
- Applications must be horizontally scalable in order to auto-scale the underlying instances.
  - Applications must be stateless or be able to drain existing stateful connections so that the underlying instances can be removed during a scale down.
  - Incoming requests must be dynamically load balanced among the instances running the application.
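Several of the guidelines above (cooldown periods, averaging the metric over a window, and minimum/maximum limits) can be combined in one small policy object. The following is a minimal sketch, assuming a hypothetical `ScalingPolicy` class with illustrative parameter names and thresholds; it is not how any particular OpenStack service implements its policies.

```python
from collections import deque


class ScalingPolicy:
    """Hypothetical policy: windowed average, cooldown, and min/max limits."""

    def __init__(self, min_instances, max_instances, cooldown_seconds,
                 window, scale_up_at, scale_down_at):
        self.min_instances = min_instances
        self.max_instances = max_instances
        self.cooldown_seconds = cooldown_seconds
        self.samples = deque(maxlen=window)  # keeps only the newest samples
        self.scale_up_at = scale_up_at
        self.scale_down_at = scale_down_at
        self.last_scaled_at = None

    def desired_count(self, current, sample, now):
        """Return the instance count to run after observing `sample` at time `now`."""
        self.samples.append(sample)
        # Wait for a full window so a single spike cannot trigger scaling.
        if len(self.samples) < self.samples.maxlen:
            return current
        # Cooldown: ignore the metric for a while after the last scaling action.
        if (self.last_scaled_at is not None
                and now - self.last_scaled_at < self.cooldown_seconds):
            return current
        average = sum(self.samples) / len(self.samples)
        if average > self.scale_up_at:
            target = current + 1
        elif average < self.scale_down_at:
            target = current - 1
        else:
            return current
        # Clamp to the configured limits.
        target = max(self.min_instances, min(self.max_instances, target))
        if target != current:
            self.last_scaled_at = now
        return target


policy = ScalingPolicy(min_instances=1, max_instances=3, cooldown_seconds=300,
                       window=3, scale_up_at=80.0, scale_down_at=20.0)
count = 2
for now, cpu in [(0, 95.0), (60, 90.0), (120, 85.0), (180, 99.0)]:
    count = policy.desired_count(count, cpu, now)
```

In this run the policy scales from 2 to 3 only once the three-sample window is full, and the reading at 180 seconds is ignored because it falls inside the cooldown.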