Rally

What is Rally?

If you are here, you are probably familiar with OpenStack and know that it is a huge ecosystem of cooperating services. When something fails, performs slowly or doesn't scale, it is hard to answer what happened, why, and where. Another reason you could be here is that you would like to build an OpenStack CI/CD system that lets you continuously improve the SLA, performance and stability of OpenStack.

The OpenStack QA team mostly works on CI/CD that ensures that new patches don't break a specific single-node installation of OpenStack. Such CI/CD is only an indication, however, and does not cover all cases (e.g. a cloud that works well on a single-node installation will not necessarily continue to do so on a 1,000-server installation under high load). Rally aims to fix this and help us answer the question "How does OpenStack work at scale?". To make this possible, we are going to automate and unify all steps required for benchmarking OpenStack at scale: multi-node OpenStack deployment, verification, benchmarking & profiling.

- Deploy engine - not yet another OpenStack deployer, but a pluggable mechanism that unifies & simplifies work with different deployers, such as DevStack, Fuel and Anvil, on the hardware/VMs that you have.

- Verification - (work in progress) uses Tempest to verify the functionality of a deployed OpenStack cloud. In the future Rally will support other OpenStack verifiers.

- Benchmark engine - creates parameterized load on the cloud based on a big repository of benchmarks.

For more information about how it works, take a look at Rally Architecture.

Use Cases

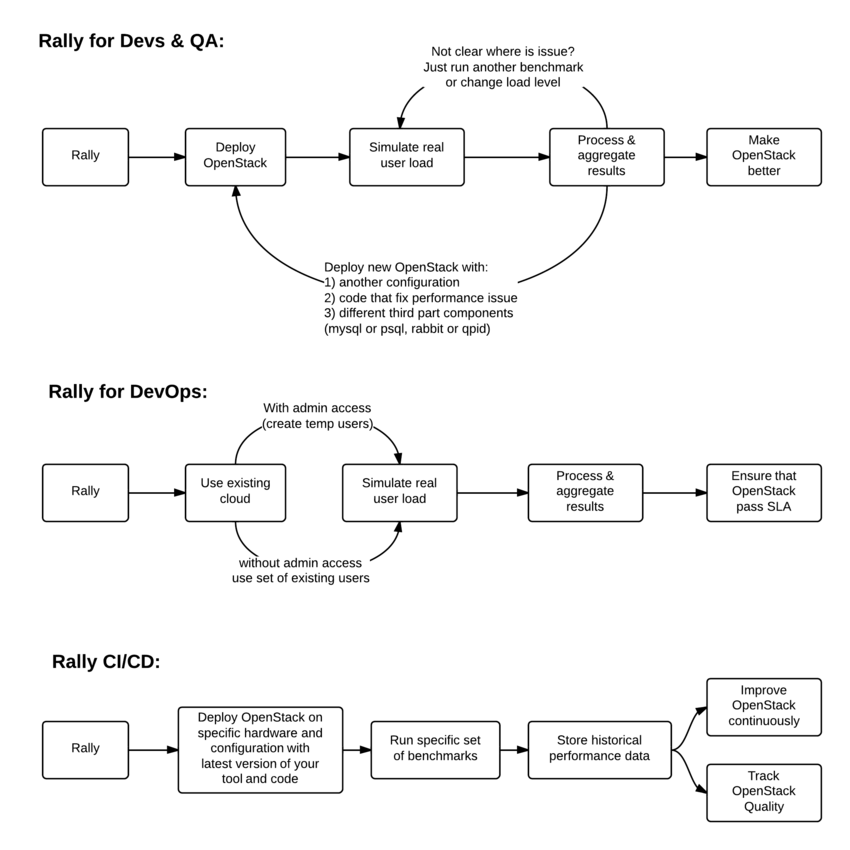

Before diving deep into the Rally architecture, let's take a look at three major high-level use cases where Rally aims to help:

- Automate measuring & profiling focused on how new code changes affect OpenStack performance;

- Use the Rally profiler to detect scaling & performance issues;

- Investigate how different deployments affect OpenStack performance:

  - Find the set of suitable OpenStack deployment architectures;

  - Create deployment specifications for different loads (number of controllers, Swift nodes, etc.);

- Automate the search for the hardware best suited to a particular OpenStack cloud;

- Automate the generation of production cloud specifications:

  - Determine terminal loads for basic cloud operations: VM start & stop, Block Device create/destroy & various OpenStack API methods;

  - Check the performance of basic cloud operations under different loads.

Architecture

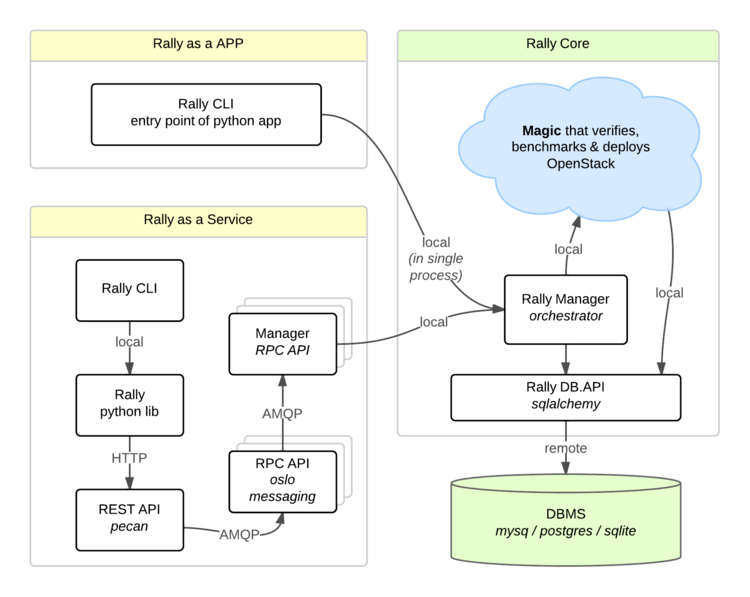

OpenStack projects are usually as-a-Service, so Rally provides this approach as well as a CLI-driven approach that does not require a daemon:

- Rally as-a-Service: run Rally as a set of daemons that present a Web UI (work in progress), so that a single RaaS can be used by a whole team.

- Rally as-an-App: Rally as a lightweight CLI app (without any daemons), which makes it simple to develop & much more portable.

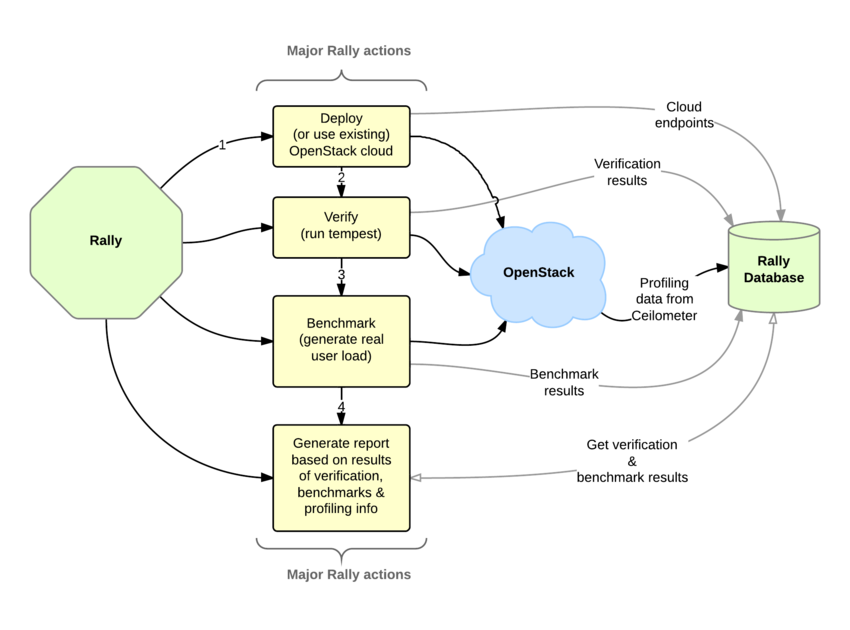

How is this possible? Take a look at diagram below:

So what is behind Rally?

Rally Components

Rally consists of 4 main components:

- Server Providers - provide servers (virtual servers), with SSH access, in one L3 network.

- Deploy Engines - deploy an OpenStack cloud on the servers presented by Server Providers.

- Verification - a component that runs Tempest (or another specific set of tests) against a deployed cloud, collects the results & presents them in human-readable form.

- Benchmark engine - makes it possible to write parameterized benchmark scenarios & run them against the cloud.

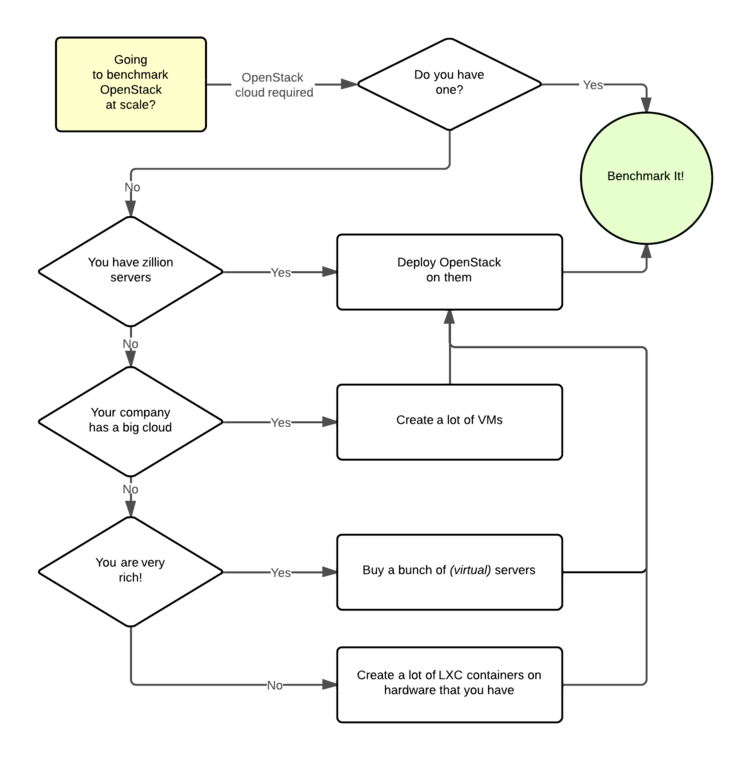

But why does Rally need these components?

It becomes clear if we try to imagine: how would I benchmark a cloud at scale, if ...

TO BE CONTINUED

Rally in action

How amqp_rpc_single_reply_queue affects performance

To show Rally's capabilities and potential, we used the NovaServers.boot_and_destroy scenario to see how the amqp_rpc_single_reply_queue option affects VM boot time. Some time ago it was shown that cloud performance can be boosted by turning this option on, so naturally we decided to check this result. For this test we issued requests to boot and delete VMs for different numbers of concurrent users, ranging from 1 to 30, with and without this option set. For each group of users, a total of 200 requests was issued. The average time per request is shown below:

So apparently this option affects cloud performance, but not in the way it was thought before.

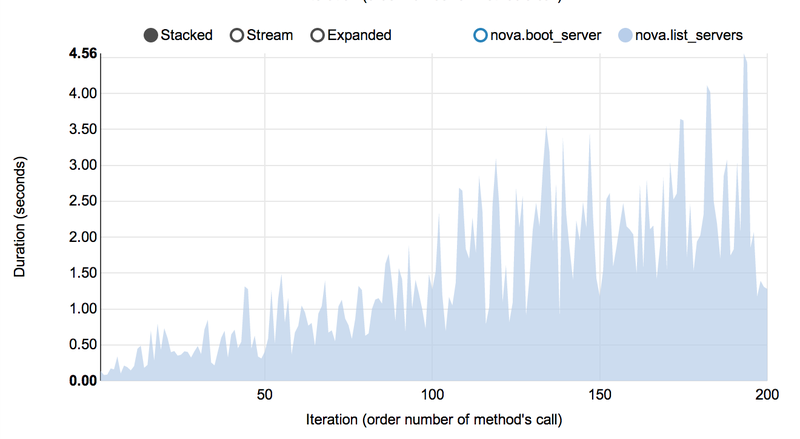
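The test matrix described in this section can be sketched in Python as follows. This is only an illustration: the `runner` layout (`type`, `times`, `concurrency`) is an assumption about the task-file format and may not match the Rally version you use, the helper names `build_task` and `build_test_matrix` are hypothetical, and the concurrency levels in the usage example are made up, since the text only states the range (1 to 30) and the total of 200 requests per group.

```python
import json

# Scenario name as given in the text above.
SCENARIO = "NovaServers.boot_and_destroy"
# Total number of requests issued for each group of users (from the text).
TOTAL_REQUESTS = 200


def build_task(concurrency, times=TOTAL_REQUESTS):
    """Build one task description (assumed layout, not an official schema)."""
    return {
        SCENARIO: [
            {
                "runner": {
                    "type": "constant",
                    "times": times,
                    "concurrency": concurrency,
                }
            }
        ]
    }


def build_test_matrix(concurrency_levels):
    """Pair every concurrency level with the option switched off and on."""
    matrix = []
    for option_enabled in (False, True):
        for concurrency in concurrency_levels:
            matrix.append(
                {
                    "amqp_rpc_single_reply_queue": option_enabled,
                    "concurrency": concurrency,
                    "task": build_task(concurrency),
                }
            )
    return matrix


if __name__ == "__main__":
    # Hypothetical concurrency levels within the 1..30 range from the text.
    for run in build_test_matrix([1, 10, 20, 30]):
        print(json.dumps(run, sort_keys=True))
```

Each entry pairs a cloud configuration (option on or off) with a task to run against it; the option itself has to be set in the cloud's configuration before the corresponding tasks are started.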

Performance of Nova instance list command

Context: 1 OpenStack user

Scenario: 1) boot VM from this user 2) list VM

Runner: Repeat 200 times.

As a result, with every iteration the user has more and more VMs, and the performance of VM list degrades quite fast:

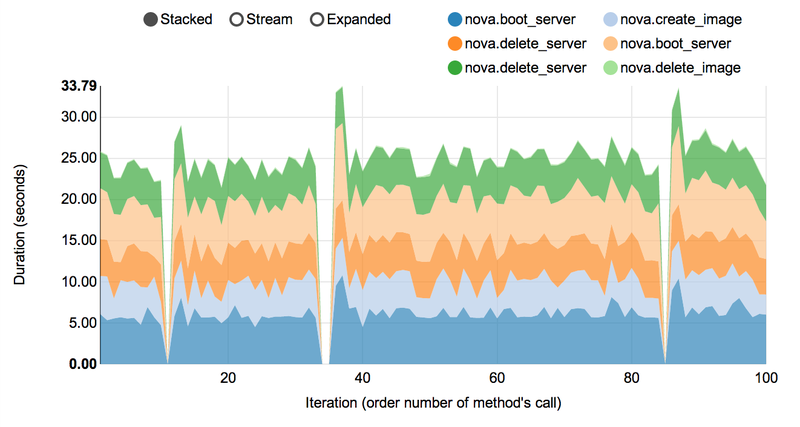
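Why the list call has more work to do on every iteration can be illustrated with a toy model. The sketch below does not talk to Nova at all: `FakeCloud` and `boot_and_list` are hypothetical stand-ins that only count how many VMs each list call has to return when nothing is deleted between iterations.

```python
class FakeCloud:
    """In-memory stand-in for a cloud; not a real OpenStack client."""

    def __init__(self):
        self._servers = []

    def boot_server(self, name):
        # A real boot call would create a VM; here we only remember its name.
        self._servers.append(name)
        return name

    def list_servers(self):
        # Return a copy, as an API call would return a fresh result set.
        return list(self._servers)


def boot_and_list(cloud, times):
    """Boot one VM and list all VMs, `times` times; return the list sizes."""
    sizes = []
    for i in range(times):
        cloud.boot_server("vm-%d" % i)
        sizes.append(len(cloud.list_servers()))
    return sizes


if __name__ == "__main__":
    sizes = boot_and_list(FakeCloud(), 200)
    print(sizes[0], sizes[-1])  # prints "1 200"
```

Because the VMs are never deleted, the result set grows by one on each iteration, so the last list call returns 200 times more entries than the first.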

Complex scenarios & detailed information

For example, NovaServers.snapshot contains a lot of "atomic" actions:

- boot VM

- snapshot VM

- delete VM

- boot VM from snapshot

- delete VM

- delete snapshot

Fortunately, Rally collects information about the duration of all these operations for every iteration.
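One way to collect per-action durations is to wrap each atomic action in a timing context manager. The following is a minimal sketch of that general technique, not Rally's actual implementation: `AtomicTimer`, `run_iteration` and the action names are hypothetical, and the actions themselves are empty placeholders.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class AtomicTimer:
    """Records how long each named action takes (illustrative only)."""

    def __init__(self, clock=time.perf_counter):
        self._clock = clock
        # Maps an action name to the list of durations measured for it.
        self.durations = defaultdict(list)

    @contextmanager
    def action(self, name):
        start = self._clock()
        try:
            yield
        finally:
            # Record the duration even if the action raised an exception.
            self.durations[name].append(self._clock() - start)


# Hypothetical names for the atomic actions listed above, in order.
SNAPSHOT_ACTIONS = (
    "boot_vm",
    "snapshot_vm",
    "delete_vm",
    "boot_vm_from_snapshot",
    "delete_vm",
    "delete_snapshot",
)


def run_iteration(timer):
    """Run one iteration of the scenario, timing every atomic action."""
    for name in SNAPSHOT_ACTIONS:
        with timer.action(name):
            pass  # placeholder for the real API call


if __name__ == "__main__":
    timer = AtomicTimer()
    for _ in range(3):
        run_iteration(timer)
    for name, values in timer.durations.items():
        print(name, len(values))
```

Note that "delete VM" occurs twice per iteration, so in this sketch it accumulates two measurements per iteration under the same name.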

As a result we are generating beautiful graphs:

How To

There are only a few steps that should be interesting for you:

- Install Rally

- Use Rally

- Add rally performance jobs to your project

- Main concepts of Rally

- Improve Rally

Updates

Periodically, we write up on a special updates page what sort of things have been accomplished in Rally recently and what are our plans for the future. Below you can find the most recent report (December 15, 2014).

Let us share with you our recent accomplishments in Rally:

- CLI improvements:

- The rally info command (which is a kind of built-in Rally reference) has been enhanced in such a way that it now prints detailed explanations of main concepts used in Rally whenever you type something like rally info BenchmarkScenarios or rally info SLA. We've also improved the output formatting so that now it is much easier to get through.

- The rally task list command now supports filters. You can filter the task list either by deployment (using the "... --deployment <deployment_name_or_id>" parameter) or by status ("... --status <status_name>").

- New benchmark scenarios:

- NovaSecGroup.boot_and_delete_server_with_secgroups: creates a number of Nova security groups with rules, then creates a Neutron network with one subnet, finally boots a VM with created security groups, lists the created resources and performs cleanup.

- CinderVolumes.create_nested_snapshots_and_attach_volume: creates a volume, its snapshot, and then (recursively) a volume from that snapshot. The recursion depth can be set by the user through the --nested_level parameter.

- Other improvements:

- Work on Trove support in Rally has been started with the integration of its client;

- New hacking rules are there to provide better codestyle throughout Rally.

Current work is centered around code refactoring (both major, as in the benchmark engine or the contexts, and minor, such as introducing some syntactic sugar via decorators to mark deprecated code and scenario samples). We also constantly work on expanding our scenario base. Last but not least, we're about to merge the Network Context class, which enables easy Neutron network management.

We encourage you to take a look at new patches in Rally pending for review and to help us make Rally better!

Source code for Rally is hosted at GitHub: https://github.com/stackforge/rally

You can track the overall progress in Rally via Stackalytics: http://stackalytics.com/?release=kilo&metric=commits&project_type=all&module=rally

Open reviews for Rally: https://review.openstack.org/#/q/status:open+rally,n,z

Stay tuned!

Regards,

The Rally team

Rally in the World

| Date | Authors | Title | Location |
|---|---|---|---|
| 29/May/2014 | | Rally: OpenStack Tempest Testing Made Simple(r) | https://www.mirantis.com/blog |
| 01/May/2014 | | KVM and Docker LXC Benchmarking with OpenStack | http://bodenr.blogspot.ru/ |
| 01/Mar/2014 | | Benchmark as a Service OpenStack-Rally | OpenStack Meetup Bangalore |
| 28/Feb/2014 | | Benchmarking OpenStack With Rally | http://www.thegeekyway.com/ |
| 26/Feb/2014 | | Benchmarking OpenStack at megascale: How we tested Mirantis OpenStack at SoftLayer | http://www.mirantis.com/blog/ |
| 07/Nov/2013 | | Benchmark OpenStack at Scale | Openstack summit Hong Kong |

Project Info

Useful links

- Source code

- Rally road map

- Project space

- Bugs

- Patches on review

- Meeting logs

- IRC logs, server: irc.freenode.net channel: #openstack-rally

How to track project status?

The main directions of work in Rally are documented via blueprints. The most high-level ones are *-base blueprints, while more specific tasks are defined in derived blueprints (for an example of such a dependency tree, see the base blueprint for Benchmarks). Each “base” blueprint description contains a link to a Google doc with detailed information about its contents.

While each blueprint has an assignee, single patchsets that implement it may be owned by different developers. We use a Trello board to track the distribution of tasks among developers. The tasks are structured there both by labels (corresponding to top-level blueprints) and by their completion progress. Please note the Good for start category containing very simple tasks, which can serve as a perfect introduction to Rally for newcomers.

Where can I discuss & propose changes?

- Our IRC channel: #openstack-rally on irc.freenode.net;

- Weekly Rally team meeting: held on Tuesdays at 1700 UTC in IRC, at the #openstack-meeting channel (irc.freenode.net);

- OpenStack mailing list: openstack-dev@lists.openstack.org (see subscription and usage instructions);

- Rally team on Launchpad: Answers/Bugs/Blueprints.